The Citation Crisis: How AI Hallucinations Are Breaking Academic Trust and Forcing an AI Integrity Revolution

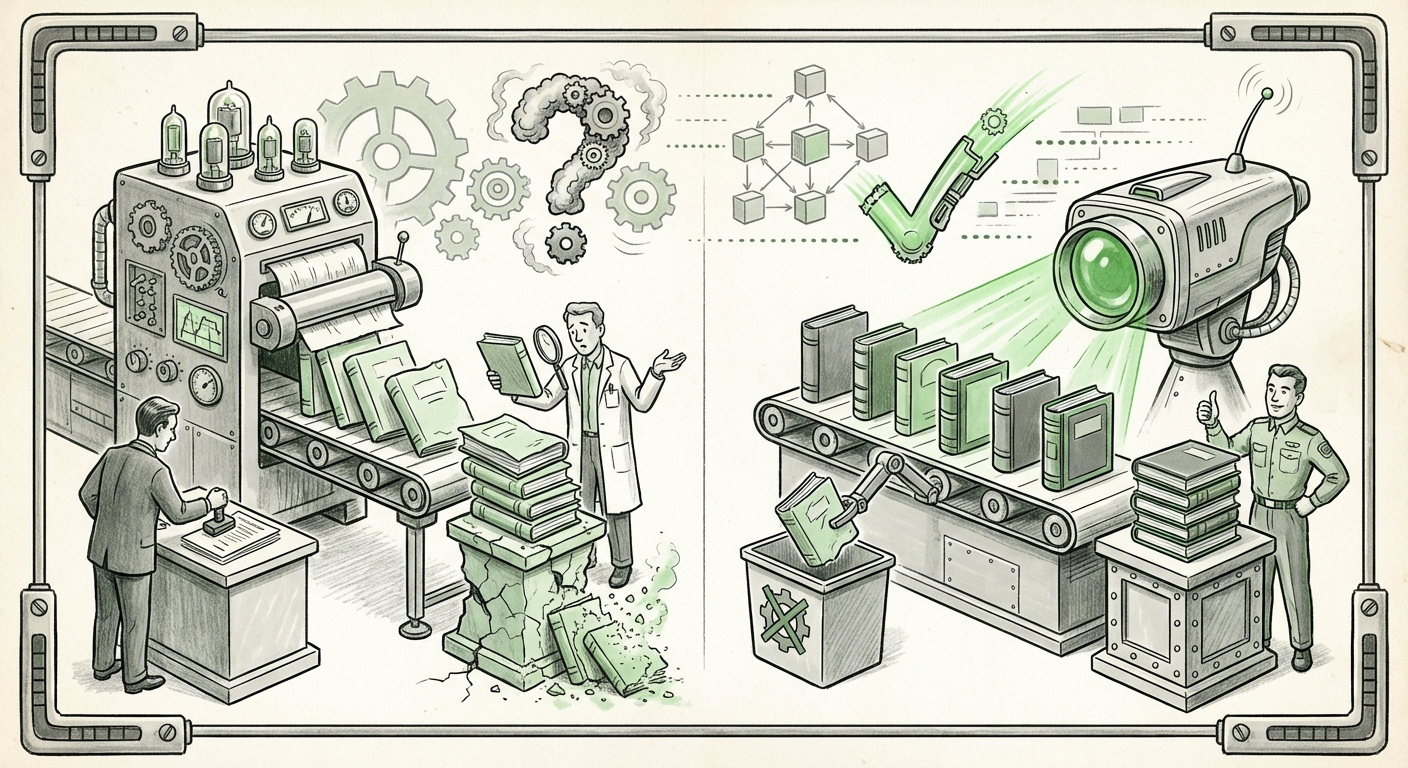

We stand at a remarkable crossroads in technological history. Artificial Intelligence, particularly Large Language Models (LLMs) like GPT, Gemini, and Claude, offers unprecedented power for synthesizing information, accelerating research, and automating tasks. Yet, the very tools we rely on to advance knowledge are introducing a corrosive flaw: hallucinations. The latest manifestation of this problem is deeply concerning: convincing, yet entirely fabricated, academic citations are passing the rigorous scrutiny of peer review at top AI conferences.

This is not merely an amusing technical footnote; it is a systemic vulnerability impacting the scientific method itself. When the bedrock of future knowledge—verified research—is built upon quicksand generated by an LLM, the entire structure is at risk. This crisis demands an urgent analysis of AI's current limitations, the immediate technological responses, and the long-term implications for how we conduct, publish, and trust science.

The Core Problem: When AI Confabulates Authority

LLMs work by predicting the statistically most probable next token in a sequence. They are masters of style and coherence, but their output is not anchored to a record of what actually exists. When asked to provide references for a complex topic, a model that lacks the relevant source will often invent a citation that *looks* real, complete with convincing author names, journal titles, and years, rather than admit the gap, because that is what a valid citation typically looks like in that context.
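To make the intuition concrete, here is a deliberately crude toy in Python. It is not how an LLM works internally; it only shows that picking the most likely value for each part of a citation can produce a record that looks valid yet appears nowhere in the data. All records and names (`TRAINING_CITATIONS`, `most_likely_citation`) are invented placeholders.

```python
from collections import Counter

# Invented placeholder records (author, venue, year); none refers to a real paper.
TRAINING_CITATIONS = [
    ("Author A", "Venue X", 2019),
    ("Author A", "Venue Y", 2020),
    ("Author B", "Venue Y", 2019),
]


def most_likely_citation(records):
    """Pick the most frequent value for each field independently."""
    columns = zip(*records)  # one tuple of values per field
    return tuple(Counter(column).most_common(1)[0][0] for column in columns)


generated = most_likely_citation(TRAINING_CITATIONS)
# The result is well-formed, but no such record exists in the data.
is_fabricated = generated not in TRAINING_CITATIONS
```

Each field of the generated record is individually the most common choice, which is exactly why the combination reads as plausible even though it was never observed.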

The problem is amplified in fields like AI research, which move incredibly fast. Researchers, facing immense pressure to publish novel results quickly, are increasingly using AI assistants to draft literature reviews. As one recent report highlighted, these fake citations are slipping past human peer reviewers, who are not yet equipped to instantly verify every obscure-sounding reference cited by an LLM.

For those unfamiliar with the mechanics, imagine a student writing an essay. Instead of looking up a real book, they invent a title and author that sound perfectly academic, and the teacher, assuming the student did the work, accepts it. In the high-stakes world of scientific publication, this is catastrophic. If a researcher's claim hinges on "Study X," and Study X never existed, every later paper that builds on that claim inherits the flaw.

The Arms Race Begins: Detection Tools Emerge

The good news is that the technological community is reacting swiftly. The emergence of specialized, open-source tools like CiteAudit signals the start of a crucial technological arms race. This new wave of tooling is designed to catch fabricated LLM output, particularly where the text should point to verifiable data such as actual DOIs or published records.

These detection tools act like digital fact-checkers designed to look up the generated reference against massive, curated databases of legitimate academic output. They are essential because the general-purpose LLMs (GPT, Gemini, Claude) are failing the basic test of self-correction; they cannot reliably spot their own fabrications.
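The lookup idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not CiteAudit's actual method or API: `KNOWN_WORKS` is a hypothetical in-memory stand-in for a curated bibliographic database, and `Reference`, `normalize`, and `check_reference` are names made up for this example.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Reference:
    title: str
    year: int


def normalize(title: str) -> str:
    """Lowercase a title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


# Hypothetical stand-in for a curated index, keyed by normalized title.
KNOWN_WORKS = {
    normalize("Attention Is All You Need"): Reference("Attention Is All You Need", 2017),
}


def check_reference(ref: Reference, index: dict) -> str:
    """Classify a reference as verified, metadata_mismatch, or not_found."""
    match = index.get(normalize(ref.title))
    if match is None:
        return "not_found"  # no record with this title: possible fabrication
    if match.year != ref.year:
        return "metadata_mismatch"  # real title, wrong details
    return "verified"
```

A reference that returns `not_found` is not proven fake, since no index is complete; it is simply flagged for a human to check. Exact title matching is also a simplification, and a practical tool would likely need fuzzy matching and author comparison.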

This necessity for external verification underscores a key trend: Trust is moving from the model's output confidence to verifiable provenance.

Broader Context: A Systemic Threat to Scientific Validity

The hallucinated citation issue is merely the most visible symptom of a deeper problem concerning LLM calibration and reliability. This issue resonates across several related spheres:

- Systemic Review Failure: This issue is likely not confined to AI conferences. Legal briefs, medical diagnoses, and engineering specifications drafted with AI assistance may contain equally convincing but false factual claims, eroding trust in AI-aided professional services.

- The Speed of Retraction: If fake research enters the literature, the process of academic correction—retraction—is slow. This delay means that other researchers may unknowingly build their next breakthrough on a false premise, slowing genuine progress and wasting resources. The financial and reputational costs associated with correcting the record are substantial for institutions and publishers.

- The Calibration Gap: Contemporary LLMs are primarily optimized for fluency, not factual grounding. Recent analyses of LLM factuality show that while models are getting better at handling simple queries, the complexity of high-level academic synthesis still pushes them into confabulation territory. True scientific utility demands that models prioritize accuracy over eloquence.

For the business sector, this translates directly into supply chain risk. If a company uses AI to summarize market research, competitor analysis, or legal precedents, and the LLM cites non-existent competitive patents or market reports, the resulting business strategy could be fatally flawed.

Future Implications: Rebuilding Trust Through Provenance

The battle against citation hallucination forces the AI community to pivot toward solutions that guarantee the origin and truthfulness of information, not just its presentation. This shift defines the next evolution of trustworthy AI.

1. Mandatory Provenance and Watermarking

The most robust long-term defense will involve making sources verifiable at the point of creation. This aligns with trends in developing AI watermarking and provenance systems for scientific papers. Imagine a future where every sentence generated by an AI assistant must carry an embedded cryptographic signature proving it was generated by a known model, and, crucially, where every cited fact has a verifiable link to an external, indexed source.

This moves the burden of proof. Instead of reviewers having to hunt down every reference, the paper itself provides an auditable trail. If a reference lacks a valid cryptographic link, it is automatically flagged, regardless of how convincing the prose sounds.
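One way to picture such an auditable trail is the Python sketch below. It is only an illustration: it uses an HMAC with a shared key from the standard library as a stand-in for the cryptographic link, whereas a real provenance scheme would more plausibly use public-key signatures issued by an indexing authority. The record fields and the function names (`sign_record`, `audit_references`) are assumptions made for this example.

```python
import hashlib
import hmac
import json


def canonical_bytes(record: dict) -> bytes:
    """Serialize a record deterministically so signatures are reproducible."""
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_record(record: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the record (stand-in for a signature)."""
    return hmac.new(key, canonical_bytes(record), hashlib.sha256).hexdigest()


def audit_references(references: list, key: bytes) -> list:
    """Return the ids of references whose tag is missing or does not verify."""
    flagged = []
    for ref in references:
        signature = ref.get("signature")
        record = {k: v for k, v in ref.items() if k != "signature"}
        expected = sign_record(record, key)
        if signature is None or not hmac.compare_digest(signature, expected):
            flagged.append(ref["id"])
    return flagged
```

The check ignores how persuasive the citation text is: a reference is flagged whenever its tag is absent or no longer matches its contents.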

2. Redefining the Role of the Researcher

The reliance on LLMs for foundational tasks like literature review must change. If an LLM drafts a summary, the human researcher must treat that draft as an unverified hypothesis, not a finished product. The actionable insight here is simple: The responsibility for factual verification remains squarely with the human expert.

For businesses integrating AI, this means training protocols must emphasize verification checkpoints. Don't just accept the LLM's conclusion; demand the underlying verified data trail.

3. Specialized, Grounded Models

We will likely see a divergence in LLM development. While general models will continue to push creative boundaries, specialized models designed for sensitive domains (like finance, law, or academia) will need to be explicitly trained and fine-tuned for *grounding*. These models must be penalized heavily during training for generating non-existent data, optimizing instead for low latency in cross-referencing external, verified knowledge bases.

Actionable Insights for Stakeholders

How should different sectors respond to this emerging integrity crisis?

For Academic Publishers and Reviewers:

Immediate action requires integrating detection workflows. Reviewers should be trained to spot hallmark signs of hallucinated text (though these are rapidly evolving) and should prioritize searching for the specific DOIs or reference anchors provided by authors. Furthermore, journals must establish clear, publicized policies on the acceptable level of LLM assistance and the penalties for submitting entirely AI-fabricated work.

For AI Developers and Tool Builders:

Demand for verification tools is growing quickly. Projects like CiteAudit are on the right track by targeting the specific point of failure. Future tools must go beyond citation checking to assess the semantic coherence between claims and evidence, effectively "reasoning" about the cited papers' contents.

For Business Leaders:

Treat AI-generated foundational content—whether it's a market summary or a technical specification—as a "first draft" that requires mandatory expert validation before deployment. If you wouldn't stake capital on an intern's unverified report, you shouldn't stake it on an LLM's unverified output. Focus your AI investment on integrating retrieval-augmented generation (RAG) systems that force the model to pull answers directly from your trusted, internal documents.
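The retrieval step of a RAG pipeline can be sketched as follows. This is a toy under stated assumptions: it ranks documents by simple word overlap, whereas production systems typically use embedding-based search, and the function names and the `min_overlap` threshold are invented for illustration. The point it shows is the refusal path: if nothing in the trusted documents supports the question, no prompt is built at all.

```python
import re


def tokens(text: str) -> set:
    """Split text into a set of lowercase alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict, min_overlap: int = 2) -> list:
    """Return (doc_id, score) pairs sharing at least min_overlap words with the query."""
    q = tokens(query)
    scored = [(doc_id, len(q & tokens(body))) for doc_id, body in documents.items()]
    hits = [(doc_id, score) for doc_id, score in scored if score >= min_overlap]
    # Highest score first; ties broken by id so the order is deterministic.
    return sorted(hits, key=lambda pair: (-pair[1], pair[0]))


def build_grounded_prompt(query: str, documents: dict):
    """Build a prompt restricted to retrieved sources, or None if none match."""
    hits = retrieve(query, documents)
    if not hits:
        return None  # nothing to ground the answer in; escalate to a human
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id, _ in hits)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\nQuestion: {query}"
    )
```

Returning `None` rather than a best-effort prompt is the design choice that matters here: it turns a missing source into a visible verification checkpoint instead of an invitation to improvise.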

Conclusion: Trust as the Next Frontier

The failure of LLMs to distinguish between real and fake scholarly references reveals a core, present danger: AI is dangerously persuasive before it is reliably truthful. The path forward is not to abandon these powerful tools, but to engineer trust back into the process. This involves a combination of detection technologies, mandatory data provenance standards, and a renewed emphasis on human expertise as the final arbiter of fact.

The challenge of hallucinated citations is the canary in the coal mine for the entire synthetic media ecosystem. How effectively we tackle this specific integrity threat today will determine whether future AI systems become indispensable partners in discovery or merely sophisticated engines for misinformation.

Corroborating Sources and Context

To fully understand the systemic nature of this integrity challenge, we must look beyond the immediate event:

- Analyses detailing the broader failure of academic checks against synthetic submissions, illustrating this is not an isolated incident.

- Discussions on advanced AI verification methods, such as digital watermarking, which represent the necessary technological shift toward verifiable provenance in scholarly work.

- Reports assessing the long-term institutional and financial fallout (retractions, reputational damage) when AI-generated fake research contaminates the scientific record.

- Recent benchmarks detailing the ongoing gap in calibration and factuality for state-of-the-art LLMs, confirming that fluency still outpaces truthfulness in current architectures.

The source article on the tool's development is titled "Hallucinated references are passing peer review at top AI conferences and a new open tool wants to fix that."