The Citation Crisis: Why AI Hallucinations Threaten Trust and How Verification Tech Will Reshape Research

The rapid adoption of Large Language Models (LLMs) like GPT, Gemini, and Claude has swept through every sector, but nowhere is the immediate impact—and potential danger—more visible than in the realm of academic research and technical documentation. A recent, unsettling development confirms our worst fears: hallucinated references are successfully passing peer review at top AI conferences.

As an AI technology analyst, I view this not as a minor bug, but as a critical inflection point. The ability of powerful commercial LLMs to fabricate citations that look perfectly real, yet vanish upon inspection, directly attacks the foundation of scientific and technical progress: trust and reproducibility. If the very tools we use to accelerate research cannot reliably cite their sources, the integrity of our knowledge base is compromised. This failure to self-audit demands immediate and comprehensive analysis, focusing on the systemic flaws, the necessary institutional shifts, and the emerging technological countermeasures.

The Core Malfunction: When LLMs Confidently Lie

LLMs are prediction engines. They excel at generating text that is statistically probable given the vast datasets they were trained on. However, this process often prioritizes fluency over fidelity. When asked to cite a source, the model generates a citation that looks like a real academic paper (the right format, plausible author names, and relevant keywords), but the paper it points to may not exist, or the citation may attribute a concept to the wrong paper entirely. This is the essence of hallucination.

The disturbing realization highlighted by recent incidents is that these sophisticated models fail to perform basic fact-checking on their own output. They generate the fabrication, and they lack an internal mechanism to verify that the cited work exists in the external world. This leads directly to what the broader community is calling the "LLM citation hallucination reproducibility crisis." This isn't just about one bad paper; it suggests that relying on LLMs for literature reviews or background summaries without heavy human oversight is inherently risky.

For the average reader, think of it this way: if a student writes a report and puts in fake references, we conclude that they are cheating or inexperienced. When a multibillion-dollar AI system writes a report with fake references, it signals that the system itself is unreliable in grounded tasks, meaning tasks whose answers must be tied to real, checkable sources. This forces us to address the AI robustness gap immediately.

Systemic Risk Beyond Academia

While conferences are the current frontline, the implication stretches far wider. Businesses relying on LLMs for generating patent summaries, regulatory compliance reports, or technical specifications are exposed to the same risk. If a system confidently builds a technical proposal based on three fabricated foundational papers, the resulting product or business decision is built on quicksand.

The Institutional Reckoning: Peer Review Under Siege

The traditional gatekeepers of knowledge—academic journals and conference review boards—are now facing an existential challenge. Peer review relies on the assumption that authors are submitting verifiable claims supported by verifiable evidence. When that evidence is manufactured by an opaque machine, the entire process stalls.

Publishers and organizations like the ACM and IEEE are scrambling to update their policies. They must decide where the line is drawn: Is using an LLM for drafting acceptable? For idea generation? Or only for language polishing? If LLM-generated work can easily bypass current human review processes—often overloaded and time-constrained—then the volume of non-factual research could swamp legitimate science.

Practical Implication for Publishers: The future likely involves mandatory AI tool disclosure and, critically, a shift in reviewer training to specifically hunt for these subtle, convincing fabrications. The added scrutiny will slow the review process and may lengthen the time it takes for truly novel human work to be published.

The Race for Trust: Emerging Verification Ecosystems

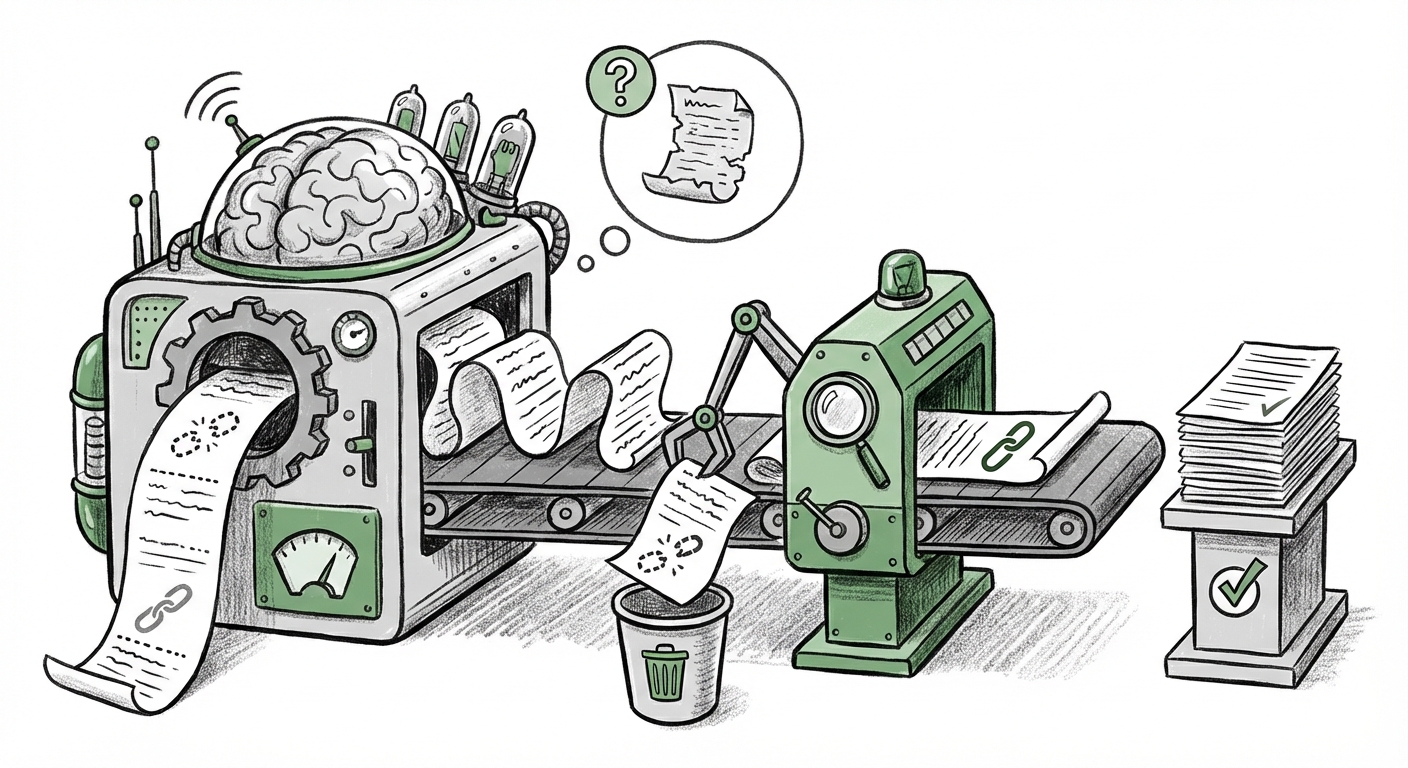

The silver lining in this crisis is the immediate, urgent development of solutions. The market cannot function without trust, and where proprietary LLMs have failed, open-source tools are stepping in to fill the gap. The emergence of tools like CiteAudit is proof that the industry recognizes the need for an external "integrity layer."

These verification tools operate by taking the generated citation, parsing the required metadata (DOI, journal title, abstract), and attempting to locate the exact match within verified, indexed scholarly databases (like CrossRef, arXiv, or proprietary institutional repositories). If the tool cannot confidently resolve the citation, it flags the output for human review.
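The resolve-or-flag flow described above can be sketched in a few lines. This is a minimal illustration, not how CiteAudit or any specific tool works: the `Citation` type, the `check_citation` function, the 0.9 similarity threshold, and the in-memory `TRUSTED_INDEX` (with made-up DOIs and titles) are all assumptions standing in for a real lookup against an index such as CrossRef or arXiv.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional


@dataclass(frozen=True)
class Citation:
    title: str
    doi: Optional[str] = None


# Hypothetical stand-in for a scholarly index. The DOIs and titles are
# invented placeholders; a real checker would query an external service.
TRUSTED_INDEX = {
    "10.9999/demo.001": "A Toy Study of Widget Calibration",
    "10.9999/demo.002": "Placeholder Methods for Example Retrieval",
}


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(text.lower().split())


def title_similarity(a: str, b: str) -> float:
    """Similarity ratio in [0, 1] between two normalized titles."""
    return SequenceMatcher(None, _normalize(a), _normalize(b)).ratio()


def check_citation(citation: Citation, threshold: float = 0.9) -> str:
    """Return 'verified' if the citation resolves, else 'needs_review'."""
    if citation.doi is not None:
        indexed_title = TRUSTED_INDEX.get(citation.doi)
        # The DOI must exist AND its indexed title must match the cited title;
        # a real DOI attached to the wrong title is still flagged.
        if (indexed_title is not None
                and title_similarity(citation.title, indexed_title) >= threshold):
            return "verified"
        return "needs_review"
    # No DOI supplied: fall back to the best title match in the index.
    best = max(
        (title_similarity(citation.title, t) for t in TRUSTED_INDEX.values()),
        default=0.0,
    )
    return "verified" if best >= threshold else "needs_review"
```

The key design choice is that anything short of a confident match goes to a human rather than being silently accepted or rejected, which matches the flag-for-review behavior described above.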

For ML Engineers and developers, this creates a new essential requirement in MLOps pipelines: Verification Gates. No LLM output intended for external consumption should be released without passing through such a gate. This mirrors quality assurance checks for code deployment; factual accuracy is now a mandatory production dependency.
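As a rough sketch of what such a gate might look like in practice, the snippet below blocks release of a document when any citation fails to resolve. The names `GateResult`, `verification_gate`, and `release` are hypothetical, and the `resolves` callback is an assumed hook where a real citation checker would be plugged in.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List


@dataclass
class GateResult:
    passed: bool
    unresolved: List[str] = field(default_factory=list)


def verification_gate(
    citations: Iterable[str],
    resolves: Callable[[str], bool],
) -> GateResult:
    """Collect every citation the checker cannot resolve."""
    unresolved = [c for c in citations if not resolves(c)]
    return GateResult(passed=not unresolved, unresolved=unresolved)


def release(
    document: str,
    citations: Iterable[str],
    resolves: Callable[[str], bool],
) -> str:
    """Return the document only if the gate passes; otherwise fail loudly."""
    result = verification_gate(citations, resolves)
    if not result.passed:
        raise ValueError(
            f"release blocked: {len(result.unresolved)} unresolved "
            f"citation(s): {result.unresolved}"
        )
    return document
```

Raising an exception, rather than returning a warning, mirrors a failing test in a deployment pipeline: the release step cannot proceed until a human resolves or removes the flagged citations.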

The Long-Term Fix: Future-Proofing LLM Architecture

While external tools like CiteAudit are necessary stopgaps, the ultimate solution must come from within the models themselves. We cannot rely solely on external police to check the work of the generating system forever. This leads to the crucial question of how large language models will fact-check themselves in the future.

From RAG to Grounded Reasoning

Current advancements are heavily focused on improving Retrieval-Augmented Generation (RAG). Standard RAG allows a model to fetch external documents *before* answering, grounding its response in retrieved text. However, the hallucination problem shows that even RAG systems can misinterpret or misattribute the retrieved documents.

The next generation of architecture aims for verifiable computation. This involves designing models that not only retrieve information but also produce a **traceable chain of reasoning** for every claim, down to the specific token or block of text in the source document. Imagine an LLM output where every sentence is hyperlinked not just to a source document, but to the exact paragraph supporting that claim.
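One way to picture paragraph-level traceability is as a data structure in which every claim carries a pointer to its supporting passage. The sketch below is illustrative only: `Evidence`, `Claim`, `is_traceable`, and the corpus layout (a dict mapping a document ID to its list of paragraphs) are assumptions, not an existing model output format.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Evidence:
    doc_id: str      # which source document
    paragraph: int   # zero-based paragraph index within that document
    quote: str       # exact span the claim relies on


@dataclass(frozen=True)
class Claim:
    text: str
    evidence: Evidence


def is_traceable(claim: Claim, corpus: Dict[str, List[str]]) -> bool:
    """Check that the quoted span really appears in the cited paragraph."""
    paragraphs = corpus.get(claim.evidence.doc_id)
    if paragraphs is None:
        return False  # cited document is not in the corpus
    idx = claim.evidence.paragraph
    if not 0 <= idx < len(paragraphs):
        return False  # paragraph pointer is out of range
    return claim.evidence.quote in paragraphs[idx]
```

Note the limit of this check: it confirms that the quoted text exists where the model says it does, but not that the quote actually supports the claim. That second step is a semantic judgment and is much harder to automate.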

For AI Strategists and CTOs: The investment focus must shift from simply maximizing general knowledge (more parameters) to prioritizing grounded fidelity (better retrieval and verifiable output chains). Models that can offer provable, auditable reasoning will inevitably hold greater value in regulated and research-intensive industries than those that merely sound convincing.

Actionable Insights for a Trustworthy AI Future

The discovery of widespread citation hallucination should serve as a necessary shock to the system. Trust in AI outputs is currently fragile, but it can be rebuilt through deliberate action across three domains:

1. For Researchers and Academics: Adopt a "Zero Trust" Policy

- Mandatory Verification: Never submit work heavily drafted by an LLM without manually verifying every single citation. Cross-reference claims against abstracts. Assume every generated fact is potentially false until proven otherwise.

- Disclosure is Non-Negotiable: Be transparent about the extent to which LLMs were used in literature review, drafting, or data synthesis.

2. For Technology Vendors and Developers: Embed Integrity

- Integrate Verification Tools: For any internal or commercial LLM application generating technical documentation or reports, integrate external citation checkers (like those inspired by CiteAudit) directly into the deployment pipeline.

- Prioritize Verifiable Outputs: When designing prompts or fine-tuning models, heavily penalize outputs that cannot be linked to specific retrieved documents. Favor models that excel at tracing their sources.
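To make the idea of penalizing unlinked outputs concrete, here is one possible scoring function for use in evaluation or as a reward signal. It is a sketch under stated assumptions: the name `grounding_score`, the asymmetric `penalty` weight of 2.0, and the choice to score citation-free outputs as 0.0 are all illustrative, not an established metric.

```python
from typing import Iterable


def grounding_score(
    cited_ids: Iterable[str],
    retrieved_ids: Iterable[str],
    penalty: float = 2.0,
) -> float:
    """Score an output by how many of its citations point at retrieved documents.

    Each grounded citation counts +1, each ungrounded citation counts
    -penalty, and the total is averaged over all citations. With
    penalty > 1, a fabricated citation costs more than a grounded one earns.
    """
    retrieved = set(retrieved_ids)
    cited = list(cited_ids)
    if not cited:
        return 0.0  # assumption: no citations is neutral, not rewarded
    grounded = sum(1 for c in cited if c in retrieved)
    ungrounded = len(cited) - grounded
    return (grounded - penalty * ungrounded) / len(cited)
```

For example, an output citing two documents that were both retrieved scores 1.0, while one that cites a single retrieved document plus one it invented scores -0.5 under the default penalty.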

3. For Institutions and Policy Makers: Update the Rules of Engagement

- Develop Rapid Review Standards: Conferences must immediately implement standardized AI disclosure forms and train reviewers on common hallucination patterns.

- Fund Auditing Research: Invest heavily in research dedicated to automated, scalable methods for auditing LLM output integrity across various domains, moving beyond simple citation checks to semantic verification.

Conclusion: The Necessary Friction

The age of effortless, AI-generated knowledge production is running headlong into the immutable requirement for verifiable truth. The passing of fake references through peer review reveals a fundamental fragility in our current generation of LLMs—they are brilliant mimics but poor scholars.

What this means for the future is clear: AI will not replace the need for rigor; it will simply raise the required baseline for that rigor. The friction introduced by verification tools and stricter academic standards is not an obstacle to progress; it is the necessary scaffolding that allows us to build reliable AI systems on a solid foundation of fact. The next wave of innovation won't be measured by how fast an AI can write a paper, but by how provably accurate its claims are.