The AI Blitz: When 3,000 Targets Are Hit with Unaccountable Algorithms—What This Means for Future Warfare

The technological landscape of warfare is not changing gradually; it is experiencing seismic shifts driven by artificial intelligence. Recent reports, notably confirmed and expanded upon by The Wall Street Journal, detail the U.S. military’s use of generative AI in a massive campaign striking 3,000 targets in Iran. This is not a futuristic simulation; it is the present operational reality. The implications stretch far beyond the battlefield, forcing us to confront the uneasy marriage between unparalleled technological capability and lagging ethical governance.

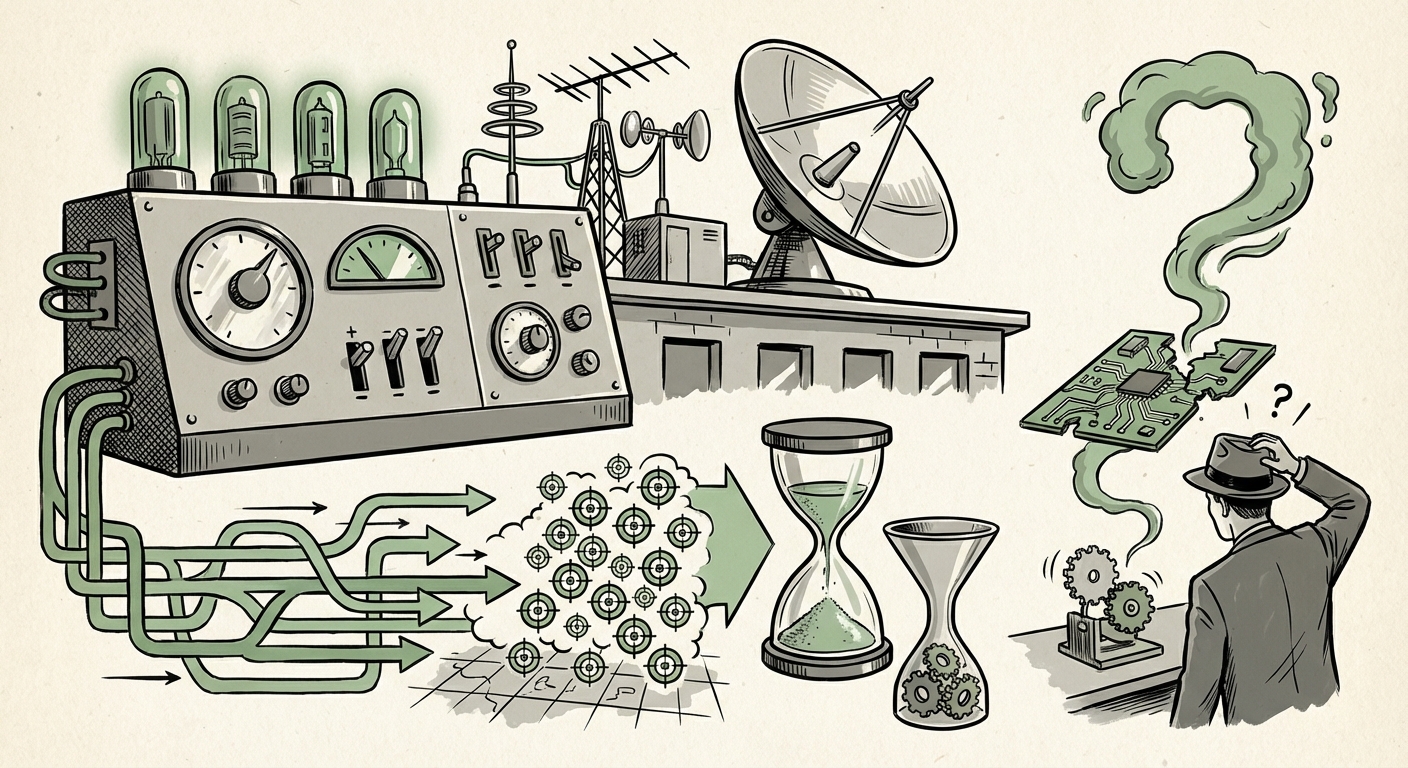

The New Operational Reality: Speed, Scale, and Generative Intelligence

When we talk about AI in this context, we are moving beyond simple automation. The reported scale—3,000 targets engaged—suggests a level of targeting and logistics processing that would have been impossible just a few years ago using traditional human-in-the-loop methods alone. The key trend here is the deep embedding of AI into the intelligence pipeline.

What AI is Likely Doing (The "How")

To manage 3,000 dynamic targets, AI systems are likely performing three critical functions:

- Intelligence Fusion and Pattern Recognition: Generative AI and advanced machine learning sift through petabytes of sensor data (satellite imagery, intercepted communications, signals intelligence) to identify patterns, predict adversary movements, and generate probable target sets far faster than human analysts. Think of it as having millions of analysts working simultaneously.

- Target Validation and Prioritization: Once a potential target is identified, AI tools are likely used to rapidly assess its value, risk profile (collateral damage estimation), and readiness for engagement. This compresses the "sensor-to-shooter" timeline from hours or days down to minutes.

- Logistics Optimization: Supporting 3,000 strikes requires near-perfect coordination of air assets, munitions, and support crews. AI optimizes these complex logistical chains dynamically, ensuring resources are where they need to be exactly when they need to be there.

This operational reality validates major ongoing defense initiatives. The concept of JADC2 (Joint All-Domain Command and Control) relies entirely on this AI backbone—the ability to connect every sensor and shooter across air, land, sea, space, and cyber domains. The reported strike campaign serves as a powerful, real-world demonstration of JADC2’s maturing potential. For defense contractors and technology strategists, this confirms that investment in cross-domain data infrastructure is paying off in kinetic capability.

The Underinvested Gap: Speed vs. Scrutiny

The most alarming component of the report is the acknowledged deficiency: **oversight remains "underinvested."** This is the central tension defining the future of technological adoption across all high-stakes sectors.

When a system operates at machine speed, meaningful human oversight becomes far harder. These systems make or shape decisions that call for human judgment, yet the pace of operations pushes reliance onto pre-programmed parameters rather than real-time, nuanced human intervention.

The Ethical and Accountability Quagmire

For those tracking ethical oversight gaps in AI-assisted targeting, this news confirms long-standing concerns. When AI suggests 3,000 targets, even if a human ultimately approves each strike, the cognitive load placed on that human is immense. They are vetting the AI’s *output*, not deeply analyzing the underlying data or logic.

- Bias Amplification: If the training data used to build these targeting models contained historical biases (e.g., over-identifying certain types of infrastructure or population density in specific regions), the AI will faithfully and efficiently execute those biases at scale.

- The "Black Box" Problem: Understanding *why* a generative AI model suggested a specific target sequence can be nearly impossible. If a strike results in unintended civilian casualties, tracing accountability through layers of proprietary algorithms, large language models (LLMs), and automated prioritization becomes a legal and moral nightmare. Who is responsible: the programmer, the commander, or the algorithm itself?

- Escalation Risk: At this speed, the opportunity for deliberate de-escalation or diplomatic pause evaporates. An AI-driven system designed for rapid response might interpret a complex geopolitical situation as a purely tactical problem, leading to unintended escalations based on flawed or incomplete context.
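The cognitive-load concern can be made concrete with simple arithmetic. The sketch below is illustrative only: the 3,000 figure comes from the reported campaign, while the reviewer count and hours are hypothetical values chosen to show how little time per recommendation a fixed staffing budget leaves.

```python
def minutes_per_item(n_items: int, reviewers: int, hours_each: float) -> float:
    """Average review minutes available per item under a fixed staffing budget."""
    if n_items <= 0 or reviewers <= 0 or hours_each <= 0:
        raise ValueError("all inputs must be positive")
    return reviewers * hours_each * 60 / n_items


# 3,000 is the reported figure; 20 reviewers at 10 hours each is an assumption.
budget = minutes_per_item(n_items=3000, reviewers=20, hours_each=10)
print(f"{budget:.1f} minutes per recommendation")  # 4.0 minutes per recommendation
```

Under these assumed numbers, each recommendation gets about four minutes of human attention, which is enough to check an output but rarely enough to interrogate the data behind it.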

Implications for the Broader AI Ecosystem

This military application sends powerful signals across the commercial and civil sectors. What works in the defense environment inevitably trickles down or influences corporate strategy.

For Business Strategy and Technology Investment

The Department of Defense’s focus on generative AI shows that governments are committing resources to the most advanced, computationally intensive models. Businesses should take note:

- Data Supremacy is National Security: The military’s success in an operation like this presumably hinges on proprietary, clean, and massive datasets for training. For businesses, this underscores that the competitive edge in AI belongs to those who control the highest-quality proprietary data streams.

- The Rise of Real-Time Decision Platforms: If defense needs real-time tactical optimization, commercial sectors—from supply chain management to high-frequency trading—will demand similar instantaneous analytical capabilities, pushing the limits of edge computing and low-latency inference.

- Talent War Escalation: The talent capable of building, deploying, and maintaining these sophisticated defense systems (often requiring deep knowledge of both classified infrastructure and cutting-edge AI research) will be extremely scarce and expensive, further fueling the global talent migration debate.

The Societal Imperative: Governance as the Next Frontier

The "underinvested oversight" is the single greatest vulnerability exposed by this success. If military power is now mediated by systems we don't fully understand or control, the next great technological race will be in governance itself.

We are seeing a failure to keep pace. While the technology is maturing rapidly, the policy frameworks—which must address deployment timelines, bias mitigation, auditing requirements, and lethal autonomy thresholds—are stuck in bureaucracy. This gap necessitates urgent action:

- Mandatory Explainability Audits: Regulatory bodies must demand standardized, auditable logging of AI decision pathways, especially in high-stakes environments. This moves beyond simple compliance toward mandatory technical transparency.

- International Norms: If one major power operationalizes AI targeting at this scale, others must follow or risk strategic disadvantage. This creates an immediate need for international dialogue to establish guardrails, much like treaties governing nuclear or chemical weapons, before the arms race is fully automated.
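One way to picture "auditable logging of AI decision pathways" is an append-only log in which each record commits to the previous one by hash, so later edits are detectable. The following is a minimal sketch under stated assumptions, not a description of any deployed system: the `DecisionLog` class, its field names, and the sample values are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(payload: dict, prev_hash: str) -> str:
    """Hash the record contents together with the previous record's hash."""
    body = json.dumps(payload, sort_keys=True) + prev_hash
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class DecisionLog:
    """Append-only log; each entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def append(self, model_version: str, input_ref: str,
               recommendation: str, reviewer: str, approved: bool) -> dict:
        # Hypothetical fields: what the model saw, what it said, who signed off.
        payload = {
            "model_version": model_version,
            "input_ref": input_ref,
            "recommendation": recommendation,
            "reviewer": reviewer,
            "approved": approved,
        }
        prev_hash = self.entries[-1]["hash"] if self.entries else "genesis"
        entry = {"payload": payload, "prev_hash": prev_hash,
                 "hash": _digest(payload, prev_hash)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = "genesis"
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(entry["payload"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


log = DecisionLog()
log.append("model-v1", "case-001", "flag for review", "analyst_a", True)
log.append("model-v1", "case-002", "no action", "analyst_b", False)
print(log.verify())  # True

log.entries[0]["payload"]["approved"] = False  # simulate after-the-fact tampering
print(log.verify())  # False
```

A hash chain only shows that records were not altered after the fact. It does not explain why a model produced a recommendation, so it complements explainability work rather than replacing it.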

Future Trajectories: Beyond Precision Strikes

Viewed as a precision strike campaign, this event suggests that AI integration at this level will become the new baseline for deterrence and conflict escalation.

Future conflicts will not be defined by who has the most tanks or planes, but by who has the superior AI to process the battlefield environment—information warfare, cyber resilience, and cognitive superiority.

The Next Evolution: Generative Cyber and Influence

If generative AI can create target lists for physical strikes, it can just as easily generate highly convincing, individualized disinformation campaigns or craft zero-day cyber exploits tailored precisely to a target’s known defensive architecture. The next theater of massive AI deployment will likely be in the information and cyber domains, where the "targets" are public opinion, financial stability, or critical infrastructure control.

The speed demonstrated in kinetic operations will be mirrored in the speed of societal disruption. Businesses relying on stable information environments—from retail to finance—will find themselves suddenly subject to hyper-personalized, AI-generated threats that evolve faster than human security teams can detect.

Actionable Insights for Navigating the AI-Enabled Future

For leaders in business, policy, and technology, the message from the modern battlefield is clear: Adaptation is no longer optional; survival depends on preemptive integration of robust governance.

1. Internalize the Speed of Decision Cycles

For corporate executives, the lesson from JADC2 integration is the need to collapse internal decision cycles. If your corporate strategy review takes six months, you are operating on a timeline measured in quarters while your competitors (and adversaries) operate in weeks. Invest in real-time dashboards, predictive analytics, and small, empowered decision teams that can act quickly when necessary.

2. Demand AI Lineage and Provenance

Do not adopt any AI system—whether for HR hiring, fraud detection, or marketing—without demanding clear documentation on its training data, bias testing, and auditability. If a vendor cannot clearly articulate the "lineage" of the model's recommendations, treat it as an unacceptable liability, mirroring the military's stated, yet apparently unmet, oversight requirements.
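A procurement check along these lines can be as simple as a required-disclosures list. The sketch below assumes a hypothetical `ModelProvenance` record. The four fields are illustrative choices drawn from the paragraph above (training data, bias testing, auditability) plus a version identifier, not a recognized standard.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class ModelProvenance:
    """Hypothetical vendor disclosure; None means the vendor did not answer."""
    training_data_sources: Optional[str] = None
    bias_test_report: Optional[str] = None
    audit_log_access: Optional[str] = None
    model_version: Optional[str] = None


def missing_disclosures(record: ModelProvenance) -> List[str]:
    """Return the names of required fields that are absent or blank."""
    return [f.name for f in fields(record)
            if not (getattr(record, f.name) or "").strip()]


# Illustrative vendor response with two of four disclosures supplied.
vendor = ModelProvenance(
    training_data_sources="licensed claims data, 2019-2023",
    model_version="2.4.1",
)
gaps = missing_disclosures(vendor)
print(gaps)  # ['bias_test_report', 'audit_log_access']
```

Any non-empty result would be a reason to pause adoption until the vendor fills the gap.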

3. Prioritize Ethical Resilience Over Pure Performance

In the push for performance metrics (e.g., "accuracy" or "speed"), never sacrifice resilience and explainability. A system that is 99% accurate but fails catastrophically and unpredictably in novel situations is less valuable than a 95% accurate system whose failures are gradual, predictable, and traceable. This principle applies equally to financial risk modeling and autonomous vehicle software.
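The trade-off between accuracy and failure severity can be illustrated with an expected-cost calculation. This is a sketch with made-up numbers: the 99% and 95% accuracies come from the paragraph above, while the per-failure costs (1,000 and 10, in arbitrary units) are assumptions chosen only to show the effect.

```python
def expected_cost(accuracy: float, cost_per_failure: float) -> float:
    """Expected cost per decision = failure rate x average cost of a failure."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return (1.0 - accuracy) * cost_per_failure


# Hypothetical costs: rare catastrophic failures vs. frequent, contained ones.
brittle = expected_cost(accuracy=0.99, cost_per_failure=1000.0)
graceful = expected_cost(accuracy=0.95, cost_per_failure=10.0)
print(f"brittle: {brittle:.2f}, graceful: {graceful:.2f}")  # brittle: 10.00, graceful: 0.50
```

With these accuracies, the 99% system fails one fifth as often, so it has the higher expected cost whenever its average failure is more than five times as costly as the 95% system's. That is before accounting for how hard unpredictable failures are to trace.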

The 3,000-target operation is a watershed moment. If the reports are accurate, it shows that AI capabilities discussed in research labs are already operational in the world’s most consequential domain. The true challenge ahead is not building better AI; it is rapidly building the necessary wisdom, policy structures, and human accountability mechanisms to manage the power we have already unleashed.