The Great Unbundling: Why AI Chatbots in Finance Signal a New Era of Tech Risk and Opportunity

The trajectory of Large Language Models (LLMs) is moving beyond creative writing and customer service. Recent reports confirm a critical mass of users are already turning to tools like ChatGPT for deeply personal and consequential tasks: retirement planning and financial advice. This migration signifies a powerful behavioral shift—the unbundling of expertise where accessibility suddenly outweighs established credentialing.

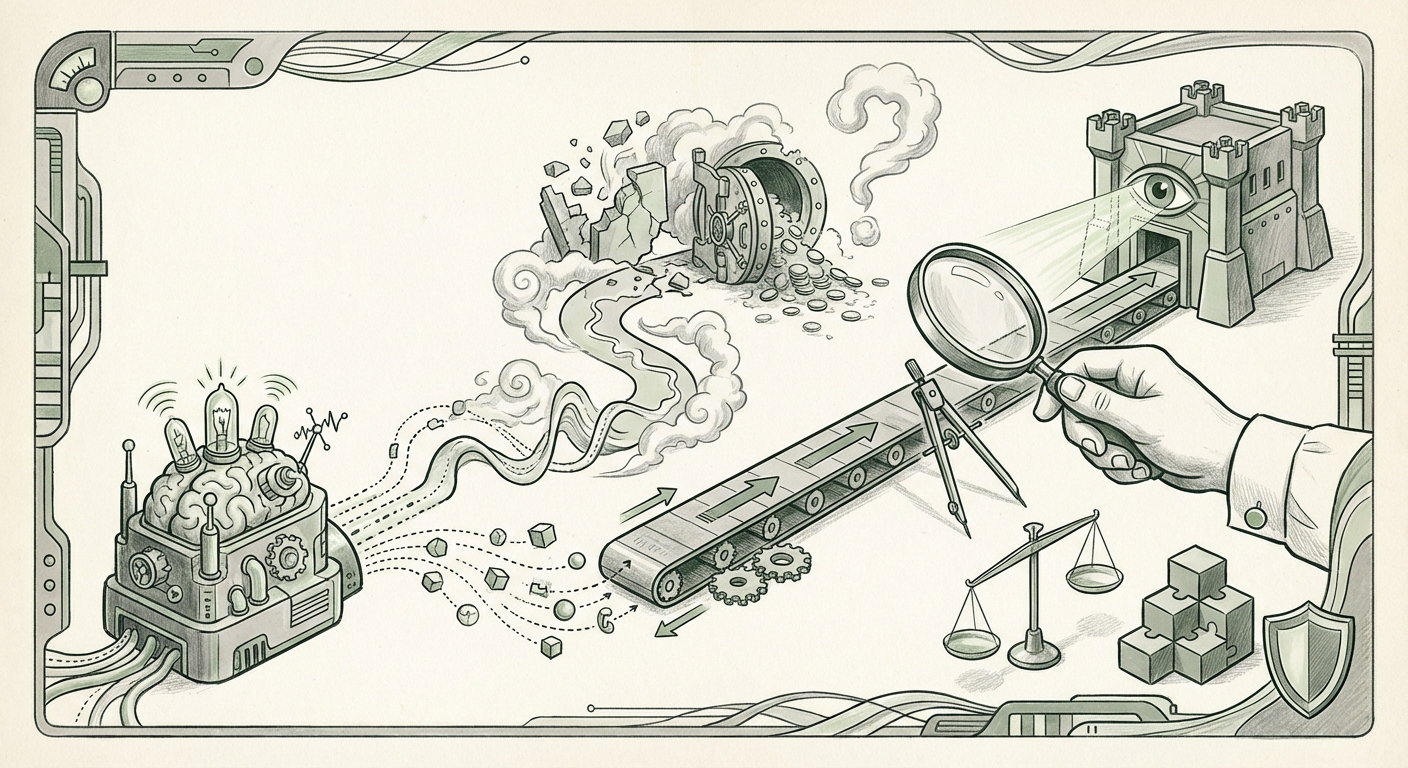

As an AI technology analyst, I see this trend as a thrilling, yet terrifying, inflection point. It confirms the public’s embrace of accessible AI, but simultaneously exposes the profound chasm between technological capability and real-world accountability. To understand where we are headed, we must move past simple adoption stories and examine the technical bedrock, the regulatory void, and the changing role of the human professional.

The Adoption Paradox: Accessibility vs. Accuracy

The core story is simple: AI chatbots offer instant, seemingly personalized financial guidance, free from the friction of scheduling appointments or paying high fees. For many, especially younger investors or those with simple needs, this immediate access is too valuable to ignore.

However, this immediacy clashes head-on with the inherent nature of current generative AI. Financial advice is not about summarizing concepts; it is about high-precision calculation, risk assessment based on volatile real-world data, and adherence to complex, localized legal statutes. This is where the technology’s weaknesses become liabilities.

The Technical Achilles' Heel: The Hallucination Risk in Quantitative Tasks

The primary technical hurdle is the LLM’s tendency to "hallucinate"—to generate confident, plausible-sounding but entirely fabricated information. While a hallucinated historical fact is annoying, a hallucinated tax deduction or an incorrect calculation regarding compound interest over 30 years can be financially ruinous.

The severity of this risk is most visible in quantitative tasks. When an LLM is asked to integrate complex variables (such as varying inflation rates, specific contribution limits, and projected investment returns), it is performing quantitative reasoning, a task it is not fundamentally designed for. LLMs excel at statistical prediction of the next *word*, not guaranteed mathematical *truth*. For high-stakes scenarios like retirement planning, where even a small error in an assumed rate compounds into a large difference in the final outcome, this technical limitation becomes an existential threat to user trust.
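To make the compounding point concrete, here is a minimal Python sketch. The contribution amount, the return rates, and the `future_value` helper are illustrative assumptions, not figures from any report. It compares a 30-year projection at a 6% annual return with the same projection when the rate is misstated as 7%.

```python
def future_value(monthly_contribution: float, annual_return: float, years: int) -> float:
    """Future value of end-of-month contributions with monthly compounding."""
    monthly_rate = annual_return / 12
    periods = years * 12
    if monthly_rate == 0:
        # No growth: the balance is just the sum of contributions.
        return monthly_contribution * periods
    # Standard future value of an ordinary annuity.
    return monthly_contribution * ((1 + monthly_rate) ** periods - 1) / monthly_rate


# Illustrative assumptions: $500 per month for 30 years.
baseline = future_value(500, 0.06, 30)   # the "true" assumed return
misstated = future_value(500, 0.07, 30)  # the same plan with the rate off by one point
gap = misstated - baseline

print(f"Baseline:  ${baseline:,.0f}")
print(f"Misstated: ${misstated:,.0f}")
print(f"Gap:       ${gap:,.0f}")
```

Under these assumptions, a one-percentage-point error in the rate shifts the projected balance by more than $100,000. A deterministic function gets this right every time, while a language model predicting text offers no such guarantee.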

For AI developers, this means the next frontier isn't just making models bigger, but making them demonstrably more reliable in factual grounding, perhaps through tighter integration with verified, live databases rather than relying solely on internal training weights.
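One way to picture this kind of grounding is a lookup that answers only from a verified store and declines otherwise. The sketch below is hypothetical: the `VerifiedFact` type, the `VERIFIED_FACTS` table, the `grounded_answer` function, and the placeholder figure are all assumptions for illustration, not a real dataset or API.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class VerifiedFact:
    value: float
    source: str   # where the figure was verified
    as_of: date   # when it was last checked


# Hypothetical store. The key, value, and source are placeholders, not real limits.
VERIFIED_FACTS = {
    "example_contribution_limit": VerifiedFact(
        value=10_000.0,
        source="placeholder: official publication",
        as_of=date(2024, 1, 1),
    ),
}


def grounded_answer(key: str, today: date, max_age_days: int = 365) -> str:
    """Answer only from the verified store; decline if missing or stale."""
    fact = VERIFIED_FACTS.get(key)
    if fact is None:
        return "No verified source available; declining to answer."
    if (today - fact.as_of).days > max_age_days:
        return "Verified figure is stale; declining to answer."
    return f"{fact.value:,.2f} (source: {fact.source}, as of {fact.as_of.isoformat()})"


print(grounded_answer("example_contribution_limit", date(2024, 6, 1)))
print(grounded_answer("unknown_rule", date(2024, 6, 1)))
```

The design choice worth noting is that the system refuses when it lacks a current, sourced figure rather than falling back on whatever the model's weights suggest.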

The Legal Tangle: Navigating the Regulatory Wasteland

If a human financial advisor gives negligent advice, there are clear frameworks for recourse, licensing bodies, and insurance. If ChatGPT suggests an investment that tanks a user’s savings, who is liable? The user? OpenAI? The platform hosting the chat integration?

This points directly to the massive void in regulatory oversight for AI financial advice. Regulators worldwide, including the SEC and bodies in Europe, are struggling to keep pace. Current financial advice regulations often hinge on the definition of a "fiduciary": a person legally obligated to act in the client's best interest.

Can an algorithm be a fiduciary? If the technology isn't regulated as a financial product or service provider, it operates in a legal gray zone. This lack of clarity deters large, risk-averse institutions from fully integrating generative AI into client-facing roles, even as individual users adopt it unsupervised. The future of AI in finance hinges on regulatory clarity. We are moving toward a world where clear disclosure will become standard practice, perhaps through a mandate that every piece of AI-generated advice be watermarked with its confidence score and a liability disclaimer.
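As a rough illustration of what such a disclosure could look like in software, the sketch below attaches a confidence score and a disclaimer to every piece of advice before it is shown. The `DisclosedAdvice` class and its fields are hypothetical, no regulator has specified this format, and a raw model confidence score is not necessarily well calibrated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisclosedAdvice:
    text: str
    confidence: float   # assumed to be a score in [0, 1]
    disclaimer: str

    def __post_init__(self) -> None:
        # Reject scores outside the assumed range instead of displaying them.
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

    def render(self) -> str:
        """Return the advice with its disclosure label appended."""
        label = f"[AI-generated | confidence: {self.confidence:.0%} | {self.disclaimer}]"
        return f"{self.text}\n{label}"


advice = DisclosedAdvice(
    text="Consider reviewing your contribution rate.",
    confidence=0.62,
    disclaimer="Not professional financial advice",
)
print(advice.render())
```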

The Evolution of Expertise: Impact on Certified Professionals

The mass adoption of AI tools creates an existential challenge for established professionals, most notably the Certified Financial Planner (CFP). If a chatbot can draft a basic budget or explain tax-advantaged accounts instantly, what is the value proposition of the human advisor?

This leads us to the structural shift in how generative AI affects the Certified Financial Planner role. The consensus is moving away from elimination and toward augmentation. Human advisors will pivot away from data recall and routine calculation, tasks that can be automated, and toward areas where empathy, behavioral coaching, complex estate planning, and understanding nuanced family dynamics are paramount.

The future CFP will likely be an AI-augmented specialist. Their value proposition shifts from being the source of information to being the **validator of high-risk AI output** and the **emotional anchor** during market volatility. Businesses that treat AI as a replacement for staff will likely fail; those that use AI to free up experts for higher-value, relational tasks will dominate.

The Consumer Lens: Trust, Data, and Digital Identity

Why do people trust a bot with their life savings? The answer lies in what drives **consumer trust in generative AI for wealth management**. Early adopters are driven by convenience and a perceived lack of bias (even if bias is simply built into the training data). However, this trust is fragile.

For trust to mature beyond the novelty phase, two major concerns must be addressed:

- Data Security and Privacy: When users input sensitive details such as salaries, debts, and investment portfolios, robust security becomes critical. This raises the question of how providers such as OpenAI handle financial data security and compliance. Consumers need assurance that their personal financial data will not be used to train the next generation of public models and will not be breached. If consumer-facing tools do not meet enterprise-level compliance standards (like FedRAMP or stringent SOC 2 attestations), trust will erode rapidly following the first major data leak.

- Behavioral Alignment: True financial success often requires fighting human impulses, like panic selling during a dip. While AI can recommend a rational course of action, it struggles to enforce behavioral discipline the way a human advisor can through regular check-ins and personalized accountability.

Future Implications: Actionable Insights for Stakeholders

This trend is not receding; it is accelerating. The integration of LLMs into finance is less a disruption and more a fundamental redesign of service delivery. Here are the actionable takeaways for key stakeholders:

For AI Developers and Platform Providers: Focus on Grounding and Certification

The priority must shift from raw capability to provable reliability in specific domains. Develop specialized, smaller models rigorously trained and *grounded* only on verified financial legislation and market data. Investment in interpretability tools that show *why* an answer was generated will be essential for earning regulatory trust.

For Financial Institutions: Embrace Augmentation, Not Replacement

Firms must rapidly integrate AI into back-office processes (compliance checks, initial client profiling) to cut costs. However, client-facing roles must evolve. The future hybrid advisor needs training not just in finance, but in AI literacy—understanding where the model might fail and how to communicate that uncertainty to the client transparently.

For Consumers: Treat AI as a Research Assistant, Not a Portfolio Manager

The most useful advice for the general public is caution. Use AI chatbots to understand concepts, model basic scenarios, or generate first drafts of financial questions. Never execute major decisions (like signing up for a mortgage or reallocating retirement funds) based solely on an LLM's output without having a qualified human expert, or verifiable primary-source documentation, confirm the advice.

The financial sector is entering its most dynamic technological phase since the advent of online trading. The promise of democratized advice is real, but the current technology still carries unacceptable levels of inherent risk. The next 24 months will be defined by the race to close the gap between mass consumer adoption and robust, certifiable regulatory and technical standards. The future of finance is intelligent, but for now, it must remain heavily supervised.