The Agentic Leap: How GPT-5.4, AI IDEs, and the New Desktop Are Reshaping Computing

The past few weeks in Artificial Intelligence have felt less like incremental updates and more like tectonic shifts. Recent analyses, such as "The Sequence Radar #820," highlight a critical convergence: the maturation of large language models (LLMs) into proactive, goal-oriented agentic systems, the rise of hyper-specialized AI applications, and the imminent redesign of our fundamental computing interfaces—the desktop.

This isn't just about chatbots getting slightly smarter; it's about the transition from AI as a *tool* you prompt to AI as a *colleague* that executes complex workflows. As technology analysts, we must look beyond the headlines of model version numbers (like the rumored GPT-5.4) and examine the architectural innovations and market disruptions that make this new era possible.

The Engine of Change: From Prompt to Plan (Agentic Architectures)

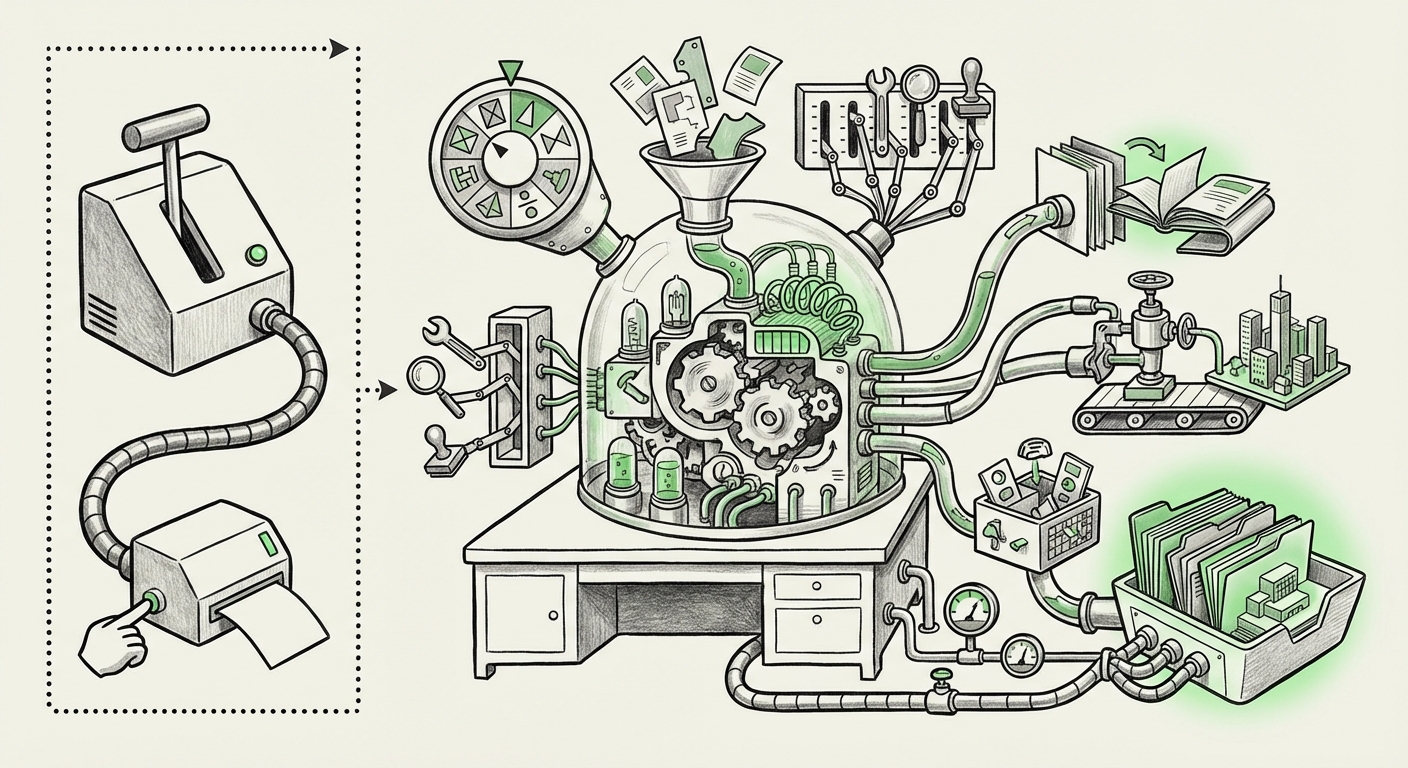

For years, the benchmark for LLMs was conversational fluency. GPT-4 stunned us with its ability to write poetry or summarize text. The next frontier is agency. An agentic system doesn't just answer a question; it breaks the request down, uses external tools, corrects its own mistakes, and achieves a final goal.

This requires deep architectural innovation that goes far beyond simply training a larger model. It demands robust planning and reasoning loops. When we look at speculation around models like "GPT-5.4," we are looking for evidence of superior internal scaffolding:

- Memory and State Tracking: Agents need persistent memory to retain past decisions and the current context across long tasks.

- Tool Use Sophistication: The ability to select the *right* tool (be it a code interpreter, a web search API, or a database query) and use it correctly, even when instructions are ambiguous.

- Self-Correction: The capacity to evaluate an output, recognize an error (a bug in code, an incorrect calculation), and iteratively refine the result without human intervention.
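The three capabilities above can be combined into one control loop. The following is a minimal Python sketch, not how any particular model or framework implements agency. The `Step` and `Agent` classes, the toy tools, and the `revise` method are hypothetical. In a real agent, a model would write the plan and choose the tool for each step. Here, `revise` stands in for the model rewriting its own input after a failed check.

```python
from dataclasses import dataclass, field
from typing import Callable

# A tool maps a text input to a text output. Real agents would wrap APIs,
# code interpreters, or database queries behind this interface.
Tool = Callable[[str], str]


@dataclass
class Step:
    goal: str                      # what this step should achieve
    tool: str                      # name of the registered tool to call
    arg: str                       # input passed to the tool
    check: Callable[[str], bool]   # success criterion for the tool's output


@dataclass
class Agent:
    tools: dict[str, Tool]
    max_attempts: int = 3
    # Memory: one (goal, tool, input, output, passed) record per attempt,
    # kept across steps so later decisions can consult earlier ones.
    memory: list[tuple[str, str, str, str, bool]] = field(default_factory=list)

    def revise(self, step: Step, failed_arg: str, bad_output: str) -> str:
        # Hypothetical stand-in for self-correction: a real agent would ask
        # the model to rewrite its input given the bad output. Here we only
        # strip stray whitespace.
        return failed_arg.strip()

    def run(self, plan: list[Step]) -> list[str]:
        results = []
        for step in plan:
            if step.tool not in self.tools:   # refuse unknown tools outright
                raise KeyError(f"unknown tool: {step.tool}")
            arg = step.arg
            for _ in range(self.max_attempts):
                out = self.tools[step.tool](arg)
                passed = step.check(out)
                self.memory.append((step.goal, step.tool, arg, out, passed))
                if passed:
                    results.append(out)
                    break
                arg = self.revise(step, arg, out)  # retry with a revised input
            else:
                raise RuntimeError(f"step failed after retries: {step.goal}")
        return results


agent = Agent(tools={"upper": str.upper, "length": lambda s: str(len(s))})
plan = [
    Step("shout the greeting", "upper", "hello", lambda o: o == "HELLO"),
    Step("count characters", "length", "  abc  ", lambda o: o == "3"),
]
print(agent.run(plan))  # ['HELLO', '3'] (second step succeeds on its retry)
```

The memory list records failed attempts as well as successful ones. That record is what lets an operator, or the agent itself, see why a step needed a retry.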

Technical discussions of agent frameworks show that the focus has moved squarely to engineering reliability into the autonomous process. These frameworks often route work between specialized models or modules. For technical audiences, this means investigating how agents manage state and handle failure modes. That is a much harder engineering challenge than simple inference.
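One common way to handle state and failure is to checkpoint each step's status, then treat transient errors and permanent errors differently. Transient errors are retried. Permanent errors stop the run and escalate to a person. The sketch below assumes that split. `run_workflow`, `TransientError`, `PermanentError`, and the JSON checkpoint format are illustrative names, not any framework's API.

```python
import json
from enum import Enum
from typing import Callable, Optional


class Status(str, Enum):
    DONE = "done"
    FAILED = "failed"            # transient errors exhausted the retry budget
    NEEDS_HUMAN = "needs_human"  # permanent error: escalate, don't guess


class TransientError(Exception):
    """A failure worth retrying, such as a timeout."""


class PermanentError(Exception):
    """A failure retrying will not fix, such as a permission error."""


def run_workflow(steps: list[tuple[str, Callable[[], None]]],
                 state: Optional[dict[str, Status]] = None,
                 max_retries: int = 2) -> dict[str, Status]:
    # `state` maps step name -> status; passing a restored checkpoint resumes a run.
    state = dict(state or {})
    for name, action in steps:
        if state.get(name) == Status.DONE:
            continue  # completed in an earlier run; don't repeat side effects
        for _ in range(max_retries + 1):
            try:
                action()
                state[name] = Status.DONE
                break
            except TransientError:
                state[name] = Status.FAILED
            except PermanentError:
                state[name] = Status.NEEDS_HUMAN
                return state
        else:
            return state  # retries exhausted; stop with the step marked FAILED
    return state


def checkpoint(state: dict[str, Status]) -> str:
    return json.dumps({name: status.value for name, status in state.items()})


def restore(blob: str) -> dict[str, Status]:
    return {name: Status(value) for name, value in json.loads(blob).items()}


calls = {"fetch": 0}


def fetch_config() -> None:
    calls["fetch"] += 1
    if calls["fetch"] == 1:
        raise TransientError("timeout")  # fails once, then succeeds


def deploy() -> None:
    raise PermanentError("permission denied")


blob = checkpoint(run_workflow([("fetch", fetch_config), ("deploy", deploy)]))
print(blob)  # {"fetch": "done", "deploy": "needs_human"}

# After a human fixes permissions, resume without re-running "fetch".
resumed = run_workflow([("fetch", fetch_config), ("deploy", lambda: None)], restore(blob))
print(calls["fetch"], resumed["deploy"].value)  # 2 done
```

When the run resumes, completed steps are skipped. This matters for agents whose actions have side effects, such as deployments or sent emails.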

What This Means for the Future of AI: Autonomy is the New Metric

The future success of AI won't be measured solely by benchmark scores on standardized tests, but by the complexity of the *real-world tasks* it can complete autonomously. If an AI can successfully manage a small cloud deployment end-to-end, it has achieved a higher level of utility than a model that simply writes excellent documentation for that process.
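One way to make "autonomy" measurable is to weight each task by its complexity and count only tasks finished with no human intervention. The sketch below uses step count as a crude proxy for complexity, which is an assumption. The `TaskResult` fields and the scoring rule are also hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    name: str
    steps: int                # assumed proxy for task complexity
    completed: bool           # did the agent reach the goal state?
    human_interventions: int  # times a person had to step in


def autonomy_score(results: list[TaskResult]) -> float:
    """Share of total task complexity completed end-to-end with no human help."""
    total = sum(r.steps for r in results)
    if total == 0:
        return 0.0
    autonomous = sum(r.steps for r in results
                     if r.completed and r.human_interventions == 0)
    return autonomous / total


runs = [
    TaskResult("write deployment docs", steps=1, completed=True, human_interventions=0),
    TaskResult("deploy small service", steps=8, completed=True, human_interventions=0),
    TaskResult("migrate database", steps=6, completed=True, human_interventions=2),
]
print(autonomy_score(runs))  # 0.6 (9 of 15 steps' worth done autonomously)
```

Under this scoring, a model that only writes the documentation earns credit for one step. A model that completes the deployment earns credit for eight.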

The Application Layer Revolution: AI-Native Tools Like Cursor

If agentic models are the engine, specialized AI-native applications are the high-performance vehicles built around them. The excitement surrounding AI-powered IDEs like Cursor illustrates this perfectly. These tools don't just *add* AI features; they are *built* around the assumption that the user is collaborating with an AI partner.

For developers, this is a profound change. In the old model, a developer works in a conventional editor (like VS Code) and consults an AI assistant added on as an extension or side panel. In the new model, the entire environment is context-aware and proactive.

The market has validated this trend, and established players are responding aggressively. The broad adoption and steadily expanding functionality of GitHub Copilot confirm that developers are ready to delegate large chunks of repetitive, boilerplate, or debugging work to AI. Cursor and similar startups push this envelope further by integrating the agentic loop directly into the core development cycle.

Practical Implication for Business: The Developer Productivity Multiplier

For CTOs and VPs of Engineering, this trend translates directly into productivity gains. Suppose an AI can handle 40% of routine code changes, bug fixes, and unit test generation. The engineering team's bandwidth then shifts toward novel problem-solving and complex system architecture. This fundamentally changes how teams staff projects and measure success. The bottleneck moves from *writing* code to *validating* AI-generated code and defining the high-level strategy.
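The arithmetic behind that claim is worth making explicit, because validation time eats into the gains. Only the 40% comes from the scenario above. The 60% routine share and the 25% review cost in this sketch are assumed figures for illustration.

```python
def net_capacity_freed(routine_share: float, ai_handled: float,
                       review_cost: float) -> float:
    """Fraction of total engineering time freed for other work.

    routine_share: fraction of team time spent on routine work (assumed)
    ai_handled:    fraction of that routine work the AI completes
    review_cost:   time spent validating AI output, as a fraction of the time it saves
    """
    for value in (routine_share, ai_handled, review_cost):
        if not 0.0 <= value <= 1.0:
            raise ValueError("all inputs must be fractions in [0, 1]")
    gross = routine_share * ai_handled   # time the AI takes off the team's plate
    return gross * (1.0 - review_cost)   # minus the time spent checking its work


# 40% of routine work from the scenario above; 60% and 25% are assumptions.
print(round(net_capacity_freed(0.6, 0.4, 0.25), 2))  # 0.18
```

Under these assumptions, the team recovers roughly 18% of its total time. The higher the review cost, the smaller that gain, which is why validation becomes the bottleneck.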

When analyzing this market, we look for evidence of widespread productivity increases. Published research on Copilot usage, including GitHub's own studies, reports substantial time savings on coding tasks. That supports the core premise that developers are embracing AI-first coding environments. It also suggests the "AI pair programmer" is becoming the industry standard, not just a novelty.

The Interface Overhaul: The Dawn of the AI Desktop

Perhaps the most disruptive implication highlighted by recent developments is the rethinking of the operating system itself. The traditional desktop metaphor—folders, icons, mouse clicks, and menus—was designed for human motor skills and finite memory. Agentic AI renders this paradigm cumbersome.

The "New Desktop" concept suggests that the OS should function as the ultimate personal assistant, understanding commands across all installed applications contextually. This is where major players become crucial indicators of future direction:

- Microsoft's Vision: The deep integration of Copilot in Windows aims to make AI the primary interaction layer. Instead of clicking through settings menus, a user asks, "Optimize my battery life for a long flight," and the OS manages the underlying configurations.

- Apple's Approach: Announcements around "Apple Intelligence" focus heavily on system-wide context awareness. Users can reference information across emails, photos, and notes with natural-language requests. Apple emphasizes privacy: requests are processed on-device where possible, and more demanding ones are handled by its Private Cloud Compute servers.
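Underneath the natural-language request, the OS still has to turn intent into concrete setting changes that the user can review and undo. This is a hypothetical sketch. A keyword lookup stands in for the language model. The setting names (`power_mode`, `screen_brightness`, and so on) are invented and are not Windows or macOS APIs.

```python
# Hypothetical intent table. A real OS assistant would use a language model
# to produce the plan; a keyword lookup stands in for it here.
INTENT_PLANS: dict[str, dict[str, object]] = {
    "battery": {"power_mode": "battery_saver", "screen_brightness": 40,
                "background_sync": False},
    "focus": {"notifications": "priority_only"},
}


def plan_for(request: str) -> dict[str, object]:
    """Collect the setting changes implied by every keyword in the request."""
    text = request.lower()
    plan: dict[str, object] = {}
    for keyword, changes in INTENT_PLANS.items():
        if keyword in text:
            plan.update(changes)
    return plan


def apply_plan(settings: dict[str, object],
               plan: dict[str, object]) -> tuple[dict[str, object], dict[str, tuple]]:
    """Return the new settings and a diff of (old, new) values the user can review or undo."""
    diff = {key: (settings.get(key), value)
            for key, value in plan.items() if settings.get(key) != value}
    return {**settings, **plan}, diff


current = {"power_mode": "balanced", "screen_brightness": 80,
           "background_sync": True, "notifications": "all"}
updated, diff = apply_plan(current, plan_for("Optimize my battery life for a long flight"))
print(diff)
# {'power_mode': ('balanced', 'battery_saver'), 'screen_brightness': (80, 40), 'background_sync': (True, False)}
```

Returning a diff alongside the new settings keeps the user in control. The assistant's change can be shown before it is applied and reversed afterwards.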

This shift means AI moves from being a side application to the glue holding the entire computing experience together. For enterprise IT, this requires rigorous assessment of security and data governance, as the OS now has unprecedented access to user context to fulfill complex goals.

What This Means for the Future: From GUI to CAI

We are moving from the Graphical User Interface (GUI) to the Conversational AI Interface (CAI). For general users, this means reduced cognitive load: less time spent navigating menus, more time achieving outcomes. For developers and researchers, it raises the question of how to benchmark the multimodal reasoning that true OS integration requires. Can the AI understand a screenshot of a complex network diagram, diagnose a connectivity issue based on that visual, and then execute the necessary firewall command?

Next-generation models must therefore support complex, cross-application reasoning. Research into new LLM benchmarks is key here, because it tests whether models have the depth of understanding needed to safely manage a personal computer.

Actionable Insights for Navigating the Agentic Future

For organizations looking to thrive in this new landscape defined by agentic computing, three strategic areas demand immediate attention:

1. Standardize Agent Architecture, Don't Just Buy Applications

While tools like Cursor offer immediate productivity boosts for development teams, businesses must begin developing internal standards for agentic interaction. Any autonomous system that touches core business logic or customer data needs defined safe boundaries, an acceptable latency, and clear accountability mechanisms. If your agents rely on internal APIs, govern those integrations with the same rigor you apply to external vendor integrations.
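Here is a minimal sketch of such a standard. It assumes boundaries are expressed as an allowlist of action names, latency as a per-action budget, and accountability as an append-only audit log. `AgentPolicy` and its fields are hypothetical. The policy only flags slow calls after they finish; it does not cancel them.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class AgentPolicy:
    allowed_actions: set[str]   # safe boundary: the only actions this agent may take
    max_latency_s: float        # acceptable latency per action
    audit_log: list[dict[str, Any]] = field(default_factory=list)  # accountability

    def execute(self, agent_id: str, action: str, fn: Callable[[], Any]) -> Any:
        entry: dict[str, Any] = {"agent": agent_id, "action": action}
        if action not in self.allowed_actions:
            self.audit_log.append({**entry, "outcome": "denied"})
            raise PermissionError(f"{action!r} is outside {agent_id}'s boundary")
        start = time.monotonic()
        try:
            result = fn()
        except Exception:
            self.audit_log.append({**entry, "outcome": "error"})
            raise
        elapsed = time.monotonic() - start
        # Latency is checked after the call, so slow actions are flagged for
        # review rather than cancelled.
        outcome = "ok" if elapsed <= self.max_latency_s else "slow"
        self.audit_log.append({**entry, "outcome": outcome, "seconds": round(elapsed, 3)})
        return result


policy = AgentPolicy(allowed_actions={"read_invoice"}, max_latency_s=2.0)
print(policy.execute("billing-agent", "read_invoice", lambda: {"id": 17, "total": 120}))
try:
    policy.execute("billing-agent", "refund_customer", lambda: None)
except PermissionError as err:
    print("blocked:", err)
print([entry["outcome"] for entry in policy.audit_log])  # ['ok', 'denied']
```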

2. Prioritize Contextual Security Over Perimeter Security

As the OS becomes AI-aware, security must shift from defending the network perimeter to guarding the context the AI operates within. If an AI agent has access to your calendar, email, and code repository, a breach of the agent itself is far more damaging than a traditional password leak, because the attacker inherits everything the agent can reach. Companies must implement fine-grained access controls for their AI agents, ensuring least-privilege principles apply to autonomous workflows.
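Least privilege for agents can start as simply as explicit, per-resource grants with deny-by-default. The `Grant` type, the resource names, and the rule that write access implies read access are assumptions made for this sketch.

```python
from dataclasses import dataclass

# Assumed rule for this sketch: "write" access implies "read" access.
ACCESS_LEVELS = {"read": 1, "write": 2}


@dataclass(frozen=True)
class Grant:
    resource: str   # e.g. "calendar", "email", "repo:payments" (hypothetical names)
    access: str     # "read" or "write"


def is_allowed(grants: frozenset[Grant], resource: str, access: str) -> bool:
    """Deny by default: allow only if some grant covers this resource and access level."""
    needed = ACCESS_LEVELS[access]
    return any(g.resource == resource and ACCESS_LEVELS[g.access] >= needed
               for g in grants)


# A scheduling agent needs to edit the calendar and read email, nothing more.
scheduler = frozenset({Grant("calendar", "write"), Grant("email", "read")})
print(is_allowed(scheduler, "calendar", "read"))       # True (write implies read)
print(is_allowed(scheduler, "email", "write"))         # False
print(is_allowed(scheduler, "repo:payments", "read"))  # False (never granted)
```

If this scheduling agent were compromised, the attacker could read email and edit the calendar. The code repository would remain out of reach.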

3. Re-Skill for Prompt Engineering and Outcome Validation

The job description for the future knowledge worker involves less manual execution and more precise goal definition and validation. Training staff not just on how to prompt, but how to *verify* complex, multi-step agentic outputs, is crucial. The focus shifts from "how to do the task" to "how to define the success criteria for the machine."
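Success criteria are easier to verify when they are written down as explicit, named checks rather than left implicit in a prompt. In this hypothetical sketch, the agent's output is a report dictionary and each criterion is a predicate over it. The field names are invented for illustration.

```python
from typing import Callable

# A criterion is a human-readable name plus a predicate over the agent's report.
Criterion = tuple[str, Callable[[dict], bool]]


def verify(report: dict, criteria: list[Criterion]) -> list[str]:
    """Return the names of the criteria the report fails; an empty list means accept."""
    return [name for name, check in criteria if not check(report)]


# Success criteria for a hypothetical "fix the failing billing test" task.
criteria: list[Criterion] = [
    ("all tests pass", lambda r: r["tests_failed"] == 0),
    ("change stays small", lambda r: len(r["files_changed"]) <= 3),
    ("no schema migrations", lambda r: not r["touched_migrations"]),
]

report = {"tests_failed": 0, "files_changed": ["billing.py", "test_billing.py"],
          "touched_migrations": True}
print(verify(report, criteria))  # ['no schema migrations']
```

Here, the human's work is writing and maintaining `criteria`. The agent's output is then accepted or rejected against them.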

Conclusion: The Quiet Revolution of Utility

The current wave of AI innovation—encapsulated by sophisticated models, specialized execution environments, and OS-level integration—is not just iterative improvement; it is a fundamental change in the human-computer contract. We are moving into an era where the computer anticipates our needs, manages complexity behind the scenes, and acts on our behalf.

Whether the next major model is officially termed GPT-5.4 or something else entirely, its utility will be judged by its ability to power these new agentic workflows. The desktop, once a static collection of windows, is transforming into a dynamic, intelligent substrate ready to handle complexity. Those who understand the underlying architecture of agency and actively redesign their workflows to leverage these capable entities will be the ones who define the next decade of technological advantage.