The Agentic Leap: Why AI Is Moving From Chatbots to Your Desktop OS

The digital landscape is shifting under our feet. For the past few years, the primary interaction model with advanced AI has been conversational: we type a prompt into a chat window (like ChatGPT) and receive a text response. This was the era of the powerful, yet isolated, chatbot. However, recent developments, including reports on the implied capabilities of a potential "GPT-5.4" model and specialized tools like Cursor, signal a profound transition: the move to agentic AI deeply embedded within our operating systems.

This is more than just a feature upgrade; it represents the arrival of AI that can act, not just answer. For technologists, businesses, and everyday users alike, understanding this inflection point is crucial to navigating the next decade of software evolution.

The Core Transition: From Prompt to Action (Agentic AI)

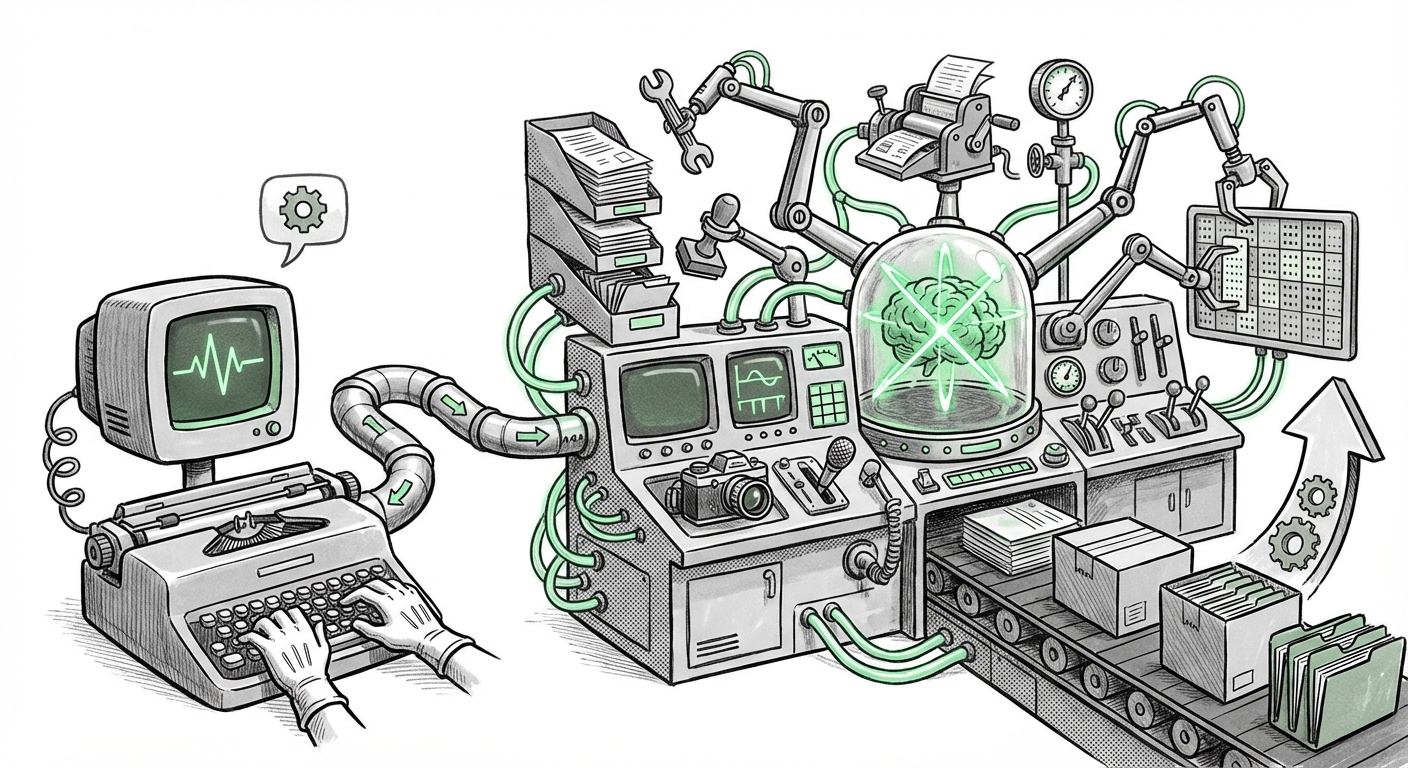

The essence of the change lies in agency. A traditional LLM is a brilliant consultant; an AI agent is a capable employee. The discussion around sophisticated AI systems points directly toward the rise of autonomous AI agents capable of complex, multi-step tasks. We are moving past simple API calls to models that can:

- Plan: Deconstruct a high-level goal ("Build a functional prototype website for my new product") into sequential, actionable sub-tasks.

- Reflect: Assess the outcome of a step, identify errors or gaps, and dynamically adjust the plan.

- Utilize Tools: Interact with external software, access files, and execute code reliably.

As analyses of agent architectures suggest, the challenge isn't just generating good text but managing the loop of execution and error correction over extended periods. When an agent can handle the entire workflow, from understanding the initial request to validating the final output, the productivity gains can compound, because each step no longer waits on a human handoff. This requires better memory, greater planning depth, and more robust reasoning than early chatbots offered.
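The plan, act, and reflect loop described above can be sketched in a few lines. This is a minimal illustration, not a real agent framework: the `Agent` class, the fixed plan, the retry policy, and the `flaky_tool` helper are all hypothetical stand-ins, and a real system would replace `plan` and `reflect` with model calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StepResult:
    ok: bool
    output: str


# A tool is any callable that takes one string argument and reports success.
Tool = Callable[[str], StepResult]


@dataclass
class Agent:
    tools: dict[str, Tool]
    max_retries: int = 2
    log: list[str] = field(default_factory=list)

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Stand-in for a model call: ignores the goal and returns a fixed
        # list of (tool name, argument) steps.
        return [("write_file", "index.html"), ("run_tests", "index.html")]

    def reflect(self, result: StepResult, attempt: int) -> str:
        # Assess the outcome of a step: move on, retry, or give up.
        if result.ok:
            return "continue"
        return "retry" if attempt <= self.max_retries else "abort"

    def run(self, goal: str) -> bool:
        for tool_name, arg in self.plan(goal):
            attempt = 1
            while True:
                result = self.tools[tool_name](arg)
                self.log.append(
                    f"{tool_name}({arg}) attempt {attempt}: {result.output}"
                )
                decision = self.reflect(result, attempt)
                if decision == "continue":
                    break
                if decision == "abort":
                    return False
                attempt += 1
        return True


def flaky_tool(failures: int) -> Tool:
    """Hypothetical tool that fails a fixed number of times, then succeeds."""
    remaining = [failures]

    def tool(arg: str) -> StepResult:
        if remaining[0] > 0:
            remaining[0] -= 1
            return StepResult(False, "error")
        return StepResult(True, "ok")

    return tool


agent = Agent(tools={"write_file": flaky_tool(0), "run_tests": flaky_tool(1)})
finished = agent.run("Build a functional prototype website")
```

Even in this toy form, the interesting logic sits in `reflect` rather than in text generation: the loop keeps a log of every attempt and decides when an error is worth retrying and when the whole goal should be abandoned.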

The Technical Scaffolding Behind Agency

For those on the technical side, this means that foundational models must demonstrate mastery over symbolic reasoning and persistent context. We are seeing the underlying technology evolve to support these autonomous loops. This matters because an agent is only as dependable as the model beneath it: only when the model can reliably perform multi-step reasoning can the application layer (the agent) deliver on its promise.

The Desktop Becomes the Battleground: AI Native Operating Systems

The practical manifestation of agentic AI is its integration directly into the user's workspace. Tools like Cursor are pioneering this by embedding the AI within the coding environment, allowing it to edit files, run tests, and interface with the local development stack. The next logical step is broader integration across the entire operating system (OS).

Imagine telling your computer, "Archive all invoices from Q3, summarize the spending trends for my manager in an email, and schedule a meeting to discuss the findings." This requires an AI that is not confined to a web browser tab. It must have secure, system-level access to email clients, file explorers, and scheduling software.

This necessitates what many technologists are calling an "AI Native Operating System Rebuild." Current graphical user interfaces (GUIs) were built for manual human input via mouse and keyboard. An AI-native OS would instead be optimized for high-speed, programmatic instruction from an autonomous agent. This means:

- Contextual Awareness: The OS must provide the agent with a real-time, holistic view of what the user is looking at, working on, and needs to do next.

- Permission Layering: Security becomes paramount. Agents need granular permission sets (e.g., "read-only access to Finance folder" vs. "full edit rights in Code Repository X").

- Unified Action Schema: Standardized ways for AI agents to trigger actions across diverse applications, regardless of whether they are legacy software or brand new cloud tools.
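To make the last two requirements more concrete, here is one way a unified action record and layered permissions might fit together. Everything in this sketch is an assumption made for illustration: the `Action` and `Grant` types, the `is_allowed` function, and the folder names are hypothetical and do not correspond to any existing OS API.

```python
from dataclasses import dataclass
from enum import Enum
from pathlib import PurePosixPath


class Access(Enum):
    READ = 1
    WRITE = 2


@dataclass(frozen=True)
class Grant:
    root: PurePosixPath  # folder the grant applies to
    access: Access       # highest level of access allowed under that folder


@dataclass(frozen=True)
class Action:
    # One record shape for every application (the "unified action schema").
    app: str             # e.g. "files" or "mail"
    verb: str            # e.g. "read" or "write"
    target: PurePosixPath


def is_allowed(action: Action, grants: list[Grant]) -> bool:
    # Refuse paths containing ".." so a grant cannot be escaped lexically.
    if ".." in action.target.parts:
        return False
    # Any verb other than "read" is treated as needing write access.
    needed = Access.READ if action.verb == "read" else Access.WRITE
    for grant in grants:
        inside = (action.target == grant.root
                  or grant.root in action.target.parents)
        if inside and grant.access.value >= needed.value:
            return True
    return False  # default deny


grants = [
    Grant(PurePosixPath("/Finance"), Access.READ),
    Grant(PurePosixPath("/Code/RepoX"), Access.WRITE),
]
invoice = PurePosixPath("/Finance/q3/invoice.pdf")
can_read = is_allowed(Action("files", "read", invoice), grants)
can_write = is_allowed(Action("files", "write", invoice), grants)
```

The design choice worth noting is the default-deny return at the end: an action is permitted only when some grant explicitly covers it, which mirrors the "read-only Finance folder" versus "full edit rights in a repository" distinction above.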

This structural shift is where the real battle for the future UI is being waged, whether by established giants updating Windows or macOS, or by new platforms built from the ground up around AI as the primary interface.

The Engine Room: Advanced Multimodality

For an agent to truly operate in the real world—or the digital desktop world—it must perceive the world beyond plain text. This is where the implied power of models like "GPT-5.4" comes into focus, driven by leaps in multimodality.

Multimodality means the AI can seamlessly process and generate content across different types: text, spoken audio, live video streams, and complex visual data like charts or diagrams. For an agent, this capability is transformative:

- Visual Debugging: An agent sees a screenshot of a broken webpage layout and diagnoses the incorrect CSS without needing a human to describe the visual error in text.

- Procedural Understanding: An agent watches a 30-second video tutorial and immediately translates that sequence of mouse clicks and menu selections into an executable script.

Benchmarks comparing recent large model releases suggest that progress in unifying these input types is accelerating. Integrating such multimodal models gives the agent the 'eyes' and 'ears' it needs to function intelligently within a dynamic desktop environment, moving it closer to the human-level perception that complex tasks require.

Business Implications: The Productivity Shockwave

The convergence of agentic planning, deep OS integration, and advanced perception creates a potential Productivity Shockwave across knowledge work. This is not just about automating single tasks; it’s about automating entire workflows previously requiring sustained human coordination.

For Businesses and Leaders: Rethinking Roles

If an agent can reliably manage data extraction, analysis, reporting, and follow-up scheduling (tasks that currently consume significant portions of roles in finance, administration, and even software development), the economic calculus changes dramatically. Consulting reports often suggest that the immediate impact will be felt in areas requiring high-volume, low-variance coordination.

Actionable Insight for Leaders: Instead of focusing solely on "AI replacement," focus on AI Augmentation Level 3: Autonomous Workflow Management. Identify the three most time-consuming, multi-step processes in your mid-tier knowledge worker roles. These are the prime candidates for immediate agent deployment. Train your teams not to *do* those tasks, but to oversee and refine the agents that perform them.

For Developers: The New Stack

For software engineers, tools like Cursor are just the beginning. The future stack will likely feature specialized AI components working in concert:

- A Planning Core (the orchestrator).

- Specialized Tool-Use Modules (for specific APIs or software).

- A Security/Audit Layer (monitoring agent actions).

This requires a shift in skill sets away from monolithic application building toward designing robust, trustworthy communication protocols between independent, intelligent components. Security and verification become the new bottlenecks, not just model performance.
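A rough sketch of how those three components might communicate is shown below. The class names (`PlanningCore`, `ToolModule`, `AuditLayer`, `EchoTool`) are hypothetical, and the "protocol" is simply a Python `Protocol` type. The point is only that every tool call passes through the audit layer rather than being invoked directly.

```python
from dataclasses import dataclass, field
from typing import Protocol


class ToolModule(Protocol):
    """Contract every specialized tool-use module is assumed to follow."""
    name: str

    def call(self, request: str) -> str: ...


@dataclass
class AuditLayer:
    blocked: frozenset[str] = frozenset()
    records: list[tuple[str, str, str]] = field(default_factory=list)

    def invoke(self, tool: ToolModule, request: str) -> str:
        # Record every call, including the ones that are refused.
        if tool.name in self.blocked:
            self.records.append((tool.name, request, "BLOCKED"))
            raise PermissionError(f"tool {tool.name!r} is blocked")
        response = tool.call(request)
        self.records.append((tool.name, request, response))
        return response


@dataclass
class PlanningCore:
    tools: dict[str, ToolModule]
    audit: AuditLayer

    def execute(self, plan: list[tuple[str, str]]) -> list[str]:
        # The orchestrator never calls a tool directly, only via the audit layer.
        return [self.audit.invoke(self.tools[name], req) for name, req in plan]


@dataclass
class EchoTool:
    """Hypothetical tool module that just reports what it was asked to do."""
    name: str

    def call(self, request: str) -> str:
        return f"{self.name} handled {request}"


audit = AuditLayer()
core = PlanningCore(tools={"calendar": EchoTool("calendar")}, audit=audit)
responses = core.execute([("calendar", "book 10:00")])
```

Because the orchestrator depends only on the `ToolModule` contract, new tools can be added without touching the planning code, and the audit records give reviewers a single place to see what the agent actually did.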

Navigating the Road Ahead: Challenges and Guardrails

While the promise of agentic AI is immense, moving AI into the core of our workflow systems introduces significant risks that must be proactively managed.

Trust and Explainability

When an agent executes code that modifies critical files or sends sensitive communications, the user must be able to trust it. That trust hinges on explainability. If an agent makes a costly mistake, we need forensic tools to trace the exact reasoning, planning steps, and tool calls that led to the error. Without strong explainability frameworks, adoption in high-stakes environments (like finance or engineering) will stall.
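One simple form such forensic tooling could take is a trace in which every event records the event that caused it, so a failure can be walked back to the original goal. The `TraceEvent` shape and the `trace_back` function below are illustrative assumptions, not an established standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TraceEvent:
    event_id: int
    parent_id: Optional[int]  # the event that caused this one, if any
    kind: str                 # "goal", "plan", "tool", or "result"
    detail: str


def trace_back(events: list[TraceEvent], failed_id: int) -> list[TraceEvent]:
    """Return the chain of events from the root goal down to the failure."""
    by_id = {e.event_id: e for e in events}
    chain: list[TraceEvent] = []
    seen: set[int] = set()
    current = by_id.get(failed_id)
    # Follow parent links upward; the seen set guards against cyclic logs.
    while current is not None and current.event_id not in seen:
        seen.add(current.event_id)
        chain.append(current)
        if current.parent_id is None:
            break
        current = by_id.get(current.parent_id)
    chain.reverse()
    return chain


events = [
    TraceEvent(1, None, "goal", "Email Q3 spending summary"),
    TraceEvent(2, 1, "plan", "Collect invoices, then summarize"),
    TraceEvent(3, 2, "tool", "files.read /Finance/q3"),
    TraceEvent(4, 2, "tool", "mail.draft summary"),
    TraceEvent(5, 3, "result", "error: folder not found"),
]
chain = trace_back(events, failed_id=5)
```

Here the unrelated `mail.draft` call is left out of the chain, which is the property a reviewer wants: only the reasoning and tool calls that actually led to the error.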

Security and Containment

Giving an autonomous system access to an entire desktop is the ultimate security challenge. Malicious prompts or unforeseen emergent behaviors could lead to widespread system damage or data exfiltration. This reinforces the need for the OS to build comprehensive sandboxing and capability checks specifically designed for agents, rather than retrofitting security onto legacy systems.
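As a small example of a capability check aimed at the exfiltration risk, an agent runtime could refuse any outbound request whose destination is not on an explicit allowlist. The `egress_allowed` function and the host names are hypothetical; the only real API used is `urllib.parse.urlsplit` from the Python standard library.

```python
from urllib.parse import urlsplit


def egress_allowed(url: str, allowed_hosts: set[str]) -> bool:
    """Allow only HTTPS requests to an allowlisted host or its subdomains."""
    try:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower()
    except ValueError:
        return False  # malformed URL: refuse rather than guess
    if parts.scheme != "https" or not host:
        return False
    # Match the host exactly, or as a subdomain with a leading dot, so that
    # "example.com.evil.net" does not pass as "example.com".
    return any(host == h or host.endswith("." + h) for h in allowed_hosts)


allowed = {"example.com"}
ok = egress_allowed("https://mail.example.com/send", allowed)
spoofed = egress_allowed("https://example.com.evil.net/upload", allowed)
```

A check like this is only one layer. It limits where data can be sent, but it says nothing about what the agent may read in the first place, which is why it would sit alongside sandboxing rather than replace it.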

Conclusion: The Era of the Digital Coworker

The convergence of hyper-capable models, the architectural shift toward autonomous agents, and the integration into the operating system marks the definitive end of AI as a novelty search bar. We are entering the era of the Digital Coworker.

This shift promises unparalleled productivity, allowing human talent to focus exclusively on creativity, strategy, and complex, novel problem-solving—the areas where human intuition still far outstrips even the most advanced algorithms. However, realizing this future depends not just on the speed of model iteration, but on our collective ability to architect secure, trustworthy, and governable operating environments capable of hosting these powerful new entities.

The sequence is clear: better models lead to better agents, and better agents demand a better operating system. The next few years will be defined by how quickly we can rebuild the digital infrastructure to meet the intelligence we are now unlocking.