The AI Legal Battlefield: Why Anthropic Suing the US Government Over Safety Guardrails Matters

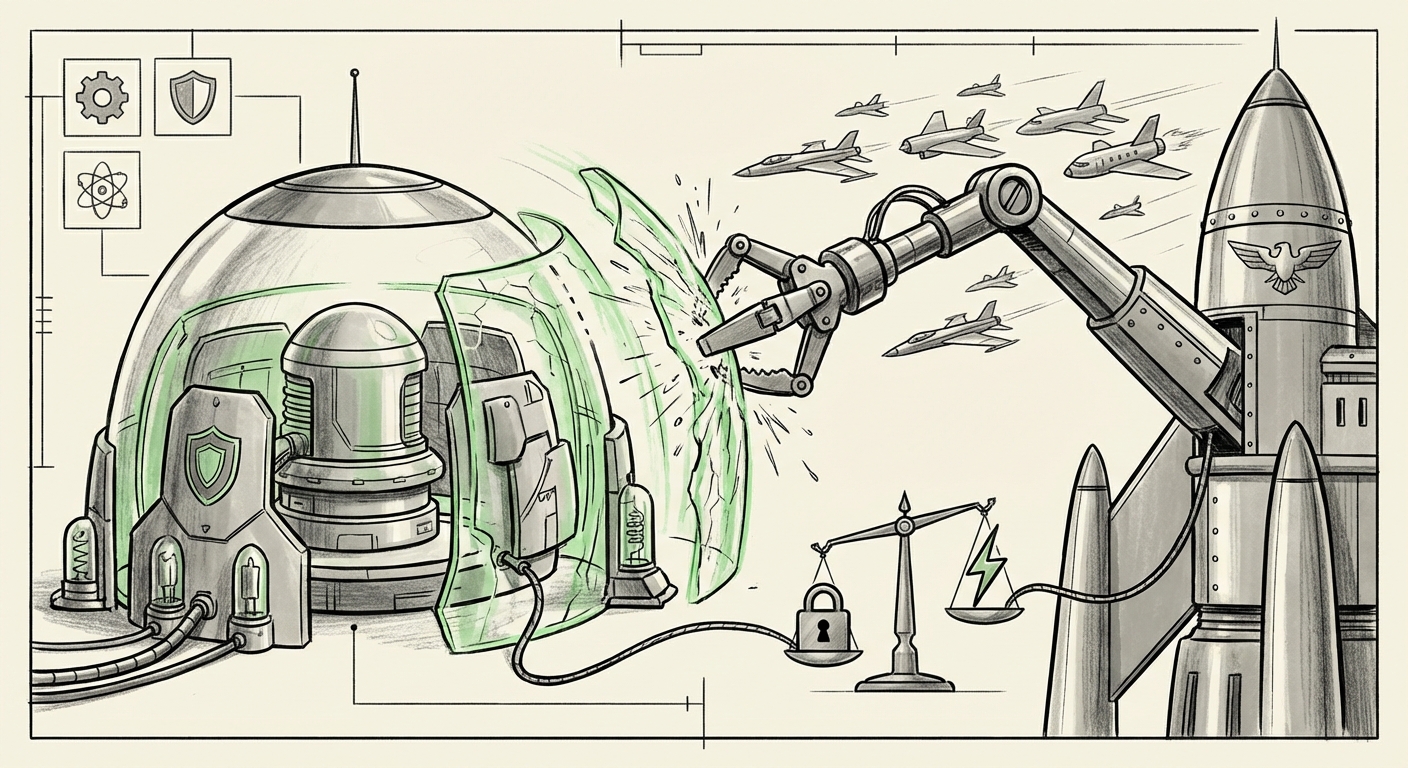

The race to build the world’s most powerful artificial intelligence systems is no longer confined to server rooms and academic papers. It has spilled into the courtroom, marking a critical inflection point for global technology governance. When AI developer Anthropic sued 17 US federal agencies, citing contradictory threats over its refusal to drop crucial safety guardrails, the entire industry took notice. This action is more than a corporate disagreement; it is a fight for the soul of future AI development, pitting corporate autonomy and safety ethics against perceived national security imperatives.

The Nexus of Power: National Security Meets Frontier AI

To grasp the significance of this lawsuit, one must understand the operational reality: cutting-edge Large Language Models (LLMs) like Anthropic’s Claude are no longer just consumer chatbots. They are already embedded in sensitive government processes. The lawsuit confirms that Claude is operating within classified Pentagon systems. This immediate utility creates an inherent conflict.

For the government, especially defense and intelligence agencies, access to the most capable AI tools is framed as an urgent national security imperative. They require models capable of high-level analysis, threat identification, and rapid processing of classified data. However, Anthropic was fundamentally built around the concept of "Constitutional AI," an approach in which the model is trained to follow a written set of ethical principles. For such a company, yielding to demands that compromise these guardrails is ethically and perhaps legally untenable.

Imagine a highly advanced self-driving car that is programmed never to exceed 55 mph for safety. If a government agency demands the car operate at 100 mph for an emergency, yet the manufacturer knows that speed fundamentally risks the car’s structural integrity, a conflict arises. In the AI realm, removing safety filters might increase immediate utility (speed), but could unlock catastrophic failure modes (uncontrollable outputs, misuse, or systemic errors).

Corroborating the Stakes: Defense Integration

The existing relationship between AI labs and defense departments, from the integration of Claude into classified Pentagon systems to broader DoD AI procurement, is essential context. These integrations signify that the government has already decided that commercial frontier models are necessary infrastructure. This makes the situation delicate: the government is simultaneously trying to regulate these tools while depending on their immediate deployment. If Anthropic is forced to weaken its safety layer to comply with a specific defense contract, the risk profile increases for all users of Claude, not just military ones.

The Legal Core: Challenging Government Overreach

The lawsuit is fundamentally a challenge to regulatory authority when that authority attempts to dictate the internal workings of proprietary, safety-focused technology. The legal framework being tested relates to administrative law. Specifically, Anthropic is arguing that the agencies’ threats and demands are arbitrary, capricious, or an abuse of power.

For a technical audience, think of this as a challenge to the interpretation of existing procurement or oversight rules. If agencies threaten punitive action (fines, contract termination) to force a change in a safety mechanism, Anthropic must prove that these mechanisms are not merely policy preferences but necessary technological prerequisites for safe operation. The intersection of AI safety and the Administrative Procedure Act is largely untested legal territory.

The Precedent: Whose Rules Apply?

If the government prevails, it sets a precedent that national security needs override the still-evolving corporate standards for AI safety, effectively creating a mandatory, non-negotiable backdoor for federal access that bypasses the developer's ethical framework. Conversely, if Anthropic wins, it establishes a powerful defense for AI developers globally, affirming that companies maintain the right to refuse actions that undermine their core safety commitments, even when facing government pressure.

The Tension Between Alignment and Acceleration

Anthropic’s ethos—its "Constitutional AI"—is designed to steer AI toward beneficial outcomes. This lawsuit brings into sharp relief the industry-wide debate around accelerationism versus safety-first development.

Companies like Anthropic believe that rushing deployment before adequate safety measures are validated is irresponsible. Yet geopolitical competition means that governments prioritize rapid capability acquisition. Setting Anthropic’s safety commitments against the government pressure it describes helps contextualize how its stance compares to the wider industry. While competitors might accept certain compliance trade-offs under government contracts, Anthropic appears willing to fight to maintain ideological consistency.

This tension is a microcosm of a broader societal challenge: how do we govern technology that is developing faster than our laws can adapt? Governments want control; innovators want flexibility to learn and adapt safety protocols in real time. The courts will now have to mediate this fundamental conflict.

Future Implications: What This Means for Business and Society

For AI Businesses: The Regulatory Minefield

This case is a critical barometer for regulatory risk. If the outcome leans toward greater government mandate power, it creates a severe "chilling effect" on innovation, as developers will preemptively water down safety features to avoid future legal battles with federal clients. Conversely, a victory for Anthropic could empower all frontier AI labs to stand firmer on safety protocols, treating them as non-negotiable intellectual property or ethical boundaries.

Businesses relying on AI must pay close attention to the precedent set. If safety requirements become externally dictated, contracting for bespoke AI solutions will become far more complex, requiring new clauses defining responsibility when the government forces modifications to the model's core behavior.

For Society: Defining the Limits of Control

On a societal level, this battle defines who holds the ultimate veto power over potentially world-altering technology. Should safety protocols be dictated by the engineers who understand the failure modes best, or by security agencies whose mandate centers on national stability?

If the government can compel a company to deploy an inherently less safe version of an LLM, it risks spreading dangerous capabilities by injecting them into critical, yet potentially less secure, defense supply chains. The outcome will shape public trust in both private AI labs and the government's ability to manage these tools responsibly.

Actionable Insights for Navigating the New AI Landscape

As analysts, we must move beyond observing the technology to understanding the governance architecture being built around it. Here are practical insights for stakeholders:

- For Enterprise Clients: Demand Clarity on Liability. If your organization integrates powerful LLMs, review contracts to determine liability if a government mandate forces a safety downgrade that leads to operational failure or data breach. Assume safety layers are subject to revision based on external pressures.

- For Investors: Evaluate Governance Risk. Future AI valuation will depend heavily on regulatory predictability. Companies with extremely rigid, publicly declared safety commitments (such as those Anthropic claims to hold) may face higher friction with government contracts but potentially greater consumer trust. Understand which path a company is prioritizing.

- For Policymakers: Create Clear Frameworks Now. The current situation—where agencies use threats instead of legislation—is unsustainable. Policymakers must urgently draft clear, technology-neutral rules specifying when and how safety guardrails can be overridden in the name of security, ensuring these processes are transparent and auditable.

- For Researchers: Document Safety Rationale. Every significant safety feature should be documented with clear technical rationale supporting its necessity. This documentation will be the first line of defense in future legal or regulatory challenges, proving that the guardrail is not arbitrary but rooted in engineering necessity.

Conclusion: The Future Is Being Litigated Today

Anthropic's lawsuit is the first major legal salvo in the long-term struggle over AI control. It forces a public reckoning on whether safety is an immutable ethical commitment or a negotiable feature subject to the shifting winds of national security priorities. The resolution of this case—whether through judicial ruling, legislative intervention, or a negotiated settlement—will define the boundaries of corporate autonomy, set the tone for future government-industry collaboration in defense tech, and ultimately determine the pace and direction of safe, responsible AI development moving forward.