The Great AI Data Pivot: Why Video is the Next Massive Training Frontier After Text Exhaustion

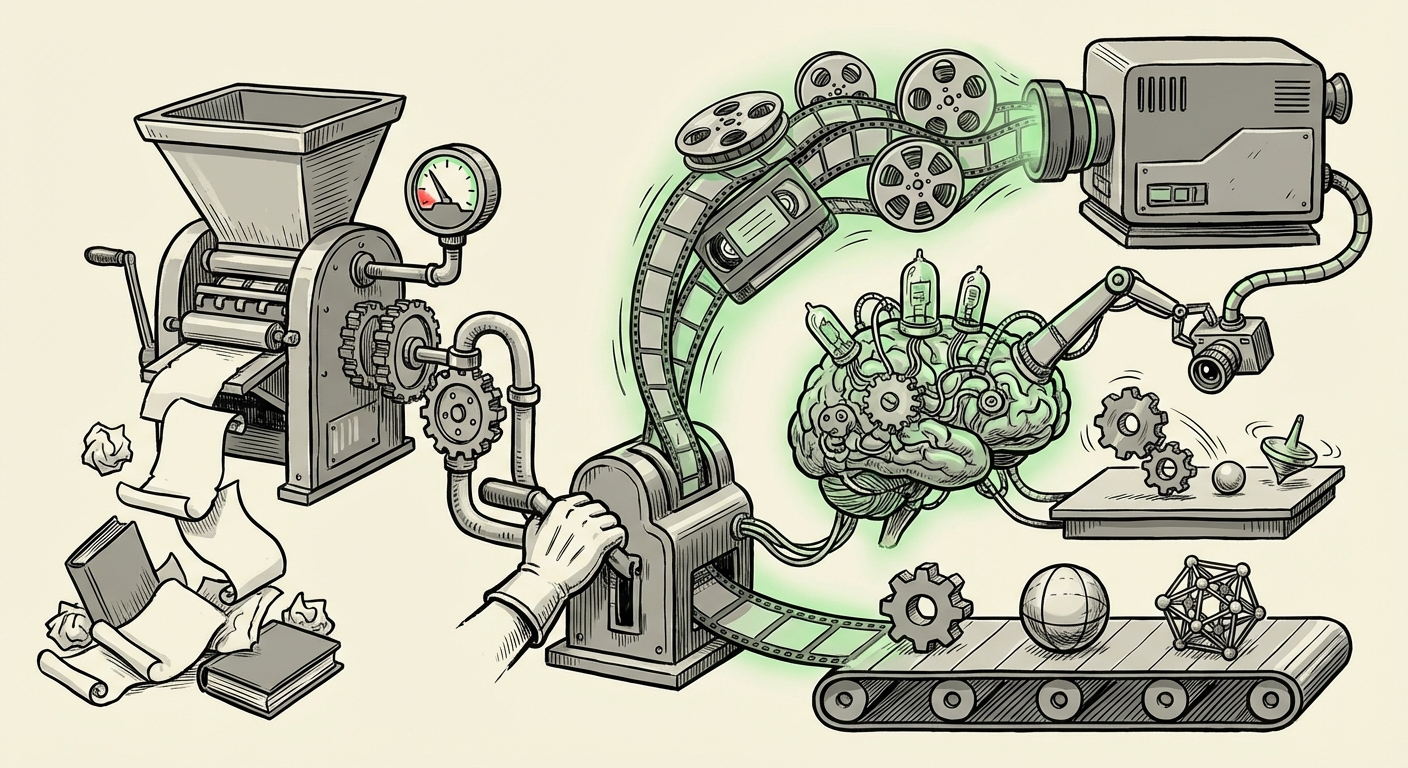

For the last decade, the engine powering the incredible leaps in Artificial Intelligence, from chatbots that write poetry to assistants that write code, has been text: specifically, massive amounts of publicly available, human-written text scraped from the internet. But like any finite resource, the supply of easy text data is nearing its end. Recent research, notably from Meta FAIR and NYU, suggests that the well of high-quality, unique text is running dry. This isn't just a logistical problem; it's a fundamental signal that the next era of AI must be radically different: a truly multimodal age, centered on video.

This pivot from words to moving images represents a shift from teaching AI what we *say* to teaching AI how the world *works*. It moves us closer to building systems that possess genuine common sense and understanding of physics, motion, and complex social dynamics.

The End of the Text Scrape: Understanding Data Scarcity

To appreciate the significance of video, we must first understand the problem with text. Large Language Models (LLMs) thrive on scale. To train models like GPT-4 or Llama 3, researchers needed trillions of tokens of text. The internet may seem infinite, but the amount of truly high-quality, diverse, and novel text is not. As research on text data exhaustion suggests, we are running into diminishing returns: once a model has consumed most of what is publicly available, repeating the same data adds less and less, and models risk memorizing rather than learning fundamental concepts.

Imagine learning a language only by reading books. You might master grammar and vocabulary, but you wouldn't know how to catch a ball, gauge the speed of an oncoming car, or interpret sarcasm based on a flicker in someone’s eye. Text data is rich in semantics, but poor in embodiment.

What This Means for Scaling

- Quality Over Quantity: As the supply of fresh, high-quality text runs out, the focus shifts to expensive curated or synthetic data. That cost is a bottleneck that favors the few organizations able to pay it and works against democratization.

- Performance Plateau: Without new, rich input, the performance gains that characterized the early LLM era are likely to slow down.

Video: The Infinite Textbook of Reality

If text is the transcript of the world, video is the live simulation. Meta’s research points toward unlabeled video as the next great repository of knowledge. Why is video so powerful?

Video data is inherently multimodal. A video stream carries visual information (the pixels in each frame), audio information (sound), and temporal information (how the scene changes from one frame to the next). Training a model to predict what comes next in raw video forces it to build an internal, predictive model of reality.
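To make the "predictive model" idea concrete, here is a deliberately tiny sketch, assuming a one-dimensional "video" in which a single bright pixel drifts one position per frame. The training signal is simply the next frame of the clip, so no human labels are involved, and a predictor that captures the motion earns a lower error than one that assumes the scene is static. All function names are hypothetical; real systems learn this behavior with neural networks over full frames rather than hand-written rules.

```python
from typing import Callable, List

Frame = List[float]  # one row of pixel intensities (a 1-D "image")


def make_moving_dot_video(width: int, num_frames: int, speed: int = 1) -> List[Frame]:
    """Toy clip: a single bright pixel moves `speed` positions per frame."""
    frames = []
    for t in range(num_frames):
        frame = [0.0] * width
        frame[(t * speed) % width] = 1.0
        frames.append(frame)
    return frames


def predict_copy_last(history: List[Frame]) -> Frame:
    """Naive predictor: assume nothing moves, so the next frame equals the last."""
    return list(history[-1])


def predict_with_motion(history: List[Frame]) -> Frame:
    """Motion-aware predictor: estimate the dot's velocity from the last two
    frames and extrapolate one step forward (a stand-in for learned physics)."""
    prev_pos = history[-2].index(max(history[-2]))
    last_pos = history[-1].index(max(history[-1]))
    width = len(history[-1])
    frame = [0.0] * width
    frame[(2 * last_pos - prev_pos) % width] = 1.0  # last_pos + velocity
    return frame


def mean_squared_error(a: Frame, b: Frame) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def prediction_loss(frames: List[Frame], predictor: Callable[[List[Frame]], Frame]) -> float:
    """Average next-frame error. The 'label' for each step is just the
    next frame of the same clip, so no human annotation is needed."""
    losses = [mean_squared_error(predictor(frames[:t]), frames[t])
              for t in range(2, len(frames))]
    return sum(losses) / len(losses)


video = make_moving_dot_video(width=16, num_frames=10)
print(prediction_loss(video, predict_copy_last))    # 0.125
print(prediction_loss(video, predict_with_motion))  # 0.0
```

The gap between the two losses is the point: minimizing prediction error rewards whichever model has internalized how things move.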

For instance, if an AI watches 10,000 hours of cooking videos, it learns not just the names of ingredients, but the *physics* of mixing, the *timing* required for baking, and the *consequences* of impatience (a burnt dish). As research into "The Rise of Foundational Models for Video Understanding" indicates, this capability allows models to grasp implicit rules: the rules humans rarely bother to write down.

This type of implicit learning is arguably key to achieving more robust Artificial General Intelligence (AGI). It teaches the machine *how* things happen, not just *what* is said about them.

The Richness of Unlabeled Data

The crucial word here is unlabeled. Labeling video is slow and prohibitively expensive: imagine manually describing every second of every video uploaded to YouTube. By training models directly on raw, unlabeled video streams and using the footage itself as the training signal (so-called self-supervised learning), AI engineers let the model discover patterns autonomously, much like a human baby learns by observation rather than by reading a manual.
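Unlabeled video is usable at all because the training targets can be carved out of the raw data itself. A minimal sketch of two common ways to do that, assuming frames can be treated as opaque items (plain strings here for readability): predicting the next frame from a window of earlier ones, and hiding a frame for the model to reconstruct. The function names are illustrative, not taken from any particular library or from Meta's work.

```python
from typing import List, Optional, Sequence, Tuple, TypeVar

F = TypeVar("F")  # a frame; any type works (pixel arrays, file paths, ...)


def next_frame_pairs(frames: Sequence[F], context: int) -> List[Tuple[List[F], F]]:
    """Slide a window over one unlabeled clip: each example pairs `context`
    consecutive frames with the frame that follows them."""
    if context < 1:
        raise ValueError("context must be at least 1")
    return [(list(frames[i:i + context]), frames[i + context])
            for i in range(len(frames) - context)]


def masked_frame_examples(frames: Sequence[F],
                          positions: Sequence[int]) -> List[Tuple[List[Optional[F]], F]]:
    """Hide one frame at a time (None marks the gap); the hidden frame is
    the target the model must reconstruct from its neighbors."""
    examples = []
    for p in positions:
        visible = [None if i == p else f for i, f in enumerate(frames)]
        examples.append((visible, frames[p]))
    return examples


clip = ["f0", "f1", "f2", "f3", "f4"]  # stand-ins for decoded frames
print(next_frame_pairs(clip, context=3))
# [(['f0', 'f1', 'f2'], 'f3'), (['f1', 'f2', 'f3'], 'f4')]
print(masked_frame_examples(clip, positions=[2]))
# [(['f0', 'f1', None, 'f3', 'f4'], 'f2')]
```

Every example is produced mechanically from the clip, which is why this approach scales to footage no one has ever annotated.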

The Competitive Landscape: A Multimodal Arms Race

This move by Meta is not an isolated academic exercise; it is a critical competitive maneuver. The major players in AI are already mobilizing resources toward video processing and understanding, suggesting that this is the industry's consensus next move.

When we look at what competitors like Google and OpenAI are prioritizing, namely video generation and advanced perception capabilities, we see a unified direction. They are not just interested in generating better videos; they are building systems designed to reason over visual, dynamic data.

This intense focus means that the next generation of foundation models will likely be seamless integrators of text, image, audio, and motion. The user interface of the future won't just be a text box; it will be an environment where you can show the AI something happening and ask complex procedural questions about it.

Future Product Implications:

- Smarter Assistants: Assistants that can watch your screen while you work, identifying bottlenecks or suggesting shortcuts based on visual context.

- Advanced Robotics: Robots that can learn complex manipulation tasks just by observing human workers, drastically cutting down on complex coding requirements.

- Deep Search: The ability to search across all media not just by keywords, but by motion, feeling, or inferred context ("Find me the moment in that lecture where the speaker subtly shifted weight while making the main argument").

The Inevitable Hurdle: Computational Cost and Efficiency

While the potential of video data is vast, putting it into practice carries an immense computational cost. Video is data-dense: a single minute of high-definition video contains vastly more raw data than a page of text. Scaling training to petabytes of video presents an engineering challenge that dwarfs previous text-scaling efforts.
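A quick back-of-envelope calculation shows the scale of "data-dense". This sketch assumes uncompressed 8-bit RGB frames at 1920x1080 and 30 frames per second, and a page of text of roughly 3,000 one-byte characters (an assumption, not a standard). Stored video files are compressed and much smaller, but many training pipelines decode video back to pixels, often at reduced resolution, before a model sees it.

```python
def raw_video_bytes(width: int, height: int, fps: int, seconds: int,
                    channels: int = 3, bytes_per_channel: int = 1) -> int:
    """Size of decoded (uncompressed) video: one value per pixel, per color
    channel, per frame. 8-bit RGB is assumed by default."""
    return width * height * channels * bytes_per_channel * fps * seconds


one_minute_1080p = raw_video_bytes(1920, 1080, fps=30, seconds=60)
page_of_text = 3_000  # assumption: ~3,000 characters per page, 1 byte each

print(f"{one_minute_1080p:,} bytes")  # 11,197,440,000 bytes
print(f"about {one_minute_1080p // page_of_text:,}x a page of text")
# about 3,732,480x a page of text
```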

As detailed in analyses such as "The Scale and Sparsity Problem: Making Sense of Raw Video Data for AI Training," researchers are battling high dimensionality. How do you efficiently process sequential data in which every frame is related to the last, without needing prohibitively large amounts of memory?
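The memory problem can be sketched numerically, assuming a Vision Transformer style tokenizer (each frame resized to 224x224 and cut into 16x16 patches) and naive full self-attention over every token in a one-minute clip. Memory-efficient attention kernels such as FlashAttention avoid storing the full score matrix, so treat the gigabyte figure as an illustration of why naive approaches break down; the compute still grows quadratically with the number of tokens either way.

```python
def video_token_count(num_frames: int, frame_size: int = 224, patch: int = 16) -> int:
    """Tokens produced if every square frame is cut into non-overlapping
    patch x patch tiles (ViT-style; assumed here for illustration)."""
    tokens_per_frame = (frame_size // patch) ** 2  # 14 * 14 = 196 by default
    return num_frames * tokens_per_frame


def full_attention_bytes(num_tokens: int, bytes_per_score: int = 2) -> int:
    """Memory to materialize one num_tokens x num_tokens attention score
    matrix (a single head in a single layer) in 16-bit floats."""
    return num_tokens * num_tokens * bytes_per_score


every_frame = video_token_count(num_frames=30 * 60)  # 1 minute at 30 fps
one_per_second = video_token_count(num_frames=60)    # 1 minute at 1 fps

print(every_frame)                                          # 352800
print(f"{full_attention_bytes(every_frame) / 1e9:.0f} GB")  # 249 GB
# Quadratic growth: 30x fewer frames means 900x less attention memory.
print(full_attention_bytes(every_frame) // full_attention_bytes(one_per_second))  # 900
```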

These constraints force innovation in two key areas:

- Hardware Optimization: We are likely to see faster adoption of specialized AI accelerators designed for the sparse, temporal nature of video inputs, rather than relying solely on general-purpose GPU clusters.

- Algorithmic Sparsity: AI architects must develop efficient ways to sample video data, for example by focusing attention only on frames where significant action or change occurs, to avoid processing redundant information.
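One simple way to focus on "frames where significant action or change occurs" is to keep a frame only when it differs enough from the last frame kept. This is a heuristic assumed for illustration, not the method of any system mentioned here; production pipelines may instead use learned saliency, motion vectors, or the codec's own keyframes. Frames are flattened lists of pixel values and the threshold is a hypothetical tuning parameter.

```python
from typing import List, Sequence

Frame = Sequence[float]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two equal-sized frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_changed_frames(frames: Sequence[Frame], threshold: float) -> List[int]:
    """Keep frame 0, then any frame whose difference from the last *kept*
    frame exceeds `threshold`. Comparing to the last kept frame (rather than
    the immediately preceding one) keeps slow, gradual drift from being missed."""
    if not frames:
        return []
    kept = [0]
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i], frames[kept[-1]]) > threshold:
            kept.append(i)
    return kept


# Tiny 4-pixel "frames": a static scene, then two distinct changes.
clip = [[0.0, 0.0, 0.0, 0.0]] * 3 + [[1.0, 1.0, 0.0, 0.0]] * 2 + [[1.0, 1.0, 1.0, 1.0]]
print(select_changed_frames(clip, threshold=0.1))  # [0, 3, 5]
```

Half of this six-frame clip is discarded as redundant, and on long static footage (surveillance, lectures) the savings compound with the quadratic attention cost.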

For businesses, this translates to a significant upfront investment. Access to the next generation of highly capable AI might initially be centralized among those who can afford the custom infrastructure required to manage this video torrent.

Practical Implications for Businesses and Society

This transition from text-centric to multimodal reasoning has deep implications across every sector.

For Businesses: Re-evaluating Data Strategy

If your business relies on data that is easily digitized into text (e.g., customer support logs, internal documentation), you are training your AI on a limited fuel source. Businesses must begin auditing their existing video assets, such as surveillance footage, manufacturing process recordings, product demos, and educational materials, and treat them not as passive records but as valuable raw training material.

Actionable Insight: Start investing in robust data tagging and pre-processing pipelines specifically for video. Even if you aren't training a foundation model tomorrow, standardizing your video assets ensures you are ready when fine-tuning on smaller, proprietary video sets becomes the norm.
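What "standardizing" means will vary by organization. A minimal sketch, assuming a hypothetical manifest format, is to record each asset's frame rate and length, resample every clip to one common frame rate, and normalize free-form tags. `VideoAsset`, `resample_indices`, `standardize`, and the example path are illustrative names, not an existing tool's API; actually decoding and re-encoding the video would be handled by a media library such as FFmpeg and is outside this sketch.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class VideoAsset:
    """Hypothetical manifest entry describing one internal video file."""
    path: str
    fps: float
    num_frames: int
    tags: List[str] = field(default_factory=list)


def resample_indices(src_fps: float, num_frames: int, target_fps: float) -> List[int]:
    """Source frame indices to keep so a clip plays at `target_fps`:
    for each output timestamp, take the source frame at or just before it."""
    if src_fps <= 0 or target_fps <= 0:
        raise ValueError("frame rates must be positive")
    n_out = int(num_frames / src_fps * target_fps)
    return [min(num_frames - 1, int(k * src_fps / target_fps)) for k in range(n_out)]


def standardize(asset: VideoAsset, target_fps: float = 10.0) -> Dict[str, object]:
    """Build a uniform record: common frame rate, clean lower-case tags."""
    return {
        "path": asset.path,
        "duration_s": asset.num_frames / asset.fps,
        "target_fps": target_fps,
        "frame_indices": resample_indices(asset.fps, asset.num_frames, target_fps),
        "tags": sorted({t.strip().lower() for t in asset.tags if t.strip()}),
    }


demo = VideoAsset("line3/press_cam.mp4", fps=30, num_frames=300,
                  tags=[" Welding", "welding", "Line 3"])
record = standardize(demo)
print(record["frame_indices"][:4], len(record["frame_indices"]))  # [0, 3, 6, 9] 100
print(record["tags"])  # ['line 3', 'welding']
```

Choosing a single target frame rate up front means clips recorded on different cameras can later be batched together for fine-tuning without per-source special cases.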

For Society: The Deepening Sense of Reality

As AI becomes better at interpreting the continuous, chaotic reality presented in video, its ability to operate autonomously in the physical world accelerates. This is the bridge to advanced autonomous vehicles, sophisticated medical diagnostics based on imaging, and complex industrial automation.

However, this also amplifies ethical concerns. An AI trained deeply on human behavior via video might become unsettlingly good at prediction and persuasion. If models understand human movement and emotional nuance better than ever before, the potential for sophisticated manipulation (physically coherent visual deepfakes, or highly targeted emotional advertising) becomes a much more immediate threat.

Conclusion: Beyond Words to Worlds

The news that the supply of text data for LLM training is plateauing is not a sign of stagnation; it is a mandate for evolution. Meta's focus on unlabeled video signals that the industry is moving past language processing as the ultimate benchmark for intelligence. We are entering an era where AI must see, hear, and move to truly learn.

The race is now on to tame the computational beast of video data. The companies that master the efficient ingestion and processing of these rich, dynamic streams will define the capabilities of the next wave of foundational models. The future of AI isn't just about writing better articles; it’s about building systems that can truly navigate the physical world, one frame at a time.