Luma AI Uni-1 Shatters Expectations: Why Logic, Not Just Looks, Defines the Next Generation of Image AI

The world of artificial intelligence has long been captivated by visual realism—the photorealism of a generated image, or the smooth motion of a synthesized video. But a recent breakthrough by Luma AI—the introduction of their Uni-1 model—suggests the conversation is rapidly shifting from *what* the model can create to *how well* it understands what it is creating.

Luma AI’s announcement that Uni-1 outperforms established leaders like Nano Banana 2 and GPT Image 1.5 on logic-based benchmarks is not just an incremental update; it’s a structural challenge to the incumbents. This development forces us to re-evaluate the core trajectory of multimodal AI, moving from impressive pattern mimicry toward genuine, albeit narrow, visual reasoning.

The Benchmark Shift: From Aesthetics to Acumen

For years, the primary measures of success for image models were automated quality metrics (like FID scores, which compare the statistics of generated images against real ones) or simple prompt adherence. If you asked for "a red ball on a blue table," and you got a plausible image, the model was deemed successful. However, these models often failed simple tests of spatial awareness, object permanence, or causal relationships.

This is where Luma AI has drawn a line in the sand. By prioritizing performance on logic-based benchmarks, which likely involve complex Visual Question Answering (VQA), spatial puzzles, or counterfactual reasoning, Luma AI claims a higher form of intelligence for Uni-1.

Demystifying Logic Benchmarks (For Everyone)

Imagine asking an older AI, "If the green block is behind the yellow one, and the yellow one is on the left, which block is closer to me?" A model focused only on surface-level texture and color might fail because it struggles to track relative positions and infer depth. Logic benchmarks test the AI’s ability to hold multiple facts in its "mind" and perform mental calculations on them before generating an image or answering a question.

When Luma AI beats established models here, it implies Uni-1 is better at understanding the rules of the scene it is describing or generating. This is critical for future applications where AI needs to interact with the real world.
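
As a rough illustration, the block puzzle above can be written down as explicit facts and resolved mechanically. This is a minimal Python sketch, not a description of how Uni-1 or any real benchmark works; the `Behind` fact type and the `closest_to_viewer` helper are hypothetical names invented here. Note that "on the left" is a distractor: only the depth relation matters for the answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Behind:
    """Hypothetical fact: `back` is behind `front` from the viewer's position."""
    back: str
    front: str


def closest_to_viewer(blocks, facts):
    """Return the one block that nothing stands in front of, or None if ambiguous."""
    occluded = {fact.back for fact in facts}
    candidates = [block for block in blocks if block not in occluded]
    return candidates[0] if len(candidates) == 1 else None


# "The green block is behind the yellow one" is the only depth fact given.
facts = [Behind(back="green", front="yellow")]
print(closest_to_viewer(["green", "yellow"], facts))  # yellow
```

A model that only matches textures and colors has no equivalent of the `facts` list to consult, which is the gap these benchmarks are designed to expose.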

The Architectural Revolution: Unification is Key

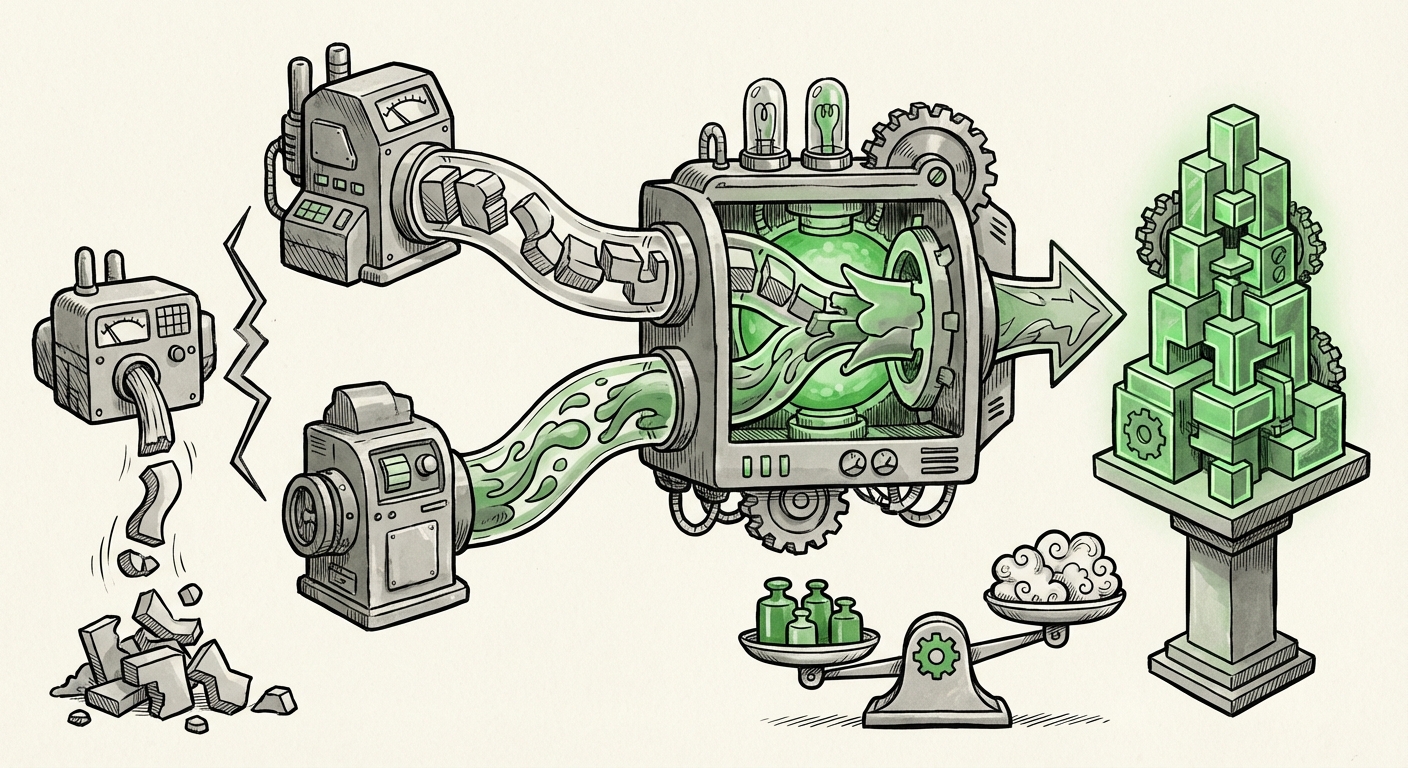

The technical secret sauce behind this claimed leap in reasoning seems to lie in the model’s core design. Uni-1 utilizes a single, unified architecture that handles both image understanding (perception) and image generation (synthesis). This contrasts with older, modular approaches.

Why Separate Systems Fall Short

In many previous multimodal systems, you had two distinct components:

- The Understanding Module (Encoder): This part reads the prompt and the existing image data, converting it into a numerical representation of meaning.

- The Generation Module (Decoder/Generator): This part takes that numerical meaning and renders the pixels to create the output.

The weakness here is the "translation layer" between the two. Information critical for reasoning can be lost or misinterpreted when moving from the understanding module to the generation module.
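
A deliberately extreme toy example makes the point. In this Python sketch (the function names and the triple format are assumptions for illustration, not a description of any real encoder), the hand-off between modules keeps the object names but drops the relations, so two different scenes become indistinguishable to whatever comes next.

```python
# Toy scenes as (subject, relation, object) triples. Purely illustrative.
scene_a = [("green", "behind", "yellow")]
scene_b = [("yellow", "behind", "green")]


def lossy_encode(scene):
    """Hypothetical hand-off that keeps object names but drops relations."""
    return sorted({name for subj, _, obj in scene for name in (subj, obj)})


def full_encode(scene):
    """Hypothetical shared representation that keeps the relations intact."""
    return sorted(scene)


# The lossy hand-off cannot tell the two scenes apart; the full one can.
print(lossy_encode(scene_a) == lossy_encode(scene_b))  # True
print(full_encode(scene_a) == full_encode(scene_b))    # False
```

Real encoders are far less lossy than this, but the failure mode is the same in kind: whatever the interface discards, the generator cannot reason about.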

The Power of the Single Brain

Uni-1, by operating on a unified structure, essentially allows the understanding and generation processes to happen simultaneously within the same "thought space." As the model reasons through the prompt—"I need object A to be positioned *relative* to object B according to rule C"—it is building and visualizing that structure concurrently. This tight coupling fosters a deeper, more coherent internal representation, which translates directly into better logical adherence in the output.

This trend toward unified architectures, which major labs across the industry appear to be exploring, is arguably the most important technological shift behind Uni-1’s reported success.

Challenging the Titans: OpenAI and Google Under Pressure

The significance of Luma AI’s achievement is amplified by who they are outpacing. OpenAI (with GPT models) and Google (with Gemini models) have dominated the narrative surrounding foundational AI capabilities. Luma AI, often seen as an innovator in specialized areas like 3D reconstruction and video, has now entered the foundational reasoning arena.

Framing the Competitive Landscape

When a smaller, specialized player scores higher on core reasoning tasks, it puts immediate pressure on the giants. It signals two things:

- The Incumbent’s Blind Spot: OpenAI and Google may have prioritized scale and aesthetic fluency, unintentionally neglecting the rigorous, granular testing of visual logic.

- Agility of Startups: Startups are often unburdened by legacy codebases or massive deployment infrastructures, allowing them to experiment faster with novel, perhaps computationally expensive, architectural designs like Uni-1’s unification approach.

The crucial next step for the industry is to see how quickly the incumbents integrate these reasoning improvements into their next major releases (like the rumored GPT-5 capabilities). If Uni-1’s lead on logic proves sustainable, it forces a complete re-prioritization of R&D resources across the industry.

Future Implications: What Does Reasoning AI Mean for Business?

The move from models that *draw* what you ask for to models that *reason* about what you ask for unlocks immense practical value across industries.

1. Advanced Simulation and Digital Twins

For engineering, manufacturing, and urban planning, models that truly grasp spatial logic are transformative. Instead of generating a nice picture of a factory floor, Uni-1’s descendants could generate simulations where parts interact according to physical laws inferred from detailed instructions. This means better testing of designs before any physical prototype is built.

2. Scientific Discovery and Data Interpretation

In fields like biology or material science, researchers deal with complex, layered images (microscopy scans, astronomical data). An AI that can logically reason about the relationships between components in these images—such as, "If Molecule A binds to Receptor B, what spatial conformation prevents Molecule C from entering the site?"—moves beyond simple labeling into genuine hypothesis generation.

3. Safer Autonomous Systems

For self-driving cars or advanced robotics, reasoning is safety. These systems must predict the logical outcomes of movement in real time. A generative model capable of robust visual logic, one that captures causality rather than just appearance, could form the bedrock for more reliable training environments and safer decision-making modules.

Actionable Insights for Technology Leaders

For CTOs, product developers, and investors, the message from Luma AI’s Uni-1 is clear: as models reach parity on generation quality, it is no longer the differentiator; logical coherence is.

Action Points:

- Rethink Benchmarking: Do not rely solely on aesthetic quality tests. Begin stress-testing your current multimodal vendors (or internal models) with spatial, numerical, and causal reasoning prompts. If your vendor struggles with "What if..." scenarios, they are lagging on foundational intelligence.

- Investigate Unified Architectures: Look closely at the underlying technology used by new players. The shift to integrated understanding/generation components is likely where future performance gains will be found. This impacts decisions on whether to build foundational models or integrate third-party APIs.

- Pivot Development Pipelines: For any application requiring interaction with complex digital or physical environments (AR/VR, design tools, robotics), prioritize models that demonstrate strong logical reasoning scores. Aesthetic appeal is secondary to operational correctness.
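
One way to start on the first action point is a small probe harness. The Python sketch below is an assumption-laden starting point: the three probes, the `ask` callable (standing in for whatever vendor API or internal model you use), and the token-matching scorer are all hypothetical, and a real evaluation would need far more prompts and stricter grading.

```python
import re
from typing import Callable

# Hypothetical probe set: (category, prompt, expected answer token).
PROBES = [
    ("spatial",
     "The cup is left of the plate. The fork is left of the cup. "
     "Which item is leftmost?",
     "fork"),
    ("numerical",
     "A shelf holds 3 red books and 2 blue books. One red book is removed. "
     "How many books remain?",
     "4"),
    ("causal",
     "A ball rests on a tilted ramp and the stopper is removed. "
     "Does the ball roll up or down?",
     "down"),
]


def score_by_category(ask: Callable[[str], str]) -> dict[str, float]:
    """Return the fraction of probes answered correctly in each category."""
    totals: dict[str, int] = {}
    hits: dict[str, int] = {}
    for category, prompt, expected in PROBES:
        totals[category] = totals.get(category, 0) + 1
        # Crude grading: the expected token must appear in the reply.
        tokens = re.findall(r"[a-z0-9]+", ask(prompt).lower())
        if expected in tokens:
            hits[category] = hits.get(category, 0) + 1
    return {c: hits.get(c, 0) / totals[c] for c in totals}


def always_fork(prompt: str) -> str:
    """Stub model for demonstration; replace with a real model call."""
    return "The fork."


print(score_by_category(always_fork))
# {'spatial': 1.0, 'numerical': 0.0, 'causal': 0.0}
```

Reporting scores per category rather than as one aggregate keeps a strong spatial result from hiding a weak causal one.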

Conclusion: The Race for True Multimodal Cognition

Luma AI’s Uni-1 model is a potent reminder that the AI race is not always won by the entity with the largest model or the biggest budget, but often by the one with the most insightful architectural innovation.

By focusing computational resources on building a model where understanding and creation share the same cognitive pipeline, Luma AI has potentially leapfrogged competitors in the most critical area for the next phase of AI evolution: reliable, trustworthy reasoning.

We are moving beyond AI that paints pictures to AI that understands geometry, physics, and causality within those pictures. This convergence of generation and reasoning, reportedly successful on logic benchmarks, sets the stage for a new era of truly cognitive visual systems. The implications for everything from scientific modeling to everyday automation are profound. The age of the "smart" image model may have begun.

*(Note: This analysis is based on the premise established by the initial report concerning Luma AI's Uni-1 performance on logic-based evaluations against models like Nano Banana 2 and GPT Image 1.5, viewed through the lens of industry trends in multimodal unification.)*