The Logic Leap: Why Luma AI's Uni-1 Signals the End of 'Pretty Pictures' in Generative AI

For years, the progress in generative AI imagery was measured in pixels. Could the model create faces that didn't look uncanny? Could it render realistic textures? Could it mimic the style of a master painter? The competition between giants like OpenAI and Google largely centered on mastering photorealism and speed.

However, a recent development from Luma AI—the introduction of their **Uni-1 model**—suggests we are standing at a critical inflection point. Uni-1 isn't just another tool for creating beautiful images; it is being benchmarked on its ability to perform **logic-based reasoning** while generating visuals. This subtle but profound difference marks a fundamental shift: AI is moving from being a sophisticated mimic to becoming a genuine visual thinker.

The Benchmark Battle: Moving Beyond Aesthetics

The excitement around Uni-1 stems from its performance on logic-based tests, where it reportedly outperformed models like Nano Banana 2 and even GPT Image 1.5. To understand why this matters, we must look beyond the flashy demos.

Imagine asking an AI to generate an image of "a stack of three blocks where the red block is resting on the blue block, and the blue block is resting on the green block."

Older generative models might produce three blocks, perhaps with the right colors, but the spatial or causal relationship—the logic of support—could be easily broken. They are excellent at pattern matching but poor at physics simulation or spatial logic.
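To make the "logic of support" concrete, here is a minimal sketch of how a generated image could be checked against a requested stacking order. It assumes some upstream detector has already returned one bounding box per block. The `Box` type, the `rests_on` and `check_stack` helpers, and the pixel tolerance are all hypothetical names introduced for illustration; this is not Luma's evaluation method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in image coordinates (y grows downward)."""
    x0: float
    y0: float  # top edge
    x1: float
    y1: float  # bottom edge


def rests_on(upper: Box, lower: Box, tol: float = 5.0) -> bool:
    """True if `upper` sits directly on top of `lower`.

    Requires the bottom edge of `upper` to meet the top edge of `lower`
    (within `tol` pixels) and the two boxes to overlap horizontally.
    """
    touching = abs(upper.y1 - lower.y0) <= tol
    overlap = min(upper.x1, lower.x1) - max(upper.x0, lower.x0)
    return touching and overlap > 0


def check_stack(boxes: dict[str, Box], order_top_to_bottom: list[str]) -> bool:
    """Verify that each block rests on the next one in the requested order."""
    pairs = zip(order_top_to_bottom, order_top_to_bottom[1:])
    return all(rests_on(boxes[a], boxes[b]) for a, b in pairs)


# A physically plausible stack, listed top to bottom.
good = {
    "red": Box(10, 0, 60, 50),
    "blue": Box(10, 50, 60, 100),
    "green": Box(10, 100, 60, 150),
}
# Right colors, wrong logic: the blocks sit side by side on the ground.
bad = {
    "red": Box(0, 100, 50, 150),
    "blue": Box(60, 100, 110, 150),
    "green": Box(120, 100, 170, 150),
}

print(check_stack(good, ["red", "blue", "green"]))  # True
print(check_stack(bad, ["red", "blue", "green"]))   # False
```

Both layouts contain three blocks in the right colors, so a purely aesthetic score could rate them equally. Only the relational check separates them.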

Uni-1’s reported success on these benchmarks suggests it doesn't just map words to pixels; it seems to integrate an internal model of *how the world works* when it generates the image. This requires deep visual grounding. We need to contextualize this success by examining the standards used in the field.

Contextualizing Logic Tests: The New AI Barometer

The focus on logic confirms what many researchers suspected: standard image quality metrics are becoming saturated. The next frontier requires models to pass tests that measure causation, spatial awareness, and complex instruction following.

When evaluating models like Uni-1, we are looking at benchmarks designed to trip up models that lack true understanding. These tests demand that the AI:

- Understand **Occlusion**: What part of an object is hidden behind another?

- Grasp **Causality**: If object A moves, what happens to object B?

- Interpret **Spatial Relations**: Is something "to the left of," "above," or "inside of" another element?

Success here means the model isn't just remixing learned data; it's demonstrating *inference*. This is the hallmark of systems that are beginning to think, rather than just render.
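Two of these three abilities can be approximated with simple geometry once objects have been localized. The sketch below is one possible set of checks over 2D bounding boxes; the predicate names and the `Box` type are assumptions made for this example, not part of any published benchmark. Causality is deliberately left out, since it cannot be verified from a single static frame.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned box in image coordinates (y grows downward)."""
    x0: float
    y0: float
    x1: float
    y1: float


def left_of(a: Box, b: Box) -> bool:
    """`a` lies entirely to the left of `b`."""
    return a.x1 <= b.x0


def above(a: Box, b: Box) -> bool:
    """`a` lies entirely above `b` (smaller y means higher in the image)."""
    return a.y1 <= b.y0


def inside(a: Box, b: Box) -> bool:
    """`a` is fully contained in `b`."""
    return b.x0 <= a.x0 and b.y0 <= a.y0 and a.x1 <= b.x1 and a.y1 <= b.y1


def occluded_fraction(back: Box, front: Box) -> float:
    """Fraction of `back` covered by `front`, assuming `front` is nearer the camera."""
    w = min(back.x1, front.x1) - max(back.x0, front.x0)
    h = min(back.y1, front.y1) - max(back.y0, front.y0)
    if w <= 0 or h <= 0:
        return 0.0  # no overlap, so nothing is hidden
    back_area = (back.x1 - back.x0) * (back.y1 - back.y0)
    return (w * h) / back_area


cup = Box(0, 0, 10, 10)
table = Box(0, 10, 40, 20)
ball = Box(2, 2, 4, 4)
screen = Box(5, 0, 15, 10)

print(above(cup, table))               # True
print(inside(ball, cup))               # True
print(left_of(table, cup))             # False
print(occluded_fraction(cup, screen))  # 0.5
```

Checks like these only confirm that a layout is consistent with the prompt. They say nothing about how the model arrived at it, which is why reasoning benchmarks typically pair them with adversarial prompts.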

The Architectural Revolution: Unified Multimodality

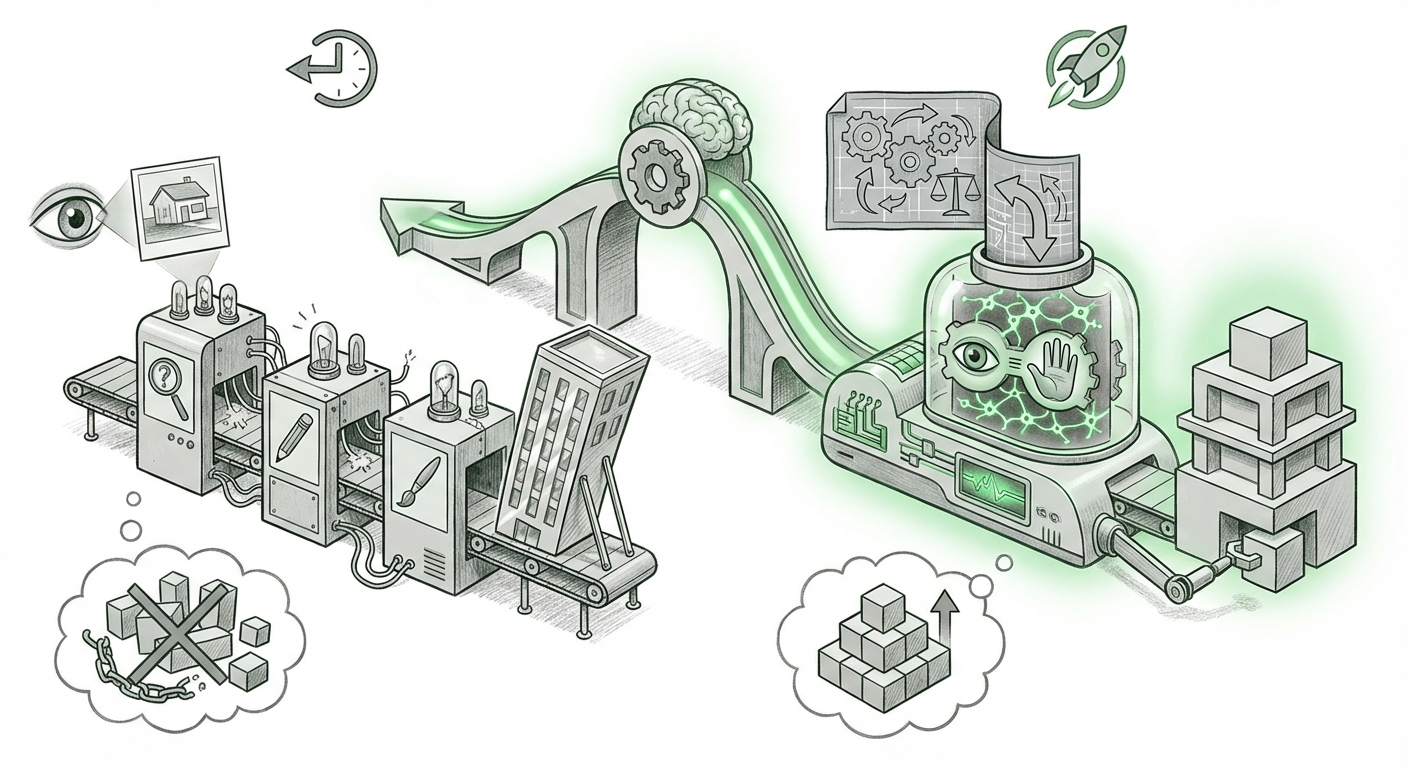

Perhaps the most enduring legacy of Uni-1 will be its structural approach. Uni-1 reportedly combines image understanding and generation within a *single architecture*. This is a major deviation from the established, pipeline-heavy approaches of the recent past.

In the older paradigm, you often had separate specialist models:

- A Vision-Language Model (VLM) analyzed the prompt and the input image (understanding).

- A Large Language Model (LLM) formulated the text instructions.

- A dedicated Diffusion Model executed the generation (rendering).

This required complex orchestration, leading to potential points of failure or misunderstanding where one model’s output wasn't perfectly translated to the next.
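The hand-off problem can be illustrated with a toy model of such a pipeline. In the sketch below, each stage is a plain function standing in for a real model, and the prompt is reduced to a set of (subject, relation, object) constraints. Every name here is hypothetical, and the lossy middle stage is simulated on purpose; the point is only to show how a constraint can silently disappear between stages and how an orchestrator might log it.

```python
from typing import Callable

# A constraint is a (subject, relation, object) triple taken from the prompt.
Constraint = tuple[str, str, str]
Stage = Callable[[set[Constraint]], set[Constraint]]


def run_pipeline(
    constraints: set[Constraint],
    stages: list[tuple[str, Stage]],
) -> tuple[set[Constraint], list[str]]:
    """Run each stage in order and log constraints lost at each hand-off."""
    current = set(constraints)
    lost: list[str] = []
    for name, stage in stages:
        output = stage(current)
        lost.extend(f"{name} dropped {c}" for c in sorted(current - output))
        current = output
    return current, lost


# Stand-in stages; real models would replace these functions.
def understand(cs: set[Constraint]) -> set[Constraint]:
    return set(cs)


def rewrite(cs: set[Constraint]) -> set[Constraint]:
    # Simulated lossy hand-off: the rewritten instructions omit "behind".
    return {c for c in cs if c[1] != "behind"}


def render(cs: set[Constraint]) -> set[Constraint]:
    return set(cs)


prompt = {("cup", "on", "table"), ("cat", "behind", "cup")}
final, lost = run_pipeline(
    prompt,
    [("understanding", understand), ("rewriting", rewrite), ("rendering", render)],
)

print(final)  # {('cup', 'on', 'table')}
print(lost)   # ["rewriting dropped ('cat', 'behind', 'cup')"]
```

In a real system the loss is rarely this explicit, since constraints get blurred in free-form text rather than cleanly deleted. That is part of what makes multi-model orchestration hard to debug.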

Why Unification Matters for Performance

A unified architecture, similar in spirit to Google's Gemini, suggests a more fundamental integration of capabilities. When the same core computational engine handles both *seeing* and *creating*, the system benefits immensely from coherence.

If Uni-1 reasons through the prompt *as* it generates, the reasoning process is tied directly to the output pixels, which makes it more likely that the final image honors the logical constraints in the prompt. This architectural alignment is crucial for bridging the gap between language understanding and visual output. For business applications, it could mean fewer iterations to reach a logically sound result.

This development suggests that the race is no longer only about building the biggest model, but about building the most *integrated* one. Strategists need to track which companies are adopting this unified backbone, as it may offer a faster path to advanced multimodal AI capabilities.

The Immediate Competitive Ripple

Luma AI, while perhaps not possessing the sheer scale of OpenAI or Google, is demonstrating that novel architectural choices and targeted benchmarking can allow smaller, focused players to leapfrog incumbents in specific, crucial domains. The very act of publicly challenging the established leaders on "logic" forces a broader industry self-assessment.

If Luma’s logic tests become the new standard, current leaders will have to swiftly pivot their evaluation processes. It’s a classic technology disruption pattern: a challenger defines a new, more demanding playing field, forcing market leaders to play catch-up.

The competitive dynamic is shifting from "Who can make the best photo?" to "Who can build the most reliable visual reasoner?" This is a more technical, and arguably more valuable, metric for enterprise adoption.

Future Implications: Visual Reasoning and the Rise of Actionable AI

The most profound implications of achieving robust visual logic lie outside the realm of digital art. They touch the core of creating AI systems that can interact safely and effectively with the real world.

From Pixels to Physicality: The Agent Revolution

The holy grail in robotics and autonomous systems is *actionable vision*—the ability for an AI to look at a scene and understand not just what is present, but what *can* or *should* happen next.

For example, consider a surgical robot or an autonomous delivery vehicle. These systems need more than simple object detection; they require causal reasoning based on visual input. If a self-driving car sees a ball roll into the street, a model proficient in visual logic should instantly infer that a child might follow, triggering a braking protocol based on predicted behavior, not just observed current state.

Uni-1’s success suggests that the foundational components required for such high-stakes applications are emerging rapidly. Models that can reliably reason about spatial relationships and predicted outcomes in static images are one step closer to mastering dynamic, real-time video environments.

Training the Next Generation of AI Planners

For businesses looking toward AI agents that manage complex workflows (e.g., supply chain optimization, software debugging, or scientific discovery), the ability to interpret visual schematics, diagrams, or even video demonstrations becomes vital. An agent that can watch a video tutorial on fixing a complex machine and then generate the correct sequence of physical actions demonstrates a level of integrated intelligence far beyond simple text summarization.

This requires visual grounding—the ability to truly connect abstract language concepts (like "stability" or "flow") to tangible visual representations. Luma’s focus suggests that the tooling for grounding is maturing quickly.

Practical Takeaways and Actionable Insights

For technologists and business leaders, the Uni-1 announcement is a flashing indicator that the requirements for successful AI adoption are changing.

For Technical Teams (ML Engineers, Researchers):

- Rethink Evaluation: Stop relying solely on image-quality metrics (like FID scores for photorealism), which say nothing about whether a scene is logically correct. Start prioritizing models that excel at established (and new) visual reasoning benchmarks. If your AI needs to make decisions based on visual data, testing its logic is non-negotiable.

- Investigate Unified Architectures: Examine how unified multimodal models are implemented. The efficiency and coherence benefits of single-architecture systems will likely translate to lower latency and higher reliability in production environments compared to complex pipelines.

- Focus on Grounding: When sourcing or building next-gen vision models, specifically query their capabilities in spatial reasoning, physics simulation, and prediction.
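As a starting point for the evaluation advice above, a team might report pass rates per reasoning category rather than a single aggregate score. The following is a minimal sketch under that assumption; the `pass_rates` helper and the category labels are hypothetical, and the sample results are made-up placeholders, not measurements of any real model.

```python
from collections import defaultdict
from typing import Iterable


def pass_rates(results: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Aggregate (category, passed) pairs into a pass rate per category."""
    totals: dict[str, int] = defaultdict(int)
    passed: dict[str, int] = defaultdict(int)
    for category, ok in results:
        totals[category] += 1
        passed[category] += int(ok)
    return {category: passed[category] / totals[category] for category in totals}


# Placeholder outcomes for illustration only.
results = [
    ("spatial", True),
    ("spatial", True),
    ("spatial", False),
    ("spatial", True),
    ("occlusion", True),
    ("occlusion", False),
    ("causality", False),
    ("causality", False),
]

for category, rate in pass_rates(results).items():
    print(f"{category}: {rate:.0%}")
# spatial: 75%
# occlusion: 50%
# causality: 0%
```

Breaking the score out this way makes it obvious when a model that looks strong overall is failing an entire class of reasoning.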

For Business Leaders (Strategy, Product Managers):

- Prepare for Agentic Workflows: The era where AI simply generates content is ending. The next wave involves AI that uses visual input to plan and execute complex actions. Start identifying workflows where visual logic—understanding diagrams, interpreting sensor data, or analyzing visual feedback loops—is the key bottleneck.

- Scrutinize Vendor Claims: When evaluating generative AI tools, ask vendors pointed questions about their models’ performance on causal and spatial reasoning, not just visual fidelity. A beautiful image that violates basic laws of physics is useless for industrial applications.

- Monitor the Challenger Ecosystem: While the hyperscalers dominate mindshare, specialized players like Luma AI are setting the pace on core capabilities. Ensure your long-term procurement strategy accounts for nimble, focused competitors who might own critical specialized intelligence layers.

Conclusion: The Dawn of Intelligent Sight

The success of Luma AI’s Uni-1 on logic-based benchmarks is more than just a footnote in a competitive race; it is a declaration that the industry is moving past the "toy phase" of generative media.

We are demanding that our AI systems not only see but understand what they see, integrating that understanding seamlessly into their creative or operational output. The shift to unified architectures is the technological mechanism enabling this, and the rise of rigorous visual reasoning benchmarks is the metric defining success.

The future isn't just about generating images of what is; it’s about generating images, plans, and actions based on what must be. This logic leap is the foundation upon which truly intelligent, actionable AI will be built.