The New AI Frontier: Why Logic-Based Image Models Like Luma AI's Uni-1 Redefine Generative AI

For years, the race in generative AI was defined by pixels. Could a model create an image that looked indistinguishable from a photograph? Could it render a complex scene quickly? While models like those from OpenAI and Google have pushed the aesthetic envelope, a quiet but profound shift is occurring beneath the surface. The new battleground is not just visual fidelity, but visual reasoning.

The recent unveiling of Luma AI's Uni-1 model, which reportedly topped competitors like Nano Banana 2 and even specific versions of GPT Image models on logic-based benchmarks, marks a pivotal moment. It signals that the industry is moving past superficial image creation toward systems capable of true, coherent, and logical understanding embedded within their generative process. This isn't just about making pretty pictures; it’s about making pictures that make sense.

The Great Leap: From Synthesis to Symbiosis

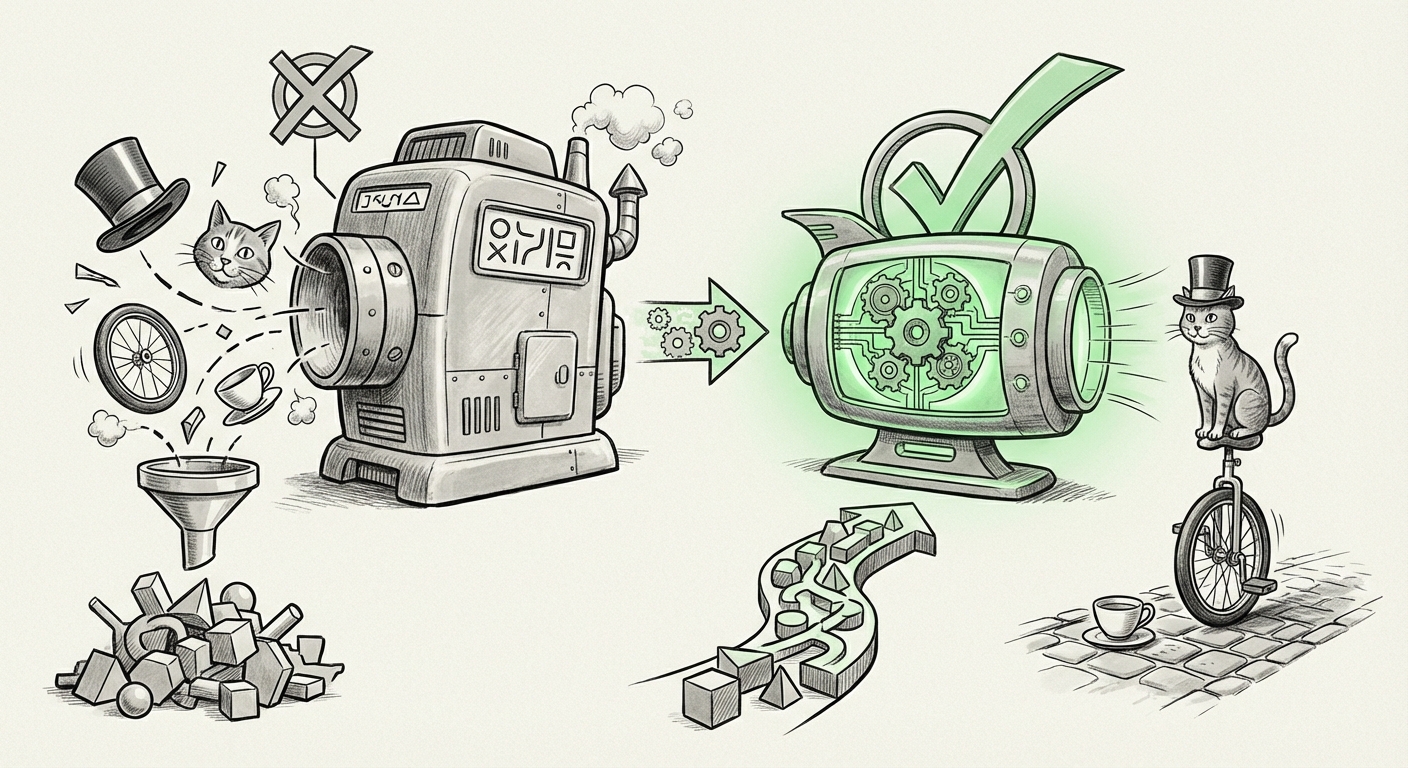

To understand why this is significant, we must look at how previous image generation models worked. Often, these systems were excellent at pattern matching based on massive datasets. If you asked for "a cat wearing a hat," the model drew on statistical patterns learned from millions of images of cats and hats and blended them together beautifully. However, if you asked a complex, multi-step question about the generated image (for example, "If the cat moves behind the red box, which way does the shadow fall?"), it often failed because it lacked an integrated world model.

Luma AI’s key innovation, as suggested by the initial reports, is the development of a single architecture that combines image understanding and generation. Think of it like this: instead of having one brain that looks at the world (the understanding part) and a separate hand that draws (the generation part), Uni-1 has one brain that both understands the rules of the world *and* draws based on those rules simultaneously.

What Are Logic-Based Benchmarks?

This shift in focus is crucial. Traditional benchmarks often measure metrics like CLIP scores or aesthetic quality. Logic-based benchmarks, however, test reasoning abilities:

- Spatial Reasoning: Does the model understand depth, occlusion (what's in front of what), and relative size?

- Causality: Can it accurately depict the result of a physical action? (e.g., if a glass tips, water spills out.)

- Constraint Satisfaction: Can it adhere to complex, multi-layered instructions that require planning?

When a new model excels here, it suggests that the generative component is deeply informed by a reasoning core. For technical audiences, this lends support to the view that the path toward Artificial General Intelligence (AGI) runs through seamless modality fusion.
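To make the contrast with aesthetic metrics concrete, here is a minimal sketch of what a spatial-reasoning check might look like. It assumes that some object detector has already produced labeled boxes with a depth estimate for a generated image; the `Box` type, the relation names, and the depth convention are all hypothetical and do not describe any published benchmark or Luma AI's evaluation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box for one detected object (hypothetical detector output)."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    depth: float  # assumed convention: smaller means closer to the camera

    @property
    def center_x(self) -> float:
        return (self.x_min + self.x_max) / 2


def left_of(a: Box, b: Box) -> bool:
    return a.center_x < b.center_x


def in_front_of(a: Box, b: Box) -> bool:
    return a.depth < b.depth


# Relation names are illustrative; a real benchmark would define its own set.
RELATIONS = {"left_of": left_of, "in_front_of": in_front_of}


def check_prompt_relations(boxes, expected):
    """Return {(subject, relation, object): passed} for each expected relation."""
    by_label = {box.label: box for box in boxes}
    results = {}
    for subject, relation, obj in expected:
        key = (subject, relation, obj)
        if subject not in by_label or obj not in by_label:
            results[key] = False  # a missing object counts as a failed check
            continue
        results[key] = RELATIONS[relation](by_label[subject], by_label[obj])
    return results


# Made-up detections for the prompt "a cat to the left of, and in front of, a red box".
detections = [
    Box("cat", 10, 40, 60, 90, depth=2.0),
    Box("red box", 80, 30, 140, 100, depth=1.0),
]
expected = [("cat", "left_of", "red box"), ("cat", "in_front_of", "red box")]
results = check_prompt_relations(detections, expected)

for key, passed in results.items():
    print(key, "PASS" if passed else "FAIL")
# left_of passes; in_front_of fails because the cat is farther away than the box.
```

The point of the sketch is that the image can look flawless and still fail: the check scores whether the stated relations hold, not how the picture looks.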

The Competitive Crucible: Challenging the Titans

The fact that Uni-1 is explicitly measured against models associated with OpenAI and Google places Luma in the heavyweight division. For years, only the largest labs with the deepest pockets could afford the compute power to train truly groundbreaking foundation models. That Luma AI, a smaller, focused player, achieved top marks suggests two possibilities:

- Architectural Efficiency: They have found a significantly more efficient way to train reasoning capabilities without simply scaling up the size of the entire model.

- Data Quality Focus: Their training data or methodology prioritizes logically rich content over sheer volume, resulting in smarter models for the same compute budget.

This decentralization of cutting-edge performance is a healthy sign for the ecosystem. It shows that innovation is not solely confined to the established giants, providing fresh ideas and faster iteration cycles.

Architectural Implications: The Unified Future

The core takeaway for AI architects is the trend toward unified, natively multimodal models. Older systems were often a stack:

Prompt Input -> Text Encoder -> Visual Logic Module (sometimes separate) -> Image Decoder -> Final Image Output.

Uni-1’s unified approach aims to collapse this pipeline. When reasoning is built into the generator itself, the model inherently understands the context of its own creation process. This leads to outputs that are not just plausible, but structurally and physically sound, addressing a major weakness in current diffusion models.

Future Implications: Beyond the Art Portfolio

Why should businesses care that an image model can pass a logic test? Because the ability to reason visually opens doors to entirely new applications far beyond marketing imagery:

1. Robotics and Simulation

Autonomous systems such as robots, drones, and self-driving cars rely on interpreting 3D space and predicting physical outcomes. A model that truly *reasons* about visual input (as Uni-1 reportedly can) could be a critical component of realistic simulation environments where AI agents are trained safely before being deployed in the real world. That capability could substantially lower the cost and risk associated with testing new robotic hardware.

2. Scientific Visualization and Discovery

Imagine asking an AI to visualize a complex molecular interaction based on abstract scientific papers, and ensuring the resulting 3D model correctly depicts bond angles and spatial overlaps based on the chemical principles described. Logic-informed generation moves scientific visualization from mere illustration to a tool for hypothesis testing.

3. Enhanced Debugging and Design Iteration

For product designers, this means faster iteration cycles. Instead of generating 100 images hoping one is "close," a designer can prompt: "Generate this circuit board, but move the capacitor labeled C3 2mm to the left, ensuring it does not overlap with the thermal sink labeled T1." This level of precise, rule-based modification transforms the tool from a suggestion engine into a true design partner.
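The rule in that prompt is simple enough to verify mechanically, which is what makes it a useful test of a generator's output. The sketch below treats each component as an axis-aligned rectangle measured in millimetres, moves C3 left by 2 mm, and rejects the move if it would overlap T1. The `Part` type, the coordinates, and the helper names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Part:
    """Rectangular footprint of a board component, in millimetres."""
    name: str
    x: float       # left edge
    y: float       # bottom edge
    width: float
    height: float


def overlaps(a: Part, b: Part) -> bool:
    """True if the two rectangles share interior area (touching edges are allowed)."""
    return (a.x < b.x + b.width and b.x < a.x + a.width
            and a.y < b.y + b.height and b.y < a.y + a.height)


def try_move_left(part: Part, distance_mm: float, obstacles):
    """Move `part` left if that causes no overlap.

    Returns (resulting_part, blocking_names). When the move is rejected,
    the original part is returned together with the names that block it.
    """
    moved = replace(part, x=part.x - distance_mm)
    blocking = [o.name for o in obstacles if overlaps(moved, o)]
    if blocking:
        return part, blocking
    return moved, []


c3 = Part("C3", x=10.0, y=5.0, width=3.0, height=2.0)

# Case 1: T1 is far enough away, so the 2 mm move is accepted.
t1_far = Part("T1", x=2.0, y=4.0, width=5.0, height=5.0)
moved, blocking = try_move_left(c3, 2.0, [t1_far])
print(moved.x, blocking)   # C3 now starts at x = 8.0, nothing blocks it

# Case 2: T1 extends to x = 9.0, so the moved C3 would overlap it.
t1_near = Part("T1", x=4.0, y=4.0, width=5.0, height=5.0)
kept, blocking = try_move_left(c3, 2.0, [t1_near])
print(kept.x, blocking)    # C3 stays at x = 10.0, blocked by T1
```

A check like this could sit downstream of any generator, whether or not the model reasons internally: it turns "does not overlap" from a hope into a pass/fail result.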

Actionable Insights for the Modern Enterprise

The excitement surrounding Uni-1 and the focus on logic benchmarks provide clear signals for where companies need to focus their AI strategy:

- Shift Evaluation Metrics: Stop only judging AI outputs on aesthetic beauty. Start demanding evidence of logical consistency, especially if your use case involves spatial layout, engineering, or scientific modeling.

- Prioritize Multimodal Talent: The future of AI development lies in architects capable of fusing perception (understanding) and action (generation). Invest in teams that understand transformer mechanics across different data types (vision, language, maybe even time-series data).

- Monitor Specialized Challengers: While the "Big Tech" labs set the pace, agile companies like Luma AI are winning specific, high-value sub-domains. Keep a close watch on competitors demonstrating superior performance in niche reasoning tasks, as they may be laying the groundwork for the next general-purpose breakthrough.
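As one way to act on the first recommendation, the sketch below reports per-category logic pass rates next to an aesthetic score and accepts an output only when both clear a threshold. The category names follow the benchmark categories described earlier; the sample results, the aesthetic score, and both thresholds are made-up placeholders, not recommended values.

```python
from collections import defaultdict


def logic_scorecard(results):
    """Aggregate (category, passed) pairs into a pass rate per category."""
    totals = defaultdict(lambda: [0, 0])  # category -> [passed, total]
    for category, passed in results:
        totals[category][0] += int(passed)
        totals[category][1] += 1
    return {category: passed / total
            for category, (passed, total) in totals.items()}


def accept(aesthetic_score, scorecard, min_aesthetic=0.7, min_logic=0.9):
    """Accept only if the image looks good AND every logic category clears the bar.

    Both thresholds are illustrative assumptions; tune them per use case.
    """
    return (aesthetic_score >= min_aesthetic
            and all(rate >= min_logic for rate in scorecard.values()))


# Made-up check results for one model on a small evaluation set.
sample_results = [
    ("spatial", True), ("spatial", True), ("spatial", False), ("spatial", True),
    ("causality", True), ("causality", True),
    ("constraint", True), ("constraint", False),
]

scorecard = logic_scorecard(sample_results)
print(scorecard)                # spatial 0.75, causality 1.0, constraint 0.5
print(accept(0.95, scorecard))  # False: high aesthetic score, weak logic scores
```

Keeping the two scores separate, rather than averaging them, prevents a strong aesthetic score from masking a failure in spatial or constraint checks.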

The Road Ahead: Reasoning Beyond Images

The trend toward deeper reasoning, as reflected in industry discussions, suggests that the next major hurdle isn't just integrating text and images, but integrating time (video) and interaction (3D environments). Uni-1’s reported success on logic tests is a proof of concept for the entire field: a truly intelligent system must not only see the world but also understand the rules that govern it.

The implication is clear: AI is evolving from a sophisticated mimic to an actual, albeit synthetic, reasoner. This evolution, driven by specialized, high-performance models challenging incumbents on hard cognitive tasks, suggests that the next generation of AI tools will be more reliable, better at handling complexity, and ready to move out of the research lab and into mission-critical industrial applications.