The Reasoning Revolution: How Luma AI's Uni-1 Signals the End of Simple Image Generation

The world of generative artificial intelligence has moved at a breakneck pace. For years, the primary focus in image synthesis has been visual fidelity: making pictures look as real as possible. Now the metric for success is shifting from looking real to reasoning correctly. Luma AI’s recently released Uni-1 model, which claims to outperform competing models such as Nano Banana 2 and GPT Image 1.5 on logic-based benchmarks, is not just an incremental update; it is a harbinger of the next major evolution in multimodal AI.

This development forces us to re-evaluate what we expect from generative models. If an AI can correctly interpret complex, multi-step instructions involving spatial relationships, object permanence, and causality, it implies a deeper level of understanding than simply stitching together patterns learned from billions of images.

The Competitive Arena: Beyond the Giants

For the past few years, the narrative around foundational models has been dominated by a few behemoths: Google (Gemini), OpenAI (GPT series, Sora), and Meta (Llama). When a smaller, focused player like Luma AI (known for 3D reconstruction and NeRF technology) enters the ring with a multimodal model—Uni-1—that competes directly on reasoning, it shakes up the established hierarchy.

The key context here is understanding the competitive landscape that Luma is challenging:

- The Fidelity Focus (e.g., Sora): OpenAI’s Sora emphasized video realism and temporal consistency. While impressive, many early tests showed that when the prompt required precise adherence to physics or specific object placement rules (logic), these models could still fail spectacularly.

- The Multimodal Integration (e.g., GPT-4o): Models like GPT-4o integrate vision and language deeply. However, they are often judged heavily on linguistic coherence. Luma’s focus suggests that while understanding the prompt (language) is important, successfully *executing* the complex constraints embedded in that prompt (logic) within the image domain is the new bottleneck.

Uni-1’s success suggests that a model built from the ground up to link perception (understanding the image components) and generation (creating the output) within a single, tightly coupled architecture offers superior constraint adherence. For technical audiences, this implies that the path to AGI might not just involve scaling up parameters, but optimizing the *integration* of sensory inputs.

The Crucial Pivot: From Pattern Matching to Logic

What does it mean for an AI model to use "logic" in image creation? Imagine asking an AI to generate an image of "a stack of three blocks, two blue and one red, where the red block is hidden beneath the middle block, and the total structure must fit inside a clear glass box."

- Pattern Matching (Older Models): Might produce three blocks, one red and two blue, perhaps loosely stacked, ignoring the "hidden" instruction or misplacing the colors. It reproduces common visual patterns associated with the words.

- Logic-Based Reasoning (Uni-1): Must understand and enforce constraints: (1) Three blocks total, (2) Color mapping, (3) Positional hierarchy (red under middle), and (4) Final container requirement.

This leap suggests that Uni-1 isn't just sampling from its training data; it behaves as though it works out the spatial relationships in a scene before rendering the pixels. This is the difference between an artist who vaguely remembers a scene and an engineer who must perfectly follow a blueprint.
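The four constraints listed above can be written down as explicit checks against a structured description of the scene. The sketch below is only an illustration of that idea, not a description of how Uni-1 works internally: the `Block` and `Box` types, the `check_scene` function, and the reading of "color mapping" as two blue blocks plus one red block are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    color: str
    level: int      # position in the stack, 0 = bottom
    width: float
    height: float


@dataclass(frozen=True)
class Box:
    width: float
    height: float


def check_scene(blocks, box):
    """Return a dict mapping each constraint name to True or False."""
    by_level = sorted(blocks, key=lambda b: b.level)
    results = {}
    # (1) Three blocks total.
    results["three_blocks"] = len(blocks) == 3
    # (2) Color mapping (assumed here: two blue blocks and one red block).
    results["color_mapping"] = sorted(b.color for b in blocks) == ["blue", "blue", "red"]
    # (3) Positional hierarchy: the red block sits at level 0, under the middle block.
    results["red_under_middle"] = (
        len(by_level) == 3
        and [b.level for b in by_level] == [0, 1, 2]
        and by_level[0].color == "red"
    )
    # (4) Container: the stack is no taller and no wider than the box.
    results["fits_in_box"] = (
        sum(b.height for b in blocks) <= box.height
        and all(b.width <= box.width for b in blocks)
    )
    return results


# Hypothetical scene: red at the bottom, two blue blocks above it.
scene = [Block("red", 0, 1.0, 1.0), Block("blue", 1, 1.0, 1.0), Block("blue", 2, 1.0, 1.0)]
report = check_scene(scene, Box(width=2.0, height=4.0))
print(report)
```

A pattern-matching generator can satisfy some of these checks by accident; the point of a reasoning benchmark is that all of them must hold at once.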

The Benchmarking Wars: Why Logic Matters More Now

The shift in focus to "logic-based benchmarks" is perhaps the most telling technical detail. Traditional vision benchmarks (like those for object recognition) test perception. Reasoning benchmarks test application of knowledge. If Luma AI is winning here, it means they are pushing the envelope on:

- Spatial Reasoning: Understanding depth, overlap, and relative positioning.

- Constraint Satisfaction: Ensuring all conditions in a complex prompt are met simultaneously.

- Causality (Implied): If the prompt implies an action (e.g., "the shadow cast by the sun in the west"), the model must understand the light source rules.
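The shadow example can be made concrete with a little geometry: a sun in the west must cast shadows that point east. The sketch below assumes a vertical pole on flat ground and an azimuth measured clockwise from north; the function name `shadow_vector` and its parameters are hypothetical and chosen only for this illustration.

```python
import math


def shadow_vector(sun_azimuth_deg, sun_elevation_deg, object_height):
    """Ground-plane shadow of a vertical pole as an (east, north) offset from its base.

    Azimuth is measured clockwise from north (90 = east, 270 = west).
    """
    if not 0 < sun_elevation_deg < 90:
        raise ValueError("sun elevation must be strictly between 0 and 90 degrees")
    azimuth = math.radians(sun_azimuth_deg)
    # A lower sun produces a longer shadow.
    length = object_height / math.tan(math.radians(sun_elevation_deg))
    # Horizontal unit vector pointing from the pole toward the sun.
    to_sun_east, to_sun_north = math.sin(azimuth), math.cos(azimuth)
    # The shadow falls on the side opposite the sun.
    return (-to_sun_east * length, -to_sun_north * length)


# Sun due west at 45 degrees elevation, pole 2 units tall.
east, north = shadow_vector(270.0, 45.0, 2.0)
print(east > 0)  # the shadow points east
```

A generated image whose shadows point toward the light source fails this kind of check no matter how photorealistic it looks.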

To validate such claims, we must scrutinize the benchmarks themselves. The industry is actively developing more rigorous test suites to expose the "brittleness" of current models. If Uni-1 excels on these new, harder tests, that supports the robustness of its underlying architecture over systems that might be optimized purely for speed or subjective visual appeal.

Architectural Implications: The Unified Future

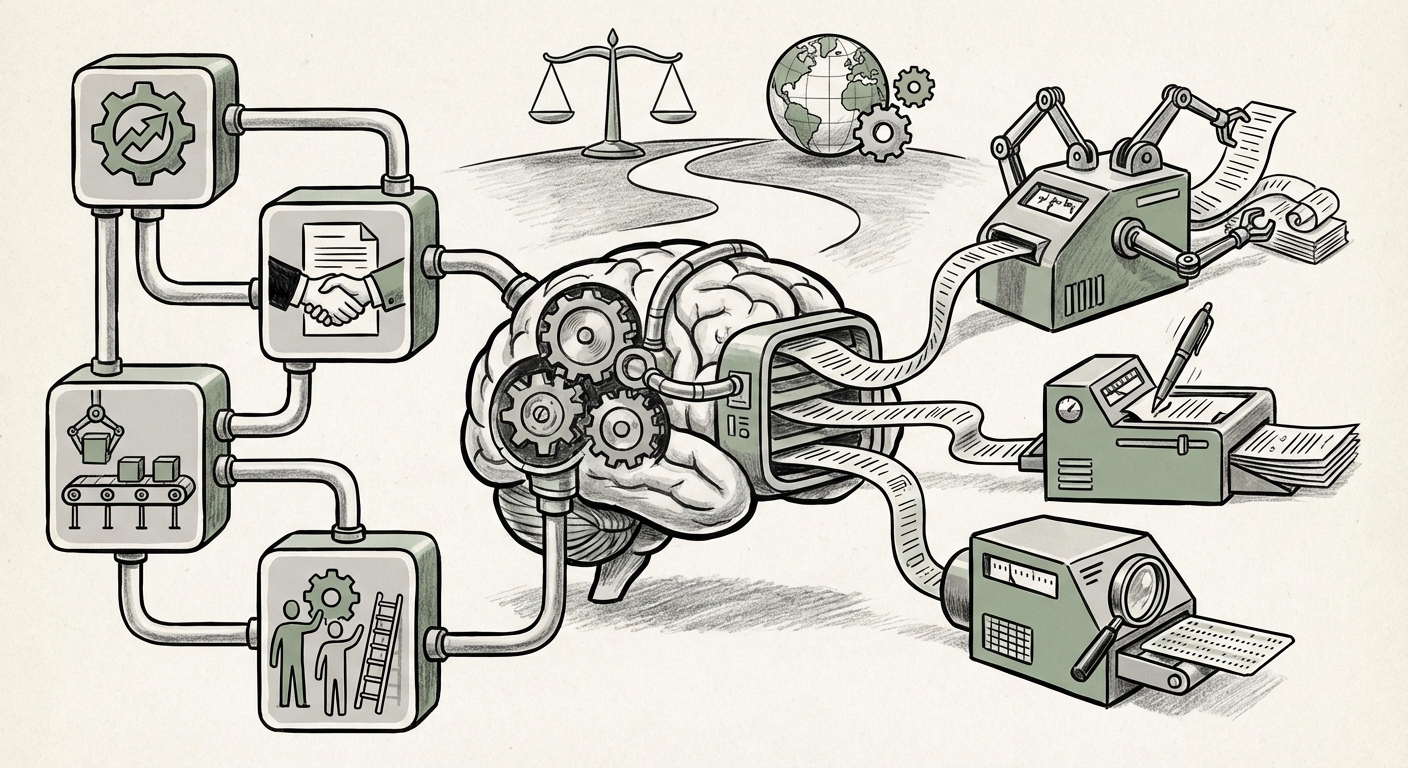

Uni-1 is reported to use a single architecture to combine understanding and generation. This echoes the industry push toward integrated multimodal foundation models. Historically, complex tasks were "chained": a Vision Encoder would translate the image into tokens, those tokens would be fed into a Large Language Model (LLM) for reasoning, and then a separate Decoder would synthesize the output.

This chaining introduces latency and potential information loss at each handoff point. A unified architecture, where perception, reasoning, and generation happen within the same processing loop, is in theory more efficient and lets the reasoning process directly influence pixel generation as it happens. This unified approach is what allows the model to "reason through prompts as it creates," so that the logical constraints are built into the structure rather than patched on afterward.

Future Implications: Beyond Pretty Pictures

Why should businesses, engineers, and everyday users care if an AI can correctly stack blocks in a prompt? Because the ability to reason logically within a visual domain unlocks massive commercial value that mere photorealism cannot touch.

1. Precision Design and Engineering (B2B)

For industries reliant on visual accuracy—architecture, mechanical engineering, material science simulations—the stakes are high. A model that can logically verify a design before rendering it is invaluable. Imagine prompt engineering a new circuit board layout where the AI must ensure trace widths meet minimum standards (a logical constraint) or designing medical implants that respect anatomical tolerances.

Actionable Insight: Companies should begin assessing internal use cases that currently require slow, manual CAD verification. AI that incorporates physical or logical constraints from the start will dramatically accelerate prototyping cycles.
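To make the idea of a "logical constraint" concrete, the trace-width rule mentioned above reduces to a simple check over a layout. This is a minimal sketch under stated assumptions: the function `find_narrow_traces`, the trace names, and the 0.15 mm minimum are hypothetical values chosen for illustration, not a real design rule or CAD API.

```python
def find_narrow_traces(trace_widths_mm, min_width_mm):
    """Return the sorted names of traces narrower than the minimum width."""
    if min_width_mm <= 0:
        raise ValueError("minimum width must be positive")
    return sorted(
        name for name, width in trace_widths_mm.items() if width < min_width_mm
    )


# Hypothetical layout and design rule, for illustration only.
layout = {"VCC": 0.30, "GND": 0.30, "CLK": 0.10, "DATA0": 0.15}
MIN_WIDTH_MM = 0.15

violations = find_narrow_traces(layout, MIN_WIDTH_MM)
print(violations)  # ['CLK']
```

A generator that respects such a rule from the start never produces the violating layout; a purely visual one leaves the check to a later manual pass.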

2. Enhanced Training and Simulation

Complex simulations in robotics, autonomous driving, or even military training require environments that are not just visually accurate but physically and logically consistent. If an autonomous vehicle model is trained on simulations where the rules of friction or gravity are occasionally broken (a flaw in a purely visual generator), its real-world performance suffers.

Actionable Insight: Investment in simulation platforms that utilize reasoning-capable generative models will yield safer, more transferable training data compared to standard visual rendering engines.

3. Content Moderation and Verification

On the defensive side, logic-based generation is a double-edged sword. While it makes deepfakes more sophisticated, it also offers a superior tool for detection. A model trained to understand logical consistency can more easily spot an image where shadows fall the wrong way, or where an object appears to float due to flawed spatial reasoning.

4. The Business of Benchmarking

Luma AI’s success highlights that the AI industry must quickly move past subjective leaderboards. The future battleground will be fought on specialized, domain-specific reasoning benchmarks. Developers will need to prove not just that their model can generate *an* image, but that it can generate the *only correct* image that satisfies every logical clause of the prompt. This shifts R&D focus from pure model size to algorithmic efficiency in constraint propagation.
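The difference between generating *an* image and the *only correct* image shows up clearly in how a benchmark is scored. The sketch below contrasts a lenient per-clause score with a strict all-clauses-must-pass score. The function names and the sample outcomes are hypothetical; they are not taken from any published benchmark or from Luma AI's results.

```python
def clause_accuracy(results):
    """Fraction of individual clauses satisfied across all prompts."""
    total = sum(len(clauses) for clauses in results)
    if total == 0:
        raise ValueError("no clauses to score")
    return sum(sum(clauses) for clauses in results) / total


def strict_accuracy(results):
    """Fraction of prompts for which every clause is satisfied."""
    if not results:
        raise ValueError("no prompts to score")
    return sum(all(clauses) for clauses in results) / len(results)


# Hypothetical per-clause outcomes for three prompts (not real benchmark data).
example = [
    [True, True, True, True],
    [True, True, True, False],
    [True, False, True, True],
]

print(f"per-clause: {clause_accuracy(example):.2f}")  # 10 of 12 clauses pass
print(f"strict:     {strict_accuracy(example):.2f}")  # 1 of 3 prompts passes
```

In this toy data the same model scores about 0.83 per clause but only about 0.33 under strict scoring, which is why all-or-nothing metrics expose brittleness that averaged scores hide.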

The Road Ahead: Consolidation and Specialization

The narrative derived from Uni-1’s performance suggests two converging trends in the foundation model ecosystem:

Trend 1: Architectural Consolidation. The market is moving away from chained pipelines of separate components. Whether it’s through massive scale (like Gemini) or specialized architectural efficiency (like Uni-1), the expectation is that the next generation of foundational models will be inherently multimodal and unified from the ground up.

Trend 2: The Rise of the Challenger Ecosystem. As the giants focus on generalist AGI, highly focused players like Luma AI can gain market share by optimizing for a critical, underserved metric, in this case logical precision in vision tasks. This specialization creates crucial competitive pressure, ensuring that no single capability gap remains unaddressed for long.

Ultimately, Luma AI’s Uni-1 is a critical waypoint on the map of AI progress. It tells us that the era where generative AI was largely treated as a sophisticated collage tool is drawing to a close. We are entering the age of the synthetic engineer—AI systems capable of adhering to rules, executing complex plans, and generating outputs grounded in demonstrable, verifiable logic. For anyone building products or services reliant on visual data, understanding this pivot from realism to reasoning is no longer optional; it is fundamental to future readiness.

Contextual References and Further Reading

To understand the broader context of this shift, these areas of investigation are crucial:

- The emerging standards for next-generation multimodal reasoning benchmarks, to gauge the true difficulty of Uni-1's achievement.

- Luma AI's funding and strategic partnerships, to understand the resources available for scaling this new architectural direction.

- Technical comparisons of OpenAI's Sora and Luma AI's models, to see whether the industry is diverging between video fluency and image logic.

- The rise of integrated multimodal foundation models, to see how Uni-1 fits into the larger architectural trend toward efficiency and unification.