The Multi-Model Revolution: Why Microsoft is Sending Claude to Do the Heavy Lifting in Copilot

For the better part of two years, the generative AI landscape has been dominated by a clear dichotomy: OpenAI’s GPT models powering the cutting edge, and everyone else playing catch-up. Microsoft, as OpenAI’s biggest investor and primary cloud partner, has naturally leaned heavily on GPT within its Copilot ecosystem. However, a recent, pivotal development signals that the age of the single flagship Large Language Model (LLM) is already fading.

Microsoft is now integrating Anthropic’s Claude model to power specific autonomous tasks within Copilot, spanning critical applications like Outlook, Teams, and Excel. This isn't just adding another tool to the belt; it represents a fundamental architectural shift toward true multi-model orchestration in the enterprise workspace.

The Shift from Single Model to AI Operating System

Imagine you have a highly skilled assistant. If you ask them to write a creative marketing memo, they use their flair for language. If you ask them to analyze a complex, 300-page legal document for risk flags, you want them to switch to their specialized legal training. The Microsoft-Anthropic integration within Copilot is moving AI in the workplace from the first scenario to the second.

What is Copilot Cowork?

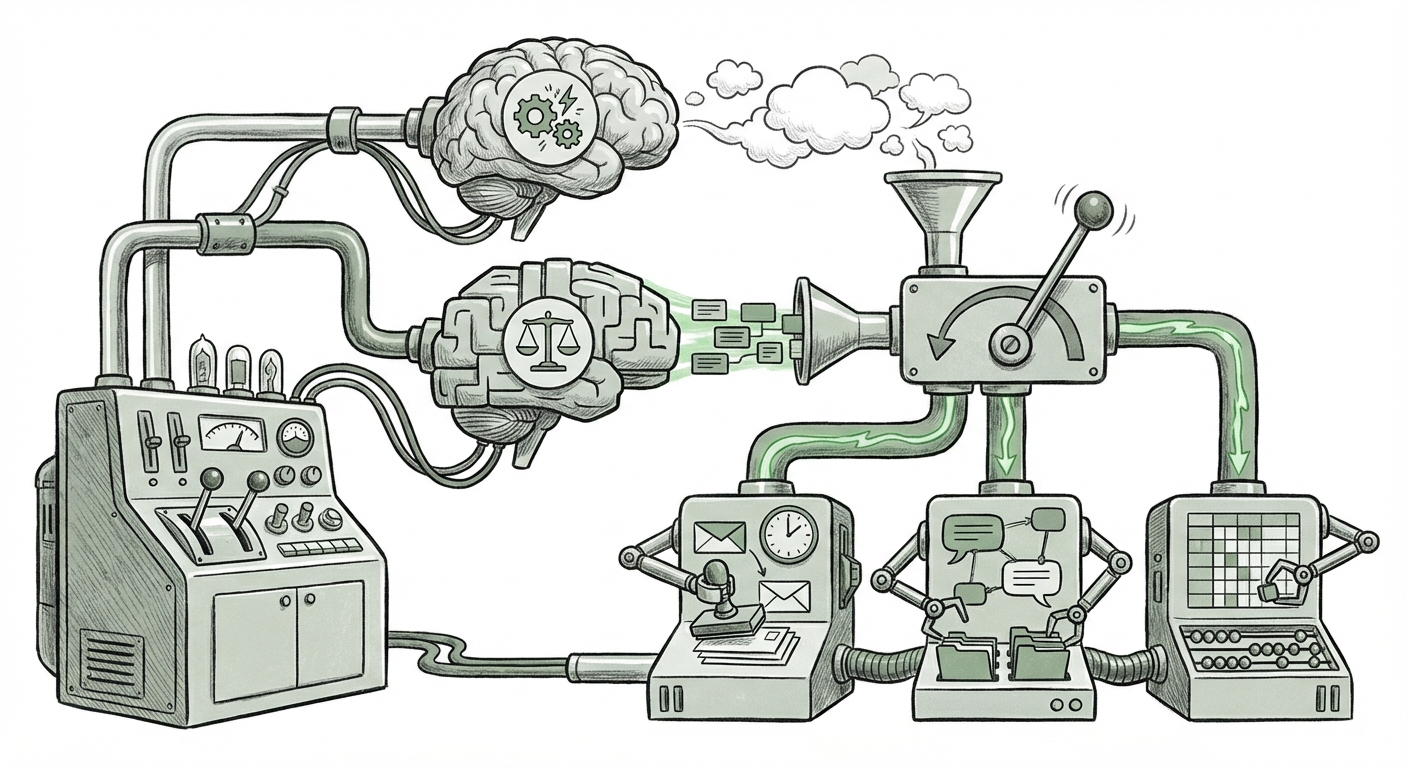

The concept, often referred to as "Cowork," is that the AI does not just generate text from a prompt; it *works* autonomously on your behalf across your digital environment. Summarizing a dense Teams thread, drafting a detailed response based on data in an attached Excel sheet, and then blocking time on your Outlook calendar for the follow-up all require complex reasoning and the ability to safely interact with multiple software APIs (Application Programming Interfaces).

For these deep, multi-step tasks, developers must choose the right engine. The initial news suggests that for certain critical, high-stakes workflow actions, Microsoft is directing the request to Claude rather than GPT. This is a significant technical endorsement of Claude's capabilities in specific domains.

Why Diversify? The Strategic Imperatives

Why would the company that poured billions into OpenAI suddenly broaden its foundation? The reasons are multi-faceted, touching upon technology specialization, risk management, and market positioning. This diversification strategy addresses core concerns for any large organization deploying AI.

1. Model Specialization and Performance

No single LLM is the best at everything. Analysts have long noted that while GPT models excel in creative writing and general coherence, models like Claude have often shown stronger performance in areas requiring extensive context management, nuanced reasoning, and, crucially, adherence to safety guardrails. For complex enterprise tasks, such as sifting through years of meeting notes in Teams or reconciling financial data in Excel, those strengths matter most. Microsoft is building a system that routes each job to the model best suited to the task.
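To make the routing idea concrete, here is a minimal sketch of a task-to-model lookup. It is not Microsoft's implementation, which has not been published; the task categories, the model labels, and the `route` function are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical mapping from task category to model label (illustration only).
ROUTING_TABLE = {
    "long_context_analysis": "claude",
    "spreadsheet_reconciliation": "claude",
    "creative_drafting": "gpt",
}
DEFAULT_MODEL = "gpt"  # assumed fallback for categories with no explicit rule


@dataclass
class Task:
    category: str
    prompt: str


def route(task: Task) -> str:
    """Return the label of the model assigned to this task's category."""
    return ROUTING_TABLE.get(task.category, DEFAULT_MODEL)
```

A real router would likely weigh more than a category label (cost, latency, data sensitivity), but the core decision still reduces to a function from task to model.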

2. Managing Vendor Lock-In and Risk

The cloud computing world is built on choice. Relying entirely on one foundational model provider creates a single point of failure, both technically and commercially. By actively integrating Anthropic, Microsoft is hedging its bets. If API costs change dramatically, if one model experiences a significant outage, or if one vendor faces regulatory headwinds, the entire Copilot productivity suite does not grind to a halt. This move provides customers with a feeling of stability and choice, which is paramount for enterprise software adoption. This strategic hedging mirrors the wider trend of cloud providers actively building multi-model ecosystems.
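The outage scenario can be sketched as a simple failover loop: try each provider in order and fall through to the next when one fails. This is an assumed pattern, not a description of how Copilot handles failures; `ProviderError`, `call_with_fallback`, and the two stand-in providers are hypothetical.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a (hypothetical) provider call that cannot serve a request."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember the failure, try the next one
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in providers that simulate an outage and a healthy backup.
def primary_down(prompt: str) -> str:
    raise ProviderError("simulated outage")


def secondary_ok(prompt: str) -> str:
    return f"echo: {prompt}"
```

Calling `call_with_fallback("hi", [("primary", primary_down), ("secondary", secondary_ok)])` skips the failing provider and returns the backup's response, which is the behavior a multi-vendor setup is meant to buy.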

3. The Rise of Agentic AI and Autonomy

The true test of Copilot is its ability to act as an autonomous agent. An agent needs to plan, execute, reflect, and correct. When these agents start interacting with core business data in Outlook and Excel, the stakes rise sharply, and we move beyond simple chatbot interactions. As industry discussions of agentic AI development have noted, these systems require robustness that goes beyond prompt engineering. The choice of Claude for these "Cowork" functions suggests that Microsoft considers Anthropic’s model a more reliable engine for multi-step, API-calling agent tasks within the Microsoft Graph environment.
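The plan, execute, reflect, and correct cycle can be sketched as a small control loop. This is a generic illustration under stated assumptions, not Copilot's agent framework: the plan is a fixed list of step names, and `run_agent`, `flaky_execute`, and `is_done` are hypothetical stand-ins for real model and tool calls.

```python
from typing import Callable


def run_agent(
    steps: list[str],
    execute: Callable[[str, int], str],
    check: Callable[[str, str], bool],
    max_retries: int = 2,
) -> tuple[bool, list[tuple[str, int, bool]]]:
    """Run planned steps in order, retrying a step whose result fails the check."""
    log = []
    for step in steps:  # plan: here simply a fixed list of steps
        for attempt in range(1, max_retries + 2):
            result = execute(step, attempt)  # execute
            ok = check(step, result)         # reflect
            log.append((step, attempt, ok))
            if ok:
                break                        # step succeeded, move on
            # correct: loop around and try the step again
        else:
            return False, log                # retries exhausted, stop the run
    return True, log


# Stand-ins: the reply draft fails on its first attempt, everything else succeeds.
def flaky_execute(step: str, attempt: int) -> str:
    if step == "draft_reply" and attempt == 1:
        return "error"
    return "done"


def is_done(step: str, result: str) -> bool:
    return result == "done"
```

With the steps `["summarize_thread", "draft_reply", "book_time"]`, the loop retries the failed draft once and then completes the run. Production agents add far more (re-planning, permissions, audit trails), which is exactly why the reliability of the underlying model matters.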

For the non-technical audience: Think of it like an autonomous factory. You wouldn't use the same robot arm for delicate circuit board soldering as you would for lifting heavy steel beams. Microsoft is making sure Copilot uses the "delicate soldering robot" (Claude) when summarizing your sensitive HR documents, and the "heavy lifter" (likely GPT) for brainstorming new marketing slogans.

Corroborating the Trend: A Wider Ecosystem Shift

This development does not exist in a vacuum. It confirms broader trends observed across the tech sector:

- Enterprise Security Demands: Large regulated industries often prefer models built with specific "constitutional" AI principles, a cornerstone of Anthropic’s development philosophy. Reports discussing Anthropic’s enterprise adoption trends confirm that trust and safety are major selling points over sheer speed or size.

- The Marketplace Mentality: Leading technology platforms, including Microsoft Azure and AWS, are consciously positioning themselves as model marketplaces rather than exclusive distributors. This encourages competition and innovation, benefiting the end-user organization. Analysts suggest this "multi-model marketplace" approach is the inevitable future for resilient enterprise AI stacks.

Investment news involving Microsoft and Anthropic underscores this shift: [Bloomberg has reported on Microsoft Deepening AI Ties with Anthropic Investment](https://www.bloomberg.com/press-releases/2024-03-04/microsoft-deepens-ai-ties-with-anthropic-investment), a sign that large players are no longer placing all their chips on one square.

Implications for Businesses: Actionable Insights

What does this sophisticated orchestration mean for the organizations currently deploying or planning to deploy Copilot?

1. Re-evaluate Model Fit for Workflow

Businesses must move beyond asking, "Which LLM is best?" to "Which LLM is best for *this specific application*?" If you are automating complex reporting in Excel, start testing Claude-driven agents. If you are using Copilot for rapid, high-volume drafting in Outlook, GPT might still be the default. The era of one-size-fits-all AI integration is over.

2. Focus on Agent Frameworks, Not Just Prompts

The success of Copilot Cowork hinges on its *agent framework*—the software layer that manages when and how the AI switches models, calls external tools (like the Microsoft Graph), and handles errors. Businesses should prioritize understanding how their chosen AI platform manages these internal routing decisions. This is where real performance gains and security compliance will be won or lost.

3. New Benchmarks for Success

User satisfaction will no longer be judged solely on the eloquence of the output. Instead, it will be based on the success rate of autonomous execution. Can the agent successfully book the meeting without conflict? Can it accurately summarize the financial discrepancy? As noted by industry observers, we need new benchmarks for [defining the next generation of autonomous workplace AI agents](https://venturebeat.com/) that measure task completion, not just textual quality.
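One simple way to express a task-completion benchmark is the fraction of agent runs that finished successfully. The sketch below assumes each run is recorded as a pass or fail; `TaskRun` and `completion_rate` are hypothetical names, and a real benchmark would also need a clear definition of what counts as "completed."

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    task: str
    completed: bool  # did the agent finish the task end to end?


def completion_rate(runs: list[TaskRun]) -> float:
    """Return the fraction of runs that completed successfully."""
    if not runs:
        raise ValueError("need at least one run to compute a rate")
    return sum(run.completed for run in runs) / len(runs)
```

Tracking this number per task type (scheduling, summarizing, reconciling) would show where an agent is dependable and where it still needs a human in the loop.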

The Road Ahead: Greater Complexity, Greater Power

The technical sophistication required to seamlessly blend the capabilities of GPT, Claude, and potentially others (like Mistral or internal models) into a single, unified user experience is immense. This points toward an exciting, albeit more complex, future.

The next frontier isn't just creating smarter models; it's creating smarter *systems* that manage those models. We are witnessing the emergence of true AI Orchestration Layers. These layers act as sophisticated air traffic controllers, ensuring the right intelligence is applied at the right moment. This architectural maturity is what unlocks true productivity breakthroughs in the enterprise.

This strategic move by Microsoft validates a core tenet of long-term technology adoption: **resilience through diversity.** By embracing both OpenAI and Anthropic, Microsoft is not just diversifying its portfolio; it is future-proofing the very definition of digital productivity for the next decade. The competition now shifts from who has the single best LLM to who has the best platform for intelligently deploying *all* the best LLMs.