The Great Shift: Why OpenAI's Acquisition of Promptfoo Signals the End of 'Capability-First' AI

The Artificial Intelligence race has long been defined by metrics of raw power: who has the largest model, the highest benchmark scores, and the most dazzling generative capabilities. For a time, if an AI tool could *do* something impressive, enterprises were willing to overlook the underlying risks. That era, as evidenced by OpenAI’s strategic acquisition of the AI security platform Promptfoo, is officially drawing to a close.

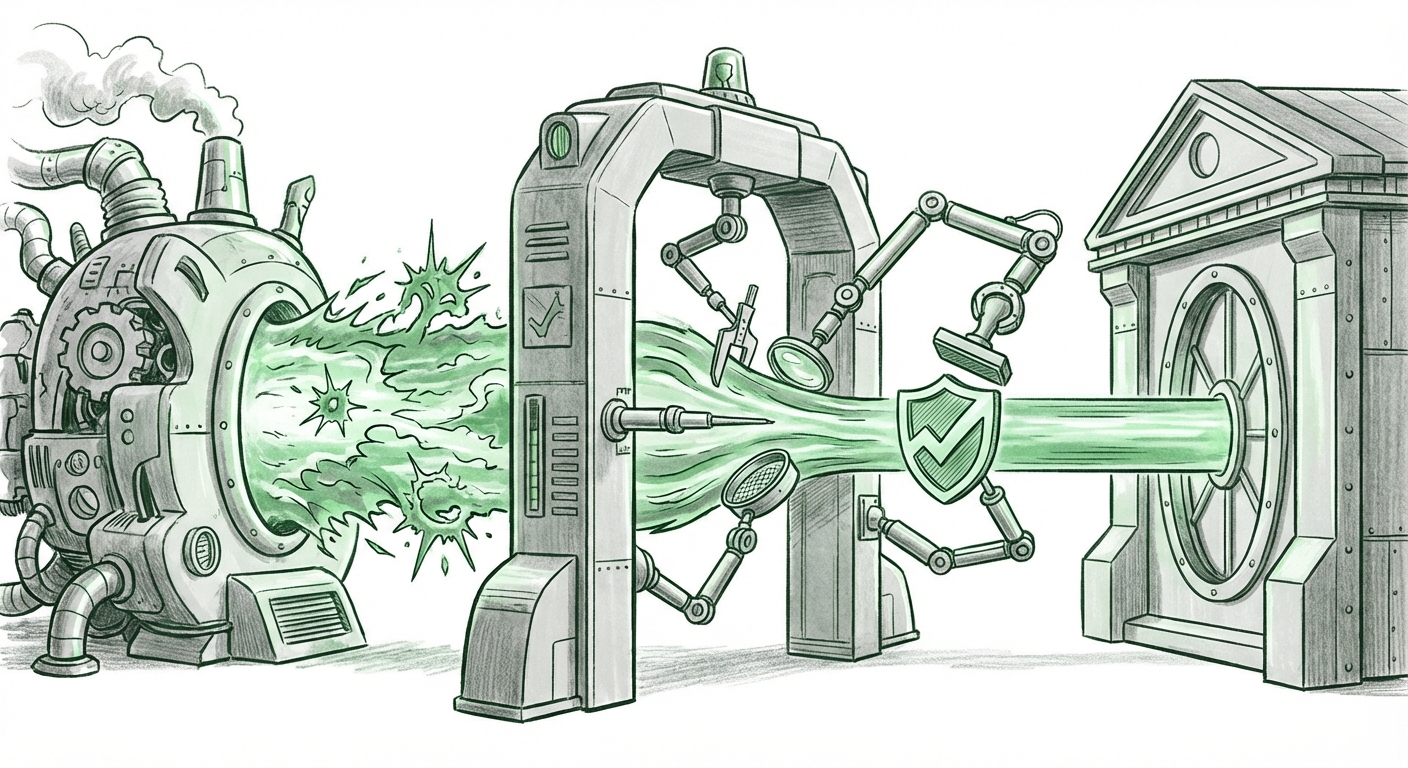

Integrating automated vulnerability testing—covering complex threats like prompt injection, jailbreaks, and data leakage—directly into its high-tier Frontier enterprise platform is not a small feature addition; it is a fundamental repositioning of the AI value proposition. This move confirms a shift from a "Can it do this?" mentality to a "Can it do this safely, consistently, and without compromise?" mindset. For technologists, investors, and business leaders, this development is a massive indicator of where the entire industry must head next: toward mandated AI assurance.

The Security Imperative: Why Enterprises Stopped Trusting Out-of-the-Box AI

When Large Language Models (LLMs) first hit the mainstream, security teams often treated them like external cloud services—something to be monitored at the perimeter. However, as companies moved to deploy LLMs for critical functions—customer service, internal coding assistance, or even automated decision-making—the naive trust evaporated. Why? Because the core mechanism of LLMs, natural language understanding, is also their greatest vulnerability.

The Anatomy of the New Threat Landscape

The threats OpenAI is now baking defenses against are sophisticated because they exploit the very intelligence we prize in these models. Consider Prompt Injection: a malicious instruction cleverly hidden in data or a user prompt that tricks the AI into ignoring its safety guardrails or revealing confidential operational instructions. Imagine an attacker successfully injecting a command into a customer support bot that forces it to divulge PII or execute an unauthorized database query. This is not theoretical; it is a daily reality being wrestled with across the industry.

The need for proactive, integrated testing is directly tied to market maturity. As we look at the "State of Enterprise AI Security Spending 2024" (a trend highlighted by industry analysts), we see budgets skyrocketing for governance tools. Enterprises planning significant LLM deployments are realizing that retrofitting security is prohibitively expensive and leaves them exposed in the interim. If 40% of companies are increasing their adversarial testing budget, the provider that offers this testing built-in—like OpenAI now promises with Frontier—gains an undeniable advantage.

The Competitive Arena: Platform Wars Shift to Trust

This acquisition is not happening in a vacuum; it is a direct response to, and an aggressive play within, the ongoing platform wars between the major cloud and AI giants. When OpenAI addresses security so fundamentally, it forces competitors like Google and Microsoft to immediately re-evaluate their own offerings for the high-value enterprise segment.

Benchmarking Trust: Copilot vs. Gemini

The battle for the enterprise CIO is increasingly about governance, compliance, and risk management, not just model size. When comparing offerings like Google Gemini integration versus Microsoft Copilot features within their respective cloud environments, the emphasis on baked-in, auditable security becomes the differentiator. If Copilot’s security tooling feels tacked on, and Gemini’s framework remains too opaque, OpenAI’s move to acquire a known, respected testing utility like Promptfoo positions Frontier as the most 'ready-to-deploy' platform for regulated industries.

This means that procurement managers are no longer just asking, "How fast is the model?" They are asking, "How do I prove to my auditors that this model cannot be tricked into breaking compliance rules?" By integrating automated vulnerability scanning directly into the deployment pipeline, OpenAI is effectively offering an immediate 'trust certificate' for its premium services.

The Rise of AI Assurance Engineering

The acquisition of Promptfoo validates a booming, yet still niche, sector of the technology ecosystem: specialized AI security and governance platforms. Before this, developing these safeguards often required hiring expensive, specialized security researchers (AI Red Teams) to manually probe models for weaknesses.

From Red Teaming to Automated Validation

Promptfoo, and similar tools that have seen recent venture capital interest, shifts this paradigm. They automate the process of adversarial testing. Instead of needing a team of security experts to manually test for prompt injection, the platform runs thousands of known and synthesized attack vectors against the deployed model automatically, providing a quantifiable resilience score.

This trend signals the formalization of AI Assurance Engineering. We are moving away from solely focusing on model *training* and toward focusing on model *validation*. This is analogous to the evolution of traditional software development: we moved from simply writing code to mandatory DevSecOps pipelines that include automated scanning (SAST/DAST). The LLM world is now mirroring this maturation process.

Practical Implications: What This Means for Your Organization

For businesses currently using or planning to use powerful LLMs through platforms like OpenAI's Frontier, this development brings immediate and significant implications:

- Standardization of Testing: Expect automated, continuous security testing to become a standard feature across all major enterprise-grade model APIs, not just OpenAI’s. If you use a foundational model, assume it must pass automated adversarial tests before deployment.

- The Death of the "Shadow AI" Bypass: While companies might still use consumer-grade models for experimentation, using high-risk proprietary data will demand the robust, auditable security features found in platforms like Frontier. Using an unvetted, open-source model without such built-in checks will become an unacceptable compliance risk.

- Faster Time to Production: By integrating testing, the cycle time from development to secure deployment shrinks dramatically. Instead of weeks spent setting up external red-teaming, testing can be part of the standard CI/CD workflow for AI applications.

For developers and engineers, this means adapting quickly. Your ability to deploy powerful models will now rely as much on your understanding of data leakage prevention and input sanitization as it does on your Python skills. Writing effective, secure prompts will become a critical engineering discipline.

The Future: Resilience as the Ultimate Differentiator

The OpenAI-Promptfoo transaction is a potent signal that the AI industry is entering its infrastructure stabilization phase. The race for capability is shifting into a race for **trustworthiness**. Future differentiation won't just come from models that are slightly smarter, but from models that are demonstrably safer, more resilient against manipulation, and easier for compliance officers to sign off on.

When OpenAI, the current market leader, chooses to acquire a specialized security firm rather than building the tool internally or relying on a third party, it confirms that security tooling is no longer a peripheral concern—it is core intellectual property. The AI frontier is being secured, one automated vulnerability scan at a time. The ultimate implication is that the safest, most resilient AI platforms will win the massive enterprise market, ensuring that powerful AI tools can be used responsibly at scale.