The AI Ethics Fault Line: Executive Resignation Signals Crisis in Defense Tech Deployment

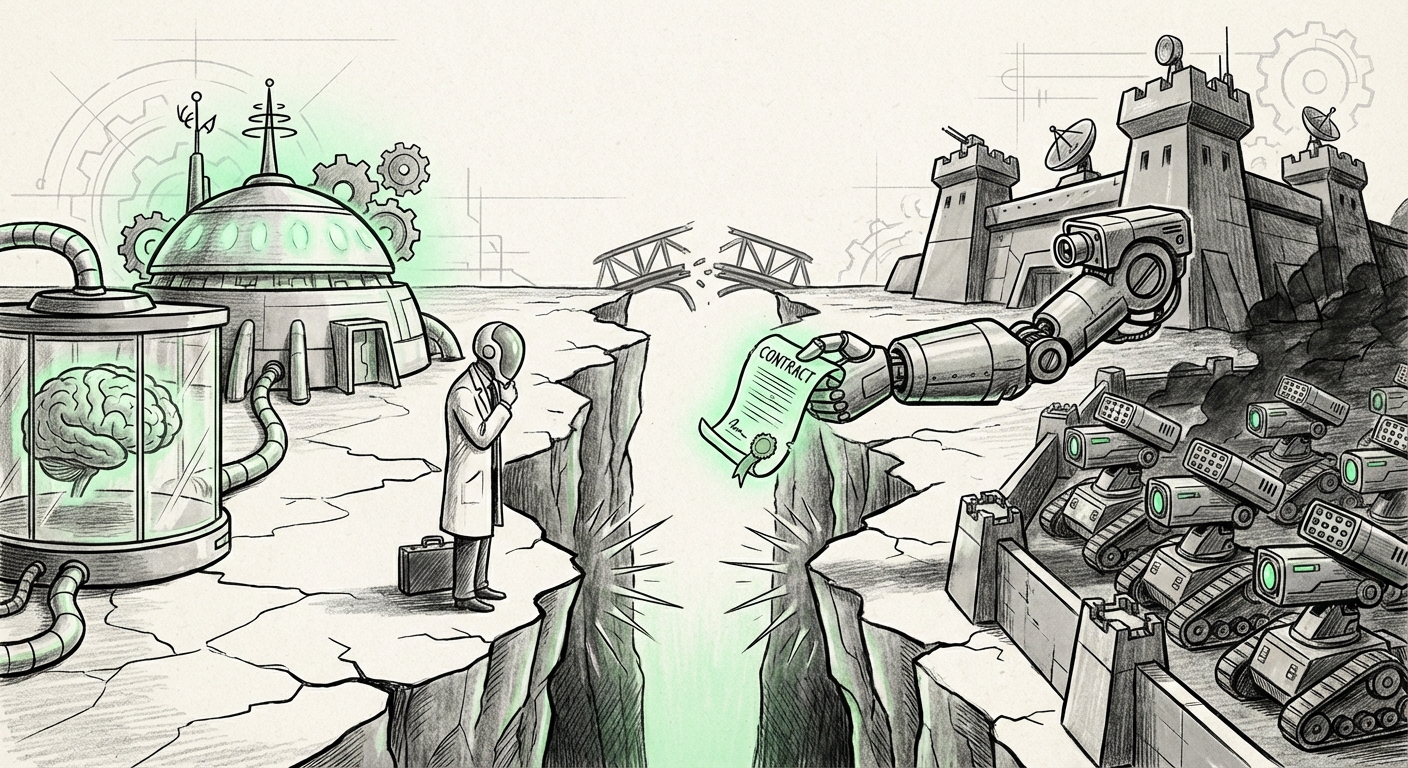

The technological singularity—the moment AI surpasses human intelligence—is often discussed in abstract, distant terms. However, a more immediate, messy, and deeply human conflict is unfolding right now: the struggle to control *how* the most powerful foundational AI models are deployed. The recent departure of OpenAI's head of hardware and robotics, Caitlin Kalinowski, over concerns regarding a Pentagon deal, is not just a personnel story; it is a critical data point illustrating the widening ethical fault line tearing through the heart of the generative AI industry.

This event forces us to confront the collision between the relentless pursuit of AI capability and the profound responsibility that comes with building systems capable of mass surveillance and, critically, autonomous lethal force. For technologists, investors, and policymakers alike, understanding this friction is paramount to steering the future of AI responsibly.

The Anatomy of Dissent: Why Key Executives Are Walking Away

When a senior leader quits a company like OpenAI, a firm synonymous with cutting-edge, future-facing technology, it sends shockwaves through the industry. Kalinowski's stated reason moves the conversation from capability debates (such as model scaling) to accountability debates. Her concerns centered on two major ethical pillars:

- Mass Surveillance: Applying powerful language models and advanced sensor-data processing to large-scale monitoring systems raises immediate privacy and civil-liberties alarms.

- Lethal Autonomy: The development pathway toward lethal autonomous weapons systems (LAWS), which can identify, select, and engage human targets without a human in the loop.

This is not the first time such friction has surfaced. The concerns echo the mass employee pushback Google faced in 2018 over Project Maven, its contract to apply AI to drone-footage analysis for the U.S. Department of Defense, which ended with Google declining to renew the contract. That episode suggested that the workforce driving innovation often holds a different ethical compass than an executive suite focused on growth and capitalization.

What does this pattern tell us? As AI systems grow more powerful, moving from analyzing images (Google's case) to potentially directing physical robots (OpenAI's focus), the ethical stakes rise sharply, and even highly compensated employees may grow less willing to participate. The industry may be approaching, or may already have passed, the point where ethical disputes become a serious threat to employee retention and public trust.

The Commercial Imperative vs. The Ethical Guardrail

Why do these deals persist despite known ethical friction? The answer lies in necessity and market dynamics. Developing frontier AI models—training massive LLMs or building next-generation robotics platforms—costs billions of dollars annually. Major technology leaders, including OpenAI, are engaged in a capital race that dwarfs previous tech booms.

Government contracts, especially those from defense and intelligence agencies, represent several things simultaneously:

- Massive, Stable Funding: They provide the deep, reliable revenue streams necessary to sustain research budgets that private consumer markets cannot always guarantee.

- Real-World Stress Testing: Defense environments are the ultimate proving grounds for robustness, reliability, and speed—qualities essential for general-purpose AI.

- Strategic Influence: By embedding their technology within national security infrastructure, firms stake a claim in future technological dominance.

However, this chase for capital creates an inherent misalignment. Policy analysts who study defense AI procurement often note that the speed of acquisition outpaces meaningful external ethical review. If a senior executive considered the internal deliberation on a major defense commitment rushed or inadequate, that suggests commercial pressure may have sidelined deeper ethical vetting. This is where governance bodies must step in, examining whether contracts are structured to allow adequate oversight before systems are deployed on missions.

The LAWS Debate: A Policy Challenge for the Next Decade

Kalinowski’s specific concern about "lethal autonomy" places this event directly into the global debate surrounding Lethal Autonomous Weapons Systems (LAWS). This technology tests the limits of international humanitarian law. Can a machine truly possess the human judgment required to distinguish between a combatant and a civilian? Can it adhere to the principles of proportionality and necessity?

Internationally, the Group of Governmental Experts (GGE) on LAWS, convened under the UN Convention on Certain Conventional Weapons (CCW), continues its discussions, which are often stalled by major military powers hesitant to accept restrictions on future capabilities. For AI developers, building the foundational technology for these systems means effectively designing the tools that will shape future conflicts. Their internal policy choices today, including whom they partner with and which capabilities they prioritize, directly inform the regulatory battles of tomorrow.

For a robotics chief, the move from software to physical action is the most consequential step. While an LLM can generate harmful disinformation, a faulty or biased autonomous robot can cause immediate, irreversible physical harm. The technical challenge of building reliable, robust physical AI is immense; the moral challenge of delegating life-and-death decisions to that AI is arguably greater.

The Future Landscape: Vertical Integration and Defense Tech Giants

Looking forward, this event exposes a critical tension in how the defense technology ecosystem is likely to be structured. For decades, defense tech was dominated by established aerospace and defense contractors. Now OpenAI, Google, Microsoft, and others are moving to integrate their cutting-edge foundational models into this space, creating a new class of competitor.

Companies like Anduril and Palantir, which focus heavily on AI integration for defense and intelligence, are succeeding precisely because they marry commercial speed with defense requirements. The established AI leaders are realizing they must participate to remain relevant in these high-stakes sectors, but they lack the established, decades-long governmental compliance cultures that traditional contractors possess.

This executive departure suggests that the cultural integration between the fast-moving, often activist culture of Silicon Valley and the risk-averse, highly regulated world of defense contracting will remain bumpy. Two outcomes seem plausible:

- The Bimodal Approach: Large AI labs will create highly segregated "Government/Defense" divisions with strict, walled-off ethical review boards, attempting to shield the main consumer/research arms from scrutiny.

- The Consolidation Path: Defense-oriented capabilities that sit uneasily with a lab's public commitments will be spun off or sold to specialist firms, allowing the core public-facing company to maintain a "cleaner" brand image while the defense work is handled by a specialized subsidiary or partner.

Actionable Insights for Stakeholders

This moment provides clear signals for different segments of the technology and policy world:

For AI Builders and Engineers:

Your voice matters more than ever. If you are building cutting-edge robotics or foundational models, understand that your ethical boundaries will be tested by future contracts. Study past episodes of internal dissent, such as the Project Maven protests at Google. Be prepared to articulate clear red lines on deployment, especially concerning LAWS, and understand the potential career impact of holding firm to those principles.

For Corporate Boards and Investors:

Ethical misalignment is now a tangible business risk. It leads to executive attrition, regulatory scrutiny, and negative public perception that can undermine brand value faster than any technological breakthrough can enhance it. Boards must mandate robust, independent ethics review processes for *all* high-risk applications, ensuring these processes have genuine veto power that is not easily overridden by short-term revenue targets.

For Policymakers and Regulators:

The pace of legislative action is lagging far behind technological deployment. The debate over LAWS cannot remain theoretical. Governments need to define clear, legally binding red lines on human control in lethal systems now. They must also demand transparency from AI providers about the nature of their defense contracts, to ensure taxpayer money is not underwriting systems that violate publicly accepted ethical norms.

Conclusion: The High Cost of Speed

The departure of OpenAI's head of hardware and robotics over a defense contract is a stark reminder that AI development is not merely an engineering challenge; it is a profound socio-political undertaking. The pursuit of general AI requires enormous capital, and for now, governments remain the most reliable source of that funding. Yet this funding comes with strings attached, and those strings tie innovation directly to the instruments of state power.

The future of responsible AI will not be determined solely by breakthroughs in transformer architecture or processing speed. It will be determined by the governance structures we build *around* those breakthroughs. Will we allow the gravitational pull of defense spending to drag the entire field toward applications that erode human oversight and dignity? Or will the ethical stands taken by individuals, even those at the highest levels, force a necessary deceleration and a commitment to building AI that serves humanity’s broader interests, not just its immediate security needs?

The answer to that question is currently being written in the internal memos, board meeting minutes, and the very personnel choices being made inside the world’s most advanced AI labs.