The Omni-Model Revolution: Decoding OpenAI's Next Leap in Unified AI

The artificial intelligence landscape is perpetually defined by paradigm shifts. We moved from specialized models to Large Language Models (LLMs), and then rapidly into multimodal systems that can interpret images alongside text. Now, whispers from within the AI community—specifically from OpenAI employees referencing a potential project codenamed "BiDi"—suggest the next seismic event is imminent: the birth of the Omni-Model.

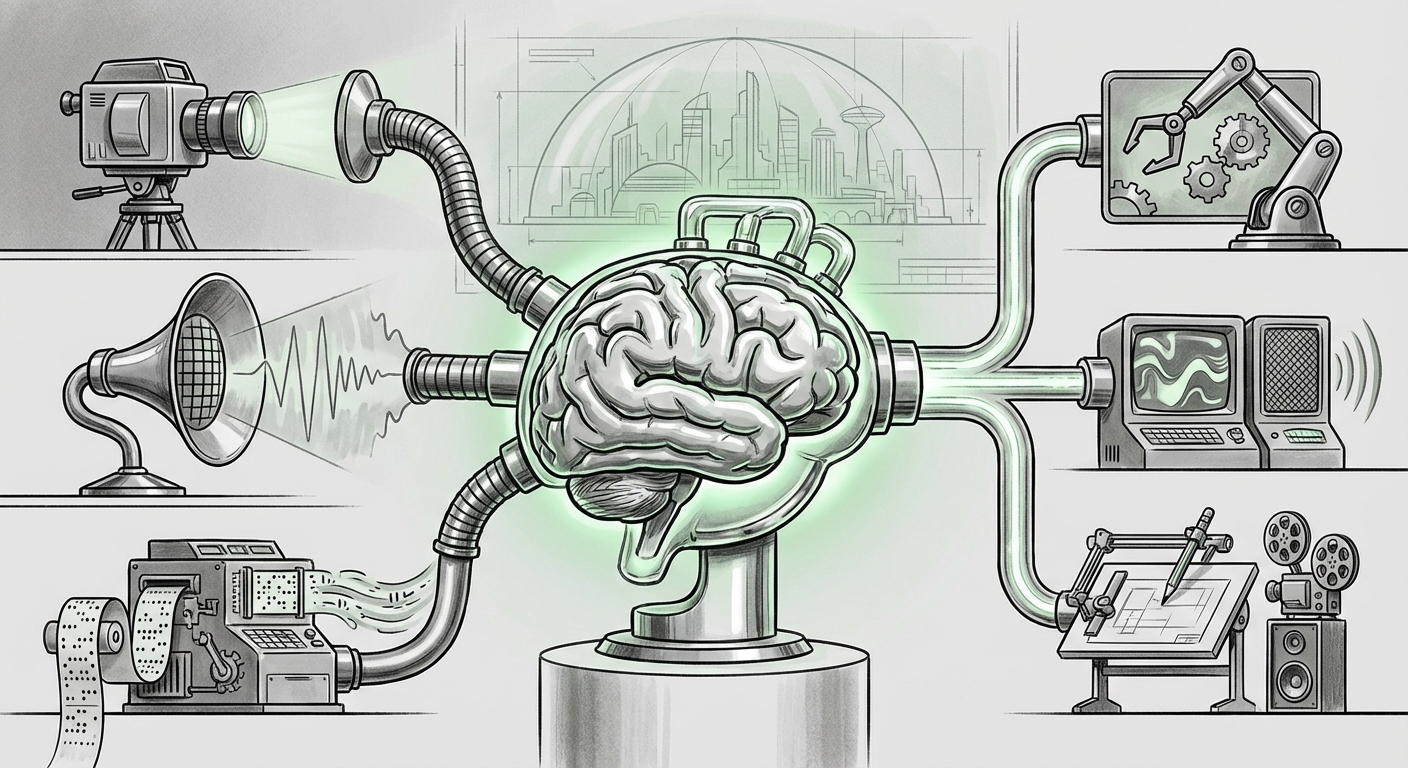

An omni-model is not just a slightly better multimodal system; it represents a fundamental architectural unification. It aims to ingest, reason over, and output information across *all* human communication channels (text, high-fidelity audio, video, and potentially even spatial data) using a single, coherent neural network. From an analyst's perspective, this rumored development, if it holds, would mark a significant step toward generalized intelligence, moving us closer to systems that understand the world as humans do.

I. Defining the Next Technical Bar: What is an Omni-Model?

To appreciate the significance of the omni-model, we must first understand the limitations of today’s best models. Current systems, while powerful, often rely on a "chain" of specialized components. When you speak to a voice assistant, one model handles Automatic Speech Recognition (ASR), converting audio to text; a second model (the LLM) processes the text; and a third Text-to-Speech (TTS) model generates the response audio. This pipeline introduces latency, error propagation, and, crucially, a loss of contextual nuance.
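To make the cost of that chain concrete, here is a minimal sketch of a three-stage cascade. The stage latencies and accuracy figures are illustrative assumptions, not measurements of any real system, and the model assumes stages run strictly in sequence with independent errors. Under those assumptions, latencies add up and per-stage accuracies multiply.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float  # hypothetical time this stage needs per turn
    accuracy: float    # hypothetical probability the stage output is correct


def cascade_cost(stages):
    """Return (total latency, end-to-end accuracy) for stages run in sequence.

    Assumes stages run one after another and that their errors are
    independent, which is a simplification.
    """
    total_latency = sum(s.latency_ms for s in stages)
    end_to_end = 1.0
    for s in stages:
        end_to_end *= s.accuracy
    return total_latency, end_to_end


# Illustrative numbers only.
pipeline = [
    Stage("asr", latency_ms=300.0, accuracy=0.95),
    Stage("llm", latency_ms=700.0, accuracy=0.90),
    Stage("tts", latency_ms=250.0, accuracy=0.98),
]

latency, accuracy = cascade_cost(pipeline)
print(f"latency: {latency:.0f} ms, end-to-end accuracy: {accuracy:.3f}")
```

Even with each stage performing well on its own, the combined figure is worse than any single stage. The sketch does not capture the third cost named above: tone and emphasis that are discarded when audio is flattened to text.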

The omni-model seeks to eliminate this chain. In line with the industry's broader push toward unified multimodal foundation models, the goal is a truly unified embedding space. Imagine an AI where the concept of the color red, the sound of a siren, and the word "danger" all exist as deeply connected vectors within the same conceptual map.
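A toy sketch can show what "connected vectors in the same map" means in practice. The three-dimensional vectors below are invented by hand for illustration; in a real system each would be produced by a learned encoder with far more dimensions. The point is only that items from different modalities can be compared directly once they share a space.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-written toy vectors standing in for encoder outputs.
# The values are invented purely to illustrate the geometry.
shared_space = {
    ("image", "red warning light"): [0.9, 0.8, 0.1],
    ("audio", "siren"): [0.8, 0.9, 0.2],
    ("text", "danger"): [0.85, 0.85, 0.15],
    ("text", "lullaby"): [0.1, 0.2, 0.9],
}


def nearest(query_key):
    """Return the other entries sorted by similarity to the query."""
    query = shared_space[query_key]
    others = [
        (key, cosine(query, vec))
        for key, vec in shared_space.items()
        if key != query_key
    ]
    return sorted(others, key=lambda kv: kv[1], reverse=True)


ranked = nearest(("text", "danger"))
for (modality, label), score in ranked:
    print(f"{modality:5s} {label:18s} {score:.3f}")
```

In this toy space the image and audio entries land close to the word "danger" while the unrelated "lullaby" entry ranks last, which is the behavior a unified embedding space is meant to produce.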

The Architectural Shift: Beyond Patchwork Integration

For developers and researchers, the technical challenge—and the value—lies in creating shared attention mechanisms that can simultaneously weigh spoken inflection against written instructions while observing a real-time video feed. This requires novel transformer architectures capable of massive input and output diversity without degrading performance in any single domain.
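As a rough illustration of what shared attention means, the sketch below runs standard scaled dot-product attention for one query over a single sequence that mixes text, audio, and video tokens. It is a simplified, assumption-laden toy (one query, two-dimensional invented vectors, no learned projections), not a description of any rumored OpenAI architecture.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, tokens):
    """Scaled dot-product attention of one query over a mixed-modality sequence.

    `tokens` is a list of (modality, key_vector, value_vector) tuples.
    Returns the attended output vector and the attention mass per modality.
    """
    dim = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
        for _, key, _ in tokens
    ]
    weights = softmax(scores)

    output = [0.0] * len(tokens[0][2])
    per_modality = {}
    for weight, (modality, _, value) in zip(weights, tokens):
        per_modality[modality] = per_modality.get(modality, 0.0) + weight
        for i, v in enumerate(value):
            output[i] += weight * v
    return output, per_modality


# Tokens from three modalities sit in ONE sequence, so a single attention
# pass weighs them against each other. All vectors are invented.
sequence = [
    ("text", [1.0, 0.0], [1.0, 0.0]),
    ("audio", [0.0, 1.0], [0.0, 1.0]),
    ("video", [1.0, 1.0], [0.5, 0.5]),
]
query = [2.0, 0.0]  # hypothetical query aligned with the first key dimension

output, mass = attend(query, sequence)
print(mass)
```

The design point is that the softmax normalizes across all modalities at once, so attention given to one channel is directly traded off against the others instead of being decided inside separate models.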

If OpenAI succeeds at this, it will have achieved an architectural breakthrough that moves beyond mere capability stacking (adding vision models to text models) toward genuine, holistic understanding. This capability will be essential for the next generation of AI assistants.

II. The Competitive Crucible: Pressure from Google and Meta

Innovation in AI is rarely isolated. Rumors surrounding OpenAI's advancements must be viewed through the lens of intense, multi-front competition. The pressure to deliver a superior, unified experience is what defines the current market battleground.

Google’s Native Multimodality

Google’s **Gemini** family has long been positioned as natively multimodal, meaning it was designed from the ground up to ingest different data types simultaneously, in contrast with earlier models where modalities were added later. Comparisons of Gemini 2.5's multimodality with OpenAI's offerings suggest that the race is now focused on two key vectors: **context window size** and **cross-modal reasoning depth**.

If OpenAI’s omni-model, possibly tied to the "BiDi" project, focuses heavily on real-time audio interaction, as the wider interest in end-to-end voice models implies, it suggests the company aims to leapfrog Google’s current fluency in complex, spontaneous conversation.

Meta’s Open-Source Challenge

Meanwhile, Meta is pushing the boundaries through open-source releases, notably with models like **SeamlessM4T**, which focuses on unifying translation and speech tasks across many languages. This strategy creates a broad base for community iteration.

This competitive environment dictates that OpenAI cannot afford incremental updates. The omni-model is positioned not just as an upgrade to GPT-5, but as a statement piece designed to reassert the lead by achieving a level of integration that rivals find difficult or costly to replicate quickly.

III. Practical Implications: From Assistants to Embodied Agents

The transition to an omni-model is the missing link for several high-stakes, real-world applications. For both business executives and technologists, understanding these implications is crucial for future planning.

A. The End of Latency in Conversation

The most immediate impact will be felt in human-computer interaction. Imagine a true digital partner that hears your tone of voice, sees your body language through a camera, and can respond instantly without sounding robotic or requiring you to stop speaking.

This is where the "BiDi" rumor gains traction. A bidirectional, unified audio system means the AI can interrupt you naturally, detect frustration in your tone, and provide assistance that is contextually aware in a way that typing commands never could be. For customer service, education, and accessibility tools, this represents a qualitative leap in user experience.
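The turn-taking behavior described above can be sketched as a tiny loop. This is a hypothetical illustration of interruption handling ("barge-in"), not a description of how any OpenAI system works: the function name, the chunked response, and the set of indices standing in for a voice-activity detector are all assumptions made for the example.

```python
def run_turn(response_chunks, user_speaking_at):
    """Play response chunks until the user starts talking.

    `response_chunks` is the list of audio chunks the assistant wants to play.
    `user_speaking_at` is a set of chunk indices at which a (hypothetical)
    voice-activity detector reports incoming user speech.
    Returns the chunks actually played and whether the turn was interrupted.
    """
    played = []
    for index, chunk in enumerate(response_chunks):
        # Full duplex: the input channel is checked on every output step,
        # rather than only after the assistant has finished speaking.
        if index in user_speaking_at:
            return played, True
        played.append(chunk)
    return played, False


reply = ["Sure,", "the", "reset", "steps", "are", "as", "follows"]

# The user cuts in while the fourth chunk is about to play.
played, interrupted = run_turn(reply, user_speaking_at={3})
print(played, interrupted)
```

A half-duplex pipeline would play all seven chunks before listening again. Checking the input on every step is what lets the system yield the floor mid-sentence.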

B. The Dawn of Truly Capable Embodied AI

A foundational realization within the field is that truly intelligent robotics—embodied agents operating in dynamic physical spaces—require perfect, real-time sensory fusion. You cannot pilot a robot by reading text descriptions of its environment.

For embodied agents, the omni-model would provide the necessary "brain." A robot equipped with such a model could look at a cluttered workbench (vision), hear a verbal instruction ("Find the wrench and hand it to me," audio), understand the implicit spatial relationship, and execute the task. This capability could drastically shorten the timeline for deploying sophisticated, general-purpose automation in factories, homes, and disaster zones.

C. Next-Generation Creative Workflows

Creative professionals will also see a paradigm shift. Instead of using separate tools for image generation, video editing, and scriptwriting, an omni-model allows for unified creative direction. A user could say, "Take this video clip, change the lighting to look like a moody film noir, replace the background music with 1940s jazz, and write a voiceover explaining the historical context." The model handles all modalities coherently, ensuring outputs match across visual, auditory, and textual elements.

IV. The Inevitable Shadow: Safety, Ethics, and Governance

With immense power comes unprecedented risk. The advancement toward fully integrated, highly realistic generative capabilities forces an urgent reckoning with safety protocols.

The Deepfake Dilemma Amplified

If an omni-model can perfectly generate convincing video, voice, and text simultaneously, the potential for misuse—from hyper-personalized social engineering attacks to undetectable political disinformation—skyrockets. Our current defenses, often relying on detecting inconsistencies between modalities (e.g., lip-syncing errors), may become obsolete overnight.

This concern is reflected in current industry discussions about the safety of unified multimodal AI models. Regulators and safety advocates are already pushing for robust watermarking and provenance tracking for all synthetic outputs.
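To give a sense of what provenance tracking involves at its simplest, the sketch below binds a content hash to a generator label and signs the pair. It is a minimal illustration under stated assumptions: the record fields, function names, and the shared-secret HMAC are choices made for the example, and production provenance schemes typically rely on public-key signatures and standardized manifests instead.

```python
import hashlib
import hmac
import json


def make_provenance_record(media_bytes, generator, secret_key):
    """Build a signed record binding a generator name to a media hash."""
    payload = {
        "sha256": hashlib.sha256(media_bytes).hexdigest(),
        "generator": generator,  # hypothetical label for the producing model
        "synthetic": True,
    }
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    signature = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_provenance(media_bytes, record, secret_key):
    """Check that the record is authentic and matches the media bytes."""
    message = json.dumps(record["payload"], sort_keys=True).encode("utf-8")
    expected = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, record["signature"]):
        return False
    return record["payload"]["sha256"] == hashlib.sha256(media_bytes).hexdigest()


key = b"demo-key-not-for-production"
clip = b"synthetic audio bytes"
record = make_provenance_record(clip, "example-omni-model", key)

print(verify_provenance(clip, record, key))
print(verify_provenance(clip + b"tampered", record, key))
```

A record like this only proves that a given file came from a cooperating generator. It does nothing for content produced by tools that skip the signing step, which is why watermarking embedded in the media itself is usually discussed alongside it.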

Alignment in a Complex World

Aligning a model with human values becomes considerably harder when the model is interacting with the world across more sensory channels. How do we ensure an embodied agent, relying on real-time visual and auditory input, adheres strictly to safety guardrails when perceiving complex, novel situations that were not explicitly covered in its training data?

For business leaders, this means that investing in the *deployment* of omni-models must be paired with significant investment in *governance and monitoring*. The compliance burden associated with deploying a system capable of generating rich, persuasive synthetic reality will be substantial.

V. Actionable Insights for the Road Ahead

The potential arrival of an omni-model demands proactive strategy rather than reactive adjustments. Here is what leaders and developers should focus on:

- Audit Current Workflows for Bottlenecks: Identify where your current system relies on stitching together separate AI services (ASR, vision API, LLM). These workflows are prime candidates for obsolescence or radical simplification once true omni-models are available.

- Prioritize Real-Time Benchmarks: Focus development and procurement on latency metrics, particularly concerning audio and video processing. If the omni-model delivers on the promise of unified, low-latency interaction, speed will become the new primary differentiator over raw conceptual knowledge.

- Establish Multimodal Safety Guardrails Now: Do not wait for the final product release. Start developing internal policies for detecting and mitigating synthetic media across all possible modalities. Understanding regulatory shifts (like the growing focus in the EU AI Act on high-risk systems) is paramount.

- Invest in Data Unification Skills: The teams that thrive will be those capable of curating and managing data sets where text, sound, and image sequences are perfectly synchronized and annotated. This shifts data strategy from managing separate silos to managing deeply integrated sensory streams.
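For the real-time benchmarking point above, a small harness is often enough to start. The sketch below times repeated calls to any function and reports median and 95th-percentile latency. The helper names are hypothetical, and the callable you pass in would be your own wrapper around whatever speech, vision, or language service you are evaluating.

```python
import statistics
import time


def measure_latency_ms(fn, runs=50):
    """Call `fn` repeatedly and return per-call wall-clock latencies in ms."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples):
    """Return median and 95th-percentile latency from a list of samples."""
    cuts = statistics.quantiles(samples, n=100)  # 99 cut points
    return {"p50": statistics.median(samples), "p95": cuts[94]}


# Placeholder workload; replace with a call into the system under test.
samples = measure_latency_ms(lambda: sum(range(10_000)), runs=50)
report = summarize(samples)
print(f"p50: {report['p50']:.3f} ms, p95: {report['p95']:.3f} ms")
```

Tracking the tail (p95) as well as the median matters for conversational systems, because occasional slow turns are what users notice as awkward pauses.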

The technology glimpsed through employee leaks and project code names like "BiDi" suggests that the industry is about to move from AI that *reads and writes* to AI that truly *sees, hears, and interacts* with the world in a single, integrated thought process. This is not just the next model iteration; it is the threshold of a new era in cognitive computing.