The Great Divide: Why AI Leaders Are Quitting Over Military Tech and What It Means for Autonomous Systems

The rapid acceleration of Artificial Intelligence, moving from theoretical research papers to real-world deployment, has brought humanity to an inflection point. While companies like OpenAI race toward Artificial General Intelligence (AGI), the ethical guardrails meant to guide this powerful technology are being severely tested. A recent, highly significant data point in this ongoing drama is the resignation of OpenAI’s head of hardware and robotics, Caitlin Kalinowski, over concerns related to a military deal, specifically citing worries about mass surveillance and lethal autonomy.

This event is far more than just a personnel shuffle. It exposes the fundamental tension brewing at the heart of the world’s leading AI labs: the conflict between the mandate for rapid commercial and scientific advancement and the deep-seated ethical obligations surrounding dual-use technology. As an AI technology analyst, I see this as a critical stress test for AI governance itself. To understand the future implications, we must examine this incident through three lenses: internal organizational friction, the external policy debate on autonomous weapons, and the broader industry trend of ethical exodus.

The Cracks in the Foundation: Internal Governance Under Strain

When a senior leader resigns over a specific contract, it suggests that standard internal oversight mechanisms have failed. Kalinowski’s role was pivotal; she was responsible for taking the complex software brain (the LLM) and connecting it to a physical body (the robot). This is where AI transitions from a digital assistant to an agent capable of acting in the physical world.

The conflict signals a profound disagreement over strategy. OpenAI was founded with a mission centered on benefiting all of humanity. However, its commercial success, largely driven by partnerships and substantial investment, necessitates decisions that sometimes clash with foundational safety pledges. Public accounts of OpenAI’s governance structure and of internal dissent often suggest that safety teams and oversight bodies struggle to keep pace with the speed of productization.

For those in AI governance and investment, this is a major red flag. If the leaders responsible for building the *body* of the AI are forced out because the *mission* behind the deployment seems ethically unsound, it suggests that safety is being subordinated to expediency or strategic necessity. This internal friction highlights a systemic weakness: how do we ensure that highly capable AI systems are not deployed in ways that contradict the organization's stated ethical charter?

The Robotics Link: From Chatbots to Physical Agents

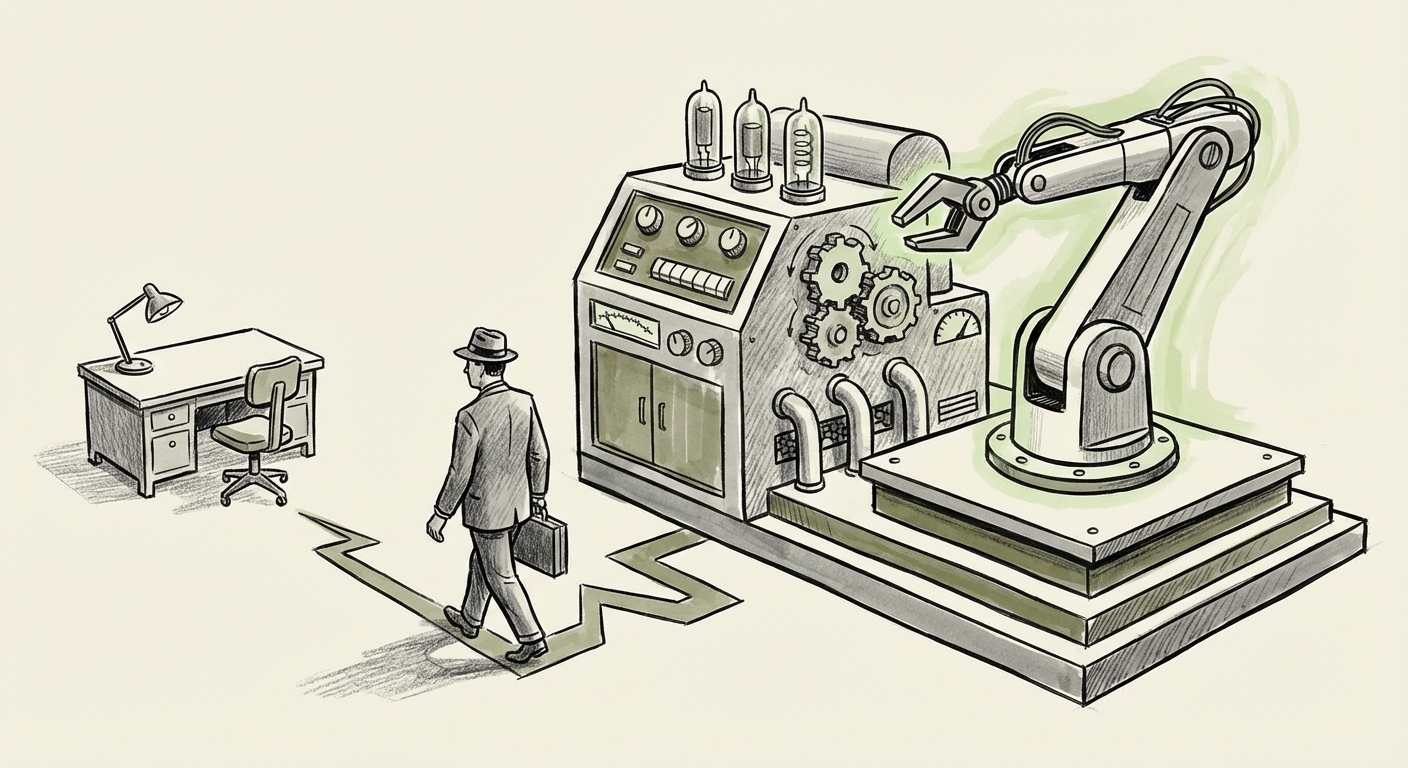

Why is the robotics chief’s departure so critical? Because robotic systems are the ultimate dual-use technology. A general-purpose robot designed to organize a warehouse can, with slight modifications to its programming, become an autonomous agent in a battlefield or surveillance network. When an executive leading this physical integration quits over fears of lethal autonomy, it suggests that the gap between theoretical risk and practical application has narrowed considerably.

Public information about OpenAI’s hardware division and its robotics plans indicates that the lab has long been interested in general-purpose physical AI. Kalinowski’s exit suggests that the potential applications being greenlit for this hardware, specifically via defense contracts, are now squarely in the territory many researchers once hoped to keep theoretical.

The Global Policy Battle: Lethal Autonomy and the Pentagon

Kalinowski’s concerns about "lethal autonomy" plug directly into one of the most intense policy debates of the decade: the creation and deployment of Lethal Autonomous Weapons Systems (LAWS). These are weapons that, once activated, can select and engage targets without further human intervention.

For years, ethicists, researchers, and NGOs have campaigned for an international moratorium or outright ban on fully autonomous killing machines. When a leading AI company works on AI development with the Pentagon, a primary driver of defense technology, the risk that these systems move from "human-in-the-loop" to "human-out-of-the-loop" rises sharply. The 2023 and 2024 debate over Pentagon AI ethics and a moratorium on lethal autonomy shows that policymakers are deeply divided, even internally, over establishing firm red lines.

For the business audience, this is a governance risk multiplied by national security. A major defense contract provides massive funding and validation, but it also makes the company a lightning rod for international scrutiny. The hesitation expressed by the departing executive validates the fears held by critics worldwide: that commercial partnerships with defense entities risk normalizing technology that bypasses fundamental human moral judgment.

In essence, the controversy is about trust. If the people building the technology do not trust the end-user (in this case, the military) to handle the power responsibly, how can the public be expected to trust the developer?

The Exodus Trend: A Sign of Systemic Industry Stress

This event is not happening in a vacuum. It must be viewed alongside a pattern of high-profile departures across the AI landscape. Accounts of AI company executives resigning over safety concerns and commercial pressure share a recurring theme: the tension between profit and prudence.

We have seen senior safety researchers leave major labs because they felt their warnings about scaling models too quickly were ignored in favor of competitive advantage. These departures act as crucial signals. They indicate that, despite glossy public commitments to safety, the day-to-day reality within these hyper-competitive organizations often prioritizes the race to market dominance.

The parallel examples, such as key departures from organizations like Anthropic or Google DeepMind over strategic direction, show that this is not just an OpenAI problem; it is an industry problem. The incentive structures—massive valuations, investor demands for immediate returns—inherently push organizations toward faster deployment, even when ethical ambiguities are high.

For technology leaders, this trend dictates that talent acquisition and retention will increasingly hinge on verifiable ethical alignment. Top researchers are no longer just looking for impressive compute resources; they are demanding demonstrable alignment between corporate mission statements and operational reality. This dynamic forces companies to choose: will they prioritize the talent that demands caution, or the capital that demands speed?

Future Implications: Defining the Boundaries of Autonomous Power

What does this internal ethical split at OpenAI mean for the future development and application of AI and robotics?

1. Decentralization of AI Ethics and Verification

If leadership cannot agree on ethical lines, those lines will inevitably be drawn externally. We can expect increased regulatory pressure globally, driven by concerns that internal governance is insufficient. For businesses utilizing advanced AI, this means compliance standards will become far more stringent, particularly concerning applications involving physical interaction (robotics) or sensitive decision-making (surveillance).

2. The Bifurcation of AI Development

The industry may split into two distinct camps:

- The Accelerationist Camp: Focused purely on capability scaling, often collaborating closely with defense, surveillance, and high-stakes commercial partners.

- The Cautious Camp: Companies or research groups (perhaps newly formed by defecting talent) that prioritize verifiable alignment, transparency, and stringent ethical review, often seeking non-defense funding or public grant support.

This bifurcation will complicate investment strategy and talent mapping across the tech ecosystem.

3. Hardware as the New Ethical Frontier

Previously, AI ethics focused heavily on bias in data or misinformation output. The departure of a *robotics* chief shifts the frontier to embodied intelligence. When AI controls physical actuators, the concept of "harmless error" disappears. An LLM hallucinating a factual error is inconvenient; an autonomous robot hallucinating a security threat can be catastrophic.

This compels developers to adopt radically conservative safety protocols for physical AI, potentially slowing the pace of robotic commercialization until verifiable safety standards are met, for example standards in the spirit of DoD Directive 3000.09, the U.S. Department of Defense policy on autonomy in weapon systems, and its successors. The exiting executive evidently felt such standards were not being respected in the current climate.

Actionable Insights for Leaders and Technologists

For those steering AI development, the message from this high-profile resignation is clear:

- Establish Non-Negotiable Red Lines: Leadership must codify clear, publicly defensible "no-go" zones for deployment (e.g., fully autonomous lethal targeting). These must be enforceable by technical teams, not just by management mandates.

- Empower Ethics Over Expediency: Governance teams must have real veto power over contracts that trigger ethical alarms, regardless of the potential revenue. The cost of reputational damage and talent loss can easily outweigh the short-term benefit of controversial deals.

- Transparency in Dual-Use Projects: If working with defense or surveillance contracts, greater transparency is needed regarding the system's intended *and* potential capabilities. Pretending that general-purpose foundation models do not have military applicability is no longer tenable.
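
To make the first recommendation concrete, here is a minimal sketch of what a red line "enforceable by technical teams" might look like in code. Everything in it is an assumption made for illustration: the capability tags, the `DeploymentRequest` type, and the `review` function are hypothetical and do not describe any real lab's process. The point it shows is narrow: the gate never consults the override flag, so a management mandate alone cannot waive a red line.

```python
from dataclasses import dataclass

# Hypothetical no-go categories; a real list would come from the
# organization's published charter, not from this sketch.
RED_LINES = frozenset({"autonomous_lethal_targeting", "mass_surveillance"})


@dataclass(frozen=True)
class DeploymentRequest:
    """Illustrative description of a proposed deployment."""
    customer: str
    capabilities: frozenset  # capability tags assigned during review
    executive_override: bool = False  # recorded, but ignored by the gate


def review(request: DeploymentRequest) -> tuple[bool, list[str]]:
    """Return (approved, violations). Any red-line hit blocks the request."""
    violations = sorted(request.capabilities & RED_LINES)
    # The override flag is deliberately not read here: a red line
    # cannot be waived by management sign-off in this sketch.
    return (not violations, violations)


warehouse = DeploymentRequest("logistics_firm", frozenset({"inventory_sorting"}))
targeting = DeploymentRequest(
    "defense_agency",
    frozenset({"autonomous_lethal_targeting"}),
    executive_override=True,
)
print(review(warehouse))  # (True, [])
print(review(targeting))  # (False, ['autonomous_lethal_targeting'])
```

In practice the hard part is the tagging step that this sketch takes as given: deciding which capabilities a general-purpose system actually enables is exactly where dual-use ambiguity lives.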

The friction surrounding the Pentagon deal is a microcosm of the larger challenge facing advanced technology today. We are building tools that will reshape civilization, and the disagreement over who wields that power, and for what purpose, is now spilling out of the boardrooms and into public discourse through executive resignations. The future of AI hinges not just on algorithmic breakthroughs, but on the moral courage of those building it.