The Omni AI Revolution: Decoding OpenAI's "BiDi" Model and the Dawn of Truly Multimodal Intelligence

The AI landscape is defined by seismic shifts, and the most recent tremors emanating from OpenAI suggest we are on the precipice of the next great leap. Whispers, fueled by employee hints and internal project leaks concerning a new "omni model," potentially dubbed "BiDi" (Bidirectional), signal a move away from discrete, specialized AI tools toward a single, unified intelligence.

To the casual observer, this might sound like a minor upgrade, just another version release. But for those tracking the core trajectory of artificial intelligence, this development represents a pivotal moment: the realization of natively multimodal systems. This isn't just about having a text model *talk* to an image model; it’s about creating a single brain that processes sight, sound, language, and action simultaneously, mirroring how human intelligence functions. This evolution is tightly coupled with the most ambitious goal in the field: Artificial General Intelligence (AGI).

From Specialized Tools to Unified Perception: What is an Omni Model?

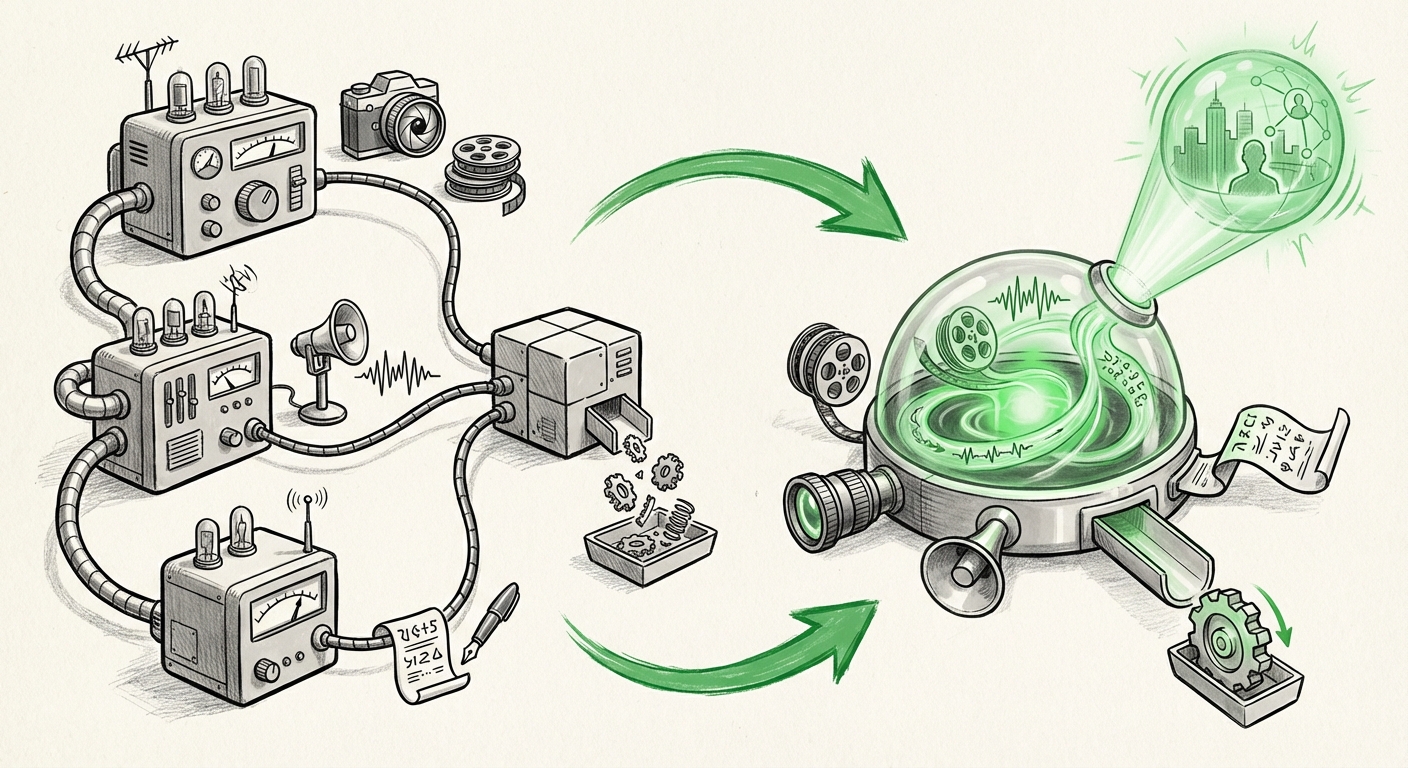

Currently, leading AI systems often operate like highly skilled specialists. We have large language models (LLMs) for text (like GPT-4), dedicated diffusion models for image generation (like DALL-E 3), and separate systems for video creation (like Sora). While these can be chained together (a process often called "pipelining," or "tool use" when one model invokes another), they rely on translation layers between different data formats (modalities).

The rumored "omni model," or "BiDi," suggests a radical departure. Imagine an architecture where text tokens, image pixels, and audio waveforms are mapped directly into a shared representation space. This is the essence of native multimodality. The "BiDi" (Bidirectional) name, if accurate, implies that the model could reason backward and forward across these inputs, accepting any modality and producing any modality. Shown a video of a complex machine breaking down, such a model might draft the relevant section of the technical manual (text output), sketch the faulty component (image output), and verbally explain the repair procedure (audio output), all grounded in a single understanding of the initial visual context.
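The idea of a shared space can be made concrete with a toy sketch. Nothing here reflects OpenAI's actual design, which is not public: the feature sizes, the `SHARED_DIM` constant, and the random projection matrices are all illustrative assumptions standing in for learned encoders. The point is only that inputs of different shapes end up as same-sized vectors in one sequence.

```python
import random

SHARED_DIM = 4  # assumed size of the shared embedding space (illustrative)


def make_projection(in_dim, out_dim, seed):
    """Random stand-in for a learned projection matrix of shape out_dim x in_dim."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(in_dim)] for _ in range(out_dim)]


def project(matrix, features):
    """Multiply a projection matrix by one feature vector."""
    return [sum(w * x for w, x in zip(row, features)) for row in matrix]


# Hypothetical per-modality feature sizes; real encoders would be far larger.
PROJECTIONS = {
    "text": make_projection(3, SHARED_DIM, seed=0),
    "image": make_projection(6, SHARED_DIM, seed=1),
    "audio": make_projection(2, SHARED_DIM, seed=2),
}


def to_shared_sequence(items):
    """Map (modality, features) pairs to one sequence of shared-space vectors."""
    return [
        (modality, project(PROJECTIONS[modality], features))
        for modality, features in items
    ]


sequence = to_shared_sequence([
    ("text", [0.1, 0.2, 0.3]),
    ("image", [0.5] * 6),
    ("audio", [0.9, -0.4]),
])

for modality, vector in sequence:
    print(modality, len(vector))
```

Once every input is a vector of the same width, a single backbone can attend over the whole sequence without a translation step between modalities.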

Broader industry efforts point in the same direction. While OpenAI is rumored to be building "BiDi," other major players are tackling similar challenges. The shared pursuit of a unified foundation model suggests a growing view among top labs that modular approaches limit complex reasoning across modalities.

Corroborating the Technical Shift: Architectural Necessity

For an omni model to work, massive architectural innovation is required. We are looking for evidence that researchers are solving the challenges of unified tokenization and shared latent spaces.

- Unified Tokenization: How do you turn a sound wave, a block of text, and a frame of video into a single, comparable 'language' for the neural network? Research in this area points toward methods that encode diverse sensory data into a common representational format, allowing the Transformer backbone to perform generalized reasoning across everything it "sees" or "hears."

- Bidirectionality Explained: In decoder-only language models, information flows one way: causal attention lets each token see only what came before it. Bidirectional processing, as the rumored name suggests, would mean the model continuously updates its understanding based on context from all inputs simultaneously. This depth of cross-referencing is crucial for tasks requiring contextual reasoning, like understanding sarcasm in dialogue paired with subtle facial expressions.
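The contrast between one-way and bidirectional context can be illustrated with attention masks. This is a minimal sketch of one possible reading of "bidirectional" (full attention versus causal attention). Whether the rumored model works this way is unknown, and the name could just as well refer to something else, such as two-way streaming. The token strings and helper names below are invented for illustration.

```python
def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j (only j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def bidirectional_mask(n):
    """Every position may attend to every other position."""
    return [[True] * n for _ in range(n)]


def visible_context(tokens, mask, position):
    """Return the tokens that the given position is allowed to attend to."""
    return [tok for tok, allowed in zip(tokens, mask[position]) if allowed]


# Hypothetical interleaved sequence: spoken words plus audio and image cues.
tokens = ["<audio:sigh>", "great", "job", "<image:eye-roll>"]
causal = causal_mask(len(tokens))
full = bidirectional_mask(len(tokens))

# Under a causal mask, "great" cannot see the later eye-roll.
print(visible_context(tokens, causal, 1))
# Under a bidirectional mask, it sees the whole sequence.
print(visible_context(tokens, full, 1))
```

In the sarcasm example, the word "great" only reads as ironic once the later visual cue is visible, which is the kind of cross-referencing a full mask permits.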

This focus on deep architectural synthesis is what distinguishes the omni model ambition from iterative releases of existing models.

The Competitive Pulse: Why Now?

Major AI leaps rarely happen in isolation. The pressure cooker of competition is forcing OpenAI’s hand and providing context for the timing of the rumored "BiDi" release.

The development of Google’s Gemini models, which explicitly aimed for native multimodality from the start, set a high bar. Likewise, Anthropic’s ongoing focus on highly contextual and safe reasoning continues to push capability boundaries. A common view in the industry is that the next generation of frontier models will be judged on how well it handles diverse inputs.

When competitors like Google DeepMind publish insights on fusing different data streams (as seen in their progression toward Gemini), it validates the architectural path OpenAI appears to be following. The race is now about *efficiency* and *coherence* within that unified model. If OpenAI achieves a breakthrough that results in a single, coherent model far surpassing the performance of competitors’ stacked solutions, the competitive advantage will be enormous. This competitive dynamic suggests that if the "BiDi" project is real, its target release window is likely being dictated by benchmarks set by rivals.

Defining the Future: Capabilities That Change Everything

The transition to an omni model fundamentally changes the *utility* of AI. We move from models that *describe* the world to models that truly *understand* it across sensory domains.

Consider the implications for video understanding, a key area where current models often stumble:

- Long-Context, High-Fidelity Reasoning: Imagine uploading an hour-long security video. A current system struggles to summarize it reliably. An omni model could identify the exact moment a specific object appeared, cross-reference that moment with audio logs, and generate a detailed technical report on the interaction, all without losing temporal context.

- Interactive Simulation: The "BiDi" architecture could enable complex, real-time simulations. Developers could prompt the system: "Create a virtual testing environment where this new bridge design experiences a Category 5 hurricane, and report the structural stress points in real-time graphs." The model would be handling physics, visual rendering, and data analysis simultaneously.

- Robotics and Embodiment: This is perhaps the most profound application. For an AI to effectively control a physical robot, it must process visual input, auditory cues (a warning siren), tactile feedback, and linguistic instructions *simultaneously*. An omni model would provide the cognitive substrate necessary for robust, real-world interaction, potentially accelerating the timeline for practical, general-purpose robotics.

Implications for Business and Society: The Infrastructure Overhaul

The arrival of a successful omni model—whether GPT-5 or whatever comes next—is not just a product launch; it’s an infrastructural moment equivalent to the standardization of the internet protocol.

For Software Developers and Product Managers

The current development cycle often involves stitching together multiple vendor APIs: one for text, one for vision, one for embeddings. An omni model simplifies the stack dramatically. Developers will no longer need to manage complex data routing and translation layers between specialized models.

Actionable Insight: Organizations should begin auditing their AI integration pipelines now. Focus efforts on standardizing data ingestion formats (even if they are currently separate) to prepare for a future where data types are seamlessly merged by the backend infrastructure. Furthermore, look at current workflow bottlenecks that require human review between AI steps; these are the first areas where an omni model is likely to offer large productivity gains.
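One way to approach the standardization step is a single envelope type that every input passes through, regardless of modality. The sketch below is an assumption about what such an envelope might contain, not a format any vendor requires; `MediaRecord`, its fields, and the helper functions are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

ALLOWED_MODALITIES = {"text", "image", "audio", "video"}


@dataclass(frozen=True)
class MediaRecord:
    """Hypothetical common envelope for any input, whatever its modality."""
    source_id: str
    modality: str
    payload: bytes
    mime_type: str
    captured_at: datetime
    metadata: dict = field(default_factory=dict)

    def __post_init__(self):
        # Reject records that would be ambiguous once merged with other streams.
        if self.modality not in ALLOWED_MODALITIES:
            raise ValueError(f"unknown modality: {self.modality}")
        if self.captured_at.tzinfo is None:
            raise ValueError("captured_at must be timezone-aware")


def from_text(source_id, text, captured_at):
    """Wrap a plain string as a UTF-8 text record."""
    return MediaRecord(source_id, "text", text.encode("utf-8"),
                       "text/plain; charset=utf-8", captured_at)


def from_file_bytes(source_id, modality, data, mime_type, captured_at):
    """Wrap raw bytes (image, audio, video) without reinterpreting them."""
    return MediaRecord(source_id, modality, data, mime_type, captured_at)


now = datetime(2024, 1, 1, tzinfo=timezone.utc)
note = from_text("ticket-17", "Pump 3 rattles", now)
frame = from_file_bytes("cam-2", "image", b"\x89PNG", "image/png", now)

# A single ordering key works across modalities because the envelope is shared.
batch = sorted([note, frame], key=lambda r: (r.captured_at, r.source_id))
print([r.source_id for r in batch])
```

Requiring timezone-aware timestamps up front is a deliberate choice in this sketch: aligning audio, video, and text later depends on comparable clocks.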

For Enterprise Strategy and Automation

The business impact centers on the automation of complex cognitive tasks that require sensory integration—tasks previously considered safe from automation.

- Manufacturing and Quality Control: Real-time defect analysis using high-speed video, sound analysis (listening for anomalous machine noise), and operator communication (reading maintenance logs) becomes instantly unified.

- Media and Content Creation: True end-to-end content generation—from screenplay and storyboarding to final rendered video—becomes accessible through simple natural language command, drastically lowering barriers to high-fidelity production.

- Scientific Discovery: Researchers can feed raw experimental data—microscope imagery, sensor readings, handwritten lab notes—into one system for hypothesis generation and experimental design review.

If this unified architecture proves significantly more capable, the "AGI timeline shift" anticipated by industry speculation could arrive sooner than expected. Capabilities that previously seemed five years out might arrive in 18 months.

Navigating the Shift: Risks and Responsibility

With increased capability comes increased complexity and risk. A truly bidirectional, unified intelligence poses unique governance challenges.

If the model fails, the cascading error could be more severe. A failure in a text-only model might lead to a bad summary; a failure in a unified model controlling a physical process could lead to unforeseen real-world consequences. Furthermore, auditing for bias becomes considerably harder when the input modalities are so interwoven.

Policy and Safety Actionable Insight: The industry cannot wait for the product launch. Development must be matched by parallel investment in interpretability tools capable of dissecting decisions made across the unified latent space. We must demand transparency regarding how "BiDi" handles conflicting information across different sensory streams (e.g., if audio contradicts video).

Conclusion: The Integrated Mind is Arriving

The rumors surrounding OpenAI’s "omni model" or "BiDi" are not just exciting industry chatter; they represent the expected convergence point of current AI research. The move toward native multimodality is among the most significant architectural challenges facing AI labs today, and arguably the clearest path toward systems capable of generalized reasoning.

The implications are staggering. For technology strategists, this means preparing for a world where software development is simpler but the underlying systems are vastly more powerful and interconnected. For society, it accelerates the AGI discussion by providing the cognitive scaffolding—the ability to perceive and reason across sensory reality—that truly intelligent agents require. Whether the next model is called GPT-5 or BiDi, the era of specialized AI is ending, and the era of the integrated mind is dawning.