The End of Text: Why Unlabeled Video is the Next Multi-Trillion-Parameter Training Frontier

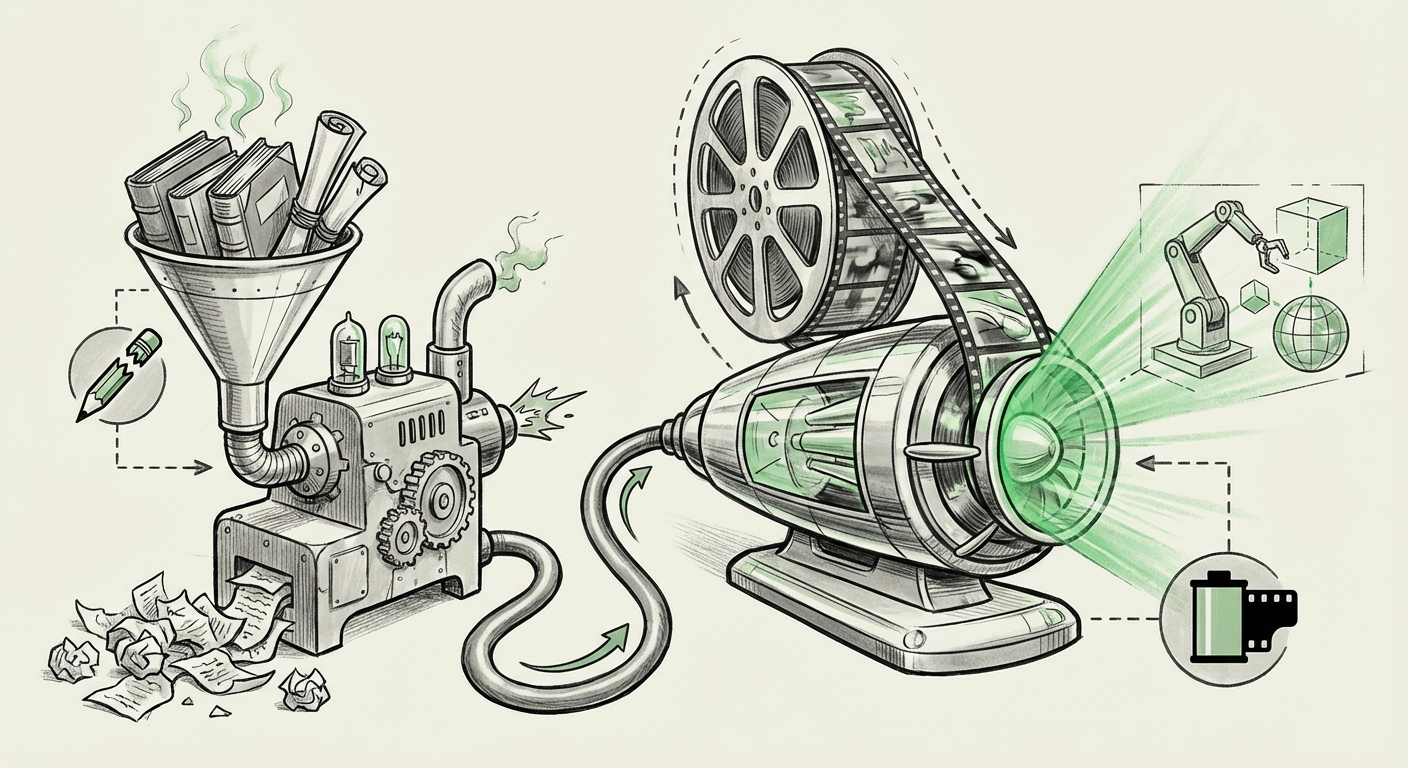

For the last decade, the fuel for the AI revolution has been text. From GPT-3 to the latest iterations of large language models (LLMs), the sheer volume of human-generated writing scraped from the public internet has driven unprecedented leaps in capability. However, a critical and often underestimated problem is surfacing: the digital library is finite.

New research, notably from Meta FAIR and NYU, is signaling a clear inflection point. The assumption that we can simply scale up current LLM architectures using more text is hitting a wall. The quality of available text is diminishing, and the quantity is running out. This bottleneck forces us to look elsewhere for the raw material required to build truly general, powerful AI systems. The answer, according to leading labs, lies in the most abundant and information-dense medium available: unlabeled video.

The Hard Stop: Text Data Scarcity

To understand the urgency of this pivot, we must first appreciate the scale of the text data crisis. Training massive models requires trillions of tokens—words or sub-words. While the internet seems infinite, the volume of *high-quality, novel, and publicly accessible* text is not.

Current models have already ingested the majority of quality text available online. Analyses of AI data scarcity and web-crawl exhaustion suggest that the remaining data is often repetitive, low-quality, or locked behind paywalls. If data quantity is crucial for scaling, and quality is essential for avoiding model degradation (sometimes called "model collapse"), relying solely on text is no longer sustainable for exponential progress.

For business leaders, this means that the competitive advantage previously gained by simply having the largest text corpus is diminishing. The focus must shift from *what* data you have to *what kind* of data you can process.

The Economic Reality for AI Infrastructure

The economic model of LLM training is predicated on finding cheaper, richer data sources. Text data, while relatively cheap to store, is becoming expensive to curate effectively. The search for the next "data moat" is on, and it’s not going to be found in another sweep of Wikipedia.

The New Frontier: Unlabeled Video as the Ultimate Corpus

Video is the next logical—and necessary—leap. Why? Because video is essentially compressed reality.

When an AI model trains on text, it learns relationships between symbols (words). When it trains on video, even unlabeled video, it can learn physics, causality, three-dimensional space, object permanence, and the relationship between actions and outcomes. Measured in raw data, a 10-second video clip carries as much as thousands of paragraphs of text, although how much of that is usable depends on whether the model has the architecture to interpret it.
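To put a rough number on that comparison, the sketch below counts uncompressed bytes for a 10-second clip and expresses the result in paragraph-sized units. The frame rate, resolution, and paragraph size are assumptions chosen for illustration. Raw bytes are only an upper bound on volume, not a measure of information: adjacent frames are highly redundant, so the useful content is far smaller.

```python
# Back-of-the-envelope comparison of raw (uncompressed) data volume.
# Every constant here is an illustrative assumption, not a measurement.

FPS = 30                    # frames per second (assumed)
SECONDS = 10                # clip length used in the text
WIDTH, HEIGHT = 224, 224    # a small training crop (assumed)
CHANNELS = 3                # RGB, one byte per channel

BYTES_PER_PARAGRAPH = 600   # roughly 100 words of plain ASCII text (assumed)


def raw_clip_bytes(seconds=SECONDS, fps=FPS, width=WIDTH,
                   height=HEIGHT, channels=CHANNELS):
    """Uncompressed size of a clip in bytes."""
    return seconds * fps * width * height * channels


clip_bytes = raw_clip_bytes()
paragraph_equivalents = clip_bytes // BYTES_PER_PARAGRAPH

print(f"raw clip size:         {clip_bytes:,} bytes")
print(f"paragraph equivalents: {paragraph_equivalents:,}")
```

Under these assumptions the raw count lands in the tens of thousands of paragraphs. Once temporal redundancy is discounted, "thousands" is a plausible order of magnitude rather than a precise figure.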

This is the central finding highlighted by Meta’s research team: multimodal models trained from scratch on diverse, unlabeled video streams can absorb world knowledge more efficiently than text-only models. This is consistent with an industry-wide trend, visible in Google DeepMind’s approach to training **Gemini**, toward building native multimodality in from the ground up rather than bolting vision onto a language base later.

The Self-Supervised Revolution

The critical enabler here is self-supervised learning (SSL). Because annotating video content at scale is impractical (who can label every frame of every YouTube video for causality?), models must learn without human-provided labels.

As research on self-supervised learning for video has shown, models learn by prediction. They are shown the first part of a video clip and asked to predict the next segment, or they are asked to reconstruct a masked-out section. By constantly trying to predict the next moment in time or the unseen visual features, the model builds an internal "world model."
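The two objectives described above can be sketched with a toy example. The "video" is a single bright pixel falling down a one-pixel-wide column, and the two predictors are hand-written baselines rather than learned models. All function names are hypothetical. The point is only that the training targets come from the clip itself, and that a predictor which accounts for motion scores a lower loss than one which assumes a static scene.

```python
# Toy illustration of self-supervised objectives on an unlabeled "video".
# A frame is a flat list of pixel intensities; a clip is a list of frames.
# The data and both predictors are hypothetical stand-ins, not a real model.

def make_falling_dot_clip(num_frames=6, height=8):
    """One bright pixel moving down a column, one row per frame."""
    clip = []
    for t in range(num_frames):
        frame = [0.0] * height
        frame[min(t, height - 1)] = 1.0
        clip.append(frame)
    return clip


def next_frame_pairs(clip, context=2):
    """Slice a clip into (past frames, future frame) pairs. No labels needed."""
    return [(clip[i:i + context], clip[i + context])
            for i in range(len(clip) - context)]


def mask_frame(frame, start, length):
    """Hide a span of pixels; the untouched frame is the reconstruction target."""
    masked = list(frame)
    for i in range(start, min(start + length, len(frame))):
        masked[i] = None
    return masked


def mse(pred, target):
    """Mean squared error between two frames."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)


def copy_last_frame(past):
    """Baseline predictor: assume nothing moves."""
    return list(past[-1])


def extrapolate_motion(past):
    """Baseline predictor: estimate velocity from the last two frames."""
    prev_pos = past[-2].index(max(past[-2]))
    last_pos = past[-1].index(max(past[-1]))
    velocity = last_pos - prev_pos
    pred = [0.0] * len(past[-1])
    new_pos = max(0, min(len(pred) - 1, last_pos + velocity))
    pred[new_pos] = 1.0
    return pred


clip = make_falling_dot_clip()
pairs = next_frame_pairs(clip, context=2)

static_loss = sum(mse(copy_last_frame(p), t) for p, t in pairs) / len(pairs)
motion_loss = sum(mse(extrapolate_motion(p), t) for p, t in pairs) / len(pairs)
masked_example = mask_frame(clip[0], start=2, length=3)

print("static baseline loss:", static_loss)
print("motion baseline loss:", motion_loss)
```

In a real system the hand-written predictor would be replaced by a neural network trained to minimize this kind of loss over very large numbers of clips. The sketch only shows where the supervision signal comes from.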

This process is profoundly powerful. It allows AI to grasp fundamental concepts like gravity, friction, and object interaction simply by observing the world move, without ever being told explicitly, "that is a ball falling due to gravity."

The Technical Hurdles: Scaling to the Third Dimension

Moving from static text or images into dynamic video is not just about adding more data; it’s about fundamentally changing the mathematics of the model. This introduces immense technical complexity, but also massive opportunities for architectural innovation.

Computational Cost and Architecture

Text data is one-dimensional (a sequence of tokens). Images are two-dimensional. Video is three-dimensional (width, height, and time). To process this third dimension, standard 2D convolutions, or attention applied to each frame in isolation, are insufficient. Models must incorporate temporal awareness.
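The jump in dimensionality shows up directly in sequence length. The sketch below counts tokens for a text context, a single image, and a short clip, then counts the token pairs that full self-attention would compare. The context length, patch size, and the choice to group two frames per token are all illustrative assumptions.

```python
# Rough sequence-length arithmetic for attention over text, images, and video.
# Context length, patch size, and tubelet depth are illustrative assumptions.

def image_tokens(height, width, patch):
    """Number of non-overlapping patch x patch tiles in one frame."""
    return (height // patch) * (width // patch)


def video_tokens(frames, height, width, patch, tubelet):
    """Number of space-time blocks, each spanning `tubelet` consecutive frames."""
    return (frames // tubelet) * image_tokens(height, width, patch)


def attention_pairs(n_tokens):
    """Full self-attention compares every token with every token."""
    return n_tokens * n_tokens


text_len = 2048                                    # assumed text context
image_len = image_tokens(224, 224, patch=16)       # 14 x 14 grid
video_len = video_tokens(frames=300, height=224, width=224,
                         patch=16, tubelet=2)      # 10 s at 30 fps

for name, n in [("text", text_len), ("image", image_len), ("video", video_len)]:
    print(f"{name:>5}: {n:>6,} tokens, {attention_pairs(n):>12,} attention pairs")
```

Even at this low resolution, the clip yields a sequence more than ten times longer than the assumed text context. Because attention cost grows with the square of sequence length, the number of pairs grows by roughly two hundred times.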

This requires specialized architectures, often leveraging 3D convolutions or temporal attention mechanisms that relate each frame to the frames around it. As noted in analyses discussing the implications of models like OpenAI's **Sora**, video models could come to exceed LLMs in parameter count and compute requirements because they must maintain coherence across extended sequences of time.
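One family of temporal attention mechanisms reduces cost by factorizing attention: tokens first attend within their own time step, then each spatial location attends across time. The sketch below compares the number of token pairs under joint and factorized attention. The grid sizes are assumptions chosen for illustration, and nothing here describes how Sora or any other specific model is built.

```python
# Attention cost for a video token grid: joint vs. factorized space-time attention.
# T and S are illustrative assumptions, not figures from any specific model.

T = 150  # temporal positions (e.g. a 10 s clip at 30 fps, two frames per token)
S = 196  # spatial patches per temporal position (e.g. a 14 x 14 grid)


def joint_pairs(t, s):
    """Every token attends to every token across both space and time."""
    return (t * s) ** 2


def factorized_pairs(t, s):
    """Spatial attention within each time step, then temporal attention per patch."""
    spatial = t * s * s     # t independent s-by-s attention maps
    temporal = s * t * t    # s independent t-by-t attention maps
    return spatial + temporal


joint = joint_pairs(T, S)
factorized = factorized_pairs(T, S)

print(f"joint:      {joint:,} pairs")
print(f"factorized: {factorized:,} pairs")
print(f"reduction:  {joint / factorized:.1f}x")
```

The trade-off is that factorized attention cannot directly relate a patch at one time step to a different patch at another. This is one reason the efficient handling of temporal relationships remains an open design question.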

For technical teams, this means the next hardware generation needs not just more memory, but smarter ways to handle sequential, dense data streams. We are entering an era where efficient management of temporal relationships will be the key differentiator, not just parameter count.

The Unlabeled Data Dilemma: Noise vs. Signal

While video is abundant, it is also incredibly noisy. A typical video contains irrelevant information, poor lighting, camera shake, and abstract content. Training effectively on this requires models robust enough to filter out the noise and latch onto the underlying structural patterns. Meta's research suggests that when models are trained correctly from the start (i.e., natively multimodal), they become surprisingly adept at distinguishing signal from static.

Implications for the Future of AI Capabilities

This pivot from text-centric LLMs to video-centric multimodal models fundamentally changes what AI will be capable of delivering.

1. True Embodiment and Robotics

The primary limitation of current LLMs is their lack of physical understanding. You can tell an LLM how to bake a cake, but it doesn't inherently understand the viscosity of batter or the risk of burning sugar. Models trained on video, which captures interactions with the physical world, gain an implicit "body schema."

Actionable Insight: Expect the next generation of foundation models to be the backbone of advanced robotics and embodied AI. They will be able to perform complex, real-world tasks requiring physical dexterity and environmental reasoning, moving AI from a conversational partner to a physical agent.

2. Advanced Simulation and World Modeling

If an AI can learn the physics of the world from video observation, it can then be used to simulate new physics or test hypotheses within its learned "world model." This has profound implications for scientific discovery, engineering, and drug development.

For instance, an AI trained on video of chemical reactions or material stress tests could, in principle, run millions of simulated variations far faster than physical laboratories can run experiments. If the simulations prove reliable, this would accelerate the discovery cycle across the hard sciences.

3. Enhanced Generative Capabilities

The ability to generate realistic, temporally coherent video is already a reality (e.g., Sora). However, when this video generation capability is grounded in a deep, self-supervised understanding of physics, the results become not just aesthetically pleasing, but physically plausible. Future multimodal models will generate complex simulations, interactive 3D environments, and highly realistic digital assets.

Business Strategy in the Video-Centric Era

For organizations heavily invested in traditional NLP or image generation, this signals an urgent need for strategic realignment:

- Data Diversification is Non-Negotiable: Stop viewing video, audio, and sensor data as secondary inputs. They must be integrated into the core training pipeline. Businesses sitting on massive archives of operational video data (security footage, industrial processes, user interaction recordings) now possess latent, high-value training assets.

- Talent Acquisition Shift: The demand is rapidly moving from pure NLP experts to those skilled in spatio-temporal modeling, 3D vision, and high-throughput streaming data processing.

- Shifting Compute Focus: Infrastructure investments must prioritize memory bandwidth and parallel processing capabilities suited for sequential data, rather than just massive matrix multiplication for transformer layers optimized for text sequences.

Conclusion: Beyond Language to Embodied Intelligence

The exhaustion of high-quality text data is not a failure of the LLM paradigm; it is a sign of its natural progression. Meta’s findings support what many in the research community suspected: language alone is an insufficient basis for Artificial General Intelligence (AGI).

The next great leap in AI capability will not come from finding more clever ways to summarize the internet. It will come from finding better, more efficient ways to teach machines how to see, interact with, and understand the dynamic, messy reality captured in unlabeled video. This shift promises AI systems that are not just smarter communicators, but deeply integrated understanders of the physical world—a transition from the age of the LLM to the age of the World Model.