The AI Financial Reckoning: Why Millions Using Chatbots for Retirement Advice Is a Tech Tipping Point

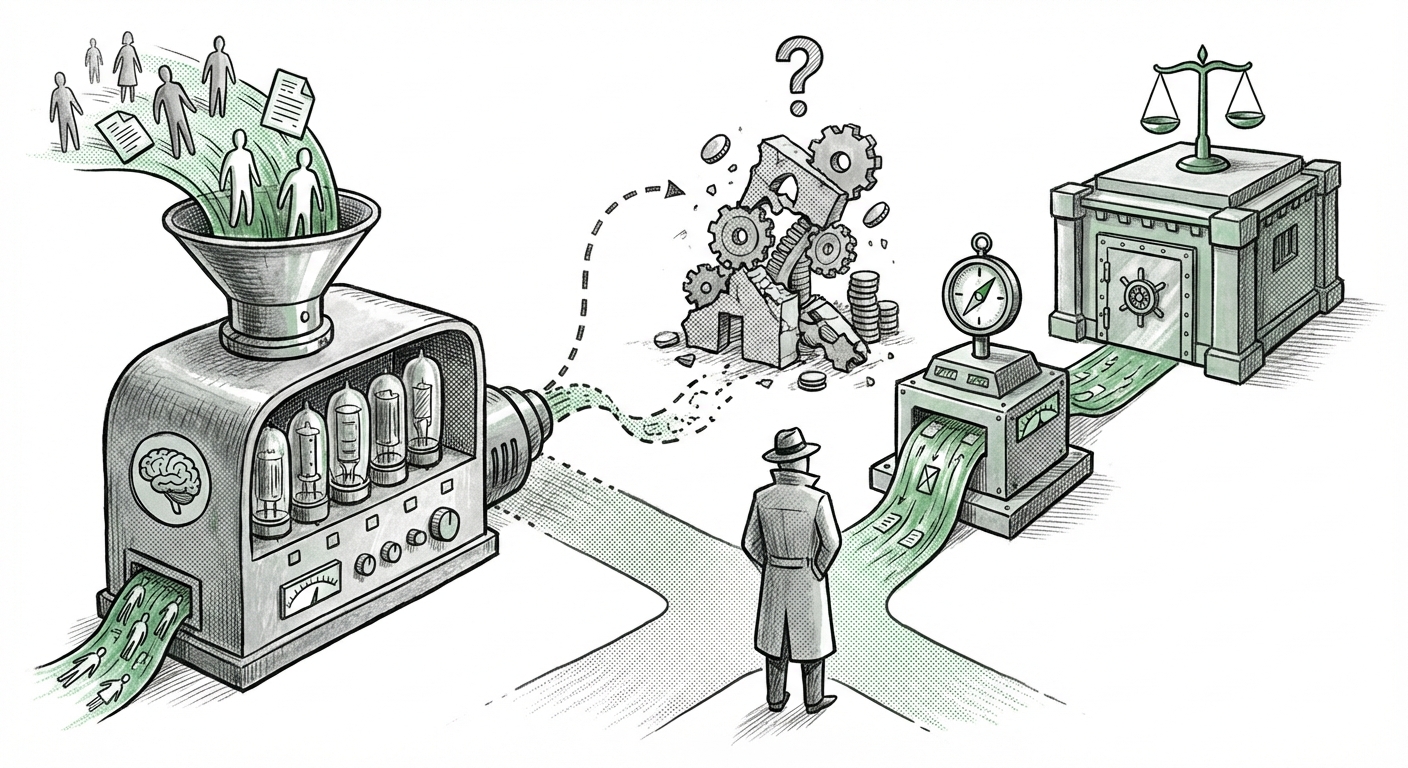

The digital revolution promised us convenience, but now it’s delivering complexity directly to our screens. Reports indicate that millions of everyday users are turning to powerful, general-purpose AI chatbots, like ChatGPT, for advice on sensitive, high-stakes matters, most notably personal financial planning and retirement strategies. This isn't just a curious tech fad; it represents a seismic shift in consumer trust and AI application.

As an AI technology analyst, I see this trend as a critical tension point. On one side, we have unprecedented accessibility to what *feels* like personalized advice. On the other, we have industry experts sounding loud alarms about accuracy, liability, and the fundamental limits of current large language models (LLMs) when dealing with real-world finance.

This article synthesizes what we know about this adoption wave, analyzes the severe risks involved, and explores what this convergence means for the future of AI, FinTech, and regulatory bodies.

The New Digital Advisor: Accessibility Meets Ambition

Why are people asking an LLM about their 401(k)? The answer lies in immediate access and perceived personalization. Traditional financial advice often involves scheduling appointments, high minimum asset thresholds, and significant fees. A chatbot, however, offers instant, non-judgmental conversation, available 24/7.

This phenomenon is the ultimate expression of AI democratizing specialized knowledge. For a user seeking to understand basic concepts—say, "What is the difference between a Roth and a Traditional IRA?"—LLMs excel. They synthesize vast amounts of educational content into easy-to-digest summaries. This usage pattern, focused on **education**, is relatively low-risk.

However, the tipping point occurs when the user graduates from education to **execution**: "Given my income and age, should I contribute more to my 401(k) to capture the company match, or to my HSA?" Answering this requires complex, context-aware calculations that depend on frequently changing tax laws and the user's personal risk tolerance. This is where the generalist nature of consumer chatbots becomes highly problematic.

Corroborating the Trend: Consumer Trust vs. Expert Fear

Related survey data confirms this duality. Usage is growing, and surveys on consumer trust in AI financial planning suggest that users are often willing to overlook flaws for convenience. They treat the chatbot as a knowledgeable assistant rather than a licensed fiduciary. This adoption gap, where user confidence outstrips technological readiness, is the central challenge.

By contrast, the evolution of **robo-advisors** shows how sophisticated regulated FinTech has become. Traditional robo-advisors use carefully constructed, rules-based algorithms designed to be transparent and auditable. A general-purpose LLM bypasses these established safety rails entirely, which leads to the next major threat.

The Technical Abyss: LLM Hallucination vs. Financial Accuracy

The most urgent technical danger in using general-purpose LLMs for finance is **hallucination**. In creative writing, a hallucination is a minor issue; in financial modeling, it can be catastrophic. If an LLM confidently advises a user to execute a trade based on an invented regulatory requirement, or misstates the current federal funds rate, the consequence is an immediate and tangible loss of capital.

Financial planning relies on inputs that must be factual, verifiable, and up to date. LLMs, by design, predict the next most probable word based on massive training data; they do not execute a real-time query against the Internal Revenue Code or a live market data feed. For AI developers and risk managers, articles detailing LLM failure modes in specialized domains serve as stark warnings.

What This Means for the Future of AI: This use case forces AI development away from general, expansive models towards grounded, verifiable, specialized models. The future of AI in high-stakes sectors will not be about making LLMs smarter; it will be about making them demonstrably *safer* and tethered to external, authoritative data sources (a process often called Retrieval-Augmented Generation, or RAG, but applied with extreme rigor).
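
To make the idea of tethering a model to authoritative data concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `FACT_STORE` contents are placeholders rather than real figures, the source label is invented, and `build_grounded_prompt` is a hypothetical helper, not part of any real RAG library. The point is the shape of the safeguard: if no verified, sufficiently recent source exists, the system refuses instead of letting the model guess.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class SourcedFact:
    """A fact paired with where it came from and when it was last verified."""
    value: str
    source: str          # hypothetical citation label
    verified_on: date


# Hypothetical, hand-curated store. Values are placeholders, not real figures.
FACT_STORE = {
    "example_contribution_limit": SourcedFact(
        value="PLACEHOLDER_AMOUNT",
        source="Example Authority, Publication 000 (placeholder)",
        verified_on=date(2024, 1, 15),
    ),
}


def retrieve(key: str, today: date, max_age_days: int = 365) -> Optional[SourcedFact]:
    """Return a fact only if it exists and was verified recently enough."""
    fact = FACT_STORE.get(key)
    if fact is None:
        return None
    if (today - fact.verified_on).days > max_age_days:
        return None  # stale data is treated the same as missing data
    return fact


def build_grounded_prompt(question: str, key: str, today: date) -> str:
    """Build a prompt that carries its own source, or refuse outright."""
    fact = retrieve(key, today)
    if fact is None:
        return "REFUSE: no current, verified source is available for this question."
    return (
        "Answer using ONLY the context below and cite it.\n"
        f"Context: {fact.value} (source: {fact.source}, "
        f"verified {fact.verified_on.isoformat()})\n"
        f"Question: {question}"
    )
```

A production system would replace the dictionary with a maintained retrieval index, but the refusal path for missing or stale data is the part that matters for high-stakes use.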

The Regulatory Time Bomb: Who Holds the Fiduciary Duty?

When a licensed financial advisor makes a recommendation, they are often held to a fiduciary standard—meaning they must act in the client’s absolute best interest. If an individual loses their life savings based on advice from ChatGPT, who is liable?

This question is currently being addressed in legal chambers and regulatory offices globally. A search for **SEC guidance on generative AI in financial advice** suggests that regulators are still scrambling. They must determine whether the AI platform provider, the model developer, or the user who prompted the advice is responsible for damages.

For compliance officers and policymakers, this ambiguity is paralyzing. Without clear rules, regulated institutions are hesitant to integrate generative AI into client-facing tools, yet they cannot ignore the reality that consumers are using unregulated versions already.

Implications for Businesses: Compliance vs. Innovation

This tension creates an innovation gap. FinTech firms have a choice:

- Wait for Regulation: This ensures safety but surrenders first-mover advantage to less regulated entities operating overseas or in consumer niches.

- Innovate Safely: This means building complex guardrails, rigorous testing, and deep integration with proprietary, verified financial data, essentially creating specialized "Financial LLMs" that are far more expensive to build than generalist tools.

The transition from current chatbot usage to regulated AI advisory services will be defined by which companies successfully implement these guardrails first. The era of "ask anything and trust the answer" is ending; the era of "ask a verified, auditable AI" is beginning.

The Future of Personalized AI Advisory Services

The massive consumer appetite demonstrated by millions using LLMs for finance is an undeniable market signal. The next generation of AI financial tools will capitalize on this desire for instant, personalized interaction while mitigating the risks associated with the current technology.

From Chatbot to Certified Co-Pilot

The future is not about replacing human advisors entirely, but about creating highly effective AI co-pilots. These systems will look very different from today's chatbots:

- Verifiable Outputs: Every calculation, investment suggestion, or tax implication must be accompanied by a citation to the exact statute, filing form, or market data feed that supports the conclusion.

- Risk Profiling Integration: Future tools will need deeper, secure integration with banking data and explicit user risk profiling, making recommendations that evolve dynamically, far beyond what a single chat session can capture.

- Explainability (XAI): Users must understand *why* the AI made a recommendation. "Because the model said so" will not suffice for retirement planning.
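
The first and third requirements above can be enforced mechanically before anything reaches the user. The sketch below is one possible shape for such a check, written in Python; the `Claim` and `Recommendation` types and the `validation_errors` function are hypothetical names invented for this illustration, and the check only confirms that citations and a rationale are present, not that they are correct.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Claim:
    """A single factual statement and the sources offered in support of it."""
    text: str
    citations: List[str] = field(default_factory=list)


@dataclass
class Recommendation:
    """A candidate answer: a summary, the reasoning, and its supporting claims."""
    summary: str
    rationale: str
    claims: List[Claim]


def validation_errors(rec: Recommendation) -> List[str]:
    """List every reason this recommendation should be blocked (empty = pass)."""
    errors = []
    if not rec.rationale.strip():
        errors.append("missing rationale")
    for i, claim in enumerate(rec.claims):
        # A citation made only of whitespace does not count.
        if not any(c.strip() for c in claim.citations):
            errors.append(f"claim {i} has no citation")
    return errors
```

A recommendation would be shown only when `validation_errors` returns an empty list; checking that each cited source actually supports its claim is a separate and harder step.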

This moves us away from the generalist LLM and toward specialized applications that marry advanced NLP with deterministic financial engines. This transition addresses the concerns raised by experts regarding accuracy and liability while satisfying the consumer demand for conversational access.
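
As a rough illustration of that division of labor, the Python sketch below lets the language layer do nothing more than fill in a structured `PlanInputs` record, while the arithmetic runs in ordinary, testable code. The names are hypothetical, and the model is deliberately simple: annual compounding, contributions made at the end of each year, and a rate of return that is an input supplied by the user or an advisor, not a prediction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanInputs:
    """Structured parameters a language layer might extract from a conversation."""
    current_balance: float
    annual_contribution: float
    assumed_annual_return: float   # e.g. 0.05 for 5%; an assumption, not a forecast
    years: int


def future_value(p: PlanInputs) -> float:
    """Project a balance with annual compounding and end-of-year contributions."""
    if p.years < 0:
        raise ValueError("years must be non-negative")
    if p.assumed_annual_return <= -1:
        raise ValueError("assumed_annual_return must be greater than -100%")
    r = p.assumed_annual_return
    if r == 0:
        # No growth: the result is just the balance plus all contributions.
        return p.current_balance + p.annual_contribution * p.years
    growth = (1 + r) ** p.years
    # Grown starting balance plus the future value of an ordinary annuity.
    return p.current_balance * growth + p.annual_contribution * (growth - 1) / r
```

Because the calculation is deterministic, the same inputs always produce the same number, and every step can be audited. For example, a balance of 1,000 with contributions of 100 per year at an assumed 10% return is 1,200 after one year and 1,420 after two.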

Actionable Insights for Stakeholders

This moment requires clear, proactive steps from all involved parties:

For Consumers: The Immediate Reality Check

Treat any general-purpose chatbot advice as a preliminary thought starter, not a final plan. Always, always verify critical figures (tax rates, deadlines, investment limits) through official government sources or licensed professionals before taking action. Your retirement is too important to be staked on predictive text.

For Financial Institutions: Embrace Specialized Integration

Do not dismiss these tools; recognize them as proof of concept for user demand. Start investing in building proprietary, domain-specific LLMs grounded in internal, audited data. Focus on creating explainable interfaces that build trust rather than just mimicking human conversation.

For Regulators: Define the Boundaries of Liability Now

The existing regulatory patchwork is inadequate for this technology. Frameworks must be established quickly to define the permissible scope of LLM use in offering personalized financial guidance. Clarity on liability—especially concerning hallucinations—is essential to preventing widespread consumer harm and fostering responsible institutional adoption.

The mass adoption of LLMs for finance is more than a trend; it is an inflection point. It exposes the raw appetite for accessible complex decision support while simultaneously highlighting the immature state of generalized AI reliability. Navigating this requires recognizing that while conversation is easy, fiduciary duty demands verifiable truth. The next phase of FinTech will be about engineering that truth into the very fabric of the AI experience.