The Agentic Frontier: How Iterative Models, Desktop AI, and Specialized Tools are Redefining Software

The AI landscape rarely offers a quiet week, but recent developments suggest we are not just seeing iterative improvements; we are witnessing the building blocks of a fundamental shift in computing. Reports of a rumored **GPT-5.4**, the rise of specialized coding tools like **Cursor**, and the broader march toward fully autonomous **agentic AI** systems are not isolated developments. They are three vectors converging to redefine how we interact with technology, moving from simple prompting to delegated execution.

This article synthesizes these trends, explores what they mean for the next era of AI architecture, and outlines the immediate, practical implications for developers, businesses, and the very definition of a "computer."

Trend 1: The Perpetual Upgrade—Iterative Scaling and Model Evolution

The chatter around models like a hypothetical "GPT-5.4" is telling. It suggests that the industry may be leaning into an era of rapid, granular iteration rather than waiting years for the next monolithic breakthrough. This is a critical distinction for both investment and planning.

Beyond Parameters: The Focus Shifts to Refinement

If the foundation models (like GPT-4 or Claude 3) are the powerful engines, these incremental updates (the ".4" in 5.4) are about optimization, safety, and tool integration. We are moving past the "is it big enough?" phase into the "is it smart enough to act?" phase.

For the non-technical reader, imagine a world-class chef (the large model). Instead of hiring a completely new chef every year, you keep upgrading their knife set, teaching them a few new safety protocols, and giving them better recipes. The chef was already capable, but their ability to execute complex, multi-step tasks reliably improves with each round of refinement.

This iterative scaling forces a strategic pivot. Businesses must stop basing roadmaps on distant, theoretical AGI releases and start planning for **continuous, incremental capability gains** that demand constant workflow adaptation. This is consistent with the view that progress in reasoning may come as much from better data quality and retrieval augmentation as from ever-larger parameter counts, that is, from looking beyond simple scaling laws.

Trend 2: Specialization Wins—The Rise of AI-Native Tools (The Cursor Effect)

The second major theme is the pivot from using a general-purpose chatbot (like ChatGPT) for coding to using a deeply integrated, AI-native tool like Cursor. Cursor is an AI-first code editor, built as a fork of Visual Studio Code, that treats the LLM as part of its core workflow rather than as an add-on feature.

From Copilot to Autonomous Teammate

For years, we had AI assistants sitting in a separate window, waiting for copy-pasted code blocks. The Cursor model removes this friction. It can draw on the context of the entire codebase, run tests, debug the errors it introduces, and propose structural changes, all within the environment where the work happens. This is the maturation of the AI "co-pilot" into an **AI teammate**.

This trend has significant implications for productivity. If developers are no longer context-switching between their terminal, their editor, and a browser window to ask the AI a question, development velocity can rise substantially. We see this reflected in the intense competition in the developer tooling space, where every major player is racing to embed deeper agentic behavior into their platforms.

Actionable Insight for Business: Early adoption of specialized AI tools focused on high-leverage tasks (like software development, legal drafting, or complex data analysis) can generate early ROI. Waiting for the generalized model to get good enough risks ceding a competitive advantage to firms that use task-specific agents today.

Trend 3: The Convergence—Agentic AI as the New Operating System

The most profound shift indicated by these developments is the move toward true **agentic AI**. This isn't just about generating text; it’s about systems that can *plan, execute a sequence of steps, use external tools, and self-correct* to achieve a high-level goal provided by a human.

Defining True Agency

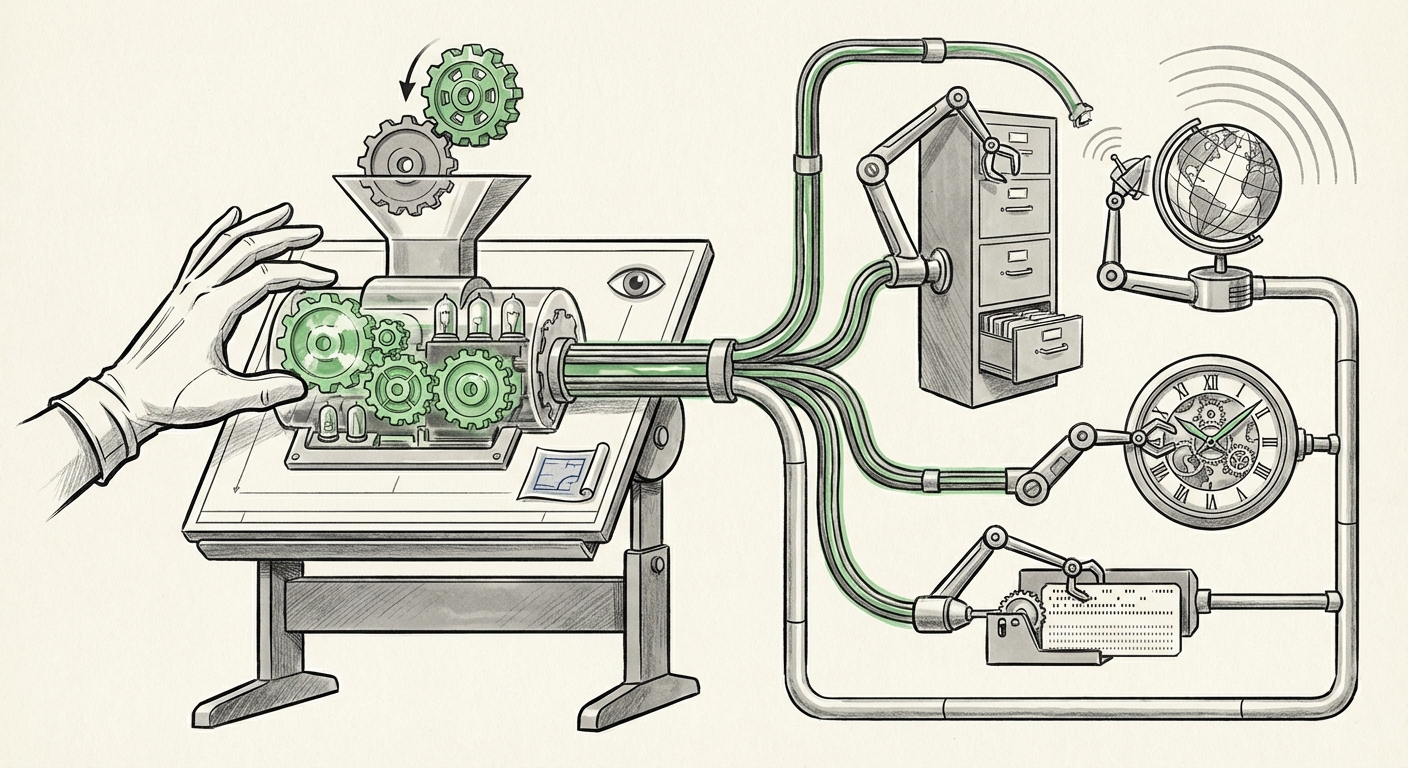

What separates a basic script from an agent? It’s the loop: Observe $\rightarrow$ Plan $\rightarrow$ Act $\rightarrow$ Reflect $\rightarrow$ Repeat. While current models can simulate planning, the frontier research is focused on building robust memory and tool-use capabilities that allow agents to operate reliably for hours or days on complex projects.
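The loop is easier to reason about when written out. The following is a minimal sketch, not a real agent framework: the `Agent` class, its method names, and the toy "reach a target number" goal are all hypothetical, chosen only so the Observe, Plan, Act, Reflect cycle runs without an LLM or external service. In a real system, `plan` would typically call a model and `tools` would wrap actual software.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Toy agent. Every name here is hypothetical and for illustration only."""
    goal: int                                   # target value the agent tries to reach
    tools: Dict[str, Callable[[int], int]]      # tool name -> function acting on the state
    memory: List[str] = field(default_factory=list)

    def observe(self, state: int) -> int:
        # Observe: measure how far the current state is from the goal.
        return self.goal - state

    def plan(self, gap: int) -> str:
        # Plan: choose a tool based on the observation.
        return "increment" if gap > 0 else "decrement"

    def act(self, tool_name: str, state: int) -> int:
        # Act: invoke the chosen tool on the current state.
        return self.tools[tool_name](state)

    def reflect(self, tool_name: str, before: int, after: int) -> None:
        # Reflect: record the outcome so later steps could consult it.
        self.memory.append(f"{tool_name}: {before} -> {after}")

    def run(self, state: int, max_steps: int = 20) -> int:
        # Repeat: loop until the goal is met or the step budget runs out.
        for _ in range(max_steps):
            gap = self.observe(state)
            if gap == 0:
                break
            tool_name = self.plan(gap)
            new_state = self.act(tool_name, state)
            self.reflect(tool_name, state, new_state)
            state = new_state
        return state


agent = Agent(
    goal=3,
    tools={"increment": lambda x: x + 1, "decrement": lambda x: x - 1},
)
final_state = agent.run(state=0)
```

The `max_steps` budget is the part worth noticing: an agent that can run for hours needs an explicit stopping condition, otherwise a flaw in planning turns into an unbounded loop.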

When a model like GPT-5.4 is designed to interface seamlessly with tools like Cursor, we are no longer talking about an application. We are talking about an **AI Operating System (AIOS)**. The desktop itself—the file system, the application launcher, the network stack—becomes the agent’s sandbox.

Imagine telling your computer: "Research the top three supply chain bottlenecks in Q3 for our industry, generate a presentation summarizing the risks, and schedule a meeting with the relevant department heads to discuss mitigation." In an AIOS future, the system doesn't just search the web; it opens the necessary analysis software, interacts with your email client, and manages the calendar—all autonomously.

Future Implications: The Re-architecting of Digital Work

These three trends—better core models, specialized tools, and unified agency—do not exist in isolation. They are mutually reinforcing and point toward a future where human work is drastically re-scoped.

For the Developer and Creator

Software development will bifurcate. Many routine coding and maintenance tasks will be delegated to agents like Cursor, operating on the model’s improved reasoning capabilities. The developer’s role evolves into that of an Architect and Auditor. Their value lies not in writing boilerplate code, but in designing robust agentic workflows, defining complex system requirements, and verifying the security and correctness of the agent's output. This echoes discussions in the developer community regarding the need for better validation frameworks for AI-generated code.

For Business Strategy and Operations

Businesses must prepare for workflow automation at a speed previously impossible. If a junior employee took a week to produce a market analysis report, an agentic system might do it in an hour. This means:

- Compression of Middle Management Layers: Tasks involving information synthesis, coordination, and routing will be heavily automated.

- Demand for Prompt Engineering and Oversight: The new core competency will be translating ambiguous business goals into clear, executable instructions for autonomous systems.

- Security Redefined: As agents gain system access through desktop integration and local orchestration, ensuring that they operate within strict security sandboxes becomes paramount. A failure in an agent's planning loop could lead to widespread system errors or data leaks.
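One small piece of the sandboxing concern in the last bullet is keeping an agent's file access inside a designated directory. The sketch below assumes a hypothetical workspace path (`/srv/agent-workspace`) and a hypothetical helper name (`resolve_in_sandbox`); it uses only Python's standard `pathlib` and requires Python 3.9+ for `is_relative_to`. It covers path confinement only and is not a complete sandbox.

```python
from pathlib import Path


def resolve_in_sandbox(sandbox_root: str, requested: str) -> Path:
    """Return the absolute path for `requested`, refusing anything outside the root.

    Hypothetical helper for illustration; real sandboxes also need OS-level
    isolation (process, network, and permission boundaries).
    """
    root = Path(sandbox_root).resolve()
    # Joining then resolving collapses ".." segments and follows existing symlinks.
    # An absolute `requested` replaces the root entirely, so the check below catches it.
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):
        raise PermissionError(f"{requested!r} escapes sandbox {str(root)!r}")
    return candidate


# A path inside the workspace is accepted.
safe_path = resolve_in_sandbox("/srv/agent-workspace", "reports/q3.txt")

# A traversal attempt is rejected before any file is touched.
try:
    resolve_in_sandbox("/srv/agent-workspace", "../../etc/passwd")
    escape_blocked = False
except PermissionError:
    escape_blocked = True
```

Checking the resolved path, rather than scanning the raw string for `..`, is the design choice here: it handles absolute paths and symlinks with the same test.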

The Hardware and Infrastructure Question

The trend toward deeper desktop integration raises critical infrastructure questions. If agents need access to proprietary data and need to perform complex local tasks quickly, reliance solely on massive, remote cloud GPUs becomes a bottleneck. This fuels the need for more powerful local AI capabilities, pushing hardware manufacturers toward designing chips optimized for running sophisticated agentic loops efficiently on personal devices, balancing performance with privacy concerns.

Actionable Insights: Navigating the Agentic Wave

The current moment demands proactive adaptation, not passive observation. Here are concrete steps to prepare for this agentic future:

- Audit Workflow Friction: Identify tasks that involve multiple distinct software tools and human context switching. These are the prime candidates for initial agentic takeover. Start experimenting with specialized tools (like advanced coding assistants or specialized data agents) in low-risk areas.

- Invest in Agent Governance: Before deploying agents widely, establish clear rules of engagement. What tools can they use? What data can they access? This proactively addresses the security challenges inherent in highly autonomous software.

- Upskill for Abstraction: Training should shift from teaching employees how to use specific software features to teaching them how to define desired outcomes for an AI system. The skill is in the delegation, not the execution.

- Monitor Iterative Releases: Pay close attention to incremental model updates. They often bring capability improvements (like better tool-calling or reduced hallucinations) that unlock new levels of reliable automation.
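The governance questions in the list above (which tools an agent may use, which data it may access) can be written down as an explicit allowlist that is checked before every action. This is a minimal sketch under assumed names: `AgentPolicy`, `authorize`, and the example tool and data-scope labels are hypothetical and do not come from any particular agent framework.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical rules of engagement for a single agent."""
    allowed_tools: FrozenSet[str]
    allowed_data_scopes: FrozenSet[str]

    def permits(self, tool: str, data_scope: str) -> bool:
        # Deny by default: both the tool and the data scope must be allowlisted.
        return tool in self.allowed_tools and data_scope in self.allowed_data_scopes


def authorize(policy: AgentPolicy, tool: str, data_scope: str) -> None:
    # Call this before the agent executes an action; it raises on any violation.
    if not policy.permits(tool, data_scope):
        raise PermissionError(f"policy denies tool={tool!r} on data_scope={data_scope!r}")


policy = AgentPolicy(
    allowed_tools=frozenset({"read_file", "run_tests"}),
    allowed_data_scopes=frozenset({"public_docs", "source_code"}),
)

authorize(policy, "run_tests", "source_code")  # permitted, returns silently
```

Making the policy an immutable object means the agent cannot widen its own permissions at runtime; changes have to go through whoever owns the policy definition.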

We are moving beyond the era of the helpful chatbot and entering the era of the delegated executor. The convergence of powerful, continuously improving core models, specialized execution environments, and the infrastructure to grant them operational authority marks the true beginning of the agentic frontier. Understanding how these pieces fit together is the key to leading the next technological revolution.