The 900 Million User Benchmark: Analyzing the Sustainability and Future of Hyper-Scale AI Adoption

Recent reports that ChatGPT is reaching 900 million weekly users are more than a new vanity metric; they point to a seismic shift in how the world interacts with digital technology. The figure suggests that Generative AI is moving from novelty to essential utility, a step-change in software adoption comparable to the early days of web browsing or mobile messaging.

As technology analysts, our primary task is not just to note the number but to contextualize it. Is this adoption rate sustainable? What engineering feats are making this possible? And most importantly, where does this usage pattern lead the entire AI ecosystem?

Contextualizing the Scale: Is 900 Million Users a New Normal?

Before celebrating, we must anchor this massive figure against broader market activity. A standalone number, however large, must be validated against industry movement. Our initial analytical approach involves cross-referencing this usage against established trends:

**Global generative AI adoption statistics** suggest that the market is undoubtedly hot. Reports often show large increases in enterprise pilot programs and individual experimentation. If, for example, market research were to show that 60% of knowledge workers are actively testing AI tools, then 900 million weekly users for the leading platform would be plausible, and it would signal that ChatGPT is capturing a dominant share of a rapidly expanding pool of users. In that case AI is no longer niche; it’s rapidly becoming foundational for many digital tasks.

For businesses and investors, this means AI readiness is no longer optional. The platform achieving this scale sets the de facto standard for expected performance and capability.

The Crucial Metric: Moving Beyond the Top Line to Retention

While 900 million weekly users is impressive, sustainability hinges on **long-term user retention rates for consumer AI apps**. Early novelty drives massive spikes; habits drive longevity. Are these 900 million people logging in once a week to ask a philosophical question, or are they relying on the tool to draft critical reports, debug code, or manage complex schedules?

If analysis shows high retention associated with integrating the tool into specific, high-value workflows—such as in coding or specific creative pipelines—then this usage is sticky. If the usage is mostly ephemeral, the number is vulnerable to the next shiny object. The future of this adoption relies on successful integration into the daily "digital hygiene" of the modern worker.
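To make the retention question concrete, here is a minimal sketch of how a weekly cohort retention curve could be computed from activity logs. The event format (`user_id`, `week` index), the function name `weekly_retention`, and the sample data are all illustrative assumptions, not figures or methods from any report.

```python
from collections import defaultdict

def weekly_retention(events, cohort_week, horizon):
    """Fraction of a signup cohort still active in each later week.

    events: iterable of (user_id, week_index) activity records (assumed format).
    cohort_week: the week whose first-time users form the cohort.
    horizon: how many weeks after cohort_week to report.
    """
    first_seen = {}
    active = defaultdict(set)
    for user_id, week in events:
        active[week].add(user_id)
        if user_id not in first_seen or week < first_seen[user_id]:
            first_seen[user_id] = week

    # The cohort is everyone whose first recorded activity falls in cohort_week.
    cohort = {u for u, w in first_seen.items() if w == cohort_week}
    if not cohort:
        return []

    return [
        len(cohort & active.get(cohort_week + k, set())) / len(cohort)
        for k in range(horizon + 1)
    ]

# Hypothetical activity log: four users start in week 0, one in week 1.
sample_events = [
    ("a", 0), ("b", 0), ("c", 0), ("d", 0),
    ("a", 1), ("b", 1), ("e", 1),
    ("a", 2), ("e", 2),
]
curve = weekly_retention(sample_events, cohort_week=0, horizon=2)
print(curve)  # [1.0, 0.5, 0.25]
```

A curve that flattens after the first few weeks would point to habitual use; one that keeps falling toward zero would point to novelty-driven traffic.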

The Hidden Engine: Infrastructure and the Economics of Scale

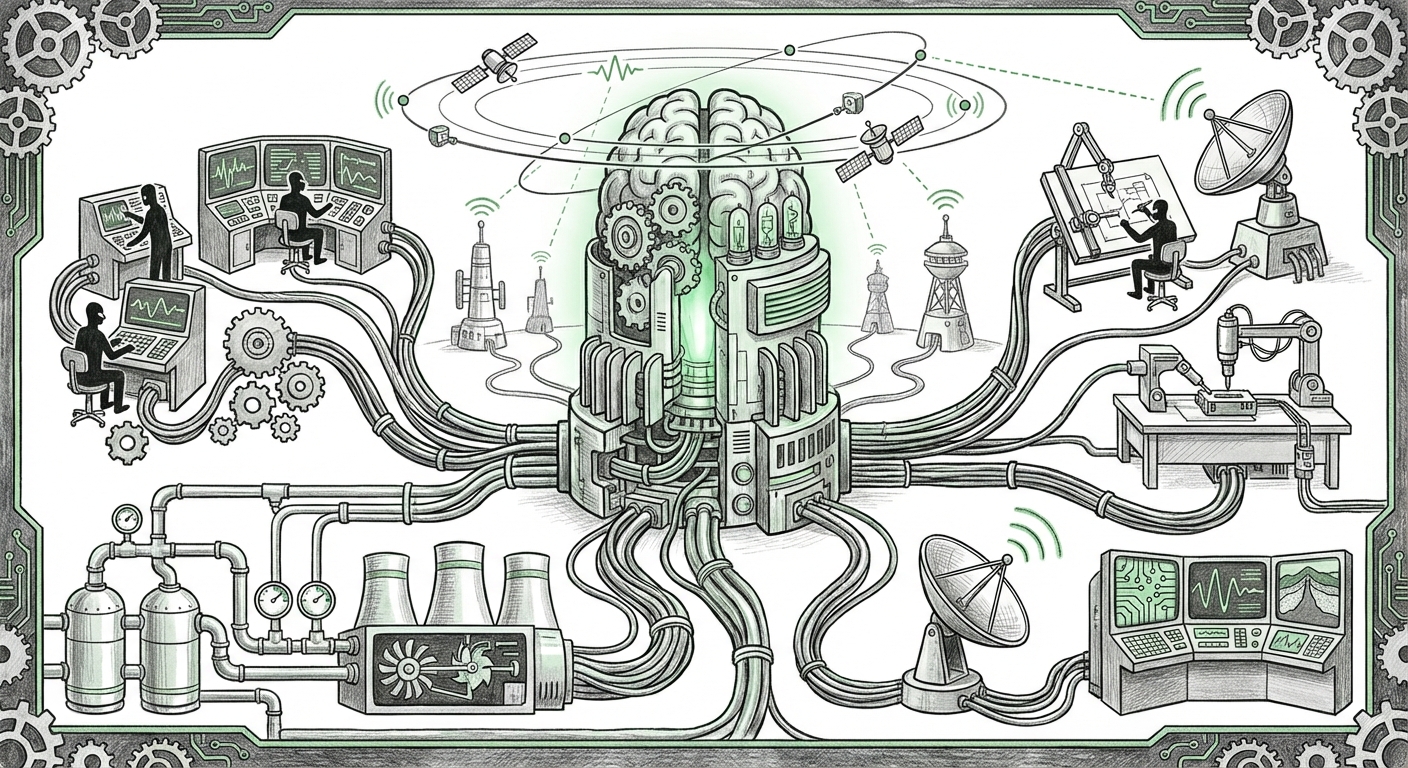

Serving nearly a billion users every week demands a level of computational power that strains even the largest technology firms. This leads us directly to the second critical factor: **Infrastructure costs for large language model scaling**.

Imagine every single one of those 900 million users submitting a query that requires accessing hundreds of billions of parameters, running through specialized hardware (GPUs), and generating a unique response. The sheer energy and capital required are astronomical. For the adoption to be sustainable, the economics must be viable.
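A back-of-envelope model shows why the economics matter at this scale. The sketch below multiplies users, queries per user, and cost per query; the queries-per-user and cost-per-query values, and the function name `weekly_inference_cost`, are placeholder assumptions chosen only to illustrate the arithmetic, not reported numbers.

```python
def weekly_inference_cost(weekly_users, queries_per_user, cost_per_query,
                          cost_reduction=0.0):
    """Estimated weekly serving cost in dollars.

    cost_reduction is the fractional efficiency gain applied to the
    per-query cost (0.0 means no improvement, 0.4 means 40% cheaper).
    """
    if not 0.0 <= cost_reduction < 1.0:
        raise ValueError("cost_reduction must be in [0, 1)")
    return weekly_users * queries_per_user * cost_per_query * (1.0 - cost_reduction)

# Placeholder assumptions: 10 queries per user per week at $0.001 per query.
baseline = weekly_inference_cost(900_000_000, 10, 0.001)
optimized = weekly_inference_cost(900_000_000, 10, 0.001, cost_reduction=0.4)

print(f"baseline:  ${baseline:,.0f} per week")
print(f"optimized: ${optimized:,.0f} per week")
```

Because the cost is linear in every input, any per-query saving carries through proportionally to the total bill, which is why small efficiency gains matter so much at hundreds of millions of users.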

Analysts focusing on infrastructure look for evidence of breakthrough efficiency:

- Inference Optimization: Success here means techniques like model distillation (creating smaller, faster, nearly as capable versions) or advanced quantization (reducing the numerical precision used in calculations) are effectively reducing the cost-per-query. A 40% reduction in inference cost, for example, would make scaling to 1 billion users far more affordable than it was a year earlier.

- Hardware Availability: This scale puts immense pressure on the global supply chain for high-end AI accelerators. The continued accessibility and deployment of cutting-edge hardware are non-negotiable prerequisites for maintaining—let alone growing—this user base.
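The quantization idea mentioned in the list above can be illustrated with a minimal sketch of symmetric 8-bit quantization in plain Python. This is a simplified illustration under assumed names (`quantize_int8`, `dequantize`) and sample weights, not a description of how any production model is actually served.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]

# Hypothetical weights, for illustration only.
weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Each restored value differs from the original by at most about half a step.
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, worst_error)
```

Storing one byte per weight instead of four cuts memory use and bandwidth, at the price of the small rounding error measured above; that trade is the lever behind lower cost-per-query.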

For technologists, this massive scale serves as the ultimate proving ground. Failures in scaling infrastructure immediately translate into a degraded user experience (slow responses), which directly harms the retention discussed earlier. Sustained success at this scale would therefore be evidence of real advances in computational efficiency.

The Competitive Gauntlet: Feature Parity and Market Polarization

No market leader operates in a vacuum. The reported dominance of one platform immediately triggers an aggressive **competitor response to OpenAI's user growth**. The future isn't just about how many people use ChatGPT; it’s about how the ecosystem reacts.

We see this response manifesting in two key areas:

- Feature Convergence: Competitors like Google Gemini and Anthropic Claude rapidly introduce features targeting ChatGPT’s weaknesses (e.g., better real-time data access, superior contextual memory, or enhanced multimodality). When competitors offer comparable quality for free or bundled into existing services (like Workspace), the proprietary advantage of the leader erodes.

- Strategic Pricing: If competitors begin offering high-tier models at lower subscription prices, it forces a market re-evaluation. This is the looming threat of a 'price war' in the AI utility space.

The implication for the future is a potential market polarization:

- The Utility Layer: Basic, high-frequency tasks (summarization, quick drafting) will become commoditized, driven by price competition.

- The Specialized Layer: True differentiation will move toward specialized models, vertical expertise (legal, medical), or deeply integrated enterprise workflows that are difficult to switch away from.

The 900 million user number is a target, not a permanent safe harbor. It validates the category, but the market will quickly consolidate around the provider that best manages cost, provides the deepest integration, or offers the most unique utility.

Future Implications: From Tool to Essential Infrastructure

What happens when nearly one billion people rely on the same underlying technology weekly? The implications ripple across business, education, and societal structures.

Actionable Insights for Businesses

For enterprises, the takeaway is clear: AI literacy is now an operational requirement, not an IT project. Businesses must rapidly determine their stance on AI:

- Standardize and Secure: If your workforce is using public tools for proprietary work, you face severe data leakage risks. Businesses must move quickly to adopt secure, enterprise-grade versions of these models or implement strict usage guidelines.

- Workflow Transformation: Identify the 20% of tasks that consume 80% of employee time (e.g., report generation, internal documentation, first-draft correspondence). These are the areas ripe for immediate transformation powered by LLMs.

- Talent Strategy: Hiring must prioritize prompt engineering and critical verification skills. The future employee must be an effective AI collaborator, adept at leveraging these tools to increase output velocity dramatically.

Societal Shifts: Information Integrity and Dependability

This hyper-adoption introduces systemic risks that must be addressed proactively. When a large percentage of generated content (emails, articles, code comments) flows through a handful of centralized AI models, the potential for subtle, large-scale biases or errors to propagate is significant.

This necessitates a renewed focus on **model auditing and transparency**. Reliance on AI for decision support means that the reliability discussed in the infrastructure section must extend to trustworthiness. We need robust systems to verify AI outputs, especially in high-stakes fields like finance or healthcare.

The Road Ahead: Beyond the Chat Interface

The 900 million weekly users are currently interacting primarily through a chat interface. However, the future of this massive user base lies in **invisible integration**.

The trend suggests that the user experience will shift away from actively visiting a website (like ChatGPT) and toward AI embedded seamlessly within every application people use: their CRM, their operating system, their design software. The success story of 900 million users today is merely paving the way for billions of passive interactions tomorrow.

This transition requires a fundamental rethink of software architecture. Instead of building applications layer-by-layer, developers will increasingly orchestrate complex workflows composed of specialized, fine-tuned models. The platform that serves 900 million users today is currently winning the interface battle; the next generation of winners will dominate the integration layer.
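One way to picture this orchestration style is a thin routing layer that sends each request to a specialized handler. The sketch below is purely illustrative: the handler functions stand in for hypothetical fine-tuned models, and the keyword-based routing rule is an assumption chosen for brevity rather than a recommended design.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]

# Stand-ins for specialized models; a real system would call model endpoints.
def summarizer(request: str) -> str:
    return f"[summary model] {request}"

def code_helper(request: str) -> str:
    return f"[code model] {request}"

def general(request: str) -> str:
    return f"[general model] {request}"

# Hypothetical routing table: keyword in the request -> specialist handler.
ROUTES: Dict[str, Handler] = {
    "summarize": summarizer,
    "debug": code_helper,
}

def route(request: str) -> str:
    """Dispatch to the first specialist whose keyword appears, else fall back."""
    lowered = request.lower()
    for keyword, handler in ROUTES.items():
        if keyword in lowered:
            return handler(request)
    return general(request)

print(route("Summarize this quarterly report"))
print(route("Please debug this function"))
print(route("What should I cook tonight?"))
```

In practice the routing decision would more likely come from a classifier or a model itself, but the shape is the same: the application becomes a coordinator of specialists rather than a single monolithic stack.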

The scale reported confirms that Generative AI is not a fad. It is the new foundation for digital productivity. The challenge now shifts from demonstrating capability to ensuring sustainable economics, building resilient infrastructure, and navigating the societal responsibility that comes with such profound technological penetration.