900 Million Weekly Users: Analyzing the Infrastructure, Competition, and Future of Mainstream AI Adoption

The recent announcement that ChatGPT is clocking an astonishing 900 million weekly users marks a critical inflection point in technology history. This isn't merely a successful app; it represents the rapid normalization of powerful Artificial Intelligence into the daily routines of nearly one billion people. As technology analysts, we must move beyond the headline numbers to investigate the ecosystem that supports this scale, the competitive forces it unleashes, and the profound implications for the future of work and digital life.

The Scale of Success: From Novelty to Necessity

To put 900 million weekly users into perspective, consider the user bases of established digital giants. This level of sustained, weekly engagement places generative AI on par with major social media platforms or core internet utilities. It suggests that AI is transitioning from an experimental tool used by early adopters to an essential utility—a default setting for drafting emails, debugging code, brainstorming ideas, and seeking instant information.

If we look for corroborating evidence of this shift, such as industry reports on weekly active users of generative AI tools, the growth appears to be sector-wide. ChatGPT leads, but a figure this large suggests that generative AI as a category is reaching the mass market far faster than previous technological revolutions like the smartphone or social networking.

Demystifying the Use Case

Why are so many people returning weekly? The adoption suggests that the primary barrier, difficulty of use, has been overcome. Users are finding clear value in:

- Productivity Augmentation: Automating mundane tasks (summarization, translation, first drafts).

- Knowledge Access: Replacing traditional search engines for complex or nuanced queries.

- Creative Scaffolding: Serving as a co-pilot for programmers, writers, and designers.

For a casual user, interacting with an LLM is as simple as typing a question. For a developer, accessing the API or integrated features is becoming seamless. This accessibility is key to sustaining such high weekly numbers.

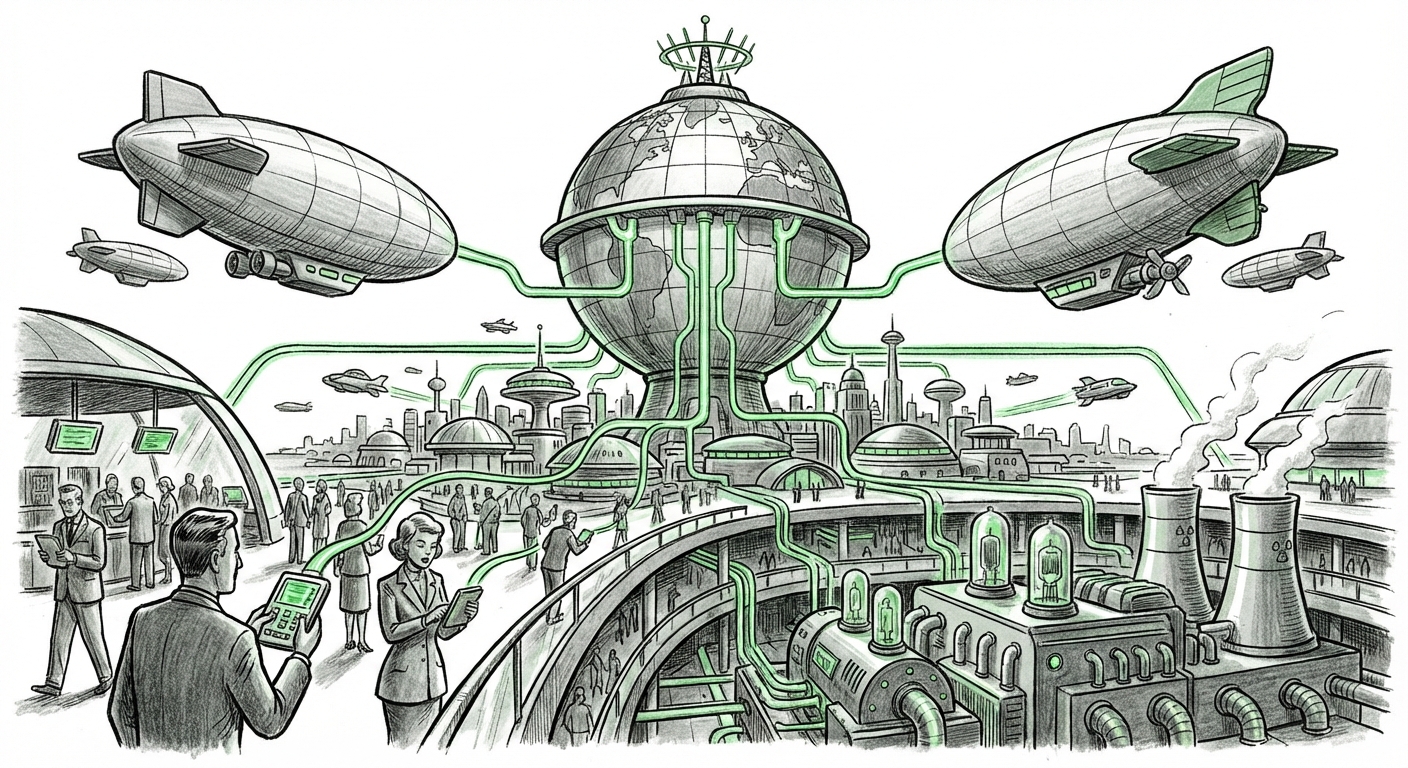

The Unseen Engine: Infrastructure and Sustainability

A service reaching 900 million users is not just a software victory; it is a triumph of global engineering. Every query and every generated response requires running the model on specialized hardware, a process known as *inference*, and at this scale it consumes massive computational power. Supporting this demand creates significant technical and economic pressures.
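To make the scale concrete, here is a rough back-of-the-envelope sketch in Python. The 900 million figure comes from the announcement discussed above; the number of requests per user per week is a purely hypothetical assumption chosen for illustration, not a reported statistic, and the function name is ours.

```python
SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604,800 seconds


def average_requests_per_second(weekly_users: int,
                                requests_per_user_per_week: float) -> float:
    """Average request rate implied by a weekly user count."""
    return weekly_users * requests_per_user_per_week / SECONDS_PER_WEEK


weekly_users = 900_000_000      # figure discussed in this article
assumed_requests_per_user = 10  # HYPOTHETICAL assumption, not a reported number

rate = average_requests_per_second(weekly_users, assumed_requests_per_user)
print(f"{rate:,.0f} requests/second on average")  # prints "14,881 requests/second on average"
```

Even under this modest assumption, the service would need to sustain roughly fifteen thousand inference requests every second on average, and real traffic peaks well above its average.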

If we investigate the underlying technical feasibility, looking at how inference costs scale and how much strain this demand places on cloud providers, we find that the industry's viability depends on two factors: efficiency and supply.

The Efficiency Imperative

Running enormous models like those powering ChatGPT is expensive. To keep the service accessible (and perhaps even free for basic tiers), companies must aggressively optimize how quickly and cheaply they can generate answers. This drives intense research into:

- Quantization: Techniques that store model weights at lower numerical precision, shrinking the model without losing too much accuracy and allowing more responses per chip.

- Specialized Hardware: Moving beyond standard GPUs to custom AI accelerators (like advanced TPUs or custom silicon) designed specifically for inference rather than training.

- Sparse Activation: Designing models where only necessary parts of the neural network activate for a specific prompt, saving power.
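Two of the ideas in this list can be sketched in a few lines of plain Python. This is a toy illustration under simplifying assumptions, not how any production system (including ChatGPT) is implemented: quantization is shown as symmetric 8-bit rounding of a short weight list, and sparse activation as picking the top-scoring "experts" from a list of gate scores. All function names and sample values are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in quantized]


def top_k_experts(gate_scores: List[float], k: int) -> List[int]:
    """Indices of the k highest-scoring experts; only these would be run."""
    ranked = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)
    return sorted(ranked[:k])


# Toy values, chosen only for illustration.
weights = [0.02, -1.27, 0.64, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

active = top_k_experts([0.1, 0.7, 0.05, 0.15], k=2)

print(q)       # integers that fit in one byte each
print(active)  # only 2 of the 4 experts are activated
```

Each quantized weight fits in a single byte instead of the four bytes of a 32-bit float, at the cost of a rounding error of at most half the scale factor per weight. The routing function shows the other lever: if only two of four experts run for a given prompt, roughly half of that layer's computation is skipped.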

The future of AI monetization often hinges on whether these infrastructure costs can drop low enough to make AI a truly ubiquitous, low-cost utility, rather than a premium subscription service.

The Great Compute Race

Furthermore, supporting this user base strains the global supply chain for high-end semiconductors. The continuous need for more advanced GPUs and specialized data centers means that cloud providers (AWS, Azure, GCP) are under immense pressure to expand capacity. This capital expenditure dictates who can compete in the large-scale AI arena.

This infrastructure bottleneck directly influences market dynamics. Only organizations with access to staggering amounts of capital and deep partnerships with chip manufacturers can maintain parity at this scale. This sets a very high barrier to entry for any new contender.

The Battlefield: Competition and Feature Parity

Massive adoption rarely occurs in a vacuum. The surge in ChatGPT users forces competitors to innovate or risk being left behind. Examining the current rivalry, for example how Google Gemini and ChatGPT compare on feature parity and enterprise adoption, we see that the battleground is shifting beyond raw intelligence scores.

From General Models to Integrated Agents

In the early days, the focus was on how "smart" the model was. Today, the critical factor is **integration**. Users are demanding that AI tools become native parts of their workflows:

- Operating System Hooks: AI integrated directly into desktop environments, making context-aware suggestions automatically.

- Multimodality: Seamlessly handling text, images, audio, and video inputs and outputs within the same conversation thread.

- Enterprise Guardrails: Offering robust security, data governance, and fine-tuning capabilities necessary for large corporations.

If the 900 million users are using ChatGPT for writing, competitors must offer a superior *writing assistant* experience, perhaps one deeply embedded in office suites (like Microsoft 365 or Google Workspace). If users leverage it for coding, the competitor offering the best real-time debugging within an IDE wins.

The Rise of the AI Ecosystem

Competition is also fueled by ecosystem creation. The concept of custom, specialized chatbots or 'GPTs' demonstrated that users value customization. Future success will belong to the platform that allows developers and power users to build the most robust, easily shareable, and profitable ecosystem of specialized AI agents on top of their foundational models.

Societal Ripples: Measuring Real-World Impact

While user counts are impressive, the true measure of this technology is its impact on human endeavor. When nearly a billion people are using AI weekly, we must look at longitudinal data on productivity and societal shifts, such as surveys of how AI adoption affects white-collar productivity.

Productivity Gains and the Skills Shift

Early indicators suggest significant productivity gains, particularly in tasks involving synthesis and first drafts. This means routine, lower-level creative or analytical work is being compressed from hours into minutes. This is transformative for businesses, but it necessitates an immediate focus on workforce adaptation.

Practical Implication for Businesses: Companies that successfully integrate AI tools into their standard operating procedures—training employees not just how to *use* the tools, but how to *prompt* and *verify* the output—will see immediate competitive advantages. Employees who view AI as a mandatory skill will thrive; those who resist risk redundancy in specific task categories.

The Information Landscape

A major implication of this usage scale is the volume of data it generates for training future models. If 900 million people are entering prompts, the result is an unparalleled, continuous stream of real-world data on human intent, language gaps, and problem-solving patterns. Where that data is used for training, it creates a feedback loop that can speed up model improvement considerably.

However, this raises urgent questions regarding digital provenance and critical thinking. As AI becomes the primary interface for information access, society must develop robust filters and educational strategies to combat misinformation generated at scale, even if that misinformation is simply based on outdated training data.

Actionable Insights for the Road Ahead

For leaders looking to navigate this AI-saturated landscape, the path forward requires strategic alignment across technology, talent, and governance.

For Technology Leaders: Prioritize Integration and Edge Performance

Do not chase the next 10x model breakthrough if you cannot efficiently deploy the current iteration. Focus on **workflow integration**. Is your AI assisting a customer service agent natively within their CRM, or does the agent have to switch tabs to ask a question? Furthermore, start planning for a world where some inference moves closer to the user ("on the edge") to reduce latency and dependency on centralized data centers.

For Business Strategists: Redefine Roles, Not Just Processes

Simply automating 20% of a job title won't yield full returns. You must redefine what success looks like in that role. If AI writes the first draft of a legal brief, the human lawyer's value shifts entirely to critical review, strategic nuance, and ethical positioning. Upskilling must pivot from rote task mastery to **AI oversight and advanced prompt engineering**.

For Policy Makers and Educators: Focus on Digital Literacy and Ethics

The speed of adoption outpaces regulatory frameworks. Clear guidelines on data usage, intellectual property generated by AI, and transparency about when users are interacting with an AI versus a human are paramount. Education systems must rapidly integrate AI literacy as a fundamental skill, similar to basic computer skills 30 years ago.

Conclusion: The Utility Layer of Intelligence

The 900 million weekly user statistic for ChatGPT is less a snapshot of a single product's popularity and more a seismograph reading of global technological transformation. We have collectively crossed a threshold: powerful AI is no longer viewed as a discretionary luxury, and we have entered the era where it is treated as essential infrastructure.

The future implications are clear: the compute layer will become fiercely competitive, innovation will prioritize seamless integration over raw power, and the relationship between human effort and digital output will be permanently altered. The challenges ahead are not just about building faster chips or smarter algorithms; they are about adapting our economies, our skill sets, and our information ecosystems to sustain and ethically govern a world where generative intelligence is just a click away.