The Inference Revolution: Why Specialized Endpoints and Tool Use Are Replacing Generalized Cloud AI

The initial phase of generative AI adoption was defined by access. Companies flocked to the major cloud players, utilizing their established platforms to call powerful, often proprietary, Large Language Models (LLMs). However, as AI moves from novelty to core business functionality, the requirements have fundamentally changed. We are now witnessing the "Inference Revolution"—a decisive migration toward specialized hardware and tightly integrated software solutions designed not just to *run* models, but to run them fast and make them useful in complex workflows.

Recent analyses, such as those highlighting the offerings of providers like Clarifai alongside specialized hardware accelerators like Groq, confirm this structural shift. The days of simply waiting for a generic API response are ending. The future belongs to low-latency, tool-enabled AI.

The End of the Latency Trade-Off: Speed as a Feature, Not a Luxury

For many real-time applications—such as customer service bots requiring instant responses, financial trading tools, or interactive AR/VR experiences—a slight delay in AI response time (latency) is unacceptable. This is where specialized inference providers fundamentally disrupt the generalized cloud model.

The Race for Tokens Per Second

Generalized cloud providers typically optimize for massive scale and flexibility, often running models on standard, widely available GPUs. This is efficient for batch processing but can introduce bottlenecks for interactive workloads. Specialized players, on the other hand, are optimizing entire stacks around one goal: maximizing speed.

Consider the emergence of hardware designed specifically for inference, such as the LPU (Language Processing Unit) cited in competitive analyses. When infrastructure is purpose-built for the matrix multiplications that dominate inference, the two headline metrics both improve: tokens per second (throughput) rises and time to first token (latency) falls. In performance benchmarking discussions, the question is stark: can a specialized endpoint deliver conversational speed that feels instantaneous?
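To make the two metrics concrete, here is a minimal sketch of how time to first token and decode throughput could be measured against any streaming endpoint. The `fake_token_stream` generator is a hypothetical stand-in for a real provider's streaming response, and its delays are invented for illustration, not measurements of any product.

```python
import time
from typing import Dict, Iterable, Iterator


def fake_token_stream(n_tokens: int, first_delay: float, gap: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming endpoint (not a real provider API)."""
    time.sleep(first_delay)              # simulated queueing and prompt processing
    for i in range(n_tokens):
        if i > 0:
            time.sleep(gap)              # simulated per-token generation time
        yield f"tok{i}"


def measure_stream(stream: Iterable[str]) -> Dict[str, float]:
    """Measure time to first token and decode throughput for one streamed response."""
    start = time.perf_counter()
    first_at = None
    count = 0
    for _ in stream:
        if first_at is None:
            first_at = time.perf_counter()   # moment the first token arrived
        count += 1
    end = time.perf_counter()
    if first_at is None:
        raise ValueError("stream produced no tokens")

    decode_s = end - first_at
    # Throughput is computed over the tokens that followed the first one,
    # so it is not distorted by the initial wait.
    tokens_per_s = (count - 1) / decode_s if count > 1 and decode_s > 0 else 0.0
    return {"ttft_s": first_at - start, "tokens_per_s": tokens_per_s, "tokens": count}


if __name__ == "__main__":
    metrics = measure_stream(fake_token_stream(n_tokens=20, first_delay=0.2, gap=0.01))
    print(metrics)
```

Separating the two numbers matters because a provider can have high throughput and still feel slow if the first token arrives late. In practice the same function would be run many times per provider and summarized with percentiles rather than a single sample.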

For the Developer: If an application needs to feel natural, every millisecond counts. A response that takes 500 ms feels instant; one that takes 3 seconds feels broken. This pressure pushes developers away from generalized solutions and toward providers who treat latency as their primary product feature.

Function Calling: Transforming LLMs from Chatbots to Agents

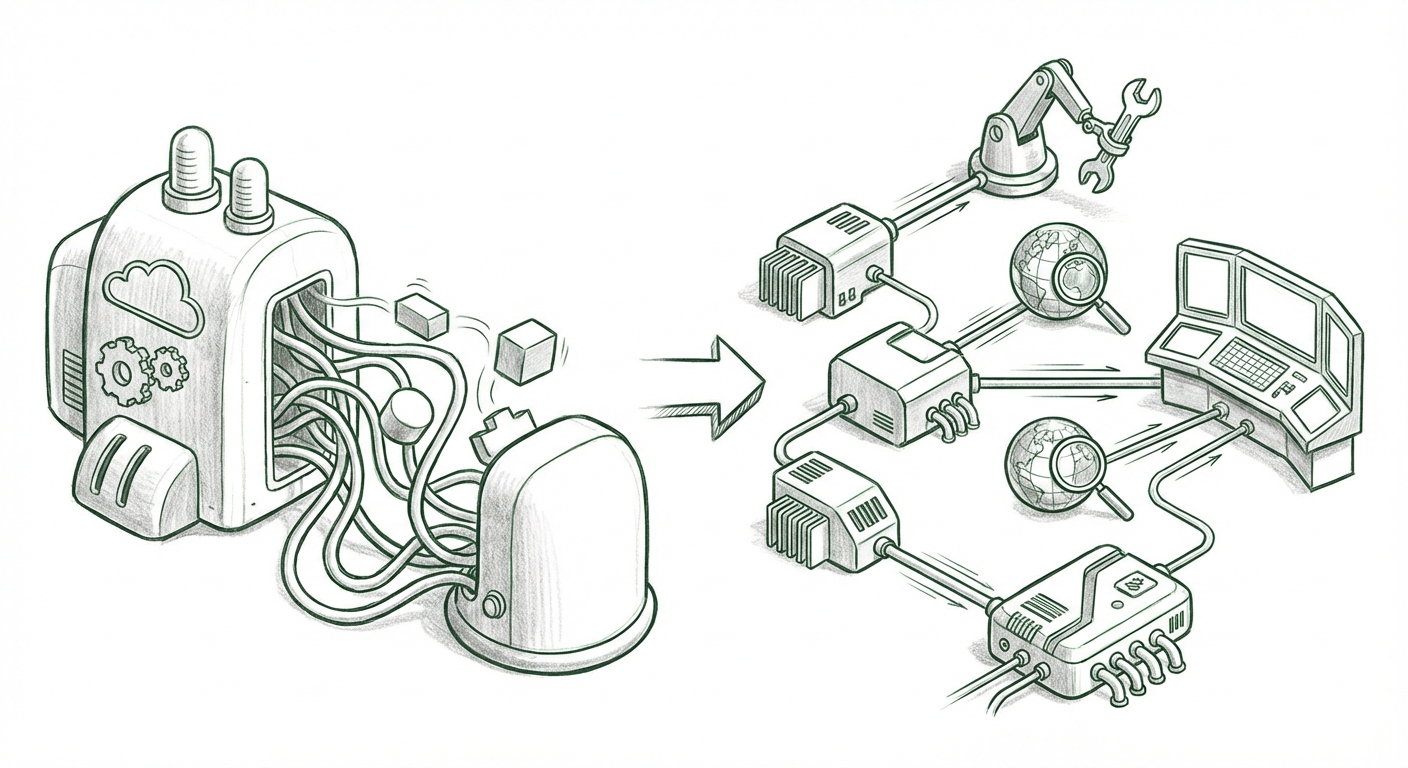

Speed alone isn't enough. A fast model that can only chat is limited. The critical development making LLMs truly powerful in enterprise settings is their ability to interact with the outside world—a capability often codified through Function Calling or Tool Use.

This is the process in which the LLM is taught to recognize when a user request requires an external action. For example, a user might ask, "What is the weather in New York, and can you book me a flight there?" The LLM doesn't inherently know the weather or how to book flights. Instead, it outputs a structured command (a function call) like `get_weather(city="New York")` or `book_flight(destination="X", date="Y")`.

The application hosting the LLM then executes that function against the relevant database or API and feeds the *result* back to the LLM. The LLM then summarizes the factual result for the user. This capability turns a static text generator into a dynamic AI agent.
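The round trip described above can be sketched in a few lines. This is an illustration under stated assumptions, not any provider's actual API: `fake_model` is a hypothetical stand-in for the LLM, the JSON message shapes are invented for this example, and `get_weather` returns canned data instead of calling a real service.

```python
import json
from typing import Any, Callable, Dict, List


def get_weather(city: str) -> Dict[str, Any]:
    """Hypothetical tool; a real application would call a weather API here."""
    return {"city": city, "forecast": "sunny", "temp_c": 21}


# Registry mapping tool names the model may emit to Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def fake_model(messages: List[Dict[str, str]]) -> str:
    """Stand-in for an LLM: requests a tool first, then summarizes the tool result."""
    last = messages[-1]
    if last["role"] == "tool":
        result = json.loads(last["content"])
        text = f"It is {result['forecast']} in {result['city']} ({result['temp_c']} C)."
        return json.dumps({"type": "text", "content": text})
    return json.dumps(
        {"type": "tool_call", "name": "get_weather", "arguments": {"city": "New York"}}
    )


def run_turn(user_text: str, model: Callable = fake_model, max_steps: int = 3) -> str:
    """Loop: ask the model, execute any requested tool, feed the result back."""
    messages = [{"role": "user", "content": user_text}]
    for _ in range(max_steps):
        reply = json.loads(model(messages))
        if reply["type"] == "text":
            return reply["content"]          # final answer for the user
        tool = TOOLS.get(reply["name"])
        if tool is None:
            raise KeyError(f"model requested unknown tool: {reply['name']}")
        result = tool(**reply["arguments"])  # the application, not the LLM, runs the tool
        messages.append({"role": "assistant", "content": json.dumps(reply)})
        messages.append({"role": "tool", "content": json.dumps(result)})
    raise RuntimeError("no final answer within max_steps")


if __name__ == "__main__":
    print(run_turn("What is the weather in New York?"))
```

The `max_steps` cap and the unknown-tool check are the kind of guardrails an application typically needs, since the model's output is only a request and the host code decides whether to act on it.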

Native Tool Integration as a Competitive Edge

As highlighted in technical discussions about developer tooling trends, function calling is quickly becoming the expected standard for production AI. Providers like Clarifai are strategically positioning themselves by integrating these developer tools directly into their deployment APIs (their Model Context Protocol, or MCP, servers). This tight coupling means less boilerplate code for developers.

Instead of manually parsing JSON outputs and managing separate libraries for tool invocation, developers using optimized endpoints can integrate complex, multi-step agentic workflows with greater reliability and speed. This move simplifies the MLOps pipeline for agent development significantly.

The MLOps Calculus: Total Cost of Ownership (TCO) Re-evaluated

The decision to adopt specialized inference is not purely technical; it is deeply economic. We must move past the sticker price of an API call and look at the Total Cost of Ownership (TCO) of an entire AI system.

Self-Hosting vs. Managed Specialized Service

Traditionally, the argument for large enterprises was: self-host on massive, general cloud infrastructure to maximize utilization and lower per-token cost at enormous scale. However, this analysis often ignores hidden costs:

- Engineering Overhead: The cost of hiring specialized engineers to manage GPU clusters, optimize schedulers, implement auto-scaling for inference traffic, and keep up with rapid framework updates is substantial.

- Model Drift and Maintenance: Managing the lifecycle of multiple open-source models requires constant engineering effort.

- Hardware Waste: General-purpose hardware often sits idle or underutilized when traffic fluctuates.

Specialized inference providers compete by offering a compelling alternative: they take on the TCO burden. By paying a managed fee for an optimized endpoint, companies effectively outsource the hardware optimization and scheduling complexity. The per-token cost might seem higher initially. Once reduced engineering headcount costs and faster time-to-market are factored in, however, the managed service often proves more economical for mid-to-large-scale deployments where engineering focus is better spent on the application layer, not the infrastructure layer.
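One way to frame this comparison is as a break-even calculation. The sketch below is deliberately simplified: every figure is a hypothetical placeholder rather than a real price, and self-hosted cost is treated as fixed per month, which ignores the extra hardware needed as traffic grows.

```python
from dataclasses import dataclass


@dataclass
class SelfHosted:
    """Hypothetical self-hosted cluster; all numbers are illustrative assumptions."""
    gpu_hourly_usd: float
    gpu_count: int
    engineers: float             # full-time engineers dedicated to the cluster
    engineer_annual_usd: float   # fully loaded annual cost per engineer

    def monthly_cost(self) -> float:
        # Hardware is paid for around the clock, whether busy or idle.
        hardware = self.gpu_hourly_usd * self.gpu_count * 24 * 30
        staff = self.engineers * self.engineer_annual_usd / 12
        return hardware + staff


@dataclass
class Managed:
    """Hypothetical managed endpoint billed per token."""
    usd_per_million_tokens: float

    def monthly_cost(self, tokens_per_month: float) -> float:
        return self.usd_per_million_tokens * tokens_per_month / 1_000_000


def break_even_tokens(self_hosted: SelfHosted, managed: Managed) -> float:
    """Monthly token volume at which both options cost the same."""
    return self_hosted.monthly_cost() / managed.usd_per_million_tokens * 1_000_000


if __name__ == "__main__":
    cluster = SelfHosted(gpu_hourly_usd=2.0, gpu_count=8, engineers=2, engineer_annual_usd=180_000)
    endpoint = Managed(usd_per_million_tokens=1.0)
    print(f"self-hosted per month: ${cluster.monthly_cost():,.0f}")
    print(f"managed at 10B tokens: ${endpoint.monthly_cost(10e9):,.0f}")
    print(f"break-even volume:     {break_even_tokens(cluster, endpoint):,.0f} tokens/month")
```

With these placeholder inputs, staffing rather than hardware makes up most of the self-hosted bill, which is the hidden cost the list above points to. Substituting real quotes and salaries is what makes the exercise meaningful.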

The Ecosystem Advantage: Multi-Model Strategy

A final, crucial aspect of this trend is the shift toward a diversified model portfolio. The dominance of a single model (like GPT-4) is waning as powerful open-source alternatives (like Mistral or specialized fine-tunes of Llama) become competitive in specific domains.

Enterprises cannot afford vendor lock-in to a single API. The best long-term strategy involves deploying the right model for the right task—perhaps using a small, fast open-source model for simple summarization and reserving the largest proprietary model for complex reasoning.

Platforms that host a wide variety of models, from cutting-edge open-source weights to proprietary foundation models, and serve them all through consistent, low-latency APIs (as Clarifai positions itself) become essential infrastructure hubs. This multi-model deployment strategy allows businesses to remain agile, optimize costs per task, and adhere to data sovereignty requirements by choosing the model that best fits both the task and the applicable regulation.
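A routing layer for this strategy can be quite small. The sketch below assumes a hypothetical catalog: the model identifiers, regions, and relative costs are invented for illustration and do not describe any real provider's lineup. It picks the cheapest model that can handle a task while respecting an optional region constraint.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Set


@dataclass(frozen=True)
class ModelOption:
    model_id: str            # hypothetical identifier, not a real catalog entry
    tasks: FrozenSet[str]    # task types this model is considered suitable for
    region: str              # where the model is served (for data sovereignty)
    relative_cost: float     # illustrative cost weight, not a real price


CATALOG: List[ModelOption] = [
    ModelOption("small-open-model", frozenset({"summarize"}), "eu", 1.0),
    ModelOption("large-proprietary-model", frozenset({"summarize", "reason"}), "us", 10.0),
    ModelOption("open-reasoning-finetune", frozenset({"reason"}), "eu", 12.0),
]


def choose_model(
    task: str,
    allowed_regions: Optional[Set[str]] = None,
    catalog: List[ModelOption] = CATALOG,
) -> ModelOption:
    """Return the cheapest model that supports the task within the allowed regions."""
    candidates = [
        m for m in catalog
        if task in m.tasks and (allowed_regions is None or m.region in allowed_regions)
    ]
    if not candidates:
        raise LookupError(f"no model for task={task!r} in regions={allowed_regions}")
    return min(candidates, key=lambda m: m.relative_cost)


if __name__ == "__main__":
    print(choose_model("summarize").model_id)
    print(choose_model("reason").model_id)
    print(choose_model("reason", allowed_regions={"eu"}).model_id)
```

Because callers ask for a task rather than a model name, swapping a catalog entry does not ripple through application code, which is the practical meaning of avoiding lock-in here.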

Implications: What This Means for the Future of AI Deployment

This bifurcation of the AI infrastructure market—specialists competing on speed and integration, generalists competing on breadth and existing ecosystem lock-in—has profound implications.

For Businesses: Specialization Drives Value Creation

Businesses must audit their current AI usage. If latency is impacting user satisfaction or if engineering teams are bogged down maintaining inference clusters, it is time to look seriously at managed, specialized endpoints. The focus must shift from "which cloud do we use?" to "which specialized inference pipeline delivers the necessary performance and tooling for this specific use case?"

Furthermore, the emphasis on function calling accelerates the timeline for production AI agents. Companies that rapidly adopt this tooling strategy will be the first to automate complex, multi-step processes across their operations.

For Developers: The Rise of the AI Systems Architect

The role of the ML Engineer is evolving. It’s no longer enough to know how to train a model; developers must become AI Systems Architects, proficient in stitching together specialized components—fast inference providers, external knowledge bases (RAG systems), and precise tool-calling mechanisms—to build robust, reliable agents.

For the Hardware Landscape: Inference as the New Frontier

The pressure from specialized providers fuels intense competition in hardware innovation. If the LPU example holds, we will see continued diversification beyond standard GPUs. The market will reward accelerators optimized solely for the massively parallel processing that inference workloads require, rather than for training.

Actionable Insights for Leaders

- Benchmark Latency, Not Just Cost: Mandate real-world latency testing (Time to First Token) for all mission-critical AI features before committing to an infrastructure provider.

- Prioritize Tooling Integration: When selecting an inference platform, evaluate its native support for function calling/tool use. Abstracting this complexity saves significant development time.

- Embrace Multi-Model Flexibility: Build MLOps pipelines that allow easy swapping between open-source and proprietary models served via consistent endpoints. Avoid being tied to a single vendor’s model catalog.

The AI infrastructure landscape is maturing rapidly. The age of generalized, slow, "good enough" AI is giving way to an era defined by razor-sharp focus: speed for real-time interaction and specialized tooling for intelligent automation. The winners in the next wave of AI adoption will be those who leverage the specialized inference revolution to deploy smarter, faster, and more capable AI agents.