The Era of Parallel AI Agents: Why Multi-Agent Code Review is the Next Leap in Software Reliability

The integration of Artificial Intelligence into software development has moved rapidly from novelty to necessity. Tools like AI pair programmers have become commonplace, suggesting code snippets and auto-completing functions. However, the latest development from Anthropic—the release of parallel AI agents within Claude Code specifically designed for rigorous code review—marks a subtle but profound shift. We are moving away from simple suggestion engines toward sophisticated, collaborative validation systems.

This isn't just about checking syntax; it’s about tackling mission-critical tasks like bug detection and security gap analysis before code ever reaches a production server. To understand the significance of this trend, we must analyze the architectural change it represents, how it impacts the highly competitive LLM landscape, and what it means for the future of secure software delivery (DevSecOps).

From Linear LLMs to Collaborative AI: The Power of Parallel Agents

Imagine asking a single person to check a complex contract for every potential legal loophole, financial risk, and grammatical error simultaneously. They might miss something due to cognitive load. Traditional, single-pass LLM code review often faces a similar challenge: one prompt asks one model to weigh correctness, security, and style all at once. The more concerns the prompt carries, the shallower the analysis of each tends to become.

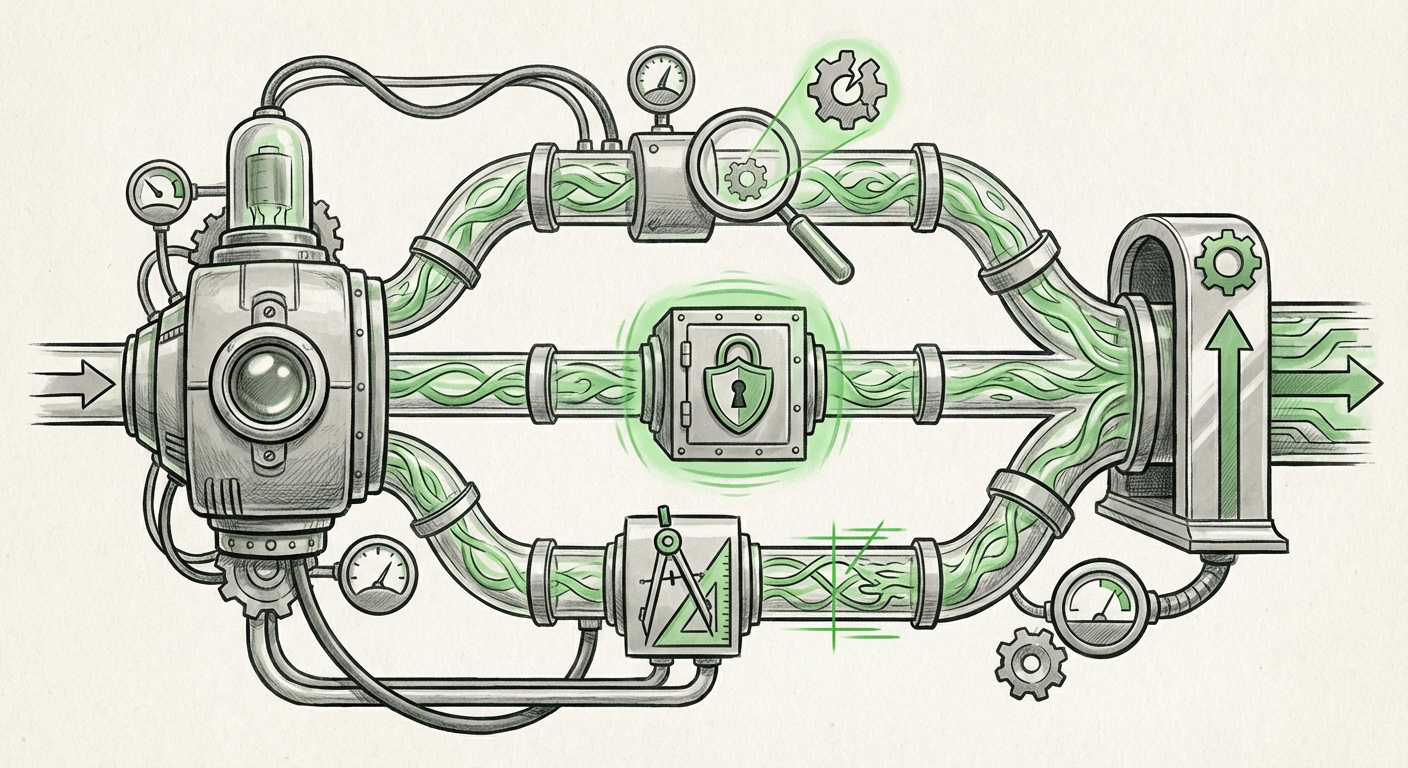

The concept of parallel AI agents changes this paradigm by mimicking how expert human teams divide review work. Instead of one large model doing everything in a single pass, the task is broken down:

- Agent A (The Bug Hunter): Focuses exclusively on runtime errors, logic flaws, and potential crashes.

- Agent B (The Security Auditor): Scans for known vulnerabilities (e.g., SQL injection paths, insecure dependencies, hardcoded secrets).

- Agent C (The Style & Performance Checker): Ensures the code is efficient, readable, and adheres to established architectural patterns.

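These agents work *in parallel* on the same changeset. Their findings are then synthesized into a single final report, possibly after a "debate" step in which the agents challenge one another's conclusions. Because each agent carries a narrow brief, the ensemble can examine each concern in more depth than a single pass, and overlapping findings act as a cross-check.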
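The fan-out and synthesis described above can be sketched in a few lines of Python. This is a minimal illustration, not Claude Code's actual implementation: the three reviewer functions and the `Finding` type are hypothetical stand-ins, and simple string checks take the place of real model calls.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    agent: str
    line: int
    message: str


# Hypothetical reviewers: each would call a model in practice.
# Simple string checks stand in for that call here.
async def bug_hunter(diff: str) -> list[Finding]:
    return [
        Finding("bug", n, "possible division by zero")
        for n, text in enumerate(diff.splitlines(), start=1)
        if "/ 0" in text
    ]


async def security_auditor(diff: str) -> list[Finding]:
    return [
        Finding("security", n, "hardcoded secret")
        for n, text in enumerate(diff.splitlines(), start=1)
        if "password =" in text.lower()
    ]


async def style_checker(diff: str) -> list[Finding]:
    return [
        Finding("style", n, "line longer than 100 characters")
        for n, text in enumerate(diff.splitlines(), start=1)
        if len(text) > 100
    ]


async def review(diff: str) -> list[Finding]:
    # Fan out: all reviewers run concurrently on the same changeset.
    results = await asyncio.gather(
        bug_hunter(diff), security_auditor(diff), style_checker(diff)
    )
    # Synthesis: flatten the per-agent lists and order them by line number.
    merged = [f for agent_findings in results for f in agent_findings]
    return sorted(merged, key=lambda f: (f.line, f.agent))


if __name__ == "__main__":
    sample = 'password = "hunter2"\nratio = total / 0\n'
    for finding in asyncio.run(review(sample)):
        print(f"{finding.agent}: line {finding.line}: {finding.message}")
```

Real reviewers would spend most of their time waiting on network calls to a model, which is why `asyncio.gather` is a reasonable fit here: the waits overlap instead of running back to back. A "debate" step, if used, would sit between the gather and the final sort.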

Architectural Necessity: Addressing LLM Limitations

The technical motivation behind this shift is clear: overcoming the limitations of a single, monolithic Large Language Model (LLM) pass. When assessing complex systems, a single LLM can lose track of parts of a large change (scope blindness) or report plausible-sounding problems that do not hold up (hallucination). By distributing the verification workload across specialized, concurrent agents, developers can achieve a higher degree of confidence in the result. This architectural evolution is central to advancing AI in engineering workflows.

For engineering leaders tracking technological trajectories, this signals that the next generation of AI tooling will be judged not just on the power of its base model, but on the sophistication of the orchestration around it: how work is split across agents and how their findings are reconciled.

The Competitive Arena: Specialization vs. Generalization

The entry of Anthropic's Claude Code into the automated review space intensifies the competition with existing leaders like GitHub Copilot (from Microsoft-owned GitHub, originally built on OpenAI models) and Google’s emerging Gemini capabilities. This move highlights a crucial ongoing debate in AI development: whether the future belongs to specialists or to generalists.

The Rise of Vertical AI

While generalist models like GPT-4 can perform many tasks reasonably well, mission-critical applications, where a missed bug costs millions or compromises user data, demand hyper-specialization. The case for a purpose-built tool like Claude Code is that agents given narrow, code-specific briefs should be better at spotting subtle coding anti-patterns than a general model instructed to "review this code."

This competitive dynamic means that every major LLM provider must now showcase proof of specialization in vertical markets. If a model cannot outperform specialized tools in coding, legal, or scientific research, it risks being relegated to consumer-facing tasks.

CTOs and engineering managers evaluating tooling must now prioritize models that offer demonstrable, specialized performance over sheer parameter count. The market is rapidly shifting toward tools that integrate deeply into the CI/CD pipeline and speak the precise language of software security and performance.

Implications for DevSecOps and Security Gaps

Perhaps the most significant practical implication of parallel AI reviewers is the immediate uplift in the DevSecOps cycle. Traditionally, security scanning involves multiple, often slow, specialized tools run at different stages: SAST (static application security testing) early, DAST (dynamic application security testing) later, and manual human review last.

When AI agents, particularly those designed with security verification in mind, can review a Pull Request (PR) immediately upon creation, they inject security oversight right at the point of change. This aligns perfectly with the "shift-left" security mantra—fixing issues when they are cheapest and easiest to correct.

Raising the Bar on Vulnerability Detection

The central question is *effectiveness*. Can an AI spot a novel vulnerability better than a highly paid security engineer? While AI may not yet find true zero-days consistently, its ability to catch known, high-frequency mistakes (like using deprecated cryptographic functions or ignoring input sanitization guidelines) on every change is hard to match at scale.

The parallel agent architecture enhances this further. If one agent flags a piece of code as potentially vulnerable, a second agent can be tasked specifically with generating test cases to prove or disprove the vulnerability. This immediate, automated verification helps filter out false positives and elevates the baseline security posture of any codebase.
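A flag-then-verify handoff of this kind might look like the sketch below. Everything in it is hypothetical and chosen for illustration: the `Suspicion` type, the two agent functions, and the sample query builders. The "verification" is a deliberately crude heuristic that checks whether a probe containing a quote character reaches the SQL text unchanged.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Suspicion:
    claim: str
    probes: list[str]  # inputs the verifying agent will try


def build_query(username: str) -> str:
    # Code under review: user input interpolated directly into SQL.
    return f"SELECT * FROM users WHERE name = '{username}'"


def build_query_safe(username: str) -> str:
    # Contrast case: the value is bound separately, so it never enters the SQL text.
    return "SELECT * FROM users WHERE name = ?"


def flagging_agent(snippet: str) -> Optional[Suspicion]:
    # Stand-in for a model call: flags SQL assembled with string formatting.
    if "SELECT" in snippet and "{" in snippet:
        return Suspicion(
            claim="possible SQL injection",
            probes=["x' OR '1'='1", "'; DROP TABLE users; --"],
        )
    return None


def verifying_agent(build: Callable[[str], str], suspicion: Suspicion) -> bool:
    # Confirms the claim if any quote-bearing probe appears verbatim in the query.
    return any("'" in probe and probe in build(probe) for probe in suspicion.probes)


if __name__ == "__main__":
    snippet = "f\"SELECT * FROM users WHERE name = '{username}'\""
    suspicion = flagging_agent(snippet)
    if suspicion is not None:
        confirmed = verifying_agent(build_query, suspicion)
        print(f"{suspicion.claim}: {'confirmed' if confirmed else 'not reproduced'}")
```

The useful property is the separation of roles: the first agent may over-report, because only findings the second agent can reproduce need to reach a human.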

For security professionals, this technology does not eliminate their role but elevates it. They shift from chasing low-hanging fruit identified by scanners to focusing on high-level architectural risks that still require nuanced human intuition.

Practical Takeaways and Actionable Insights for Engineering Teams

The integration of advanced, specialized, multi-agent AI into the software development lifecycle is no longer a distant prediction; it is a present reality shaping deployment strategies. What should businesses do now?

1. Audit Current AI Tooling for Agentic Capabilities

If your current AI coding assistant only offers single-pass suggestions or basic summarization, you are already lagging. Engineering leaders should actively benchmark tools based on their ability to handle complex validation tasks. Look for tooling that explicitly supports ensemble verification, debate, or multi-step reasoning pipelines.

2. Redefine the Role of the Human Reviewer

If an AI can handle 80% of standard bug and style checks, the role of the human reviewer must evolve. Actionable insight: Reallocate senior engineering time away from mundane style fixes and toward complex architectural decision reviews, performance tuning, and strategic security planning.

3. Embrace Specialization in Your AI Strategy

Do not assume one generalist LLM will rule all domains. Just as Anthropic has specialized Claude for code, organizations should seek out models fine-tuned for their specific domain—be it regulatory compliance parsing, specialized database querying, or complex scientific simulation.

4. Mandate Security-Focused Agent Review

For all new code merges, mandate that the AI review includes a dedicated security audit step powered by a specialized agent. Treat the AI’s security report with the same seriousness as a failing unit test. This proactive step is critical for minimizing technical debt and compliance risk.
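One way to make the security report behave like a failing unit test is a small gate that turns findings into a process exit code. The sketch below assumes a hypothetical report format (a JSON list of objects with `severity`, `file`, and `message` fields) and a hypothetical severity scale. Neither comes from any particular tool.

```python
import json
import sys

# Hypothetical severity scale; a real reviewer may use different labels.
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}


def blocking_findings(report: list[dict], threshold: str = "high") -> list[dict]:
    # Keep findings at or above the threshold; unknown or missing severities count as "low".
    floor = SEVERITY_ORDER[threshold]
    return [
        f for f in report
        if SEVERITY_ORDER.get(f.get("severity", "low"), 0) >= floor
    ]


def gate(report_json: str, threshold: str = "high") -> int:
    """Return a process exit code: 0 allows the merge, 1 blocks it."""
    blockers = blocking_findings(json.loads(report_json), threshold)
    for f in blockers:
        print(
            f"BLOCKING [{f.get('severity', 'low')}] "
            f"{f.get('file', '?')}: {f.get('message', '')}",
            file=sys.stderr,
        )
    return 1 if blockers else 0
```

A CI step could pipe the agent's report into a script that ends with `sys.exit(gate(sys.stdin.read()))`, so that a high-severity finding fails the pipeline the same way a broken test would. The threshold is a policy choice: starting at "high" and tightening over time avoids blocking every merge on day one.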

The Future Landscape: Autonomous Engineering and the Architect AI

Looking ahead, the parallel agent structure seen in Claude Code is the foundational stepping stone toward truly autonomous engineering systems. If multiple agents can reliably review code, the next step is enabling them to fix the reviewed code, test the fix, and submit the resolved PR—all without human intervention for routine changes.

We are moving toward a future where an "Architect AI" manages a swarm of specialized, parallel agents. These agents will handle the entire spectrum of software quality: from initial design integrity to deployment readiness and post-release monitoring. This promises an unprecedented acceleration in development velocity, provided we can maintain oversight and control over these increasingly complex, collaborative systems.

The introduction of parallel AI agents for code review signifies a maturation of applied LLMs. Reliability, depth, and security are now prioritized over mere capability. This is the turning point where AI stops being a helpful assistant and starts becoming an indispensable, self-correcting quality gatekeeper for the digital world.

Further Context and Corroborating Trends

To fully understand the trajectory implied by this development, consider these related areas of AI advancement:

- Competitive Feature Parity: Tracking how rivals like GitHub Copilot respond to this multi-agent validation approach confirms the industry's direction toward more robust PR integration.

- Multi-Agent System (MAS) Research: Exploring academic work on how diverse AI agents collaborate helps explain the technical backbone making this parallel review reliable.

- AI in Vulnerability Management: Understanding current industry benchmarks for automated security scanning provides a performance baseline against which these new AI reviewers must be measured.

- The Vertical AI Investment Thesis: Analyzing trends that favor deep, specialized model tuning over general performance illustrates why models like Claude Code gain traction in specific engineering tasks.