The Inference Wars: How Groq, Clarifai, and Specialized APIs are Redefining LLM Deployment Speed and Cost

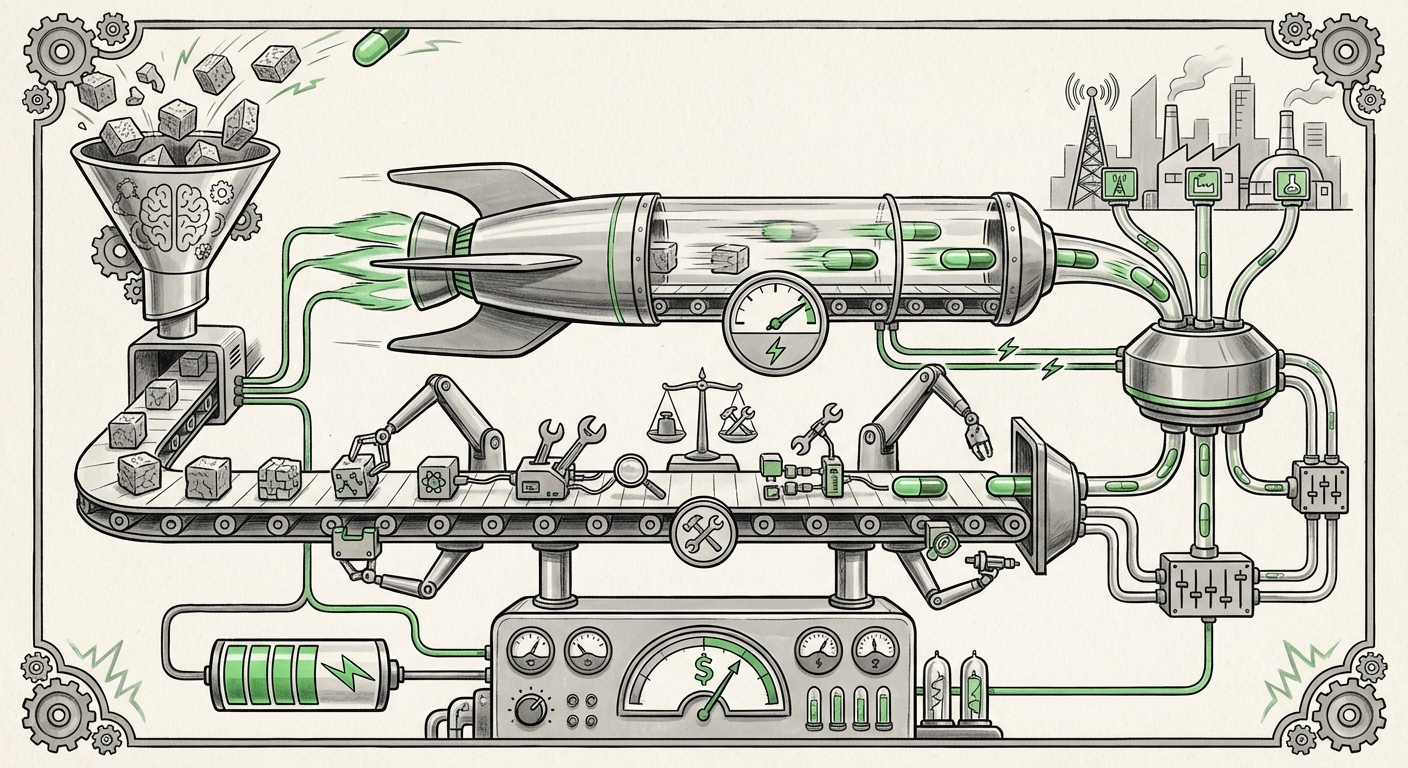

The race to build the most capable Large Language Model (LLM) often captures the headlines, but the true battleground for AI adoption—and profit—is increasingly shifting to inference. Inference is the process of actually running the finished model to generate answers, summarize data, or execute tasks. If building the model is like designing a supercar engine, inference is the high-speed track where the vehicle’s performance is truly tested.

Recent comparisons between AI platforms like Clarifai, Groq, Fireworks AI, and Together AI reveal a critical moment of divergence in the industry. We are moving beyond simple cloud hosting towards highly specialized infrastructure designed for speed, flexibility, and seamless integration. Understanding these dynamics is crucial, as they will dictate which applications become viable and how quickly AI transforms our daily digital interactions.

Phase One: The Need for Speed—Hardware Innovation Takes Center Stage

For many user-facing applications, latency—the time it takes for the AI to start responding—is the single biggest killer of user experience. A three-second wait for a chatbot feels slow; a half-second response feels instantaneous.

Groq, built around its proprietary Language Processing Unit (LPU) architecture, exemplifies this hardware focus. LLMs have traditionally been served on Graphics Processing Units (GPUs), which were originally designed for rendering graphics. GPUs excel at parallel work (doing many small things at once), but LLM text generation is fundamentally sequential: the model must produce one token before it can decide on the next.
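To make the sequential bottleneck concrete, here is a minimal sketch of a greedy decoding loop. The `toy_model` function is a hypothetical stand-in for a real forward pass, not any provider's API; the point is only that each step needs the output of the previous one, so the steps cannot be run side by side.

```python
from typing import Callable, List


def greedy_decode(
    next_token: Callable[[List[int]], int],
    prompt: List[int],
    max_new_tokens: int,
    eos: int,
) -> List[int]:
    """Generate tokens one at a time; every step sees all tokens produced so far."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        # Step t cannot start until step t-1 has finished and been appended.
        tok = next_token(tokens)
        tokens.append(tok)
        if tok == eos:
            break
    return tokens


def toy_model(context: List[int]) -> int:
    # Hypothetical stand-in for a model: next token = (last token + 1) mod 5.
    return (context[-1] + 1) % 5


print(greedy_decode(toy_model, prompt=[1], max_new_tokens=10, eos=0))
# prints [1, 2, 3, 4, 0]
```

Each pass through the loop is one full model invocation, which is why hardware that shortens a single step shows up directly as higher generation speed.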

According to industry comparisons of LLM chips, LPUs are designed specifically for this kind of sequential processing, which translates directly into high tokens-per-second rates. For engineers and infrastructure planners, this means the hardware foundation of AI serving is undergoing its first major shakeup since NVIDIA GPUs became dominant.
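When comparing providers, it helps to separate the two numbers that usually get blurred together: time to first token (how long before the response starts) and tokens per second (how fast it continues). The sketch below derives both from token arrival timestamps. The function and field names are illustrative, and the timestamps in the example are made up.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StreamMetrics:
    ttft_s: float        # time to first token, in seconds
    tokens_per_s: float  # decode throughput measured after the first token
    total_s: float       # time from request to last token


def stream_metrics(request_time: float, token_times: List[float]) -> StreamMetrics:
    """Compute latency metrics from the arrival time of each streamed token."""
    if not token_times:
        raise ValueError("no tokens received")
    ttft = token_times[0] - request_time
    total = token_times[-1] - request_time
    decode_window = token_times[-1] - token_times[0]
    n_after_first = len(token_times) - 1
    # Throughput is undefined with fewer than two tokens; report 0.0 in that case.
    tps = n_after_first / decode_window if decode_window > 0 else 0.0
    return StreamMetrics(ttft_s=ttft, tokens_per_s=tps, total_s=total)


# Made-up example: request sent at t=0.0, five tokens arrive 0.25 s apart.
m = stream_metrics(0.0, [0.5, 0.75, 1.0, 1.25, 1.5])
print(m.ttft_s, m.tokens_per_s, m.total_s)  # prints 0.5 4.0 1.5
```

In a real benchmark the timestamps would come from `time.perf_counter()` calls around a streaming response, repeated over many requests rather than one.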

What this means for the future: We are entering an era where hardware specialization dictates competitive advantage. If your application requires real-time decision-making—such as high-frequency trading analysis, instant content moderation, or highly responsive conversational agents—the speed offered by LPU-like architectures will soon become a non-negotiable feature rather than a premium option.

Phase Two: Flexibility in the Model Zoo—Open Source vs. Proprietary

While speed is intoxicating, accessibility and cost are the practical gatekeepers for mass adoption. This is where the business models of providers like Clarifai, Fireworks, and Together AI become relevant, particularly in how they treat open-source models (like Llama 3 or Mistral).

Running proprietary models (like GPT-4) means relying entirely on the provider’s API, with pricing and deprecation schedules you do not control and the potential for vendor lock-in. In contrast, services that excel at hosting and optimizing open-source models offer several advantages, which detailed cost analyses of running LLMs tend to bear out:

- Cost Control: Open source allows providers to optimize the model serving stack aggressively, potentially offering significantly lower price-per-token rates than closed models.

- Data Sovereignty: Running an open-source model on a dedicated inference endpoint, rather than sending sensitive data to a third-party large language model provider, offers greater control and security.

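- Customization: Businesses can fine-tune these models for proprietary tasks, subject to each model’s license terms. Those terms vary: some models ship under permissive licenses such as Apache 2.0, while Llama 3 uses Meta’s own community license, which carries additional conditions.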
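The cost-control point comes down to simple arithmetic once a workload is pinned down. Here is a rough sketch of that calculation. The prices are hypothetical placeholders chosen to make the math easy to follow, not quotes from any provider, and it assumes the common scheme of billing input and output tokens at separate per-million-token rates.

```python
def monthly_cost_usd(
    requests: int,
    input_tokens: int,
    output_tokens: int,
    price_in_per_m: float,
    price_out_per_m: float,
) -> float:
    """Estimate monthly spend from per-request token counts and per-million-token prices."""
    tokens_cost_per_request = input_tokens * price_in_per_m + output_tokens * price_out_per_m
    return requests * tokens_cost_per_request / 1_000_000


# Hypothetical workload: 1M requests/month, 1,000 input and 500 output tokens each.
workload = dict(requests=1_000_000, input_tokens=1_000, output_tokens=500)

# Hypothetical price points (USD per million tokens), for illustration only.
closed_model = monthly_cost_usd(**workload, price_in_per_m=10.0, price_out_per_m=30.0)
open_model = monthly_cost_usd(**workload, price_in_per_m=0.5, price_out_per_m=1.5)

print(f"closed: ${closed_model:,.2f}  open: ${open_model:,.2f}")
# prints closed: $25,000.00  open: $1,250.00
```

Plugging in a provider's actual published rates and your own measured token counts is what turns this from an illustration into a budget.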

The comparison highlights a split in the market: Some providers focus on being the best "delivery truck" for the newest proprietary models, while others act as optimized "warehouses" for the vast, rapidly improving library of open-source alternatives. Clarifai, for example, often emphasizes its ability to integrate and manage this entire "ModelOps" landscape, regardless of the model’s origin.

Practical Implication for Businesses: CTOs must decide where their core intellectual property resides. If proprietary knowledge is key, flexibility in hosting open-source models becomes a vital hedging strategy against unforeseen price hikes or service deprecation from the major proprietary vendors.

Phase Three: From APIs to Agents—The Centrality of Workflow Integration

The ultimate goal of modern AI is not just smarter text generation; it’s creating autonomous agents that can perform multi-step tasks. This requires the LLM to interact with the real world—checking databases, sending emails, or looking up current information. This integration is achieved primarily through function calling (or tool use).

As industry discussions around function calling integration confirm, this feature is rapidly moving from a novel trick to a fundamental requirement for production AI systems. If an LLM cannot reliably use tools, it remains a sophisticated chatbot; if it can, it becomes a digital assistant.
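For readers who have not wired this up before, the application side of function calling is mostly a dispatch problem: the model emits a structured request naming a tool and its arguments, and your code runs it and hands the result back. The sketch below assumes the model's tool call arrives as a JSON object with `name` and `arguments` keys. That shape, the `tool` decorator, and `get_order_status` are all hypothetical choices for illustration, not any specific provider's format.

```python
import json
from typing import Any, Callable, Dict

# Registry of functions the model is allowed to call, keyed by name.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the dispatcher can find it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> dict:
    # Hypothetical stand-in for a real database or internal API lookup.
    return {"order_id": order_id, "status": "shipped"}


def dispatch(tool_call_json: str) -> str:
    """Run the requested tool and return a JSON string to send back to the model."""
    try:
        call = json.loads(tool_call_json)
        name, args = call["name"], call.get("arguments", {})
    except (json.JSONDecodeError, KeyError, TypeError, AttributeError) as exc:
        return json.dumps({"error": f"malformed tool call: {exc}"})
    fn = TOOLS.get(name)
    if fn is None:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        return json.dumps({"result": fn(**args)})
    except TypeError as exc:  # wrong or missing arguments from the model
        return json.dumps({"error": f"bad arguments: {exc}"})


print(dispatch('{"name": "get_order_status", "arguments": {"order_id": "A123"}}'))
```

Returning errors as data rather than raising lets the model see what went wrong and retry, which is a large part of what "reliably use tools" means in practice.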

Platforms that focus heavily on ModelOps, like Clarifai, emphasize making this integration seamless. They offer environments where developers can easily define, deploy, and connect external APIs to the LLM’s decision-making process. This abstraction layer saves enormous development time.

Future Implications: The value proposition of inference providers is expanding. It’s no longer enough to be fast; they must also be smart connectors. The next generation of AI platforms will be judged less on the speed of a single token and more on the reliability and breadth of the tools their integrated LLMs can wield. This is the democratization of agent building, making complex automation accessible to more developers.

The Evolving Infrastructure Map: Centralized Power vs. Decentralized Alternatives

While the primary competition centers on centralized, high-performance API endpoints, the underlying infrastructure is quietly diversifying. Research into decentralized AI inference platforms reveals a growing philosophical and practical counter-narrative to reliance on the handful of dominant cloud hyperscalers.

Decentralized networks leverage distributed GPU power, often pooling resources from smaller data centers or even individuals. Compared with centralized providers, this approach offers potential gains in resilience and cost:

- Geopolitical Risk Mitigation: Relying solely on one national infrastructure for critical AI capability is increasingly seen as a strategic risk. Decentralization spreads this risk.

- Cost Arbitrage: When demand spikes (like during a major model release), decentralized options can sometimes offer cheaper access to compute power that might otherwise be locked up in high-cost, high-demand cloud regions.

However, this remains the most immature segment. While promising lower long-term costs and greater autonomy, decentralized inference often struggles with the consistency, security guarantees, and immediate scalability offered by established players.

Contextualizing the Market: Where Do the Giants Stand?

The specialized inference providers are competing not just against each other, but against the massive Machine Learning as a Service (MLaaS) offerings from AWS, Azure, and Google Cloud. Analyst reports, such as those summarized in Gartner's latest MLaaS evaluations, typically place these giants high on "Completeness of Vision" due to their end-to-end cloud integration.

This context is vital: Groq, Together AI, and Fireworks are not trying to replace the entire cloud stack; they are aiming to own the *performance layer* within that stack. They succeed when developers realize they need speed or cost optimization that generalist cloud infrastructure cannot provide out of the box.

The continued disruption by these focused players validates the hypothesis that LLM inference is unique enough to warrant dedicated, specialized services, even if they eventually plug *into* the larger cloud ecosystems.

Actionable Insights for AI Leaders

For business leaders and engineers navigating this fast-moving front, strategic decisions must be made today:

- Benchmark Latency vs. Cost: Do not rely on marketing headlines. Run proofs of concept comparing the tokens-per-second speed of a Groq integration against the cost structure of a Together AI or Fireworks endpoint for your specific LLM workload. A 50% speed increase is only worth a higher bill if the latency gain changes the product experience; once responses already feel instantaneous, extra speed buys little.

- Prioritize Tooling Readiness: If your roadmap involves building complex AI agents, immediately assess the function-calling and tooling integration capabilities of your chosen provider. A fast model that cannot use your internal APIs is useless.

- Adopt a Hybrid Hosting Strategy: Assume vendor lock-in is dangerous. By heavily utilizing optimized open-source deployments (via services like Fireworks or Together) for high-volume, stable tasks, you maintain leverage and insulate yourself from volatility in the proprietary model market.

- Monitor Hardware Evolution: Keep a close eye on LPU and other custom silicon developments. These breakthroughs will continue to lower the floor price for real-time AI interaction, potentially making previously impossible applications financially feasible within the next 18-24 months.
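One way to turn the benchmarking advice above into a decision rule is to fix a latency budget first, then pick the cheapest option that meets it. The sketch below shows that selection step only. The provider labels and all numbers are invented for illustration; real inputs would come from your own proof-of-concept measurements.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class BenchResult:
    provider: str
    p95_latency_s: float             # measured 95th-percentile end-to-end latency
    cost_per_1k_requests_usd: float  # measured cost for your workload


def cheapest_within_budget(
    results: List[BenchResult], latency_budget_s: float
) -> Optional[BenchResult]:
    """Return the lowest-cost result that meets the latency budget, or None."""
    eligible = [r for r in results if r.p95_latency_s <= latency_budget_s]
    return min(eligible, key=lambda r: r.cost_per_1k_requests_usd, default=None)


# Invented numbers for three anonymous endpoints.
results = [
    BenchResult("provider_a", p95_latency_s=0.4, cost_per_1k_requests_usd=3.00),
    BenchResult("provider_b", p95_latency_s=0.9, cost_per_1k_requests_usd=1.20),
    BenchResult("provider_c", p95_latency_s=1.8, cost_per_1k_requests_usd=0.80),
]

for budget in (0.5, 1.0, 2.0):
    choice = cheapest_within_budget(results, budget)
    print(budget, choice.provider if choice else None)
# prints:
# 0.5 provider_a
# 1.0 provider_b
# 2.0 provider_c
```

Framing the choice this way keeps speed from being bought for its own sake: the fastest endpoint wins only when the budget actually requires it.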

The inference landscape is a fierce, dynamic ecosystem. It’s a competition that moves at the speed of silicon innovation, where the winner is not necessarily the creator of the biggest model, but the provider who can deliver the smartest, fastest, and most cost-effective action when you press "Enter."