The Inference Arms Race: Why Serving Frameworks Define the Next Era of Production AI

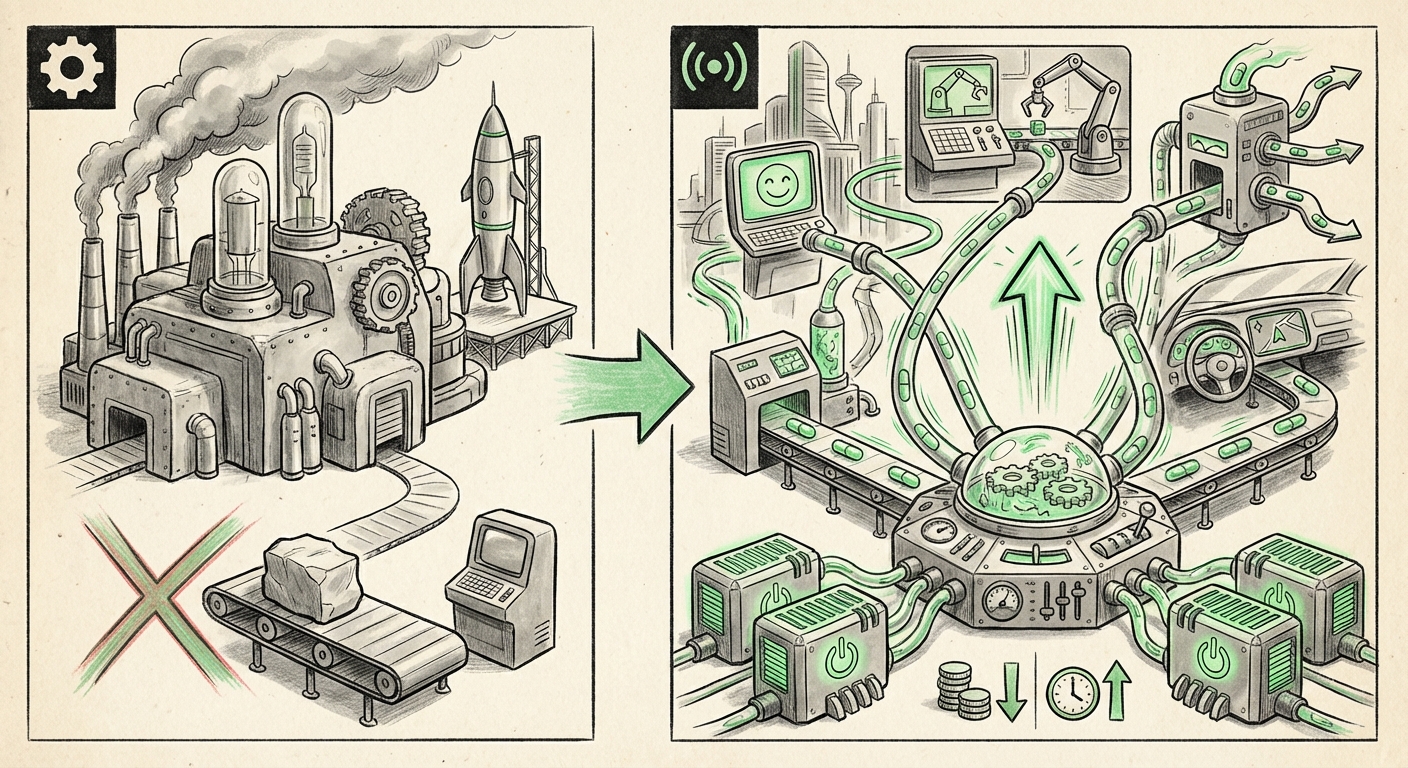

For years, the story of artificial intelligence was about the training run: the massive, multimillion-dollar sprint to create a foundation model. Today, that narrative has flipped. The real barrier to entry, the true choke point for mainstream AI adoption, isn't training; it's inference. It's about serving those giant models quickly, affordably, and reliably when millions of users ask a question.

This shift from training to serving has brought fierce competition to the often-overlooked software layer sitting between the GPU hardware and the user application: the LLM Serving Framework. Tools like **vLLM**, **NVIDIA Triton Inference Server**, and **Text Generation Inference (TGI)** are no longer just technical footnotes; they are the engines dictating the viability of AI products.

The Crux of the Matter: Speed Meets Scale

Imagine running a popular website. If every page load took 30 seconds, no one would use it. LLMs face the same reality. Users demand instant replies. In generative AI, responsiveness is measured by latency, most visibly the Time to First Token (TTFT), and capacity is measured by throughput: how many requests (or tokens) the system can handle per second.
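To make the two metrics concrete, here is a minimal sketch of how they could be computed from per-request timestamps. The `RequestTrace` record and the sample numbers are illustrative assumptions, not output from any particular framework.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RequestTrace:
    """Hypothetical per-request timing record (all times in seconds)."""
    sent_at: float          # when the client sent the request
    first_token_at: float   # when the first generated token arrived
    finished_at: float      # when the last generated token arrived
    output_tokens: int      # number of tokens generated


def ttft(trace: RequestTrace) -> float:
    """Time to First Token: delay between sending and the first token."""
    return trace.first_token_at - trace.sent_at


def summarize(traces: List[RequestTrace]) -> Dict[str, float]:
    """Aggregate latency and throughput over a measurement window."""
    # The window runs from the first request sent to the last one finished.
    window = max(t.finished_at for t in traces) - min(t.sent_at for t in traces)
    ttfts = [ttft(t) for t in traces]
    return {
        "mean_ttft_s": sum(ttfts) / len(ttfts),
        "max_ttft_s": max(ttfts),
        "requests_per_s": len(traces) / window,
        "tokens_per_s": sum(t.output_tokens for t in traces) / window,
    }


# Made-up sample data for three overlapping requests.
traces = [
    RequestTrace(0.0, 0.25, 2.0, 100),
    RequestTrace(0.5, 1.0, 3.0, 150),
    RequestTrace(1.0, 1.25, 4.0, 150),
]
stats = summarize(traces)
print(stats)
```

In practice, percentile latencies (p95, p99) usually matter more than the mean, since a few slow responses dominate how users perceive the product.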

Why do we need specialized software for this? Naive model serving is inefficient. During generation, each request accumulates a key-value (KV) cache whose final size is not known in advance, so GPU memory is easily over-reserved or fragmented, and the GPU's compute sits idle much of the time. Serving frameworks address this with several engineering techniques:

- Continuous Batching: Instead of waiting for an entire batch of requests to finish before starting the next, the scheduler admits new requests as soon as earlier ones complete, keeping the GPU busy.

- Memory Management: Techniques like vLLM’s PagedAttention store the KV cache in small fixed-size blocks, much as an operating system pages virtual memory, so long conversations no longer require reserving vast contiguous chunks of expensive GPU RAM.
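The scheduling difference can be illustrated with a toy simulation. This is a sketch under simplifying assumptions (each request needs a known number of decode iterations, and every iteration costs the same), not how any real framework implements its scheduler.

```python
from collections import deque
from typing import List


def static_batching_steps(lengths: List[int], batch_size: int) -> int:
    """Static batching: each batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths: List[int], batch_size: int) -> int:
    """Continuous batching: a waiting request takes a slot as soon as one frees up."""
    queue = deque(lengths)
    running: List[int] = []  # remaining decode iterations per active request
    steps = 0
    while queue or running:
        # Admit waiting requests into any free slots before the next iteration.
        while queue and len(running) < batch_size:
            running.append(queue.popleft())
        # One decode iteration: every active request generates one token,
        # and requests that have finished leave the batch.
        running = [r - 1 for r in running if r - 1 > 0]
        steps += 1
    return steps


# Alternating short and long requests, two slots on the GPU.
lengths = [2, 8, 2, 8, 2, 8]
static = static_batching_steps(lengths, batch_size=2)
cont = continuous_batching_steps(lengths, batch_size=2)
print(static, cont)
```

With this mix, static batching needs 24 iterations and continuous batching needs 18, because short requests no longer hold a slot hostage until the longest request in their batch completes.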

Recent comparisons of vLLM, Triton, and TGI tend to show no universal winner: each comes out ahead for particular workloads. This choice is where the rubber meets the road for ML engineers, and it can determine whether an application costs $100 or $10,000 a day to run.

Connecting the Technical Benchmarks to Business Reality

The technical depth behind these tools is significant, often focusing on specific memory optimizations. However, the implication for the business is simple: Inference cost is the primary driver of AI business models today.

Analyses of LLM inference cost trends in 2024 consistently highlight that inference OpEx now dwarfs initial training expenditure for many deployed applications. If your serving framework can double your requests per second (throughput) on the same hardware, you have effectively halved your cost per request. This single optimization can transform an unprofitable AI feature into a market differentiator.
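The arithmetic behind that claim is simple enough to sketch. The hourly GPU price and request rates below are placeholder values chosen for illustration, not measured figures.

```python
def cost_per_1k_requests(gpu_hourly_usd: float, requests_per_s: float) -> float:
    """Serving cost per 1,000 requests for hardware billed by the hour."""
    requests_per_hour = requests_per_s * 3600
    return gpu_hourly_usd / requests_per_hour * 1000


# Assumed numbers: a $4/hour GPU instance sustaining 10 vs. 20 requests/second.
baseline = cost_per_1k_requests(gpu_hourly_usd=4.0, requests_per_s=10.0)
doubled = cost_per_1k_requests(gpu_hourly_usd=4.0, requests_per_s=20.0)

print(f"baseline: ${baseline:.4f} per 1k requests")
print(f"doubled throughput: ${doubled:.4f} per 1k requests")
```

Because the hardware bill is fixed per hour, cost per request is inversely proportional to sustained throughput, which is why doubling throughput halves the unit cost.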

For the CTO, the decision is purely financial: which framework provides the best performance-per-dollar ratio on our chosen cloud or proprietary hardware? For the product manager, it translates to user experience: can we support peak traffic without introducing frustrating delays?

From Static Response to Dynamic Action: The Agentic Future

The next major leap in AI usage involves moving beyond simple chatbots to creating sophisticated AI Agents—systems that can reason, plan, and execute complex tasks by interacting with the outside world. This is powered by Function Calling (or Tool Use).

Once tools are woven into an LLM workflow, fast responses are needed at every step. Why? Because an agent often engages in a multi-step "thought process." At each step, the LLM needs to decide: "Do I answer directly, or do I call the external inventory API?" Latency compounds across these steps: if each decision (an inference call) is slow, the entire workflow stalls, leading to a user experience that feels clumsy and unreliable.

Therefore, the speed offered by highly optimized servers like vLLM is not just about customer satisfaction; it's about making complex agent behavior feasible at all. An agent system built on slow inference is doomed to fail in production.
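A back-of-the-envelope model shows how per-call latency compounds over a multi-step workflow. This is a deliberately simplified sketch: it assumes every step makes one LLM call followed by one tool call, and all the numbers are illustrative assumptions.

```python
def llm_call_latency(ttft_s: float, output_tokens: int, time_per_token_s: float) -> float:
    """Approximate time for one LLM call: first-token delay plus decode time."""
    return ttft_s + output_tokens * time_per_token_s


def agent_latency(steps: int, ttft_s: float, output_tokens: int,
                  time_per_token_s: float, tool_latency_s: float) -> float:
    """End-to-end latency when each step is one LLM call plus one tool call."""
    per_step = llm_call_latency(ttft_s, output_tokens, time_per_token_s) + tool_latency_s
    return steps * per_step


# Same five-step workflow on a slow and a fast serving setup (assumed values).
slow = agent_latency(steps=5, ttft_s=2.0, output_tokens=50,
                     time_per_token_s=0.05, tool_latency_s=0.2)
fast = agent_latency(steps=5, ttft_s=0.2, output_tokens=50,
                     time_per_token_s=0.02, tool_latency_s=0.2)
print(f"slow: {slow:.1f}s, fast: {fast:.1f}s")
```

Under these assumptions the slow setup takes about 23.5 seconds end to end and the fast one about 7 seconds, even though the tool calls themselves are identical.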

Bridging the Layers: Serving and Orchestration

Architects are now focusing on how to integrate these serving layers with orchestration frameworks like LangChain or LlamaIndex. For function calling in production, the emerging pattern is clear: the serving framework must expose a standardized, fast API that the orchestration layer can rely on.
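One common form this standardized API takes is an OpenAI-style chat completions endpoint, which vLLM and TGI both offer. The sketch below builds such a request body with a tool definition and parses tool calls out of a response. The `check_inventory` tool, the model name, and the sample response are hypothetical, hand-written for illustration; whether a server actually emits tool calls depends on the model and its configuration.

```python
import json
from typing import Any, Dict, List


def build_chat_request(model: str, user_message: str,
                       tools: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Build an OpenAI-style chat completions request body with tools attached."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "tools": tools,
    }


def extract_tool_calls(response: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Return the tool calls in the first choice, with arguments decoded from JSON."""
    message = response["choices"][0]["message"]
    calls = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        calls.append({"name": fn["name"], "arguments": json.loads(fn["arguments"])})
    return calls


# Hypothetical tool definition for the inventory example.
inventory_tool = {
    "type": "function",
    "function": {
        "name": "check_inventory",
        "description": "Look up stock for a SKU.",
        "parameters": {
            "type": "object",
            "properties": {"sku": {"type": "string"}},
            "required": ["sku"],
        },
    },
}

request_body = build_chat_request("my-served-model", "Is SKU-123 in stock?", [inventory_tool])

# Hand-written sample response in the same format (not real server output).
sample_response = {
    "choices": [{
        "message": {
            "role": "assistant",
            "content": None,
            "tool_calls": [{
                "id": "call_0",
                "type": "function",
                "function": {"name": "check_inventory",
                             "arguments": "{\"sku\": \"SKU-123\"}"},
            }],
        }
    }]
}
calls = extract_tool_calls(sample_response)
print(calls)
```

Keeping the request and response handling in this shared format means the orchestration layer can switch serving backends without changing its tool-calling logic.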

This means the serving software itself is becoming part of the business logic interface, demanding tighter integration standards and robust error handling, all while maintaining peak throughput.

Looking Ahead: The Hardware-Software Co-Evolution

While today's benchmarks focus on GPUs, the long-term future of inference efficiency demands looking beyond them. The market is actively researching and deploying specialized silicon designed only for inference—ASICs, custom accelerators, and novel memory architectures.

This realization drives another major trend: the serving software must become hardware-agnostic or easily adaptable. Work on inference acceleration beyond GPUs suggests that today's best-of-breed software (like Triton, which has a history of supporting diverse hardware backends) is well-positioned for this transition. Frameworks that only optimize for one specific GPU generation risk becoming obsolete quickly.

What this means for the future: We will see an explosion of specialized inference chips running highly optimized, proprietary versions of the serving logic we see today. The ability of a framework to quickly adopt new hardware acceleration libraries (like TensorRT-LLM on new NVIDIA cards, or custom kernels for specialized silicon) will be the ultimate measure of its long-term success.

Practical Implications: Actionable Insights for Stakeholders

Understanding the competition between vLLM, Triton, and TGI isn't academic; it drives immediate action across the organization.

For ML Engineers and Architects:

- Benchmark Constantly: Do not rely on historical data. As models change (e.g., shifting from Llama 2 to Llama 3), re-benchmark the major serving frameworks against your specific batch sizes and latency requirements.

- Prioritize Memory Efficiency: Techniques like PagedAttention (vLLM's strength) save enormous amounts of money when running large contexts or serving many concurrent users. Understand the memory management strategy of your chosen framework.

- Design for Heterogeneity: If vendor lock-in is a concern, favoring frameworks with broader hardware compatibility (like Triton) might offer better future flexibility than systems tied too tightly to specific CUDA versions or architectures.
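To see why the memory management strategy matters, the following sketch compares two ways of reserving KV-cache space: one contiguous region per request sized for the maximum sequence length, versus fixed-size blocks allocated on demand. It is a simplified accounting of the idea behind block-based allocation, not vLLM's actual implementation, and the block size, maximum length, and sequence lengths are illustrative assumptions.

```python
import math
from typing import List


def preallocated_slots(seq_lens: List[int], max_len: int) -> int:
    """Token slots reserved when every request gets a max_len contiguous region."""
    return len(seq_lens) * max_len


def paged_slots(seq_lens: List[int], block_size: int) -> int:
    """Token slots reserved when each request gets just enough fixed-size blocks."""
    return sum(math.ceil(n / block_size) * block_size for n in seq_lens)


# Four concurrent requests with very different actual lengths (in tokens).
seq_lens = [100, 900, 250, 40]
used = sum(seq_lens)

pre = preallocated_slots(seq_lens, max_len=2048)
paged = paged_slots(seq_lens, block_size=16)

print(f"tokens actually stored: {used}")
print(f"preallocated: {pre} slots, {pre - used} wasted")
print(f"paged:        {paged} slots, {paged - used} wasted")
```

With block-based allocation, waste is bounded by less than one block per request, so the memory freed up can hold more concurrent requests on the same GPU.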

For Business Leaders and Product Teams:

- Inference Budgeting: Treat the inference serving layer as a primary budget item. Allocate dedicated resources to optimization; the ROI on reducing serving costs is often immediate and substantial.

- Agent Readiness: If your roadmap includes multi-step AI agents, ensure your current serving setup supports sub-second TTFT. Speed is a core feature of agent reliability.

- API Standard Choice: When building external APIs for your LLM service (for example, those that host MCP servers), ensure they are low-latency and adhere to modern standards that integrate easily with orchestration tools.

The Future Is Fast, Cheap, and Smart

The battle over LLM serving frameworks is arguably the most important technological arms race happening right now, precisely because it is the prerequisite for widespread, profitable AI deployment. The era of showing off massive training clusters is waning; the era of demonstrating superior, cost-effective inference at scale is here.

The future of AI won't just be defined by *what* models we build, but *how* effectively we can run them for billions of interactions every day. Frameworks that master speed, density, and hardware adaptability will be the unsung heroes powering the next wave of truly intelligent applications, from hyper-personalized customer service bots to autonomous enterprise agents capable of running complex financial models or managing supply chains in real time.

The gap between a promising research demo and a globally scaled product is bridged by software like vLLM, Triton, and TGI. Mastering this middleware is mastering the economics and the usability of Artificial Intelligence itself.