The Inference Frontier: Why LLM Serving Frameworks Dictate the Speed and Scale of Future AI Agents

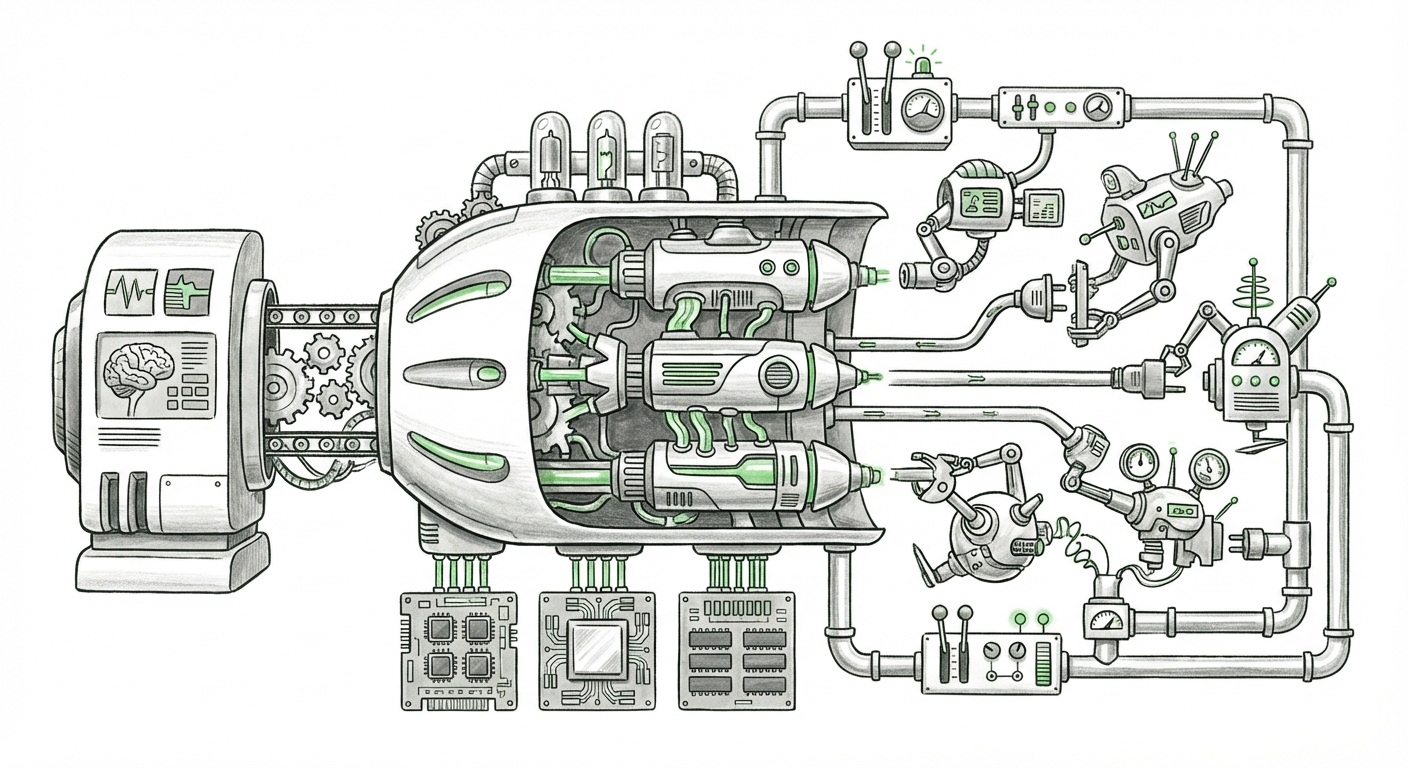

For years, the AI conversation focused on model training—building the biggest, smartest neural networks. Today, the bottleneck has shifted dramatically. It’s no longer just about creating a brilliant Large Language Model (LLM); it’s about delivering that brilliance reliably, quickly, and affordably to millions of users. This is the world of LLM inference serving, and it is the hidden engine powering the next wave of AI innovation.

Recent analyses, such as those comparing frameworks like vLLM, Triton, and TGI (Text Generation Inference), highlight a core tension in MLOps: the trade-off between raw performance (speed) and operational simplicity (deployment ease). To understand where AI is heading—towards sophisticated, autonomous agents—we must look deeper into these serving technologies, the hardware they demand, and how they enable complex workflow integration.

From Research Lab to API Endpoint: The Inference Gap

When engineers deploy an LLM, they move it from a static checkpoint file to a dynamic service that must answer user requests in milliseconds. This transition is messy. A naive deployment copes poorly with even a few concurrent users: responses slow down (high latency), the number of requests served per second stays low (low throughput), and the service may crash under heavy load.

The frameworks we are comparing—vLLM, Triton, and TGI—are specialized software libraries designed to solve this problem by maximizing hardware utilization:

- vLLM: Known for its PagedAttention mechanism, which manages the attention key-value cache in fixed-size blocks. This sharply reduces GPU memory fragmentation and lets the server handle many more concurrent requests (higher throughput).

- TGI (Text Generation Inference): Hugging Face’s solution, deeply integrated with their ecosystem, focusing on optimized text generation features like quantization and continuous batching.

- Triton Inference Server: NVIDIA’s powerful, general-purpose serving engine, offering extensive flexibility and multi-framework support, though often requiring deeper configuration expertise.
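To make the memory argument behind PagedAttention concrete, here is a minimal sketch of a block-based cache allocator. It is a toy model of the idea only, not vLLM's implementation: the class name `PagedKVCache`, the helper functions, and all sizes are assumptions chosen for illustration.

```python
import math


class PagedKVCache:
    """Toy block allocator: each sequence owns a list of fixed-size blocks."""

    def __init__(self, num_blocks: int, block_size: int):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))  # physical block ids
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.lengths = {}       # seq_id -> number of tokens stored

    def append_token(self, seq_id: str) -> bool:
        """Reserve room for one more token; return False if no block is free."""
        length = self.lengths.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length == len(table) * self.block_size:  # no block yet, or last block full
            if not self.free_blocks:
                return False
            table.append(self.free_blocks.pop())
        self.lengths[seq_id] = length + 1
        return True

    def release(self, seq_id: str) -> None:
        """Return all blocks of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


def max_sequences_contiguous(capacity_tokens: int, max_len: int) -> int:
    """Sequences that fit when each one reserves max_len tokens up front."""
    return capacity_tokens // max_len


def max_sequences_paged(capacity_tokens: int, block_size: int, actual_len: int) -> int:
    """Sequences that fit when each one only holds the blocks it actually uses."""
    total_blocks = capacity_tokens // block_size
    return total_blocks // math.ceil(actual_len / block_size)
```

With a hypothetical capacity of 8192 cached tokens, reserving 2048 tokens per sequence up front fits 4 sequences, while 16-token blocks fit 73 sequences of 100 tokens each. The waste per sequence is bounded by one partially filled block instead of the unused remainder of a worst-case reservation.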

The initial decision is often binary: Do we choose the easy path—a managed cloud endpoint—or the high-performance path—customizing one of these open-source servers to squeeze every drop of performance out of our GPUs? The truth is, the future demands both.

1. The Pursuit of Tokens Per Second: Beyond the Initial Comparison

While an introductory article might give a general overview, true MLOps success requires rigorous, up-to-date benchmarking. Performance metrics—tokens generated per second (throughput) and the time until the first token appears (latency)—are constantly shifting as models (like Llama 3 or Mixtral) and serving techniques evolve.
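As a rough illustration of how these two metrics can be derived from a self-run benchmark, the sketch below computes them from recorded timestamps. The `RequestTrace` structure and function names are hypothetical; a real harness would fill the timestamps from a streaming client.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RequestTrace:
    sent_at: float            # seconds, when the request was sent
    token_times: List[float]  # seconds, arrival time of each generated token


def time_to_first_token(trace: RequestTrace) -> float:
    """Latency until the first generated token arrives."""
    return trace.token_times[0] - trace.sent_at


def decode_tokens_per_second(trace: RequestTrace) -> float:
    """Per-request generation rate, measured after the first token."""
    n = len(trace.token_times)
    if n < 2:
        return 0.0
    span = trace.token_times[-1] - trace.token_times[0]
    return (n - 1) / span if span > 0 else 0.0


def aggregate_throughput(traces: List[RequestTrace]) -> float:
    """Tokens generated across all requests per second of wall-clock time."""
    start = min(t.sent_at for t in traces)
    end = max(t.token_times[-1] for t in traces)
    total_tokens = sum(len(t.token_times) for t in traces)
    return total_tokens / (end - start)
```

Keeping the per-request and aggregate numbers separate matters: batching can raise aggregate throughput while making each individual request slower.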

Engineers need to examine data that tests these frameworks against the latest optimization techniques. For instance, how does vLLM's PagedAttention perform when running a quantized 70B parameter model compared to TGI running the same model with speculative decoding?

Actionable Insight for Engineers: Performance is not static. We must treat serving benchmarks as living documents. Regularly cross-referencing published benchmark data, such as the Hugging Face Inference Endpoints benchmarks, against self-tests on new hardware is crucial. Doing so ensures that infrastructure investment aligns with actual performance gains.

2. The Bedrock of Speed: Hardware Acceleration and the Roadmap

No serving framework, no matter how brilliant its algorithm, can overcome fundamental hardware limitations. The efficiency gains seen in vLLM and TGI are directly linked to their ability to exploit specific GPU features. For example, techniques like FlashAttention, which reduce the memory read/write overhead in the attention mechanism, are hardware-aware.
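The numerical idea that lets attention be computed block by block, without materializing the full score vector, can be sketched in plain Python. This is only the "online softmax" accumulation for a single query, written for clarity; it is not the FlashAttention GPU kernel, and the function names are assumptions.

```python
import math
from typing import List, Sequence


def _dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def naive_attention(q, keys, values) -> List[float]:
    """Reference: compute every score, then one softmax over all of them."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [_dot(q, k) * scale for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total for d in range(dim)]


def blockwise_attention(q, keys, values, block_size: int = 2) -> List[float]:
    """Same result, but keys/values are visited one block at a time."""
    scale = 1.0 / math.sqrt(len(q))
    running_max = float("-inf")   # largest score seen so far
    running_sum = 0.0             # softmax denominator, relative to running_max
    acc = [0.0] * len(values[0])  # unnormalized weighted sum of values
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [_dot(q, k) * scale for k in k_blk]
        new_max = max(running_max, max(scores))
        correction = math.exp(running_max - new_max)  # rescales earlier partial results
        weights = [math.exp(s - new_max) for s in scores]
        running_sum = running_sum * correction + sum(weights)
        acc = [a * correction + sum(w * v[d] for w, v in zip(weights, v_blk))
               for d, a in enumerate(acc)]
        running_max = new_max
    return [a / running_sum for a in acc]
```

Because only one block of scores exists at a time, a hardware-aware kernel can keep that block in fast on-chip memory, which is where the reduction in memory traffic comes from.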

This realization forces CTOs and Strategists to look beyond today’s installed base of A100s and H100s. We are entering an era where AI infrastructure is being customized at the chip level specifically for inference. Specialized ASICs (Application-Specific Integrated Circuits) from cloud providers and startups are entering the market, aiming to deliver lower cost-per-inference.

Future Implication: Both Tight Coupling and Pure Agnosticism Are Risky. If a serving framework is too tightly coupled to legacy hardware instructions, it may become obsolete quickly when cheaper, specialized inference chips arrive. Conversely, a framework that is too generic (like basic TensorFlow Serving) might fail to leverage the cutting-edge tensor cores on the newest GPUs. The optimal framework must strike a balance, adopting new hardware features quickly while maintaining backward compatibility. NVIDIA's continuing updates to its inference optimization documentation, such as the Transformer Engine documentation, signal where optimization efforts are focused.

3. The Agentic Future: Why Serving Speed Becomes a Feature

The most significant shift in AI application development is the move from simple question-answering to complex, multi-step agents. These agents use tools, check databases, call external APIs, and—critically—utilize function calling to bridge the gap between the language model’s intelligence and the real world.

Imagine an AI agent tasked with booking a flight. It must perform several steps: analyze the request, determine the necessary API calls, generate the JSON payload, wait for the external tool’s response, and then synthesize a final answer for the user. This entire loop must feel instantaneous.
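The loop described above can be sketched as follows. Everything here is a stand-in: `fake_llm` is a stub that imitates a model emitting JSON, `search_flights` is a hypothetical tool, and the message format is an assumption rather than any particular framework's API.

```python
import json


def search_flights(origin: str, destination: str) -> dict:
    """Hypothetical tool: stands in for an external flight API."""
    return {"flights": [{"id": "XY123", "from": origin, "to": destination}]}


TOOLS = {"search_flights": search_flights}


def fake_llm(messages: list) -> str:
    """Stub model: requests a tool call first, then answers once a tool result exists."""
    if any(m["role"] == "tool" for m in messages):
        result = json.loads(messages[-1]["content"])
        return json.dumps({"answer": f"Found flight {result['flights'][0]['id']}."})
    return json.dumps({
        "tool": "search_flights",
        "arguments": {"origin": "SFO", "destination": "JFK"},
    })


def run_agent(user_request: str, llm=fake_llm, max_steps: int = 4):
    """Alternate between model calls and tool calls until the model answers."""
    messages = [{"role": "user", "content": user_request}]
    llm_calls = 0
    for _ in range(max_steps):
        reply = json.loads(llm(messages))  # one inference round trip
        llm_calls += 1
        if "answer" in reply:
            return reply["answer"], llm_calls
        tool = TOOLS[reply["tool"]]
        result = tool(**reply["arguments"])  # external call the agent must wait for
        messages.append({"role": "tool", "content": json.dumps(result)})
    raise RuntimeError("agent did not finish within max_steps")
```

Even this trivial task needs two model calls, so whatever latency the serving layer adds is paid at least twice before the user sees an answer.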

If the LLM inference step—the core generation—takes 5 seconds, the entire workflow stalls, and the user experience collapses. A slow response kills the illusion of an intelligent, autonomous assistant.
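A back-of-the-envelope model shows how per-call latency compounds across an agent loop. The formula and every number in the usage note are illustrative assumptions, not measurements of any framework.

```python
def agent_latency_seconds(
    llm_calls: int,
    time_to_first_token: float,
    output_tokens_per_call: int,
    tokens_per_second: float,
    tool_latency_total: float = 0.0,
) -> float:
    """Estimate end-to-end latency of a sequential agent workflow.

    Assumes every model call must finish generating before the next step starts.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    per_call = time_to_first_token + output_tokens_per_call / tokens_per_second
    return llm_calls * per_call + tool_latency_total
```

For a hypothetical three-call workflow producing 150 tokens per call with 0.3 s to first token and 0.8 s of total tool time, generating at 30 tokens per second gives roughly 16.7 s end to end, while 150 tokens per second gives roughly 4.7 s.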

The Requirement for Low-Latency API Endpoints: This is why the ability of frameworks like vLLM to serve model requests with minimal perceived delay becomes a mandatory product feature, not just an infrastructure concern. Frameworks that deploy easily as a clean, scalable REST API endpoint, and are therefore accessible from tools like LangChain, are preferred for agentic development, a pattern common in agent deployment guides.

For Product Managers: The quality of your AI agent is now directly correlated with the stability and speed of your chosen inference server. If you are building mission-critical workflows, stability under load, provided by robust MLOps tooling, is paramount.

4. The Strategic Dilemma: Managed Services Versus Self-Hosting Dominance

The sophistication of these frameworks forces organizations into a strategic deployment choice, often boiled down to cost versus control. Should we use a managed cloud service that handles the complexity of vLLM or Triton underneath, or should we take ownership of the infrastructure stack?

Managed services (like specialized offerings from AWS, Azure, or GCP) abstract away the painful MLOps configuration. They offer rapid scaling and reliable uptime, but often cost more per compute hour (higher OpEx).

Self-hosting means deploying TGI or vLLM directly onto bare metal or self-managed cloud VMs. This grants complete control over batching parameters, memory allocation, and proprietary optimizations, potentially yielding significant cost savings over time, especially at massive scale. Cloud providers themselves offer guides comparing their integrated solutions against self-managed deployments (e.g., comparing Amazon SageMaker vs. self-managed ECS), highlighting this trade-off.

Actionable Insight for Finance/DevOps: For startups prioritizing speed-to-market or small teams, managed services win. For large enterprises running proprietary models 24/7, the engineering investment required to master Triton or vLLM often pays for itself through reduced hourly compute costs. Cost analyses for 2024 heavily favor high utilization: the larger the share of each GPU-hour spent generating tokens, the lower the cost per token, so the framework that handles peak load most efficiently wins.
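The cost-versus-control trade-off can be roughed out with simple arithmetic. The sketch below is a simplified model under stated assumptions: prices, throughput, and the fixed engineering cost are hypothetical inputs, and the function names are invented for illustration.

```python
import math
from typing import Optional


def cost_per_million_tokens(
    hourly_cost: float, tokens_per_second: float, utilization: float
) -> float:
    """Cost of generating one million tokens on a GPU billed by the hour.

    utilization is the fraction of each hour spent actually serving traffic.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost / tokens_per_hour * 1_000_000


def break_even_gpus(
    managed_hourly: float,
    self_hosted_hourly: float,
    engineering_monthly: float,
    hours_per_month: float = 730,
) -> Optional[int]:
    """Always-on GPU count at which hourly savings cover the fixed engineering cost.

    Returns None when self-hosting is not cheaper per hour.
    """
    saving_per_gpu = (managed_hourly - self_hosted_hourly) * hours_per_month
    if saving_per_gpu <= 0:
        return None
    return math.ceil(engineering_monthly / saving_per_gpu)
```

In this model, halving utilization doubles the cost per token, and a hypothetical 2.00 per hour price gap against 14,600 per month of engineering cost breaks even at 10 always-on GPUs.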

What This Means for the Future of AI Workflows

The evolution of LLM serving is not a niche MLOps topic; it is the *pace layer* for all future AI applications.

- Inference Becomes Programmable: Serving frameworks are evolving beyond simple load balancers into sophisticated orchestrators that manage model versions, cascading requests, and dynamic resource allocation. We are moving toward "Inference Meshes" where microservices handle different model types (e.g., one server for vision, one for fast text generation).

- Latency as a Competitive Edge: As LLMs become commoditized, the speed at which a company can integrate and execute complex logic via agents will be the key differentiator. Low latency—achieved through aggressive optimization in frameworks—will translate directly into superior customer experience and faster internal automation.

- Hardware Democratization: As specialized chips mature, the serving frameworks must adapt quickly. The barrier to entry for utilizing cutting-edge hardware will be lowered by software layers that automatically map the model's computational graph onto the capabilities of the underlying silicon.

In summary, the battleground for the next era of applied AI is on the GPU. Choosing the right serving framework is not just an operational detail; it is the strategic decision that determines whether your AI application flies or crawls. Mastering the balance between performance engineering (vLLM/Triton) and integration simplicity (API endpoints/Function Calling) is the defining technical challenge for every enterprise aiming to move beyond chatbots into truly autonomous, agentic systems.