The Hardware Wars: Why LPUs and Specialized Chips Are Overthrowing the GPU Monolith for LLM Inference

For years, the story of Artificial Intelligence acceleration has been dominated by a single hero: the Graphics Processing Unit (GPU). These versatile chips, originally designed for rendering complex video game graphics, became the backbone of deep learning training and, subsequently, large language model (LLM) deployment. But as LLMs move from research labs into mission-critical, high-volume production environments, the GPU's reign is being challenged.

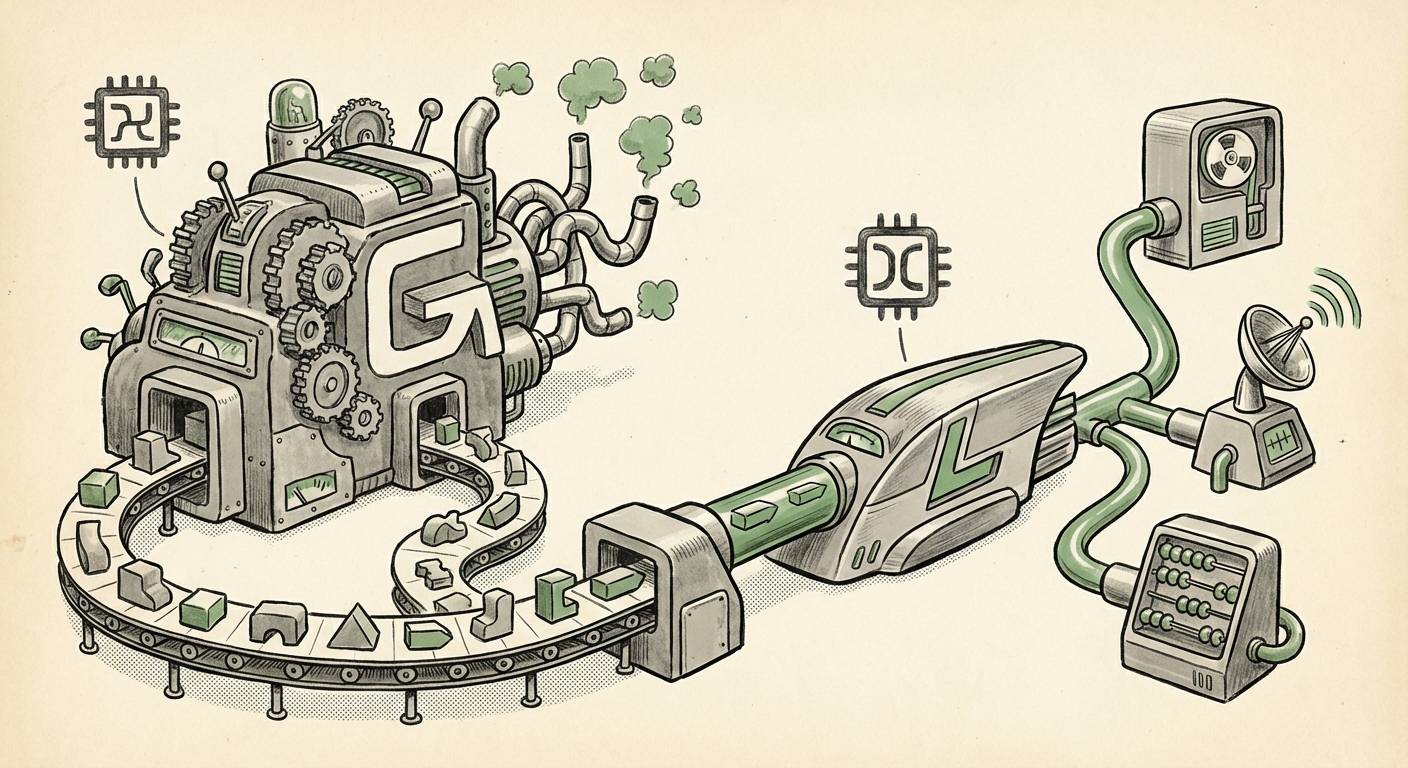

The latest disruption comes in the form of highly specialized silicon, exemplified by the concept of the Language Processing Unit (LPU). This shift signifies a critical pivot in the AI technology landscape: moving from general-purpose computing to surgical specialization for inference. This article dissects what LPUs are, why they matter, and how their integration, coupled with advanced workflow mechanisms like function calling, marks the true operationalization of generative AI.

The End of the Generalist: Why Inference Demands Specialization

To understand the excitement around LPUs, we must first understand the difference between training and inference. Training an LLM (teaching it using massive datasets) requires incredible parallel processing, which is where GPUs currently excel. However, most of the cost and time spent using AI in the real world is during inference—the moment the model generates a response, token by token.

Running inference on a general-purpose GPU is often inefficient because the chip architecture is built to do many things well, not one thing perfectly. LLM inference, particularly sequential text generation, benefits from architectures that can handle long, predictable data flows with maximum throughput and minimal wasted energy.

The LPU Proposition: Speed and Predictability

An LPU, as conceptualized, is an Application-Specific Integrated Circuit (ASIC) designed specifically for the mathematical operations fundamental to Transformer models—the core architecture of modern LLMs. If a GPU is a powerful, versatile Swiss Army knife, an LPU is a high-precision scalpel built only for language tasks.

This specialization yields two crucial benefits, consistent with the industry's broader move toward competitive inference accelerators:

- Ultra-Low Latency: LPUs aim for deterministic performance, meaning the time it takes to generate the next token (token latency) is both very low and highly consistent from one token to the next. For real-time applications like customer service bots or instant code completion, delays of even a few hundred milliseconds are noticeable to users.

- Superior Efficiency: By eliminating unnecessary components found in general-purpose chips, LPUs can often deliver higher performance per watt, drastically reducing power consumption and cooling costs in large server farms.
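To make "low and highly consistent" latency measurable, the sketch below summarizes a list of per-token latencies. It is a minimal illustration, not a vendor benchmark: the function name `summarize_token_latency` and the choice of nearest-rank percentiles are assumptions made for this example, and the samples would have to come from your own serving logs.

```python
import statistics


def summarize_token_latency(latencies_ms):
    """Summarize per-token latencies (in milliseconds) from one generation run.

    Hypothetical helper for illustration; the input is assumed to be a list
    of measured gaps between consecutive tokens.
    """
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    n = len(ordered)

    def percentile(p):
        # Nearest-rank percentile, computed with integer arithmetic:
        # rank = ceil(p * n / 100), clamped to at least 1.
        rank = max(1, (p * n + 99) // 100)
        return ordered[rank - 1]

    return {
        "mean_ms": statistics.fmean(ordered),
        "p50_ms": percentile(50),
        "p99_ms": percentile(99),
        "max_ms": ordered[-1],
        # Population standard deviation as a simple jitter measure:
        # 0.0 means every token took exactly the same time.
        "jitter_ms": statistics.pstdev(ordered),
    }
```

A deterministic accelerator would show `p50_ms`, `p99_ms`, and `max_ms` close together and a small `jitter_ms`; a wide gap between the median and the tail points to inconsistent latency.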

Leading industry analyses often compare these architectures, noting that while NVIDIA maintains its training lead, companies focused purely on inference speed, such as Groq with its Language Processing Unit (LPU), demonstrate that software-defined specialization must be matched by hardware specialization to hit true production scaling goals [1].

The Economics of AI: Why Hardware Choice Equals Business Survival

The shift to specialized hardware is not merely a technical curiosity; it is an urgent economic necessity. As companies move from running small pilot projects to serving millions of users hourly, the operational expenditure (OpEx) related to cloud compute skyrockets. This is the central concern in the economics of LLM inference serving costs [2].

The Token-Cost Calculus

For any business embedding AI into its core services, the cost to generate a single token (a word fragment) becomes a critical business metric. If a competitor running on specialized silicon can deliver the same response quality at one-tenth the hardware cost, the competitive gap widens rapidly.

Specialized inference hardware addresses this by optimizing for:

- Throughput: Serving more concurrent user requests on the same server rack.

- Power Efficiency: Lowering the electricity bill associated with running massive data centers full of GPUs.

- Capital Expenditure (CapEx) / Total Cost of Ownership (TCO): While designing custom silicon is expensive upfront, the per-unit cost savings over the chip’s lifespan for high-volume inference tasks can lead to significant TCO advantages compared to perpetually renting or buying high-end GPUs.
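The token-cost calculus can be sketched as simple arithmetic. The two functions below are a rough model under stated assumptions: hardware cost is amortized evenly over its lifetime, power is the only running cost, and throughput is constant. The function names and every parameter are hypothetical and should be replaced with your own measured values.

```python
def hourly_cost_usd(hardware_price_usd, lifetime_years, power_kw, usd_per_kwh):
    """Amortized hardware cost plus electricity, per hour of operation.

    Assumes straight-line amortization and ignores cooling, networking,
    and staffing, which a real TCO model would include.
    """
    if lifetime_years <= 0:
        raise ValueError("lifetime_years must be positive")
    lifetime_hours = lifetime_years * 365 * 24
    return hardware_price_usd / lifetime_hours + power_kw * usd_per_kwh


def cost_per_million_tokens(hourly_cost, tokens_per_second, utilization=1.0):
    """Cost to generate one million tokens at a given sustained throughput.

    `utilization` is the fraction of each hour spent serving real traffic.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost / tokens_per_hour * 1_000_000
```

Running both functions for each candidate platform with the same traffic assumptions gives a like-for-like figure. Note that utilization matters as much as raw speed: hardware that sits idle half the time costs twice as much per token.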

This financial pressure forces infrastructure managers and CTOs to move beyond the default choice (NVIDIA) and investigate bespoke solutions that align precisely with their specific workload profile—the profile of LLM inference.

From Smart Calculator to Active Agent: The Power of Function Calling

Having ultra-fast, cheap hardware is only half the battle. The second, equally transformative trend discussed alongside LPUs is the seamless integration of these models into real-world systems using function calling (also known as tool use or agentic workflows).

An LLM, fundamentally, is a text predictor. It cannot check the weather, update a database, or process a purchase order on its own. Function calling bridges this gap. As observed in emerging deployment patterns, the mechanism is a loop between the model and the host application:

- The LLM receives a user request (e.g., "Book me a flight to London next Tuesday.")

- The LLM determines which external tool is needed (e.g., a `flight_booking_api`).

- The LLM outputs a structured, machine-readable instruction (a JSON object) specifying the function and its arguments.

- The host application executes that function against the actual system (e.g., the airline booking server).

- The result of the function execution is fed back to the LLM, which then generates a natural language summary for the user.
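The steps above can be sketched as a short loop. This is a minimal illustration, not any provider's API: `fake_model` is a stand-in that returns canned replies so the example runs offline, and the `{"tool": ..., "arguments": ...}` JSON shape, the message roles, and the body of `flight_booking_api` are all assumptions made for this example.

```python
import json


def flight_booking_api(destination: str, date: str) -> dict:
    """Hypothetical tool; a real one would call the airline booking server."""
    return {"status": "confirmed", "destination": destination, "date": date}


# Registry of tools the host application is willing to execute.
TOOLS = {"flight_booking_api": flight_booking_api}


def fake_model(messages):
    """Stand-in for an LLM call, returning canned replies for illustration."""
    last = messages[-1]
    if last["role"] == "user":
        # Steps 2 and 3: choose a tool and emit a structured instruction.
        return json.dumps({
            "tool": "flight_booking_api",
            "arguments": {"destination": "London", "date": "next Tuesday"},
        })
    # Step 5: turn the tool result into a natural language summary.
    result = json.loads(last["content"])
    return (f"Your flight to {result['destination']} on "
            f"{result['date']} is {result['status']}.")


def run_turn(user_request, model=fake_model, tools=TOOLS):
    # Step 1: the model receives the user request.
    messages = [{"role": "user", "content": user_request}]
    reply = model(messages)
    try:
        call = json.loads(reply)
    except json.JSONDecodeError:
        return reply  # plain-text answer, no tool requested
    if not isinstance(call, dict):
        return reply
    if call.get("tool") not in tools:
        raise ValueError(f"model requested an unknown tool: {call.get('tool')!r}")
    # Step 4: the host application, not the model, executes the function.
    result = tools[call["tool"]](**call["arguments"])
    messages.append({"role": "assistant", "content": reply})
    messages.append({"role": "tool", "content": json.dumps(result)})
    return model(messages)
```

Calling `run_turn("Book me a flight to London next Tuesday.")` returns `"Your flight to London on next Tuesday is confirmed."`. The key design point is that the model only proposes the call; the host decides whether and how to run it.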

API Endpoints: The New Universal Language

The concept of deploying these enhanced models through public API endpoints, such as public Model Context Protocol (MCP) servers, is the key to operationalizing LPU speed. If an LPU cluster can generate tokens incredibly fast, exposing that speed via a reliable, scalable API means businesses can integrate near-instantaneous AI logic into legacy applications.

This capability moves AI beyond simple Q&A. It creates AI Agents capable of complex reasoning and multi-step execution. Official documentation and tutorials frequently highlight the robustness required for production function calling, ensuring the structured output is reliable every time, which is foundational for trust in automated processes [3].
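One way to approach reliable structured output is to validate every model-emitted call before anything is executed. The sketch below uses only the standard library; the `TOOL_SCHEMAS` table, the `{"tool": ..., "arguments": ...}` JSON shape, and the function name `parse_tool_call` are assumptions for illustration, and a production system might use a full JSON Schema validator instead.

```python
import json

# Hypothetical schema table: tool name -> required argument names and types.
TOOL_SCHEMAS = {
    "flight_booking_api": {"destination": str, "date": str},
}


def parse_tool_call(raw: str, schemas=TOOL_SCHEMAS):
    """Parse and validate a model-emitted tool call.

    Returns (tool_name, arguments) or raises ValueError with a reason that
    can be logged or sent back to the model for a retry.
    """
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    name = call.get("tool")
    if not isinstance(name, str) or name not in schemas:
        raise ValueError(f"unknown tool: {name!r}")
    args = call.get("arguments")
    if not isinstance(args, dict):
        raise ValueError("'arguments' must be a JSON object")
    expected = schemas[name]
    # Reject both missing and unexpected arguments.
    if set(args) != set(expected):
        raise ValueError(
            f"expected arguments {sorted(expected)}, got {sorted(args)}"
        )
    for key, expected_type in expected.items():
        if not isinstance(args[key], expected_type):
            raise ValueError(f"argument {key!r} must be {expected_type.__name__}")
    return name, args
```

Rejecting unexpected arguments as well as missing ones is a deliberately strict choice here: it keeps a malformed or manipulated call from reaching business systems.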

What This Means for the Future of AI and Business Strategy

The convergence of specialized hardware (LPUs) and advanced software integration (Function Calling) is setting the stage for the next decade of AI development. This isn't just about faster chatbots; it’s about systemic automation.

For the AI Engineer and Architect (Technical Audience)

The focus shifts from optimizing Python frameworks on generalized hardware to mastering deployment pipelines for specific acceleration hardware. Engineers need to understand the architectural trade-offs: when is the higher, more variable per-token latency of a multi-GPU setup acceptable in exchange for its flexibility, and when does a workload justify the low, consistent latency of a dedicated LPU array? Furthermore, MLOps teams must become experts in managing agentic systems, ensuring the security, latency, and reliability of the external tools called by the LLM.

For the Business Leader and CTO (Strategic Audience)

The takeaway is clear: AI compute is becoming modular and competitive. Relying on a single vendor or architecture creates technological and financial risk. Strategic adoption requires a nuanced understanding of workload type:

- Heavy Training/Research: Continue leveraging high-end GPUs.

- High-Volume Production Inference: Actively investigate specialized ASICs (LPUs, TPUs, etc.) to manage OpEx.

- Agentic Workflows: Invest in robust orchestration layers (like LangChain or direct API management) to enable LLMs to securely interact with proprietary business logic.

The ability to deploy complex, multi-step AI agents that can access real-time enterprise data—all running on the most cost-effective silicon available—will be the primary differentiator for technology-forward organizations.

Actionable Insights: Preparing for the Specialized Inference Era

How can your organization position itself to capitalize on these converging trends?

- Benchmark Inference Costs Now: Perform a rigorous audit of your current inference workloads. If you are serving millions of tokens daily, the economic argument for non-GPU silicon is already strong. Look for hardware providers specifically advertising low-latency LLM serving.

- Develop Internal Tool Libraries: Begin standardizing internal services (databases, inventory systems, CRM functions) as clean, well-documented API endpoints suitable for LLM function calling. This translates your business capabilities into the language of AI agents.

- Decouple Logic from Hardware: Design your AI applications to be hardware-agnostic where possible. Use standardized API wrappers for model invocation. This flexibility allows you to switch seamlessly from a GPU cluster to a dedicated LPU service as costs and performance evolve, protecting your sunk investment in software logic.
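The hardware-agnostic design in the last point can be sketched with a small interface. Everything here is hypothetical: the `InferenceBackend` protocol, the two backend classes, and the `"gpu"`/`"lpu"` keys are illustrative names, and the `generate` methods return placeholder strings where a real implementation would call the corresponding serving endpoint.

```python
from typing import Protocol


class InferenceBackend(Protocol):
    """The only surface application code is allowed to depend on."""

    def generate(self, prompt: str, max_tokens: int) -> str: ...


class GpuClusterBackend:
    def generate(self, prompt: str, max_tokens: int) -> str:
        # Placeholder: a real backend would call a GPU-hosted model server.
        return f"[gpu] {prompt}"


class LpuServiceBackend:
    def generate(self, prompt: str, max_tokens: int) -> str:
        # Placeholder: a real backend would call a hosted LPU inference API.
        return f"[lpu] {prompt}"


# Selecting a backend becomes a configuration value, not a code change.
BACKENDS = {"gpu": GpuClusterBackend, "lpu": LpuServiceBackend}


def make_backend(name: str) -> InferenceBackend:
    try:
        return BACKENDS[name]()
    except KeyError:
        raise ValueError(f"unknown backend: {name!r}") from None


def answer(backend: InferenceBackend, question: str) -> str:
    # Application logic never mentions the hardware it runs on.
    return backend.generate(question, max_tokens=64)
```

With this shape, moving from `make_backend("gpu")` to `make_backend("lpu")` leaves `answer` and everything built on it untouched, which is the protection of software investment the point above describes.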

The AI revolution is no longer about building bigger models; it is about deploying them smarter, faster, and cheaper. The Language Processing Unit and its specialized cousins are the engines of this operational revolution, transforming theoretical intelligence into tangible, cost-effective business action.