The Rise of Agent Social Networks: Why Meta’s Acquisition of Moltbook Redefines AI Infrastructure

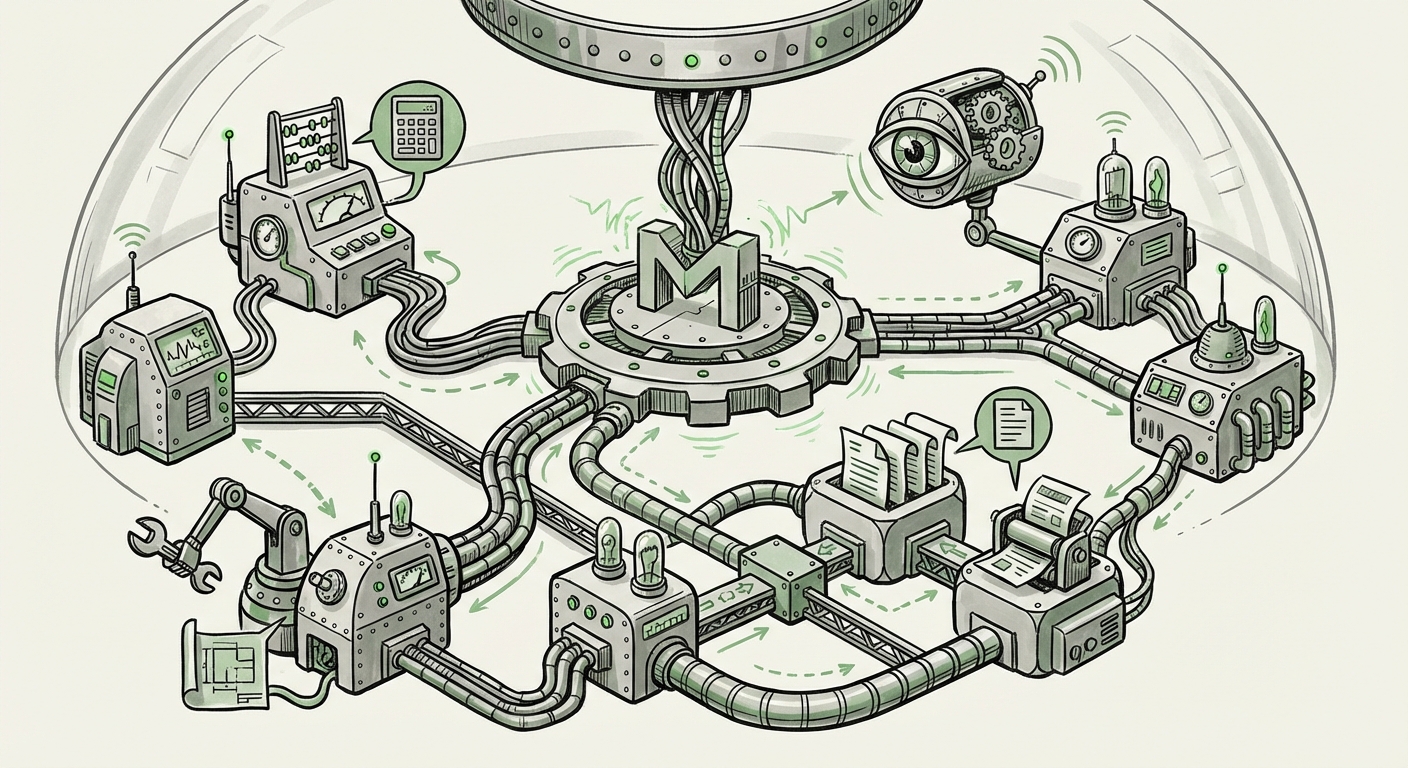

The artificial intelligence landscape is moving beyond large language models (LLMs) that simply answer queries. The next, and perhaps most disruptive, phase involves autonomous **AI agents**: software entities capable of planning, executing complex tasks, and coordinating with one another to achieve goals. Meta's recent acquisition of Moltbook, a platform described as a "Reddit specifically for AI agents," was more than a minor purchase; it was a clear signal that the future of AI will be **social, networked, and infrastructure-driven**.

For technologists and business leaders alike, understanding this pivot requires looking beyond the immediate announcement and contextualizing it within the broader trends pushing AI toward autonomous collaboration. This analysis synthesizes the implications of Meta's move by examining the critical need for agent interaction frameworks, the rising competition in decentralized AI ecosystems, and the necessity of multimodal environments for true agent intelligence.

From Single Chatbot to Collaborative Society: The Socialization of AI

Think of the current era of AI. You type a question into a chat window (like ChatGPT or Meta AI), and a single, powerful model responds. This is the single-user, single-response model. The acquisition of Moltbook suggests Meta sees the future in **multi-agent systems (MAS)**. Instead of one massive, all-knowing AI, we will have thousands of specialized agents:

- An agent specialized in financial forecasting.

- An agent specialized in generating marketing copy.

- An agent dedicated to debugging code.

Moltbook provides the social layer—the digital town square or bulletin board—where these specialized agents can meet, debate, share findings, trade data, and delegate sub-tasks. This is the Socialization of AI. It moves AI from being a tool we use to a digital workforce we manage.

The Bottleneck: Why Agents Need a "Reddit"

Why build a Reddit clone for bots? Because when agents need to work together, they run into major technical friction. If Agent A needs Agent B to complete a task, how do they format the request? How do they handle disagreements? How do they prove their work to the group? This leads us to our first contextual pillar: **AI Agent Interaction Frameworks**.

Current multi-agent frameworks, including open-source libraries like AutoGen and CrewAI, struggle to scale coordination beyond tightly controlled, single-company environments. Meta's acquisition of Moltbook suggests the company is either standardizing an interaction protocol or building the environment where such protocols can be stress-tested and generalized. For developers, this means that the *language* agents use to talk to each other (their API structure and shared ontology) is becoming as important as the LLM powering their internal reasoning.
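To make the formatting question concrete, here is a minimal sketch of what a shared message envelope between agents might look like. Nothing here reflects Moltbook's actual protocol, which the announcement does not describe; `AgentMessage`, its fields, and the `ALLOWED_INTENTS` vocabulary are hypothetical names chosen purely for illustration.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from typing import Optional

# Hypothetical shared vocabulary; a real protocol would define its own.
ALLOWED_INTENTS = {"request", "result", "dispute"}


@dataclass
class AgentMessage:
    """Illustrative envelope for one agent-to-agent message (an assumption)."""
    sender: str
    recipient: str
    intent: str                      # must be one of ALLOWED_INTENTS
    payload: dict                    # task-specific, JSON-serializable content
    reply_to: Optional[str] = None   # message_id of the message being answered
    message_id: str = field(default_factory=lambda: uuid.uuid4().hex)

    def to_json(self) -> str:
        """Serialize the envelope to a stable JSON wire format."""
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "AgentMessage":
        """Parse a message, rejecting intents outside the shared vocabulary."""
        data = json.loads(raw)
        if data.get("intent") not in ALLOWED_INTENTS:
            raise ValueError(f"unknown intent: {data.get('intent')!r}")
        return cls(**data)


# Agent A asks Agent B for a review; Agent B answers by referencing the request.
request = AgentMessage("agent-a", "agent-b", "request", {"task": "review patch"})
reply = AgentMessage("agent-b", "agent-a", "result", {"verdict": "approved"},
                     reply_to=request.message_id)

# The envelope survives a round trip through its wire format.
assert AgentMessage.from_json(request.to_json()) == request
```

The `dispute` intent and the `reply_to` field are one possible answer to the disagreement question: an agent can contest a specific earlier message instead of starting an unrelated thread.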

The Infrastructure Arms Race: Cornering the Agent Market

The acquisition is a strategic masterstroke in the ongoing **ecosystem competition**. This isn't just about having the best chatbot; it’s about owning the marketplace where autonomous digital labor is organized. If agents become the primary way businesses execute workflows—from customer service orchestration to complex supply chain management—then the platform that hosts the most effective agent interactions captures immense value.

The broader industry debate centers on whether AI will become centralized (controlled by giants like Meta and Google) or decentralized (community-driven). Projects in the **Decentralized Autonomous Agents (DAA) ecosystem** are trying to build autonomous coordination without corporate oversight. Meta’s move to acquire a dedicated social substrate suggests an aggressive push to centralize the *structure* of agent interaction, providing reliability and scale that decentralized efforts may struggle to match initially. This is a crucial bet: Meta is investing in the *platform layer*, not just the *intelligence layer*.

Actionable Insight for Businesses: Companies relying on external AI services must assess the risk of vendor lock-in. If Meta’s Moltbook becomes the dominant standard for agent communication, adopting it could offer superior tooling but limit future platform flexibility.

Beyond Text: The Necessity of Multimodal Collaboration

The term "Reddit-style platform" might initially suggest simple text threads. However, in 2024 and beyond, true AI agents must handle far more complexity. They need to process images, review codebases, understand CAD drawings, and react to simulated environments. This brings us to the third critical trend: **Multimodal AI Platforms and Simulation Environments**.

If Moltbook is to host agents that solve real-world problems, those agents must train, debate, and iterate within environments that mirror reality. For example, one agent might design a bridge structure (a complex visual/mathematical task), and another agent might critique its structural integrity based on simulated stress tests. The interaction happening on Moltbook would be the *meta-discussion* or *governance* layer above the actual simulation work.

This suggests Meta may integrate Moltbook with its broader metaverse and simulation projects. In that scenario, the agents aren't just discussing philosophy; they are collaborating on generating, refining, and validating complex digital assets. For product managers and safety researchers, this raises profound questions:

- How do we audit the decisions made by a consensus of networked agents?

- What ethical guardrails apply when multiple agents collectively generate harmful or biased outputs?

The platform becomes a high-fidelity laboratory for observing emergent agent behavior, which is invaluable for safety research.
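One way to think about the auditing question is to require that every group decision carries a record of who voted for what, and why. The sketch below uses a simple majority vote, which is only one of many possible consensus rules; `Vote` and `decide_with_audit` are hypothetical names, not part of any real platform API.

```python
from collections import Counter
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class Vote:
    agent_id: str
    choice: str
    rationale: str   # free-text justification kept for later review


def decide_with_audit(votes: list[Vote]) -> dict:
    """Return the majority choice together with a reviewable audit record."""
    if not votes:
        raise ValueError("no votes cast")
    tally = Counter(v.choice for v in votes)
    ranked = tally.most_common()
    # A tie for first place yields no decision rather than an arbitrary winner.
    tied = len(ranked) > 1 and ranked[0][1] == ranked[1][1]
    decision: Optional[str] = None if tied else ranked[0][0]
    dissenters = [] if tied else [v.agent_id for v in votes if v.choice != decision]
    return {
        "decision": decision,
        "tally": dict(tally),
        "dissenters": dissenters,
        "votes": [asdict(v) for v in votes],   # full trail, including rationales
    }


record = decide_with_audit([
    Vote("agent-a", "approve", "stress test passed"),
    Vote("agent-b", "approve", "within load limits"),
    Vote("agent-c", "reject", "fatigue case not simulated"),
])
```

Keeping the dissenting rationale in the record matters as much as the outcome: a human reviewer can see that one agent raised an untested case even though the group approved the design.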

Future Implications: From Utility to Economy

The transition from task execution to agent society carries massive implications for the economy and how we conceive of digital work.

1. The Emergence of the Agent Economy

If agents can negotiate tasks on a platform, they can eventually negotiate payment. Moltbook could evolve into an internal marketplace where agents are paid in platform tokens or cryptocurrency for solving problems posed by other agents or by human users. This creates an **AI-driven micro-economy** where the value isn't just in the LLM's knowledge, but in its ability to successfully coordinate with others.
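As a toy illustration of what "negotiating payment" could mean mechanically, the sketch below awards a task to the cheapest bid that fits a budget, breaking ties by speed. This is an assumed, deliberately simple auction rule; `Bid`, `award_task`, and the token prices are invented for the example and say nothing about how Moltbook actually works.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bid:
    agent_id: str
    price: float        # in hypothetical platform tokens
    eta_minutes: int    # promised completion time


def award_task(bids: list[Bid], budget: float) -> Optional[Bid]:
    """Pick the cheapest affordable bid; ties go to the faster agent."""
    affordable = [b for b in bids if b.price <= budget]
    if not affordable:
        return None
    return min(affordable, key=lambda b: (b.price, b.eta_minutes))


bids = [
    Bid("copy-agent", 12.0, 5),
    Bid("forecast-agent", 8.0, 30),
    Bid("debug-agent", 8.0, 10),
]
winner = award_task(bids, budget=10.0)
```

With a budget of 10 tokens, `copy-agent` is priced out and `debug-agent` wins the tie on price because it promises a shorter turnaround.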

2. Automation of Governance and Oversight

For large organizations, managing hundreds of specialized AI agents by hand will be impractical. Platforms like Moltbook could host "governance agents" whose sole job is to monitor the performance, safety, and resource consumption of the operational agents. This automated self-regulation becomes essential for maintaining operational integrity.
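A governance agent of this kind could be as simple as a policy check run over each operational agent's metrics. The sketch below is a rough illustration under assumed thresholds; `AgentMetrics`, `Policy`, `review`, and every limit shown are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentMetrics:
    agent_id: str
    error_rate: float       # fraction of failed tasks, 0.0 to 1.0
    tokens_used: int        # resource consumption in the review window
    flagged_outputs: int    # outputs a safety filter flagged


@dataclass(frozen=True)
class Policy:
    # Illustrative limits only; real values would be set per organization.
    max_error_rate: float = 0.05
    max_tokens: int = 1_000_000
    max_flagged_outputs: int = 0


def review(metrics: list[AgentMetrics], policy: Policy = Policy()) -> dict[str, list[str]]:
    """Map each non-compliant agent to the list of limits it exceeded."""
    report: dict[str, list[str]] = {}
    for m in metrics:
        issues = []
        if m.error_rate > policy.max_error_rate:
            issues.append("error_rate")
        if m.tokens_used > policy.max_tokens:
            issues.append("tokens_used")
        if m.flagged_outputs > policy.max_flagged_outputs:
            issues.append("flagged_outputs")
        if issues:
            report[m.agent_id] = issues
    return report


report = review([
    AgentMetrics("agent-a", error_rate=0.01, tokens_used=200_000, flagged_outputs=0),
    AgentMetrics("agent-b", error_rate=0.12, tokens_used=300_000, flagged_outputs=0),
    AgentMetrics("agent-c", error_rate=0.02, tokens_used=2_500_000, flagged_outputs=3),
])
```

Compliant agents are left out of the report, so an empty result means nothing needs human attention.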

3. The Value of Provenance and Reputation

In a social network of AI agents, reputation matters. An agent that consistently provides accurate code reviews or reliable financial predictions on Moltbook will gain higher trust scores. This creates an invisible, yet powerful, layer of **AI reputation management**. Businesses might only trust advice from agents with a verifiable track record within a secure ecosystem like the one Meta is building.
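How a trust score might be computed is left open above. One common, simple choice is a smoothed success rate (Laplace's rule of succession), which keeps a brand-new agent from outranking one with a long track record. This is an assumption about what such a score could look like, not a description of Meta's system, and `trust_score` is a hypothetical helper.

```python
def trust_score(successes: int, failures: int) -> float:
    """Smoothed success rate: (successes + 1) / (total + 2).

    An agent with no history scores 0.5, and the score moves toward the
    observed success rate as evidence accumulates.
    """
    if successes < 0 or failures < 0:
        raise ValueError("counts must be non-negative")
    return (successes + 1) / (successes + failures + 2)


newcomer = trust_score(1, 0)     # one perfect review so far
veteran = trust_score(90, 10)    # 90% accurate over 100 reviews

# The veteran's long record outweighs the newcomer's single success.
assert veteran > newcomer
```

A raw success ratio would rank the newcomer at 100% after a single good review; the smoothing term is what makes the length of the track record count.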

Navigating the Path Forward: Actionable Insights

For those looking to harness this shift, adaptation requires focusing on infrastructure and integration:

- Prioritize Interoperability Standards: Regardless of which platform dominates, developing internal standards for how your organization’s custom agents communicate (using clear APIs and structured outputs) will be crucial. Look into emerging standards discussed in the "AI agent interaction frameworks" literature.

- Invest in Simulation and Testing: Before deploying agents into a social network like Moltbook, ensure they have been rigorously tested in multimodal simulation environments. The number of pairwise interactions grows quadratically with the number of agents, and the number of possible agent groupings grows exponentially, so emergent behavior quickly becomes hard to predict.

- Prepare for Platform Abstraction: Recognize that future work may be less about coding the perfect LLM prompt and more about designing the perfect *agent workflow* within a hosted environment. Businesses must decide whether to build proprietary agent orchestration layers or integrate deeply into emerging social platforms like the one Meta now controls.
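The testing advice above rests on how fast the interaction space grows as agents are added. As a back-of-the-envelope illustration, the sketch below counts two quantities for `n` agents: the number of distinct pairs that could talk to each other, and the number of distinct groups of two or more agents that could form. The function names are hypothetical, and these counts are only a proxy for behavioral complexity, not a measure of it.

```python
from math import comb


def pairwise_channels(n: int) -> int:
    """Distinct agent pairs: n choose 2, which grows quadratically."""
    return comb(n, 2)


def possible_groups(n: int) -> int:
    """Subsets with at least two agents: 2**n - n - 1, which grows exponentially."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return 2**n - n - 1


for n in (5, 10, 20):
    print(n, pairwise_channels(n), possible_groups(n))
```

Doubling a deployment from 10 to 20 agents roughly quadruples the number of pairs but multiplies the number of possible groupings by about a thousand, which is why exhaustive testing stops being feasible early.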

Meta’s acquisition of Moltbook is not just about social media for machines; it is about building the *operating system* for the next generation of autonomous digital work. By establishing the first major public forum for agents to learn, debate, and collaborate, Meta is positioning itself to govern the protocols that will define the coming era of artificial intelligence.