The Multi-Model Revolution: Why Microsoft’s Claude Integration Signals the End of the Single-LLM Era

In the rapidly evolving landscape of artificial intelligence, platform dominance often appears straightforward: whoever has the best foundation model wins. For nearly two years, the narrative around Microsoft’s enterprise AI, delivered through Copilot, was intrinsically linked to its partnership with OpenAI. However, recent developments mark a profound strategic pivot: Microsoft is integrating Anthropic’s Claude models into Copilot to handle complex tasks across Outlook, Teams, and Excel.

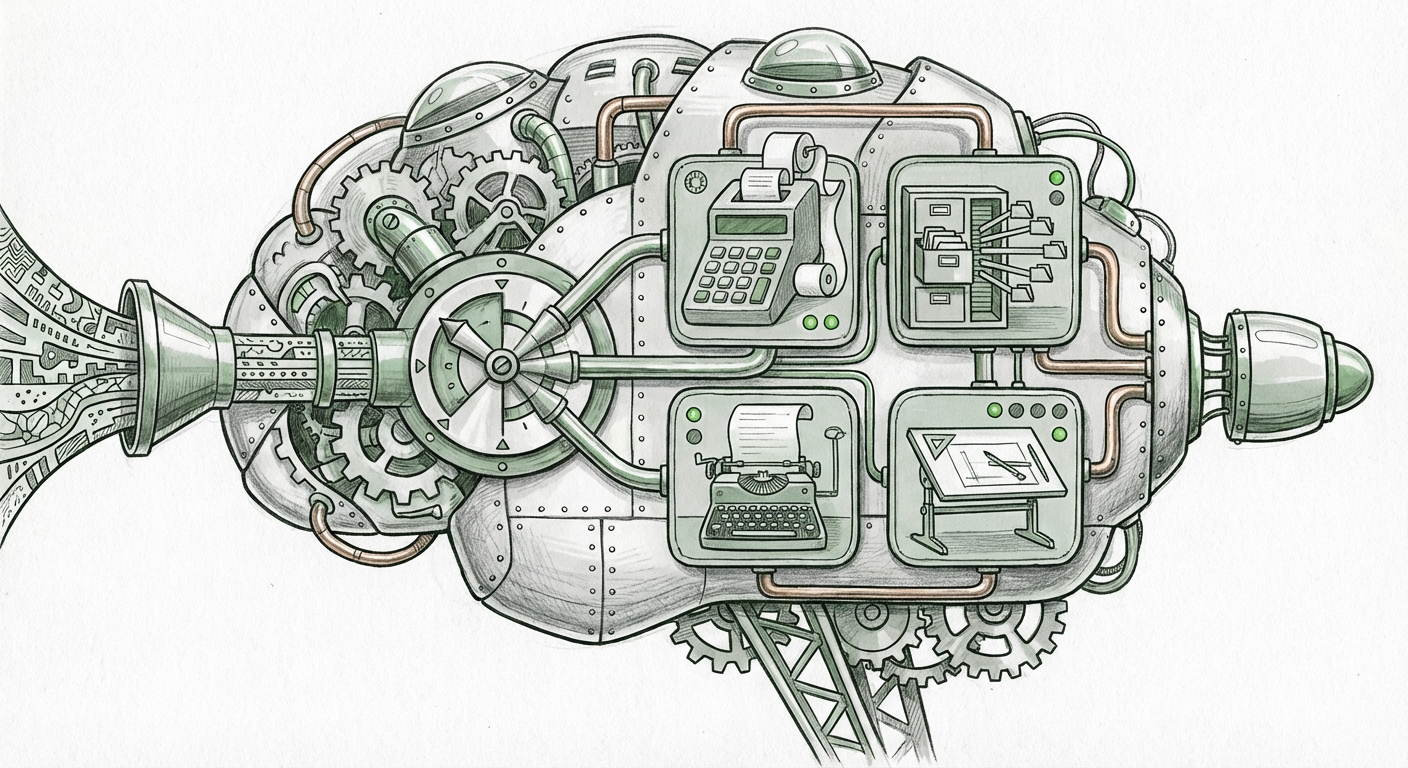

This is far more than a simple addition of a new tool; it signals the arrival of the Multi-Model AI Integration era. It suggests that for sophisticated, real-world business operations, relying on a single "master" Large Language Model (LLM) is becoming obsolete. Instead, successful AI deployment requires an orchestrator capable of dynamically selecting the best specialized tool for the job.

The Strategic Shift: From Single Vendor Lock-in to AI Flexibility

When Microsoft first launched Copilot, it relied heavily on GPT technology. This provided unparalleled capability but also created an inherent dependency on one provider’s roadmap, performance, and ethical guardrails. The decision to bring Claude into the fold—dubbed "Copilot Cowork" in early reporting—is a masterclass in mitigating technological risk and optimizing performance.

Think of it like building a construction crew. You wouldn't hire only plumbers when you also need an electrician and a carpenter. Microsoft is building a specialized AI crew. By tapping Claude, Microsoft gains access to capabilities where Anthropic is particularly strong, likely in areas that demand high levels of safety, complex logical reasoning, or the long-context memory needed for cross-application coordination.

Corroborating the Trend: The Industry Moves Beyond Monoculture

This move aligns with broader industry analysis suggesting that platform vendors must diversify their LLM backends to future-proof their offerings. Industry watchers largely read Microsoft’s diversification of Copilot’s model suppliers as a calculated move to ensure resilience. Major cloud platforms, such as Microsoft Azure, are increasingly positioning themselves as "full-stack AI providers," meaning they host and facilitate access to the *best* models available, regardless of origin.

For the average business user, this means the AI in their daily tools should become smarter and more reliable, because each request is handed off to the LLM best suited to it. If you ask Copilot to summarize an email thread in Outlook, it might use a model optimized for conversational flow. If you then ask it to analyze a complex budget projection in Excel, it might seamlessly switch to Claude for its reported analytical depth.

Why Claude? Performance, Safety, and Specialized Excellence

The immediate question is: why not just rely on the latest GPT model? The answer lies in specialization and enterprise priorities. Comparisons of Claude and GPT for enterprise workflow automation point toward a performance optimization strategy:

- Task-Specific Optimization: Different LLMs excel at different things. GPT models might be world-class at creative writing or general knowledge retrieval, whereas Claude has demonstrated strength in handling extremely long documents (long-context understanding) and complex, step-by-step reasoning, which is crucial for tasks involving large datasets in Excel or detailed procedural workflows in Teams.

- Enterprise Security and Trust: Anthropic was explicitly founded with an intense focus on safety, using techniques like Constitutional AI to build guardrails directly into the model’s training. For CIOs handling sensitive PII or proprietary financial data within Microsoft 365, choosing a model known for rigorous safety standards can reduce governance risk.

The Governance Advantage: AI Safety as a Procurement Driver

For technology executives, risk management is paramount. Discussions of Anthropic’s safety focus and its enterprise adoption suggest that trust is becoming a primary driver in LLM procurement. Microsoft’s inclusion of Claude sends a powerful signal: its enterprise tools are built not just for performance, but for trustworthy execution. When Copilot Cowork autonomously runs a task that changes a budget forecast, enterprises need the highest degree of assurance regarding its outputs and behavior. Claude brings Anthropic’s safety focus directly into the Microsoft ecosystem.

The Technical Horizon: Interoperability and Frameworks

This integration confirms that the future of enterprise AI development rests on interoperability. Applications will not be written to be Model-A-specific; they must be written to leverage the entire "Model Zoo."

This reality is driving rapid innovation in developer frameworks. We are seeing a shift toward abstracting the LLM layer, much as virtualization and cloud computing abstracted away server hardware. Open-source orchestration SDKs such as Microsoft’s own Semantic Kernel, which can connect to models from multiple providers, are central to this shift.
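To make the abstraction concrete, here is a minimal Python sketch, assuming a hypothetical `ChatModel` interface with a single `complete` method. It is not Semantic Kernel's API or any vendor's SDK: `EchoModel` is a stand-in backend and `summarize_thread` is a hypothetical piece of application code. The point is that the application depends only on the interface, so the backend behind it can be swapped freely.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical provider-agnostic interface: prompt in, text out."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend (hypothetical). A real one would wrap a vendor's
    client library; this one just tags the prompt with its own name."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def summarize_thread(model: ChatModel, thread: list[str]) -> str:
    """Application code written against the interface, not a vendor.

    Nothing here names a specific model, so swapping backends requires
    no change to this function.
    """
    prompt = "Summarize:\n" + "\n".join(thread)
    return model.complete(prompt)


# The same application code runs unchanged on two different backends.
thread = ["Can we move the budget review?", "Yes, to Friday."]
print(summarize_thread(EchoModel("model-a"), thread))
print(summarize_thread(EchoModel("model-b"), thread))
```

Because `ChatModel` is a structural `Protocol`, any class with a `name` attribute and a matching `complete` method satisfies it without inheriting from it, which keeps backends decoupled from the application.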

Such frameworks can act as intelligent routers. They examine an incoming request, such as "Schedule a meeting, summarize the notes, and draft the follow-up email based on the spreadsheet," and decide:

- Step 1 (Excel Analysis): Send to Claude for deep numerical reasoning.

- Step 2 (Teams Scheduling): Send to GPT for conversational clarity and integration with Microsoft Graph APIs.

- Step 3 (Outlook Draft): Send back to the model optimized for conversational flow for final tone setting.

This is Model Chaining, or Orchestration, in action. Because each step goes to the model best suited to it, businesses should see higher-quality results, with fewer hallucinations and errors in complex, multi-step processes.
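The three-step routing described above can be sketched in Python. This is a minimal illustration under stated assumptions, not how Copilot or Semantic Kernel actually routes requests: the `ROUTES` table, the `"claude"` and `"gpt"` keys, and the stub models are all hypothetical placeholders. Each step is sent to the model its category maps to, and each step's output becomes the next step's input, which is what makes it a chain.

```python
from dataclasses import dataclass
from typing import Callable

# A "model" here is just a function from prompt to text. Real backends
# (hypothetical here) would wrap a vendor SDK call.
Model = Callable[[str], str]


def make_stub(label: str) -> Model:
    """Return a fake model that wraps its input so routing is visible."""
    def run(prompt: str) -> str:
        return f"{label}({prompt})"
    return run


# Hypothetical routing table: task category -> model key.
ROUTES: dict[str, str] = {
    "analysis": "claude",    # e.g. the Excel numerical analysis
    "scheduling": "gpt",     # e.g. the Teams scheduling step
    "drafting": "gpt",       # e.g. the Outlook follow-up draft
}


@dataclass
class Step:
    category: str     # used to look up a model in ROUTES
    instruction: str  # what this step should do


def run_chain(steps: list[Step], models: dict[str, Model], context: str) -> str:
    """Route each step to its model and feed its output into the next step."""
    result = context
    for step in steps:
        model = models[ROUTES[step.category]]
        result = model(f"{step.instruction}: {result}")
    return result


models = {"claude": make_stub("claude"), "gpt": make_stub("gpt")}
steps = [
    Step("analysis", "analyze"),
    Step("scheduling", "schedule"),
    Step("drafting", "draft"),
]
print(run_chain(steps, models, "budget.xlsx"))
# prints: gpt(draft: gpt(schedule: claude(analyze: budget.xlsx)))
```

In this sketch each step's category is assigned by hand. A production router would more likely classify the incoming request automatically and pass structured data between steps rather than concatenated strings.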

Practical Implications for Businesses Today

What does this multi-model reality mean for the organizations currently deploying or planning to deploy Copilot?

1. Higher Expectations for Automation Depth

If Copilot can now leverage the analytical strengths of Claude on your spreadsheets (Excel) and the organizational logic of its other components on your communications (Teams/Outlook), the ceiling for what you can automate rises significantly. You should start testing more complex, multi-application workflows, as the system is now architected to handle them.

2. Focus on Prompt Engineering for Task Routing (Implicitly)

While routing is currently managed by Microsoft’s backend, users will naturally learn which types of requests yield the best results. If you notice that complex data analysis reliably succeeds when a request is phrased a certain way, you might phrase future requests to encourage the system to engage its analytical component first. This is an emerging form of "meta-prompting": directing the AI toward the right internal tool.

3. Reduced Vendor Lock-in Anxiety

For enterprises worried about committing too heavily to one AI provider, Microsoft’s strategy is reassuring. It validates the idea that a robust AI strategy requires flexibility. This market dynamic pushes all major platform players—Google, Amazon, and Microsoft—to adopt similar multi-model architectures, benefiting the customer through continuous competition and innovation.

Societal Implications: Shaping the Next Generation of AI Ethics

Microsoft’s use of both OpenAI and Anthropic models is also a fascinating study in AI governance. These two companies have historically represented different philosophical approaches to AI safety. By bringing their models together under one enterprise umbrella, Microsoft is effectively creating a "safety-by-consensus" layer.

For society, this suggests that the most powerful AI tools deployed globally will be stress-tested against multiple ethical frameworks simultaneously. If Claude’s Constitutional AI principles act as a baseline for all tasks it handles, we can expect a slight uplift in the overall safety and predictability of enterprise-facing AI interactions, setting a higher bar for the entire industry.

The AI race is no longer just about who can build the biggest model; it’s about who can build the smartest, safest, and most adaptable *system* of models. Microsoft’s adoption of Claude within Copilot is the clearest signal yet that the era of monolithic LLMs is over, replaced by a sophisticated ecosystem where specialization and strategic interoperability reign supreme.