The Great Firewall of AI: Why OpenAI's Promptfoo Acquisition Signals Mandatory Enterprise Security

The narrative surrounding Generative AI has long focused on capability—how fast, how creative, and how intelligent models like GPT-4 truly are. But as these powerful tools move from exciting demos to essential infrastructure within banks, healthcare systems, and manufacturing floors, the conversation is shifting dramatically. It’s no longer about *what* AI can do, but *how safely* it can be deployed.

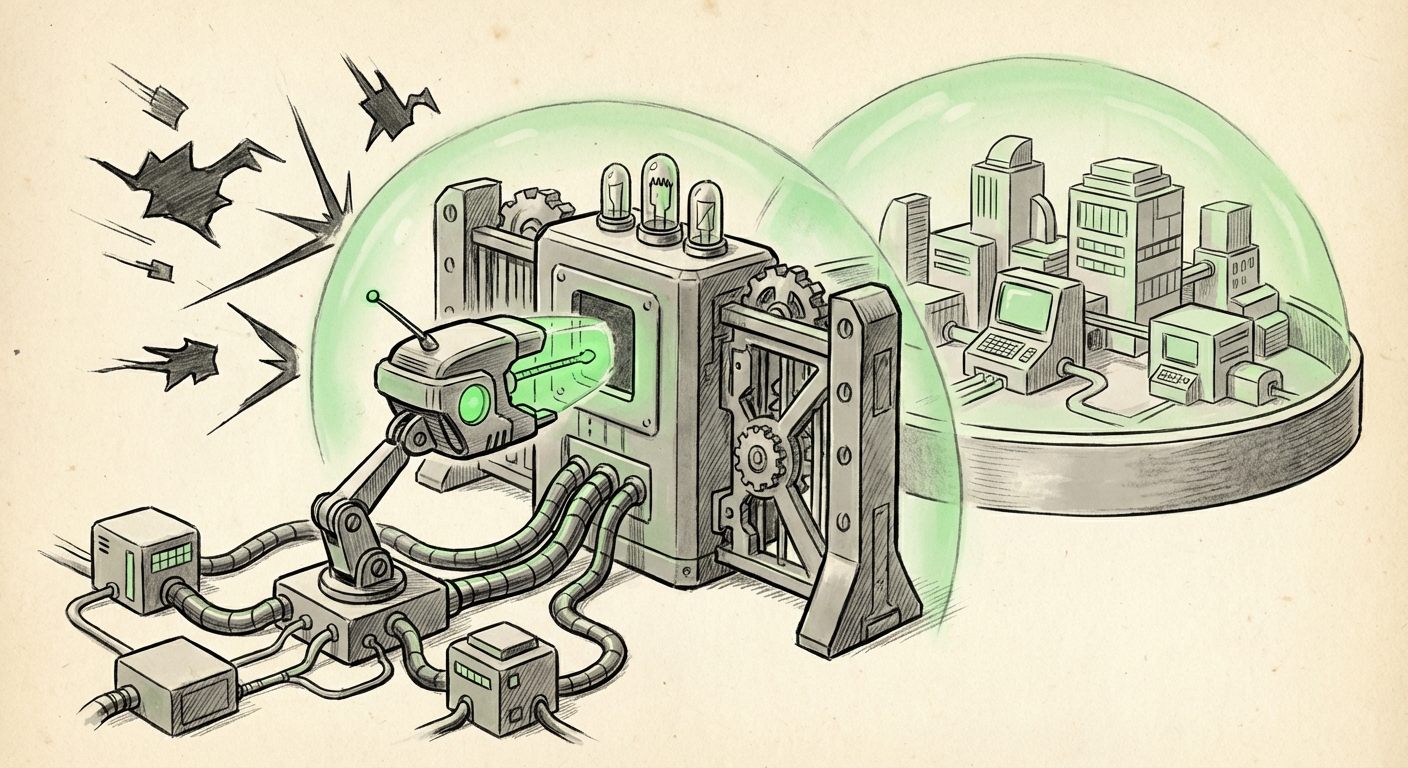

The recent news that OpenAI is acquiring **Promptfoo**, a specialized security testing platform, to build automated vulnerability checking directly into its **Frontier enterprise platform**, is not just a business transaction; it is a flashing signal that the AI industry has entered its maturity phase. This move means **AI security is rapidly transitioning from a specialized, optional concern to a mandatory, baseline requirement for large-scale deployment.**

The Maturity Curve: From Capability to Governance

For years, AI model development resembled the early internet: groundbreaking innovation outpacing adequate safety measures. Developers were focused on building amazing features, often bolting on security as an afterthought. However, the moment AI systems started handling sensitive customer data, making high-stakes decisions, or interacting with critical business logic, the risk profile changed completely.

This acquisition contextualizes the problem perfectly. Promptfoo specializes in testing models against adversarial inputs—the digital equivalent of trying to trick a guard. These threats include:

- Jailbreaks: Techniques used to bypass the model’s built-in safety guardrails to make it produce harmful, biased, or unauthorized content.

- Prompt Injections: When external, malicious user input overrides the system's original instructions, causing the AI to leak confidential data or execute unintended actions.

- Data Leaks: Ensuring that during processing or fine-tuning, sensitive proprietary data used by the enterprise does not inadvertently become part of the public-facing model output.

When a company like OpenAI—the industry leader—invests capital to internalize this specific defense mechanism, it validates the threat landscape. It tells Chief Technology Officers (CTOs) globally: "If we don't have automated testing for these vectors, you are operating at unacceptable risk." This is the **"End of the Wild West"** for AI deployment.

Context 1: Quantifying the Risk – The Escalating Threat Landscape

To understand the gravity of OpenAI’s move, we must look at the growing frequency of these attacks. While security research into these areas continues, statistics from leading security firms suggest that testing for vulnerabilities is no longer a theoretical exercise.

The complexity lies in the fact that these aren't traditional software bugs. They are exploits targeting the *language* and *reasoning* capabilities of the model itself. A prompt injection attack doesn't crash a server; it subtly hijacks the AI's objective. This demands security tools purpose-built for language models, which is precisely the market Promptfoo occupied.

For **Security Architects and Compliance Officers**, this means static code analysis tools are insufficient. They need continuous monitoring and testing frameworks that simulate real-world adversarial prompting. OpenAI absorbing Promptfoo means that enterprise clients using Frontier will have these vulnerability scans running automatically, likely before deployment—a huge leap for regulatory compliance where proof of due diligence is paramount.

Context 2: The Competitive Race for Enterprise Feature Parity

OpenAI does not operate in a vacuum. Its core business is now competing head-to-head with the massive cloud providers—Amazon (AWS), Google (GCP), and its primary partner, Microsoft (Azure). For enterprises choosing where to host their mission-critical AI workloads, security features are as important as API speed or cost.

This leads to the crucial point of **Competitive Feature Parity**. Microsoft, through its Azure AI ecosystem, already offers robust built-in safety mechanisms, such as the Azure AI Content Safety system. This system provides layers of filtering for harmful content and policy adherence. When a customer sees that OpenAI’s enterprise offering, Frontier, is actively acquiring best-in-class external testing capabilities, it’s a clear strategic countermove.

For **Enterprise Architects**, this means that security tooling is no longer a customizable add-on but a core feature expected across the board. If one major platform integrates automated security testing natively, others must follow suit rapidly, or risk being perceived as the less secure option for regulated workloads. This acquisition accelerates the commoditization of basic AI safety features, pushing the competitive edge toward model performance and integration ecosystems.

Context 3: The DevSecOps Revolution for LLMs

The most profound implication of baking security testing directly into the platform concerns the workflow of development. The industry is rapidly moving toward **AI DevSecOps**. In traditional software development, we use SAST (Static Application Security Testing) and DAST (Dynamic Application Security Testing) tools integrated into Continuous Integration/Continuous Deployment (CI/CD) pipelines.

The acquisition signals the formalization of LLM-specific security testing within this pipeline. Instead of building an application, deploying it, and *then* having a red team try to break it, enterprises will soon expect security scans—specifically targeting prompt injection and data leakage—to run every time a new fine-tuned model version is prepared for deployment.

This shift is vital for **DevOps Engineers and AppSec Specialists**. It demands a new understanding of security governance. They must learn to write effective, automated tests (like those Promptfoo specializes in) that probe the model's language understanding, ensuring that system prompts remain intact regardless of user interaction.

By integrating this capability into Frontier, OpenAI is advocating for an ecosystem where security is checked at the source, before the model ever serves a production query, moving security "further left" in the development lifecycle.

The Enterprise Appetite: Trust as the Ultimate Currency

Why are enterprises so eager for these built-in tools? Because adoption hinges entirely on trust, particularly in risk-averse sectors.

When a bank deploys an AI to summarize client portfolios, a single successful prompt injection that exposes confidential financial data can result in massive regulatory fines and irreparable reputational damage. Simply signing a contract with OpenAI stating they are responsible for security is insufficient; organizations need **verifiable, auditable proof** that the system they are using has been rigorously tested against known exploits.

This means that for **Investors and Governance Teams**, the roadmap for the Frontier platform now critically includes security metrics. Quarterly reviews will shift to include reports on failed penetration tests against the LLM interface, similar to how they currently review traditional application security posture.

The acquisition therefore addresses the market's highest demand: **reducing operational liability.** By providing a native, automated testing suite, OpenAI is reducing the friction for heavily regulated industries to move their most sensitive tasks onto their platform.

Looking Forward: Actionable Insights for the AI Landscape

What does this mean for the immediate future of AI technology adoption?

1. Security Tooling Consolidation

We will likely see other major model providers respond by either acquiring similar specialized security firms or rapidly building equivalent, native capabilities. The era of third-party, separate LLM testing tools may shrink as core security functions are bundled into platform fees.

2. Rise of the AI Security Engineer (AISE)

While core security is automated, new roles will emerge dedicated to managing, interpreting, and hardening these automated results. The AI Security Engineer will be crucial—less focused on code and more focused on adversarial language strategy and complex governance policy enforcement within the automated testing frameworks.

3. Focus Shifts to Custom Model Hardening

For companies that choose to fine-tune or host their own models (away from major platforms), the need for tools like Promptfoo remains critical. The general market shift sets a new minimum standard. If OpenAI is baking this in for enterprise, companies deploying bespoke models must demonstrate equivalent, demonstrable testing protocols.

Ultimately, OpenAI’s acquisition of Promptfoo marks a pivotal moment. It’s a tacit admission that raw intelligence without rigorous, automated defense is an enterprise liability. The future of AI deployment isn't just about building smarter models; it's about building smarter firewalls around them. As we integrate these systems deeper into the global economy, security testing is becoming the non-negotiable foundation upon which all future innovation must rest.