The Omni-Model Revolution: Why OpenAI’s Next Leap Means True Multimodal Intelligence

The landscape of Artificial Intelligence is defined by eras. We moved from simple task-specific models to large language models (LLMs) that conquered text. Then came spectacular advances in generation, exemplified by models like Sora creating hyper-realistic video from text prompts. But what happens when the pipeline between these specialized models breaks down? Recent hints from OpenAI employees suggest the answer may lie in a single, massive step forward: the development of an Omni-Model.

This potential shift, hinted at by internal project codenames like "BiDi" (possibly standing for Bi-Directional or Bi-Modal integration), would mark the move from a collection of specialized tools to a single, unified brain capable of understanding and generating across *all* senses simultaneously. For analysts and technologists alike, this would not be an incremental upgrade but a fundamental change in AI architecture, one that could mark a real acceleration toward Artificial General Intelligence (AGI).

The Problem with Specialized Intelligence: Why Stitching Fails

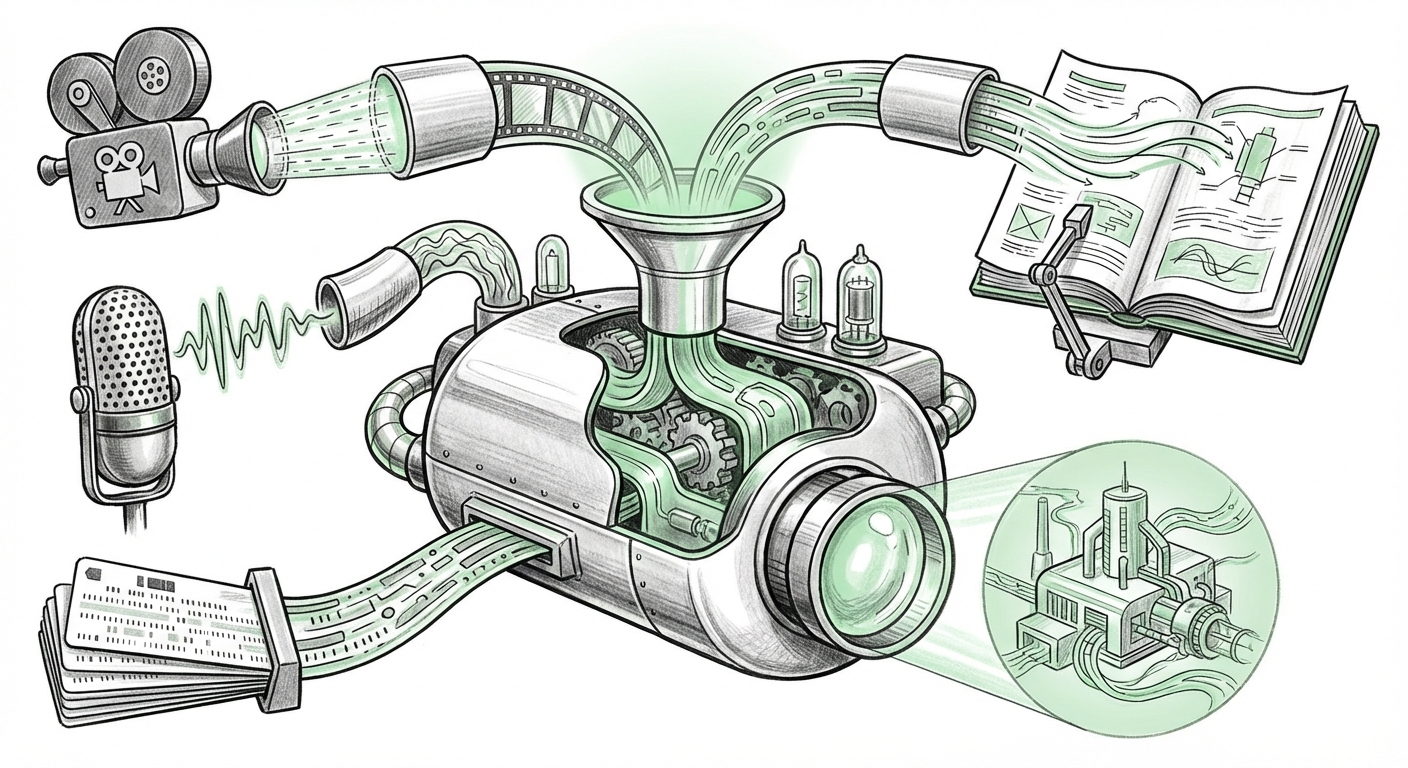

To understand the excitement around an Omni-Model, we must first recognize the current limitation. Today’s powerful AI systems often operate like highly skilled teams, not unified individuals. If you ask GPT-4 to write a scene, it handles the text. If you then ask Sora to generate the video based on that text, the systems must communicate. This communication requires translation.

Imagine trying to describe a complex ballet performance to someone who has only ever read about dance. You have to use words (text tokens) to describe motion, rhythm, and visual spectacle. Information is always lost in translation.

Technically, this means:

- Tokenization Barriers: Text uses tokens, images use patch embeddings, and audio uses spectrograms. These different 'languages' must be translated before one model can process the output of another.

- Latency and Efficiency: Running three or four separate large models sequentially adds significant processing time and computational cost.

- Coherence Drift: When sequential models handle different aspects, the final output can suffer from a lack of deep, unified reasoning across the modalities. The video might look right, but the accompanying sound or the logic of the script might fail subtly.
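Two of these costs can be made concrete with a deliberately simplified toy model. The sketch below assumes a stitched pipeline in which the only thing passed between models is a text caption; `handoff_loss`, `sequential_latency`, the scene attributes, and the stage timings are all hypothetical illustrations, not measurements of any real system.

```python
def handoff_loss(caption: str, attributes: dict) -> list:
    """Attributes whose values never appear in the text handed to the next model.

    Toy assumption: a downstream model only 'knows' what the caption says,
    so any attribute not mentioned in it is lost in translation.
    """
    text = caption.lower()
    return sorted(k for k, v in attributes.items() if str(v).lower() not in text)


def sequential_latency(stage_ms: list) -> float:
    """Stages that run one after another add their latencies together."""
    return sum(stage_ms)


# Hypothetical scene: visual and audio properties the first model perceived.
caption = "A dancer leaps across a dark stage"
scene = {"lighting": "dark", "contrast": "high", "tempo_bpm": 140, "harmony": "dissonant"}

print(handoff_loss(caption, scene))           # ['contrast', 'harmony', 'tempo_bpm']
print(sequential_latency([800, 4000, 1200]))  # 6000 (hypothetical text, video, audio stage times in ms)
```

Only "dark" survives the handoff; contrast, tempo, and harmony never reach the video and audio stages, which is exactly the kind of gap that produces coherence drift.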

Corroboration and Competition: The Multimodal Race is On

The theory of an OpenAI Omni-Model is not emerging in a vacuum. Market dynamics and competitive pressure strongly suggest this architectural pivot is necessary, and our reading of the corroborating evidence points to native multimodality as the industry's next battleground:

1. The Gemini Baseline: Setting the Standard for Native Multimodality

A look at competing offerings shows that rivals are already staking their claim on native multimodality. Google’s Gemini was explicitly designed to ingest and reason across text, code, audio, and images from the ground up. Recent developments, such as Gemini 1.5 Pro’s very long context window and its ability to understand video, show a strong commitment to deeply integrated processing.

For OpenAI, an Omni-Model would be less about catching up and more about leapfrogging. If Gemini can natively reason about the contents of a long video file, OpenAI’s answer (the theoretical "BiDi" model) would need to demonstrate superior contextual integration, perhaps by linking visual concepts directly to emotional tones in audio streams with unprecedented accuracy.

2. The Architectural Blueprint: Seeking Unified Transformers

The shift from component systems to unified systems is a major engineering hurdle. Technical discussions of unified sequence models and transformer architectures show that a central open problem is mapping inputs from vastly different domains (pixels vs. words) into a single, coherent latent space. An Omni-Model would tackle this by training one massive network on all data types jointly. This requires enormous scale but could yield emergent cross-modal reasoning that stitched pipelines struggle to achieve.

For the technical audience, this means a transition from models trained mainly to predict the next token of text (like GPT-3) toward models that learn something closer to a *world simulation*.
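The input side of such a unified model can be sketched in a few lines. This is a toy, assumption-laden illustration of one common pattern (per-modality linear projections into a shared width, plus a modality tag, concatenated into one sequence); it is not OpenAI's architecture. The dimensions, the random weights standing in for learned ones, and the one-hot tags standing in for learned modality embeddings are all hypothetical.

```python
import random

D_MODEL = 4  # toy shared width; real models use far larger widths

# Hypothetical raw feature sizes per modality: a text token embedding,
# a flattened image patch, and one audio spectrogram frame.
RAW_DIMS = {"text": 3, "image": 6, "audio": 5}


def make_projection(in_dim: int, out_dim: int, seed: int) -> list:
    """Random stand-in for a learned per-modality linear layer (out_dim x in_dim)."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]


def project(vec: list, weights: list) -> list:
    """Matrix-vector product: maps a raw feature vector into the shared space."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weights]


PROJ = {m: make_projection(d, D_MODEL, seed=i) for i, (m, d) in enumerate(RAW_DIMS.items())}
# One-hot modality tags stand in for learned modality embeddings.
MODALITY_TAG = {m: [float(i == j) for j in range(D_MODEL)] for i, m in enumerate(RAW_DIMS)}


def unify(inputs: list) -> list:
    """Turn (modality, raw_vector) pairs into one sequence of D_MODEL-wide vectors."""
    sequence = []
    for modality, raw in inputs:
        if len(raw) != RAW_DIMS[modality]:
            raise ValueError(f"{modality} expects {RAW_DIMS[modality]} features, got {len(raw)}")
        z = project(raw, PROJ[modality])
        sequence.append([a + b for a, b in zip(z, MODALITY_TAG[modality])])
    return sequence


seq = unify([("text", [0.2, 0.1, 0.0]), ("image", [0.5] * 6), ("audio", [0.1] * 5)])
print(len(seq), {len(v) for v in seq})  # 3 {4}
```

Once every input lives in the same width, a single transformer can attend across text, image, and audio positions in one sequence; the hard part the article describes is learning projections that make those positions genuinely comparable.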

3. Executive Vision: The March Toward AGI

The ultimate goal driving this architecture is AGI. When leading figures like Sam Altman discuss the future, the language consistently moves away from "language models" toward "reasoning systems." Recent analyses of Altman's vision for the next frontier often point toward systems that can interact with the physical world, a requirement that demands true multimodal fluency. You cannot control a robot or design complex engineering solutions if you cannot reliably connect the sight of a wrench, the sound of its use, and the textual instructions for its assembly.

What the Omni-Model Means for the Future of AI

If OpenAI successfully deploys an Omni-Model, the most immediate impact will likely be felt in reasoning, agency, and creative synthesis. We would be moving from "AI generating content" to "AI understanding reality."

Enhanced, True Reasoning

The biggest gain is in cross-modal reasoning. Consider the difference:

- Current System: User inputs text: "Make the music tense." The text model sends this to the music model, which guesses at 'tense' music, possibly by selecting a minor key.

- Omni-Model System: The model would inherently understand what 'tense' means across visual tension (a scene shot with high contrast), auditory tension (dissonant chords), and narrative tension (a cliffhanger script). It could generate all outputs coherently because they draw on a single, shared representation of the concept.

The Next Frontier in Creative Synthesis

The implications for creative and design industries are substantial, a theme echoed in many forward-looking reports on multimodal AI in workflow automation. Imagine an architect feeding the system an aerial drone video of a site, conversational audio notes about client preferences (e.g., "I want more light here, but keep the view"), and regulatory documents (text). An Omni-Model could, in principle, generate draft 3D blueprints checked against those regulations, rendered video walkthroughs, and a summary budget in a single pass.

This level of integration moves AI from being a simple tool to an active, multi-skilled partner.

Efficiency and Accessibility

From an engineering standpoint, consolidating multiple specialized models into one unified core promises massive efficiency gains. Fewer endpoints, simpler deployment, and greater optimization mean that high-fidelity multimodal AI could become cheaper and faster to run, democratizing complex capabilities previously restricted to well-funded labs.

Actionable Insights for Business Leaders and Technologists

Whether the "BiDi" project is the final form or just a stepping stone, the direction is clear: specialized AI silos are becoming obsolete. Enterprises should prepare for unified intelligence.

For Technology Strategists: Embrace Unified Data Pipelines

Stop building separate data pipelines for your image recognition tasks, your NLP tasks, and your speech processing. Begin architecting systems that can handle and cross-reference data streams concurrently. The winners in the next two years will be those who can feed their models rich, messy, real-world data, not just clean text logs.
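One practical first step toward cross-referencing streams is putting them on a shared clock. The sketch below is a minimal illustration under that assumption: every stream (video captions, audio transcripts, sensor readings) emits timestamped events, and each event from an "anchor" stream is linked to whatever the other streams recorded within a time window. The `Event` type, `cross_reference` helper, stream names, and sample log are hypothetical.

```python
from bisect import bisect_left, bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen makes events hashable, so they can be dict keys
class Event:
    stream: str    # e.g. "video", "audio", "sensor" (illustrative stream names)
    t: float       # seconds on a clock shared by all streams
    payload: str   # caption, transcript snippet, reading, etc.


def cross_reference(events: list, anchor: str, window_s: float) -> dict:
    """Map each anchor-stream event to events from other streams within +/- window_s."""
    others = sorted((e for e in events if e.stream != anchor), key=lambda e: e.t)
    times = [e.t for e in others]
    linked = {}
    for a in sorted((e for e in events if e.stream == anchor), key=lambda e: e.t):
        # Binary search over the sorted timestamps finds the window's bounds.
        lo = bisect_left(times, a.t - window_s)
        hi = bisect_right(times, a.t + window_s)
        linked[a] = others[lo:hi]
    return linked


log = [
    Event("video", 10.0, "forklift enters bay 3"),
    Event("audio", 10.4, "operator: watch the left side"),
    Event("sensor", 30.0, "dock door opened"),
    Event("video", 31.0, "door opens, daylight floods bay"),
]
for clip, context in cross_reference(log, "video", window_s=1.5).items():
    print(clip.t, [e.stream for e in context])
# 10.0 ['audio']
# 31.0 ['sensor']
```

The design choice worth copying is the shared timeline rather than the specific code: once every stream is timestamped consistently, joining modalities becomes a query instead of a separate integration project.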

For Business Leaders: Redefine Workflow Boundaries

Identify workflows that currently require human handoffs between content creation, data analysis, and communication. If a task requires someone to watch a video, write a summary, and then create a presentation slide, this entire sequence is now ripe for single-shot automation by an Omni-Model. Look for bottlenecks in sensory translation within your organization.

For Developers: Master Prompt Engineering Across Dimensions

Prompting will evolve from crafting perfect sentences to crafting perfect *scenarios*. Learning how to instruct an AI model using a combination of written direction, visual examples, and auditory cues simultaneously will be the critical skill. Developers must start thinking in parallel streams of sensory input.
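A "scenario" prompt can be thought of as an ordered list of typed parts, each with a stated purpose. The sketch below is loosely modeled on the typed content-part lists that several chat APIs accept, but the `Part` and `ScenarioPrompt` classes, their field names, and the file paths are hypothetical; this is not any vendor's schema, and the example does not call any API.

```python
from dataclasses import dataclass, field
from typing import List, Literal

Modality = Literal["text", "image", "audio", "video"]


@dataclass
class Part:
    modality: Modality
    content: str        # the text itself, or a path/URI to a media file
    purpose: str = ""   # what the part is for: "direction", "visual reference", "mood"


@dataclass
class ScenarioPrompt:
    parts: List[Part] = field(default_factory=list)

    def add(self, modality: Modality, content: str, purpose: str = "") -> "ScenarioPrompt":
        self.parts.append(Part(modality, content, purpose))
        return self  # return self so calls can be chained

    def modalities(self) -> list:
        return sorted({p.modality for p in self.parts})

    def to_payload(self) -> list:
        """Ordered, typed parts; field names are illustrative, not a vendor schema."""
        return [{"type": p.modality, "content": p.content, "purpose": p.purpose} for p in self.parts]


prompt = (
    ScenarioPrompt()
    .add("text", "Cut a 30-second teaser; build tension toward the final shot.", "direction")
    .add("image", "refs/color_palette.png", "visual reference")
    .add("audio", "refs/temp_score.wav", "mood")
)
print(prompt.modalities())  # ['audio', 'image', 'text']
```

Labeling each part's purpose explicitly is the point: it tells the model how the written direction, the visual example, and the auditory cue relate, rather than leaving it to infer that from order alone.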

Conclusion: The Convergence Is the Inevitable Next Step

The journey toward Artificial General Intelligence is paved with architectural breakthroughs. The leap from text-centric models to specialized multimodal systems was huge, but the realization that these specialized systems are inherently inefficient has led us to the next logical, necessary destination: the Omni-Model.

OpenAI’s reported work on this unified architecture, whether called BiDi or something else, is not just a competitive maneuver against rivals like Google; it is the structural prerequisite for unlocking deeper, more human-like comprehension. When an AI can see, hear, read, and reason about all these inputs as a single, unified experience, it stops being a tool of simulation and starts becoming a true agent of understanding. The era of truly integrated AI is no longer on the distant horizon; the foundations are being laid right now in the race for the Omni-Model.