900 Million Users Strong: Decoding the Infrastructure, Competition, and Future of Mainstream AI Adoption

The digital world just hit a new milestone. Reports that ChatGPT now serves an astonishing 900 million weekly users signal far more than the success of a popular new app: they mark the moment generative AI moved from technological novelty to a foundational layer of modern digital life. Adoption this rapid and this large forces us, as analysts, to move beyond celebrating user counts. We must rigorously examine how this scale is sustained, who is winning the ensuing battle for dominance, and where this shift leads the global economy.

To truly understand this paradigm shift, we must contextualize this adoption across three critical vectors: the immense technological engine required to support it, the fierce competition rising to meet user demand, and the profound economic implications for enterprises.

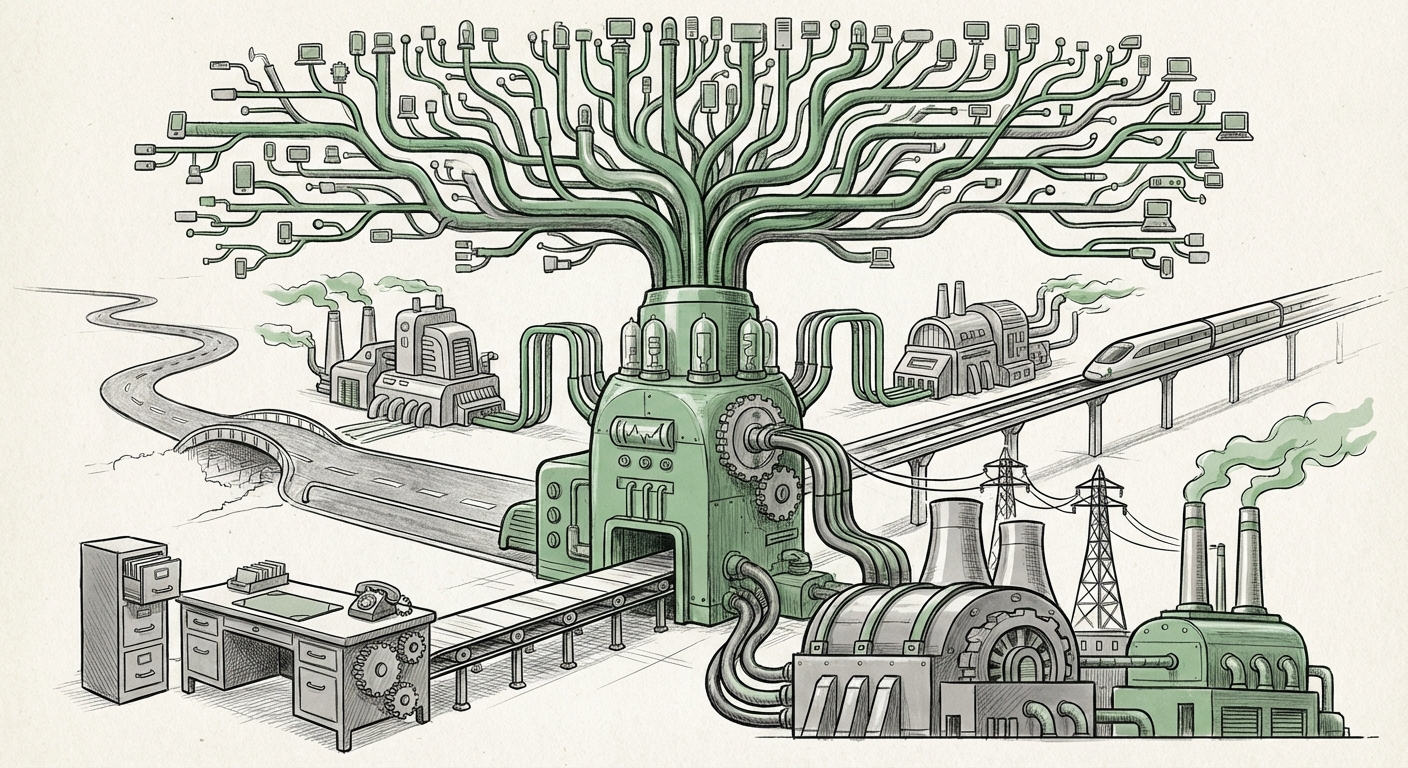

The Invisible Engine: Infrastructure Demands of Mass AI Service

Every query typed into a chatbot by one of those 900 million weekly users triggers real computation on remote servers. Supporting this volume is far from trivial; it requires an unprecedented commitment to hardware and data center architecture. This is the "how" behind the magic.

Research into cloud computing infrastructure demand for LLMs in 2024 and 2025 highlights the immediate pressure points. Running Large Language Models (LLMs) at scale is expensive and power-hungry, requiring vast arrays of specialized processors, primarily high-end Graphics Processing Units (GPUs). If ChatGPT handles hundreds of millions of complex interactions daily, the power and cooling requirements of the server farms behind it can rival those of a small city.

The Chip Wars and Cloud Dominance

This demand validates the intense strategic focus on AI-specific hardware. Companies like NVIDIA, which design the dominant AI chips, are seeing historic valuations because they supply the literal engine for this adoption. Meanwhile, the major cloud providers (Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP)) are locked in an arms race to build proprietary "AI Superclusters."

For the technical audience, this makes architectural decisions paramount: moving from simple scaling to optimized inference pipelines, adopting quantization (storing weights at lower numerical precision, such as 8-bit integers instead of 16-bit floats) to shrink models without crippling performance, and embracing custom silicon (like Google’s TPUs or AWS’s Trainium and Inferentia chips) designed specifically for the repetitive, massive matrix multiplications that power LLMs.
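The quantization idea above can be illustrated with a minimal sketch. It assumes a simple symmetric, per-tensor scheme (one shared scale for the whole tensor, integer codes clamped to [-127, 127]), so each weight occupies 1 byte instead of the 2 bytes of a 16-bit float, at the cost of a rounding error of at most half the scale. The function names are hypothetical; real deployments typically use per-channel scales, calibration data, and dedicated low-precision kernels.

```python
"""Minimal sketch of symmetric, per-tensor int8 weight quantization.

Illustrative only (hypothetical helper names): production stacks use
per-channel scales, calibration data, and fused low-precision kernels.
"""


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Guard against an all-zero tensor, which would otherwise divide by zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_int8(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from int8 codes and the scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    w = [0.82, -1.27, 0.003, 0.5, -0.25]
    codes, s = quantize_int8(w)
    w_hat = dequantize_int8(codes, s)
    max_err = max(abs(a - b) for a, b in zip(w, w_hat))
    print("codes:", codes)
    print(f"scale={s:.5f}  max abs error={max_err:.5f}  (bound: scale/2)")
```

The trade-off is visible in the output: memory per weight drops sharply, while small weights (like `0.003` here) collapse to zero, which is why finer-grained scales are common in practice.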

Implication for the Future: Broad access to AI is currently bottlenecked by the physical availability of advanced hardware. Future progress may hinge less on new algorithms and more on engineering gains in chip efficiency and data center logistics. This concentration of infrastructure gives immense leverage to the hyperscalers who own the chips and the data centers.

The Battle Royale: Competition Reshaping the Landscape

Nine hundred million users on a single platform send a clear signal to the entire tech world: the market for conversational AI is enormous. Uptake on this scale naturally triggers intense strategic responses from rivals.

Market share and user statistics for Google Gemini versus OpenAI in 2024 provide the competitive context. OpenAI (backed by Microsoft) enjoyed a first-mover advantage, capturing mindshare and user habits early. However, incumbents like Google have unparalleled access to real-time data and search infrastructure, which gives their models an edge in grounding responses in current facts.

Beyond Chat: The Fight for Integration

Competition is no longer just about who has the best chat interface. It’s about strategic embedding. If the user base is this large, the next phase is locking those users into ecosystems:

- Operating Systems: Integrating AI deeply into Windows, Android, and macOS, making the AI assistant the default entry point for many computing tasks.

- Productivity Suites: Embedding assistants directly into email, spreadsheets, and document creation tools, effectively turning them into co-pilots for all knowledge work.

- Open Source Challenge: Models released by Meta (like Llama) are crucial competitors, offering a path for smaller companies and nations to deploy powerful AI without relying solely on Big Tech APIs, fostering fragmentation and innovation at the edges.

Implication for the Future: We are witnessing a rapid stratification of the AI market. There will be the dominant platform models (likely those leveraging the largest training data and compute budgets) and a vibrant, highly specialized ecosystem of smaller, fine-tuned models tailored for niche professional tasks. Users will likely use multiple AIs depending on the task.

The Economic Tectonic Plates: Enterprise Adoption and Shifting Behaviors

The consumer explosion validates the technology, but sustained AI dominance requires embedding it into the machinery of business. Enterprise adoption data for generative AI tools from Q4 2024 suggests that the consumer wave has served as a massive, free beta test, accelerating corporate readiness.

From Novelty to Necessity in the Workplace

For CIOs and business strategists, the 900 million user statistic is a mandate: much of their workforce is likely already comfortable interacting with AI. The conversation has shifted from "Should we use AI?" to "How quickly can we integrate it securely to maximize productivity?"

This integration is most visible in automation and knowledge management. Enterprises are using private, fine-tuned LLMs to handle customer support, rapidly synthesize internal documents, and accelerate code generation. The economic implication is clear: significant productivity gains are achievable now, though they often require retraining staff to manage and audit AI outputs rather than perform the manual tasks themselves.

The Death of the Blue Link?

Perhaps the most disruptive implication stems from the way these users are accessing information. When people use ChatGPT for answers, they are often bypassing the traditional portal for finding information online: the search engine.

Studies of AI's impact on search engine market share in Q1 2025 point to growing stress on the traditional ad-supported search model. If a user gets a synthesized answer directly from an AI chatbot, they never click on the ten blue links that generate revenue for search providers. Future AI agents must source and cite information accurately while sustaining a revenue model that covers their massive infrastructure costs.

This means the next generation of AI systems won't just answer questions; they will act as transaction agents (booking flights, managing schedules, and executing trades), justifying their operational cost through transaction fees or specialized subscriptions rather than relying on general advertising.
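The requirement that an AI agent source and cite its information can be sketched as a retrieval step that keeps every passage tied to its origin. This is a minimal illustration under stated assumptions: the `Source` type, the keyword-overlap scoring, and the example.com URLs are all hypothetical stand-ins. Real systems typically use embedding-based retrieval and have a language model compose the final answer from the retrieved passages.

```python
"""Minimal sketch: return retrieved passages with numbered citations.

Hypothetical throughout: the corpus, URLs, and keyword-overlap scoring
stand in for a real retriever (usually embedding-based).
"""
import re
from dataclasses import dataclass


@dataclass
class Source:
    url: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve_with_citations(
    query: str, corpus: list[Source], k: int = 2
) -> tuple[str, list[str]]:
    """Rank sources by word overlap with the query and return the top-k
    passages, each tagged [n], plus the matching numbered source list."""
    q = tokenize(query)
    scored = [(len(q & tokenize(s.text)), i, s) for i, s in enumerate(corpus)]
    # Highest overlap first; ties keep corpus order. Zero-overlap sources are dropped.
    ordered = sorted(scored, key=lambda t: (-t[0], t[1]))
    ranked = [s for score, _, s in ordered if score > 0][:k]
    body = " ".join(f"{s.text} [{n}]" for n, s in enumerate(ranked, start=1))
    refs = [f"[{n}] {s.url}" for n, s in enumerate(ranked, start=1)]
    return body, refs


if __name__ == "__main__":
    corpus = [
        Source("https://example.com/a", "Flight prices rise in summer."),
        Source("https://example.com/b", "GPUs accelerate matrix multiplication."),
        Source("https://example.com/c", "Summer flight demand peaks in July."),
    ]
    body, refs = retrieve_with_citations("When do summer flight prices peak?", corpus)
    print(body)
    print("\n".join(refs))
```

Because each passage carries its own `[n]` marker, a reader (or an auditing tool) can trace every claim back to a source, and an empty result signals that nothing in the corpus supports an answer.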

Actionable Insights: Navigating the New AI Paradigm

For stakeholders across the technology and business spectrum, the massive adoption curve demands proactive strategies:

For Technology Leaders (Engineers & Architects):

- Prioritize Inference Optimization: Focus less on simply training larger models and more on optimizing the *serving* layer. Efficiency in generating responses (inference) is the key to managing the cost of 900 million weekly interactions.

- Embrace Multi-Modality: The next wave won't just be text. Users expect seamless interaction across voice, video, and code. Invest in models and pipelines that handle diverse data types natively.
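One common inference optimization behind the "serving layer" advice is batching: grouping concurrent requests so that a single pass over the model weights serves many users at once. The sketch below assumes a simple request-level batcher with hypothetical limits (`max_batch_size`, `max_batch_tokens`) and hypothetical names; production serving engines commonly use continuous (iteration-level) batching and KV-cache management, which this example does not attempt.

```python
"""Minimal sketch of request-level batching for an LLM serving layer.

Assumption: the engine can process a batch of prompts together, so
grouping requests amortizes the cost of reading model weights. The
limits used below are hypothetical, not any provider's configuration.
"""
from dataclasses import dataclass


@dataclass
class Request:
    request_id: str
    prompt_tokens: int


def form_batches(
    queue: list[Request], max_batch_size: int, max_batch_tokens: int
) -> list[list[Request]]:
    """Greedily pack queued requests (FIFO order) into batches that respect
    both a request-count limit and a total-token budget."""
    batches: list[list[Request]] = []
    current: list[Request] = []
    current_tokens = 0
    for req in queue:
        too_many = len(current) >= max_batch_size
        too_big = current_tokens + req.prompt_tokens > max_batch_tokens
        if current and (too_many or too_big):
            batches.append(current)
            current, current_tokens = [], 0
        # An oversized request still gets its own batch instead of being dropped.
        current.append(req)
        current_tokens += req.prompt_tokens
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    sizes = [120, 800, 60, 300, 2000, 90, 450]
    queue = [Request(f"r{i}", n) for i, n in enumerate(sizes)]
    batches = form_batches(queue, max_batch_size=4, max_batch_tokens=1500)
    for batch in batches:
        print([r.request_id for r in batch], sum(r.prompt_tokens for r in batch))
    print(f"{len(queue)} requests packed into {len(batches)} batches")
```

The two limits pull in opposite directions: larger batches raise hardware utilization, while the token budget keeps one long prompt from stalling many short ones.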

For Business Leaders (Executives & Strategists):

- Audit AI Readiness, Not Just Adoption: Assess existing data governance, security protocols, and employee skill gaps before deploying LLMs enterprise-wide. Scalability is useless if compliance fails.

- Reimagine Value Chains: Identify the 20% of your knowledge work that is highly repetitive and automatable. Focus on repurposing the time saved by AI into high-value, human-centric tasks that require creativity, complex negotiation, or deep empathy.

For the Public (Consumers & Educators):

- Cultivate AI Literacy: Understand the biases and limitations of these tools. Treat AI output as a first draft or a highly informed suggestion, not infallible truth.

- Demand Transparency: Support platforms and providers that are transparent about their data usage and environmental impact, especially as the energy demands of supporting billions of interactions grow.

Conclusion: The Great Acceleration

The reported figure of 900 million weekly users for ChatGPT is the clearest evidence yet that AI has entered hyper-growth territory. This is no longer a niche technology; it is infrastructure. Its success places immense pressure on the global computing fabric, accelerates the competitive battles between tech giants, and demands immediate strategic adjustments in every sector of the economy.

What happens next will be defined by how quickly the industry can make the infrastructure cheaper and more decentralized, how effectively competitors challenge the incumbents for deep workflow integration, and how responsibly businesses adapt their core processes. The age of the AI co-pilot is not on the horizon; it is already fully operational, and we have all signed up for the ride.