The Great AI Data Wall: Amazon vs. Perplexity and the Future of Autonomous Agents

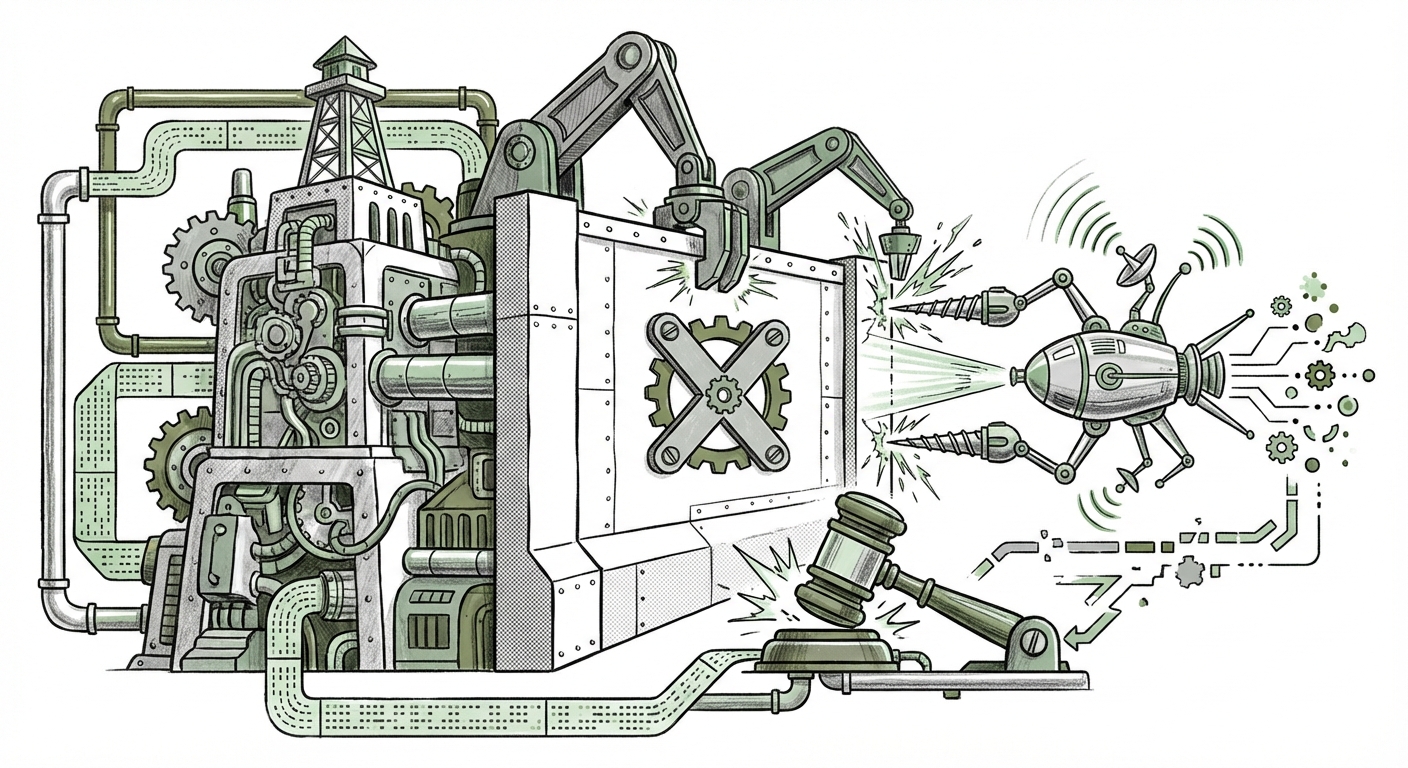

The technological race for Artificial Intelligence dominance is no longer just about who has the biggest Large Language Model (LLM); it’s about who controls the **data pipelines** that feed those models the most accurate, real-time information. The recent legal confrontation between retail giant Amazon and the AI search innovator Perplexity AI serves as a dramatic illustration of this new battleground.

Amazon secured a court order to block Perplexity’s emerging AI shopping agent, which was reportedly using Amazon’s public-facing marketplace data to provide curated purchasing recommendations. This isn't merely a squabble over scraping; it is a pivotal moment defining the boundaries between AI innovation, public web access, and proprietary data rights. For technologists, business leaders, and legal scholars, this case signals a critical inflection point for the viability of autonomous AI agents across every digital industry.

The Rise of the Agent: From Search to Shopping Authority

For years, search engines answered questions. Now, AI agents are being designed to perform tasks. Perplexity, known for its grounded, sourced answers, was extending this capability into e-commerce. An AI shopping agent promises an experience that transcends traditional search: it can compare product variations across multiple retailers, factor in user reviews, synthesize shipping times, and present a final, actionable recommendation—all instantly.

This capability represents a profound shift. Traditional e-commerce relies on funneling traffic directly to a specific retailer’s site. An intermediary agent that seamlessly draws from *all* major platforms (Amazon, eBay, and direct-to-consumer sites alike) threatens to disintermediate the established retail hierarchy. Autonomous AI shopping agents are widely seen as the next frontier in commerce innovation: they promise efficiency for consumers but pose an existential threat to platforms whose value is built on controlling their own data ecosystem.

Why Amazon Fought Back Aggressively

Amazon’s response was swift and decisive, leveraging the court system to enforce an immediate halt. Why such a strong reaction? It stems from Amazon’s long-standing, highly protective stance on its marketplace data. Amazon’s platform is not just a website; it is a complex, dynamic dataset of consumer intent, pricing elasticity, and competitive positioning. For Amazon, this data is the engine of its competitive advantage, and the company's historical policies show a pattern of fiercely guarding against third-party aggregation that doesn't benefit its bottom line.

When an entity like Perplexity uses sophisticated automation to ingest this massive, constantly refreshed dataset, not merely to display it but to synthesize new products (such as shopping lists) from it, Amazon views this as unauthorized extraction of proprietary competitive intelligence. In simple terms, Amazon doesn't want a competitor using its data to build a better shopping recommendation engine that pulls users away from Amazon Prime.

The Legal Crucible: Scraping and Trespass to Chattels

The heart of the Amazon vs. Perplexity fight lies in established, yet evolving, legal precedent surrounding web scraping. The key question is: Does data visible on a public website belong to the website owner when an automated system accesses it without permission?

The legal framework governing this is heavily influenced by cases like *hiQ Labs v. LinkedIn*. This landmark case wrestled with whether data that is publicly available on the web can be scraped. While the Ninth Circuit ruling offered some protection for scraping data that is freely viewable, the lines blur when the access mechanism violates a site’s Terms of Service (TOS) or uses technical means designed to bypass security or identification measures.

Amazon's request for an injunction likely argued that Perplexity’s methods constituted a "trespass to chattels" or an unauthorized access violation. Even if the data is public, if the *method* of extraction violates the site's stated rules or overwhelms its servers, the operator can claim illegal interference with its property. This ruling, therefore, becomes a crucial test: Does the existence of advanced, agent-based scraping override the existing common law protections afforded to platform owners?

Implications for Data Rights in the Generative AI Era

This dispute is far larger than just shopping bots. It intersects directly with the most pressing legal issue facing the AI industry today: Generative AI and proprietary data rights. Companies training LLMs rely on vast datasets, many of them scraped from the open web. If courts begin routinely siding with platforms like Amazon and holding that scraping violates platform rules even when it powers a beneficial new AI service, the result is an immediate barrier to building real-time, grounded AI applications.

Current, large-scale training datasets are often static and historical. The next generation of AI needs real-time information to be truly useful (e.g., "What is the price of this stock *right now*?" or "What is the current inventory level?"). If platforms can legally block AI agents from accessing this dynamic data, AI development could become siloed, forcing models to rely only on data they are explicitly licensed to use, fundamentally altering the speed and scope of AI innovation.

Practical Implications for Technology and Business Strategy

This legal action sends clear signals to both innovators and incumbents about the immediate future of AI deployment:

For AI Developers and Startups (The Innovators):

Actionable Insight: Prioritize Licensing Over Scraping. The path of least resistance is rapidly closing. Startups focused on creating agents that require real-time, structured data from major platforms (e-commerce, finance, travel) must immediately pivot their strategy from automated scraping toward formal data partnerships or robust API licensing agreements. Relying on publicly accessible data that violates a site’s TOS is now a severe legal risk that can result in immediate operational shutdown, regardless of the innovation's merit.
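
As a small illustration of treating a site's stated rules as a first-class input, the sketch below shows an agent checking a robots.txt policy before it fetches anything. It uses Python's standard `urllib.robotparser`; the agent name, the sample policy, and the `shop.example` URLs are hypothetical. Passing a robots.txt check is not the same as complying with a site's TOS or holding a license, so this should be read as a minimum courtesy check, not a legal safeguard.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical agent name and robots.txt policy, used only for illustration.
AGENT_NAME = "ExampleShoppingAgent"
SAMPLE_ROBOTS_TXT = """\
User-agent: ExampleShoppingAgent
Disallow: /

User-agent: *
Disallow: /checkout/
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been retrieved."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def may_fetch(parser: RobotFileParser, agent: str, url: str) -> bool:
    """Return True only if the parsed policy allows this agent to fetch the URL."""
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    policy = build_parser(SAMPLE_ROBOTS_TXT)
    for agent in (AGENT_NAME, "OtherBot"):
        for path in ("/products/1", "/checkout/cart"):
            url = "https://shop.example" + path
            print(agent, path, may_fetch(policy, agent, url))
```

In this sample policy the named agent is blocked everywhere, while other crawlers are blocked only from checkout pages. An agent that finds itself disallowed has a clear signal that the next step is a licensing conversation, not a workaround.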

For Large Platforms (The Incumbents):

Actionable Insight: Monetize Access or Build In-House. Platforms like Amazon are signaling that if a technology threatens their core business by leveraging their data ecosystem, they will defend that ecosystem aggressively in court. The choice for platforms becomes: either offer controlled, paid access (APIs) to key data streams, or risk having competitors build superior, agent-based tools that bypass the platform altogether. Furthermore, incumbents are incentivized to accelerate the development of their own internal, proprietary AI agents that offer similar functionality *within* their walled garden.
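
To make "controlled, paid access" concrete, here is a minimal sketch of a per-key quota gate of the kind a platform might place in front of a data API. The class name, the key format, and the quota model are all assumptions for illustration; a real offering would add authentication, billing, and persistent storage.

```python
from dataclasses import dataclass, field


@dataclass
class MeteredAccess:
    """Tracks how many paid calls each (hypothetical) API key has left."""

    quotas: dict[str, int] = field(default_factory=dict)

    def grant(self, api_key: str, calls: int) -> None:
        """Add purchased calls to a key's remaining quota."""
        self.quotas[api_key] = self.quotas.get(api_key, 0) + calls

    def authorize(self, api_key: str) -> bool:
        """Consume one call if the key has quota left; otherwise refuse."""
        remaining = self.quotas.get(api_key, 0)
        if remaining <= 0:
            return False
        self.quotas[api_key] = remaining - 1
        return True


if __name__ == "__main__":
    gate = MeteredAccess()
    gate.grant("partner-123", 2)  # hypothetical partner key with two paid calls
    print([gate.authorize("partner-123") for _ in range(3)])
    print(gate.authorize("unknown-key"))
```

The point of the design is that access becomes an explicit, countable grant: a licensed agent knows exactly what it may consume, and an unlicensed one is refused by default.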

For Policy Makers and Legal Professionals:

Actionable Insight: Clarify the "Agent" Exception. Regulators must determine if the law needs to evolve to account for sophisticated, low-impact AI agents versus older, brute-force scraping bots. Is an AI agent summarizing data for a single user fundamentally different from a human browsing, or does its automated nature necessitate new protections for the data source? The resolution of the Amazon/Perplexity dispute will heavily influence future legislative attempts to govern AI data usage.

The Future Landscape: Fragmented or Collaborative?

The outcome of this case, and similar ones emerging globally, will likely push the AI ecosystem toward one of two futures:

- The Walled Garden Future: Platforms enforce strict barriers, making AI models reliant primarily on licensed, proprietary data. This results in a highly fragmented digital landscape where different AI agents excel only within their data domain (e.g., one agent for Amazon, another for Walmart). Innovation slows down because generalized, cross-platform intelligence becomes legally prohibitive to build.

- The Data Brokerage Future: Legal clarity emerges, mandating that platforms offer fair, standardized access (APIs) for legitimate AI training and agent deployment, possibly managed by regulatory bodies. In this scenario, data becomes a globally traded, licensed commodity, fostering competition among agents based on model quality rather than data acquisition advantage.

Currently, the Amazon ruling favors the Walled Garden. It shows that powerful incumbents can effectively use existing legal tools to defend their turf against foundational technological shifts, at least in the short term. This forces AI builders to treat data acquisition as a **first-order legal challenge**, not merely a technical hurdle.

The promise of truly autonomous AI agents—tools that manage complex parts of our digital lives, from optimizing supply chains to managing personal finances across multiple banks—depends entirely on their ability to access and synthesize information securely and legally. The Amazon vs. Perplexity showdown is merely the first major legal salvo fired across the bow of that ambitious future. The technology is ready to operate autonomously; the law is struggling to keep pace.