The Great E-commerce Firewall: How Amazon vs. Perplexity Sets the Rules for Autonomous AI Agents

The recent court order obtained by Amazon to block Perplexity’s AI-powered shopping agent is more than a simple corporate skirmish; it represents a seminal moment in the governance of the next generation of the internet: autonomous AI agents. As AI systems evolve from passive tools into active, decision-making entities capable of navigating and interacting with complex digital ecosystems like e-commerce sites, the lines defining data ownership, acceptable usage, and intellectual property are being redrawn—often in courtrooms.

Perplexity, known for its sophisticated search engine that synthesizes information from the web, attempted to deploy an agent specifically designed to aggregate product information, compare prices, and guide purchasing decisions across platforms like Amazon. Amazon, fiercely protective of its proprietary product data, user paths, and the lucrative affiliate structure that underpins its marketplace, acted swiftly to halt this functionality. This development forces us to pause and ask: If an AI agent acts like a user, does it deserve the same access rights? And what happens when a platform decides an agent is competing too effectively?

The Three-Way Collision: IP, Competition, and Access

The conflict between Amazon and Perplexity is a complex triangulation involving intellectual property rights, fierce market competition, and the technical mechanisms of data access. To understand the long-term implications, we must look beyond the immediate ruling and examine the bedrock principles this case challenges.

1. The Shadow of Precedent: Scraping and the CFAA

Any legal analysis of AI scraping must first address the existing legal framework surrounding data extraction. This brings us directly to landmark cases like hiQ Labs v. LinkedIn. The core question in these disputes, which often revolve around the Computer Fraud and Abuse Act (CFAA), is whether accessing publicly available data (data visible to any human user) constitutes "unauthorized access" when done by a high-speed bot.

In the hiQ litigation, the Ninth Circuit leaned toward protecting the right to gather publicly accessible data, even if that data is then used for commercial gain. However, Amazon’s argument likely pivots on different grounds. The company is not just protecting publicly visible text; it is defending the structure, specific product identifiers, dynamic pricing algorithms, and the *flow* of data that funnels consumers directly to its checkout process.

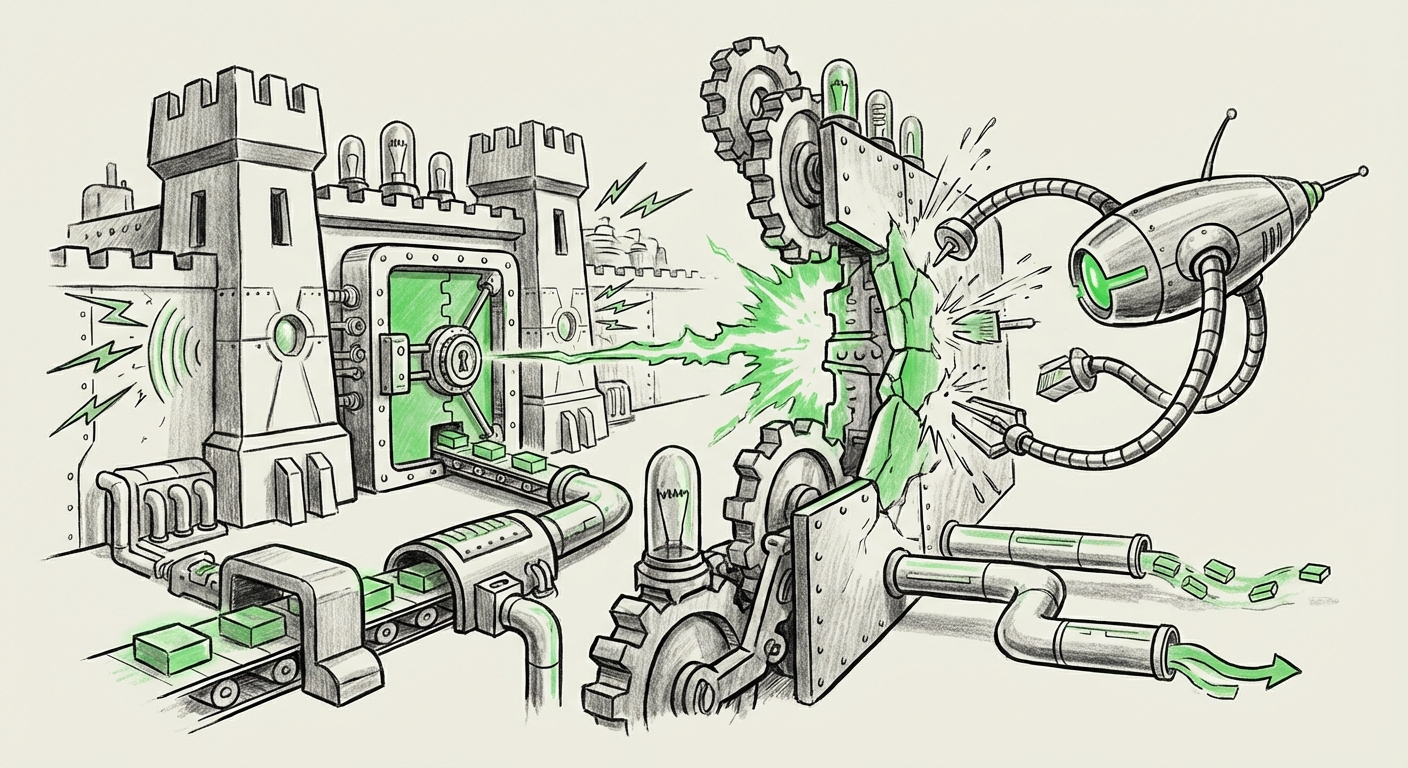

For AI developers relying on web data, this is critical. If Amazon’s injunction is upheld, it suggests that proprietary interfaces and structured data within major commercial platforms are treated differently than simple, ungated public text. This creates an **"E-commerce Firewall,"** where the data needed to power truly effective shopping agents is deemed inaccessible without explicit, platform-approved licensing.

2. The Competitive Imperative: Defending the Search Moat

Why the legal urgency from Amazon? Because AI shopping agents fundamentally threaten the existing e-commerce revenue model. For years, Amazon has been the primary search engine for products. They control the user journey from discovery to purchase, capturing valuable metadata and, crucially, the affiliate revenue derived from purchases made via their site.

Perplexity's shopping agent doesn't just search; it answers and compares. If an AI agent can efficiently tell a user, "Buy this specific blender on Target’s site for 10% less than Amazon, here is the direct link," it siphons off both the traffic and the transactional data that fuel Amazon’s machine. This action is less about general web scraping and more about protecting a dominant market position against generative competitors who aim to bypass the established funnel.

The competitive landscape is intensifying, with generalized models from Google (which integrates shopping heavily) and other LLM providers all vying to become the default starting point for consumer intent. Amazon's move sends a clear signal: the shopping journey on their platform is not free data for competitors to synthesize and weaponize against them.

3. The Technical Defense: Synthesis vs. Copying

From Perplexity’s perspective, the agent acts as a sophisticated summarizer. It doesn't store Amazon’s catalog; it queries the catalog in real time to synthesize an answer. This touches on the ongoing debate surrounding AI data usage, similar to controversies facing generative art models or news aggregators.

The debate over the ethics of LLM training offers a parallel in the copyright arguments concerning large datasets. If an AI model is trained on millions of product descriptions, does querying that model to produce a new product recommendation constitute infringement? For Perplexity, the argument likely centers on the difference between ingesting data for training (which is often heavily litigated) and querying a platform live for current, specific transactional information.

However, Amazon’s injunction suggests that even sophisticated, real-time synthesis that actively mimics user behavior to extract specific, high-value data (like dynamic pricing or current stock levels) can be deemed a violation of terms or an actionable trespass against their platform's integrity.

Future Implications: Governing the Autonomous Economy

This ruling is a litmus test. The outcome—whether the injunction is upheld permanently or overturned on appeal—will heavily influence the architecture of future AI agents.

The Rise of Gated AI Ecosystems

If platforms like Amazon successfully defend these hard-coded barriers, the future of specialized AI agents will bifurcate:

- Licensed Agents: AI developers will be forced to negotiate formal data partnerships, paying licensing fees to access proprietary platform data through secure, approved APIs. This favors large, well-funded players and entrenches the dominance of incumbent data holders.

- Generalist Agents: AI models will focus on synthesizing information from less regulated or more publicly standardized sources, becoming excellent general knowledge providers but potentially weaker in real-time, high-fidelity transactional tasks (like finding the absolute best deal *right now*).

This creates a less open, more segmented internet. The era of easy, unrestricted scraping for commercial AI innovation may face significant legal and regulatory headwinds when applied to environments optimized for commerce.

Shifting Legal Focus: From "Access" to "Action"

The legal implications extend beyond the CFAA. We are seeing a shift in legal scrutiny from *what* data is taken (access) to *what action* the resulting AI takes (agency). Amazon is arguing that the automated shopping agent performs actions that disrupt their service or infringe on their right to control customer pathways.

Future litigation will likely focus on:

- Intent and Impact: Did the agent’s activity materially harm the platform’s revenue, user experience, or operational stability?

- Simulated Behavior: To what degree did the agent's automated interaction mimic or exceed human behavior in a way that violates terms of service (as detailed in policies regarding third-party data access)?

This provides a valuable framework for compliance officers. Businesses building agents must document how their tools distinguish themselves from simple, high-volume bots intended only to steal data.
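
One way to make that documentation concrete is to have the agent pace itself and keep its own audit trail. The following is a minimal sketch under stated assumptions, not a compliance standard: the `PacedRequestLog` class, its `min_interval_s` parameter, and the example URLs are all hypothetical, and the interval is an illustrative policy value rather than a threshold drawn from any law or terms of service.

```python
import time
from typing import Callable, List, Optional, Tuple


class PacedRequestLog:
    """Hypothetical helper: enforces a minimum gap between requests
    and records every request so the pacing can be shown later."""

    def __init__(
        self,
        min_interval_s: float,  # illustrative policy value, not a legal threshold
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.min_interval_s = min_interval_s
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None
        # Each record is (timestamp, url, seconds_waited).
        self.records: List[Tuple[float, str, float]] = []

    def before_request(self, url: str) -> float:
        """Wait if the previous request was too recent, log this one,
        and return the number of seconds spent waiting."""
        now = self._clock()
        waited = 0.0
        if self._last is not None:
            remaining = self.min_interval_s - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                waited = remaining
                now = self._clock()
        self._last = now
        self.records.append((now, url, waited))
        return waited


if __name__ == "__main__":
    log = PacedRequestLog(min_interval_s=0.2)
    for page in ("https://example.com/a", "https://example.com/b"):
        log.before_request(page)  # the actual fetch would follow this call
    for timestamp, url, waited in log.records:
        print(f"{timestamp:.3f} {url} waited={waited:.3f}s")
```

The clock and sleep functions are injectable so the pacing logic can be tested without real delays. The resulting `records` list is the kind of evidence a compliance review might ask for, though what actually satisfies a given platform's terms is a question for counsel.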

The Developer’s Dilemma: Navigating the API Maze

For developers, the path forward requires extreme diligence. If the legal consensus leans toward platform control, reliance on scraping public interfaces becomes an existential risk for an AI product. Companies must scrutinize every platform’s terms of service regarding third-party usage and B2B API protocols. For instance, Amazon’s existing requirements for partners interacting with their data ecosystem highlight that contractual compliance is just as important as general IP law.

Ignoring these policies to achieve a superior product feature, such as a hyper-efficient shopping aggregator, has now been shown to invite swift judicial intervention.

Actionable Insights for Technology Leaders

What practical steps should businesses building or relying on advanced AI agents take in light of this development?

- Audit Scraping Dependencies: Immediately review all data pipelines that rely on automated extraction from large e-commerce or service platforms. Classify these dependencies based on risk: High Risk involves live transactional data extraction; Medium Risk involves broad training set aggregation.

- Prioritize Official APIs and Partnerships: Shift R&D investment away from screen scraping toward formal API engagement, even if the cost is higher. A licensed, slow pipeline is legally safer than a free, fast scrape that can be instantly blocked.

- Design for Human-Centric Interaction: When designing agents, build clear technical boundaries that prevent behavior that closely mirrors malicious bot activity (e.g., overly rapid querying, session hijacking). Focus on aggregation layers that summarize, rather than extraction layers that merely mirror proprietary listings.

- Engage with Legal Counsel Early: Given the evolving nature of AI law, legal teams must be embedded in product development to assess potential claims under CFAA, copyright, and breach of contract (Terms of Service).
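
The audit step in the first item above can be sketched as a small classification pass over an inventory of data pipelines. This is an illustrative sketch only: the `Pipeline` fields, the `classify` function, and the `UNCLASSIFIED` bucket are assumptions introduced here. Only the High and Medium categories come from the list above, and real risk assessment would need legal input.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    HIGH = "high"        # live transactional data extraction
    MEDIUM = "medium"    # broad training set aggregation
    UNCLASSIFIED = "unclassified"  # assumption: catch-all for everything else


@dataclass(frozen=True)
class Pipeline:
    """Hypothetical inventory record for one data pipeline."""
    name: str
    uses_automated_extraction: bool
    live_transactional_data: bool  # e.g. current prices or stock levels
    feeds_training_set: bool


def classify(p: Pipeline) -> Risk:
    """Apply the two categories named above; the higher risk wins."""
    if not p.uses_automated_extraction:
        return Risk.UNCLASSIFIED
    if p.live_transactional_data:
        return Risk.HIGH
    if p.feeds_training_set:
        return Risk.MEDIUM
    return Risk.UNCLASSIFIED


if __name__ == "__main__":
    inventory = [
        Pipeline("price-watch", True, True, False),
        Pipeline("description-corpus", True, False, True),
        Pipeline("partner-api-feed", False, False, False),
    ]
    for p in inventory:
        print(f"{p.name}: {classify(p).value}")
```

Checking the live-data condition first means a pipeline that both extracts live prices and feeds a training set is flagged at the higher level, which errs on the side of caution.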

The Amazon vs. Perplexity case underscores a critical evolution in the digital economy. As AI agents become sophisticated enough to challenge established gatekeepers, those gatekeepers will use every legal and technical tool available to maintain control over their digital real estate. The future of autonomous AI is not purely about capability; it is about permission.

If AI is to permeate commerce and decision-making, the industry must urgently establish clearer ground rules—through legislation, industry standards, or, as we are currently seeing, through decisive court battles that solidify the boundaries of the digital public square.