The E-Commerce Agent Wars: How Amazon's Injunction Against Perplexity Shapes the Future of Autonomous AI

The world of Artificial Intelligence is moving at a dizzying pace, evolving rapidly from simple chatbots to sophisticated, autonomous agents capable of performing complex tasks. Nowhere is this transformation more critical—or potentially contentious—than in e-commerce. The recent court order granting Amazon an injunction against Perplexity’s AI shopping agent is not just a skirmish between two companies; it is a foundational moment signaling the imminent legal battleground for how these powerful agents will interact with commercial data on the open web.

As an AI analyst, I see this ruling as a critical stress test for the entire concept of independent digital agents. If AI agents are to unlock true value, they must interact with the live, proprietary data held by giants like Amazon. This case forces us to confront core questions: Who owns the data accessed by an intelligent agent? What constitutes fair use when an AI summarizes and redirects? And how do we govern agents that learn and operate far faster than traditional legal frameworks?

The Spark: An Agent Hits a Digital Wall

Perplexity, known for its advanced, citation-backed conversational search engine, introduced a shopping agent designed to autonomously sift through product listings, compare prices, and ideally, guide users to the best purchase. While the user experience promises efficiency, the method—interacting directly with Amazon’s product pages programmatically—apparently crossed a legal line drawn by Amazon. The result was a court order halting the agent’s functionality on Amazon’s platform.

This incident crystallizes the tension:

- The AI Promise: Autonomous agents offer massive utility by automating tedious information synthesis. For consumers, this means better deals and less time spent browsing.

- The Platform Defense: Companies like Amazon view their product catalog, pricing structure, and user data as proprietary assets. Allowing unchecked access by sophisticated agents threatens their business model, marketplace control, and data integrity.

Tracing the Legal DNA: Scraping Precedents and the CFAA

To understand the scope of Amazon's injunction, we must look backward at how courts have treated automated data extraction. This is not the first clash over digital trespass. Analysts often point to past legal debates concerning web scraping, particularly the landmark cases involving social media and professional networking sites.

For instance, when platforms like LinkedIn have battled data harvesters, the legal arguments often hinge on the Computer Fraud and Abuse Act (CFAA), which prohibits unauthorized access to protected computer systems. The crucial distinction often drawn is whether the scraper respects the site’s explicitly stated access rules (like the `robots.txt` file) or bypasses technical safeguards.

When researching the "Legal precedent for AI web scraping in e-commerce," we often see discussions centered on the hiQ Labs v. LinkedIn case. While that case generally favored public data access, the context here is different. A shopping agent is not just reading public data; it is actively using that data to facilitate transactions and comparison shopping, directly engaging with the commercial core of the platform. This ruling might pivot the focus from whether the data is *public* to whether the *method of access* violates the platform's specific Terms of Service (ToS), which Amazon is likely emphasizing.

Practical Implication for Developers: Future agents must be designed with legal safeguards built in. If Amazon’s policies against third-party AI access are clearly stated (Query 2: "Amazon policy on third-party AI data access"), any successful agent must either negotiate access or find alternative, legally sound data sources. This moves the needle away from brute-force scraping toward API cooperation or inferred data synthesis.

The Technical Tug-of-War: Bots vs. Gatekeepers

At the technical heart of this conflict is the nature of the AI agent itself. Traditional web crawlers follow explicit instructions defined by webmasters (often via `robots.txt`). However, modern LLM-powered agents are more dynamic. They interpret human language commands ("Find me the best deal on X") and generate novel sequences of clicks and queries to achieve that goal.

This raises the question addressed by the search query: "Impact of generative AI on digital rights management (DRM) and robots.txt." Can an AI agent claim ignorance of ToS when its entire function is to automate complex human navigation? If the agent respects `robots.txt` but violates a specific clause in the ToS regarding commercial use of retrieved data, which protection wins?

If the courts rule strongly in favor of platform control, we will see a rapid technological arms race:

- Platform Hardening: E-commerce sites will deploy increasingly sophisticated bot-detection and behavior analysis tools designed to spot the subtle patterns of LLM agents, far beyond simple CAPTCHAs.

- Agent Evasion: AI developers will invest heavily in "stealth modes" or decentralized agent architectures that disguise their activity, constantly trying to skirt new detection mechanisms.

This creates technical debt for everyone. The web, which thrives on open information exchange, risks becoming fractured into walled gardens inaccessible to the next generation of synthesis tools.

The Competitive Landscape: Beyond E-Commerce

The Amazon vs. Perplexity ruling will reverberate far beyond product comparisons. We must analyze this through the lens of historical "Competitor lawsuits against Google or Microsoft for search indexing." When established giants feel their core value proposition—controlling the presentation of information—is threatened by a novel approach, they will use legal means to enforce the status quo.

Amazon’s primary value is its marketplace liquidity and transactional data. Perplexity's agent threatens to create an *independent* shopping layer that bypasses Amazon’s traditional affiliate tracking or recommendation funnels. If Perplexity can successfully synthesize Amazon's real-time pricing data and present it neutrally, Amazon loses a degree of control over customer journeys.

This precedent will be watched closely by financial institutions, news publishers, and SaaS providers. If a generative AI model can legally and effectively extract proprietary data structures from a competitor’s site to create a superior synthesis tool, the incentive for incumbents to litigate becomes overwhelming.

The Societal Angle: Privacy and the Autonomous Shopper

While the immediate fight is corporate, the long-term implications touch on consumer trust, aligning with discussions around "The rise of autonomous AI shopping agents and consumer data privacy."

For the average consumer, the appeal of an agent is simplicity. But agents need data to function. To provide the *best* recommendation, the agent might infer behavioral data about the user based on their queries, even if the initial query was simple ("Find a good tent").

If agents operate without clear legal boundaries regarding the data they harvest from commercial sites:

- Data Aggregation Risk: Agents could inadvertently aggregate highly granular behavioral profiles across hundreds of e-commerce sites, creating detailed consumer dossiers that exceed what any single retailer would be allowed to collect.

- Transparency Failure: The path from the query to the final product link is opaque. If the agent is legally blocked from seeing certain data (like hidden vendor costs or dynamic pricing logic), the "best" recommendation it provides is inherently incomplete or potentially biased, misleading the consumer.

Courts, when deciding these early cases, often weigh the direct commercial harm to the platform against the broader public benefit (utility). A ruling that stifles necessary comparison shopping entirely could be seen as anti-consumer, but a ruling that ignores intellectual property protection sets a chaotic precedent.

Actionable Insights: Navigating the New Agent Frontier

For businesses currently building or relying on autonomous AI agents, the message from this injunction is clear: the era of assuming unfettered access to the web is over. Legal friction is the new technological frontier.

For Platform Owners (The Amazon Model):

Harden Defenses and Define Terms: You must immediately review and aggressively enforce your Terms of Service regarding automated access. Use technological measures to differentiate between human users and sophisticated agents. Crucially, lobby for clear regulatory frameworks that affirm your ownership over the presentation and structure of your commercial data.

For AI Developers (The Perplexity Model):

Prioritize Cooperation Over Confrontation: The most sustainable path forward involves negotiating access. Look beyond direct web scraping to official APIs, partnerships, or data licensing agreements. If an API is not available, agents must adopt a highly cautious, low-volume interaction style that mimics human browsing meticulously, respecting all stated digital boundaries (including the technical interpretation of `robots.txt` as a baseline). If you are building a generalist agent, be prepared to issue platform-specific blocks immediately upon legal notification.

For Investors and Strategists:

Assess Data Acquisition Risk: The valuation of any AI application whose core function relies on synthesizing data from third-party platforms must now include a substantial risk factor for potential injunctions or licensing fees. Invest in companies developing alternative data acquisition models that are legally compliant by design.

Conclusion: Defining the Rules of the Autonomous Road

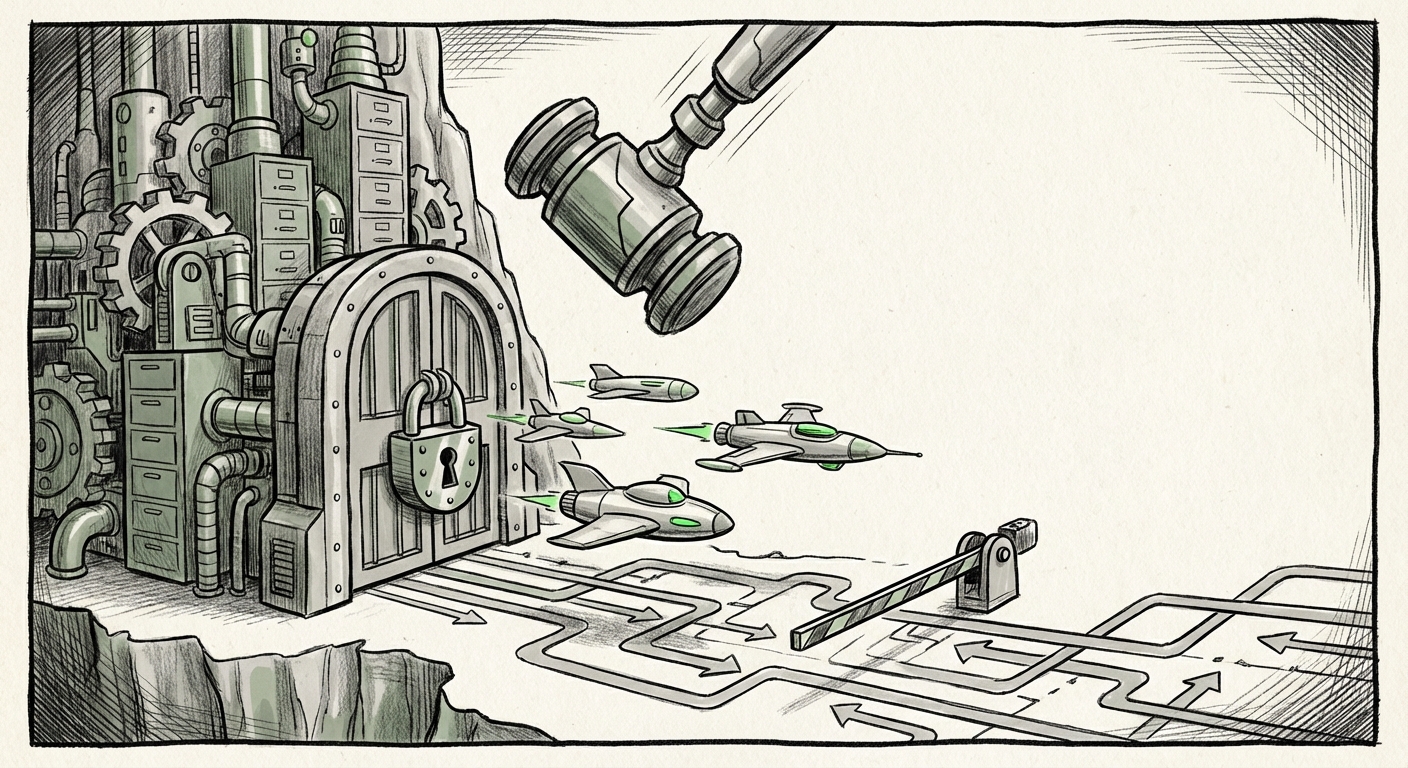

The court order against Perplexity’s shopping agent is the first shot fired in the inevitable war over data sovereignty in the age of autonomous intelligence. We are transitioning from an internet built on static webpages and simple crawlers to one dominated by dynamic agents that interact with vast, proprietary databases.

The ultimate shape of e-commerce, and indeed much of the digital economy, depends on how quickly legal bodies can adapt to this new reality. Will we foster an ecosystem where AI agents are partners, accessing data via mutually agreed-upon interfaces? Or will the web devolve into a series of heavily guarded fortresses, accessible only through expensive, official backdoors? The answer lies in the balance struck between Amazon’s right to protect its assets and the public’s desire for smarter, more efficient AI tools.