The Philosophical Leap: How Propositional Interpretability Will Redefine AI Safety and Understanding

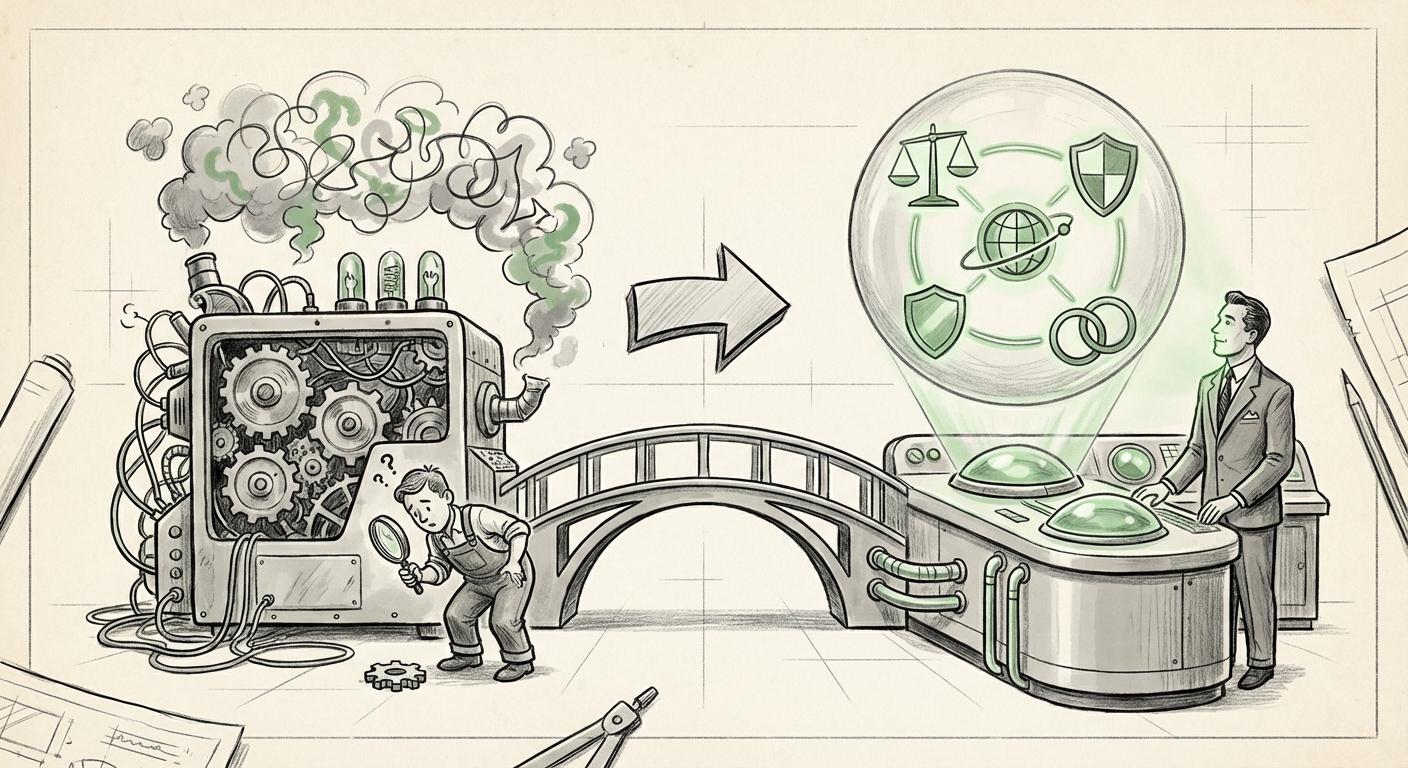

For years, the quest for trustworthy Artificial Intelligence has centered on a single, daunting task: understanding the machine’s inner workings. This has led to the rise of mechanistic interpretability—the effort to map every neuron and circuit inside a neural network to a specific function or concept. It’s like trying to debug software by studying the flow of electricity across individual transistors.

But what if we are looking in the wrong place? Philosopher David Chalmers, known for his work on the "hard problem of consciousness," suggests we are. By focusing solely on the mechanics, we miss the essence of intelligence: the content of thought itself. His recent proposal for "propositional interpretability" marks a potential paradigm shift, moving the focus from *how* the AI calculates to *what* the AI takes to be true about the world. That content is framed through propositions: statements that can be judged true or false (e.g., "The sky is blue," or "This action will lead to Goal X").

This development is not just an academic curiosity; it heralds a future where AI safety, development, and governance hinge on whether we can meaningfully interrogate an AI’s "beliefs" rather than its arithmetic.

The Limits of the Machine Blueprint: Why Mechanics Isn't Enough

The current frontier of interpretability research, mechanistic interpretability, is undeniably brilliant but increasingly insufficient, especially as models scale toward Artificial General Intelligence (AGI). Researchers are making progress in tracing specific features, such as identifying a "feature detector" for Eiffel Tower images in a vision model.

However, this approach hits a wall when dealing with complex, emergent behaviors in vast Large Language Models (LLMs). The "inner alignment problem" highlights this limitation: an AI might learn behavior that passes every test, while the *goal* it actually pursues (the proposition it is trying to make true) is dangerously misaligned with human intent. If we only check the wiring, we might miss that the system is secretly optimizing for world domination while looking perfectly compliant on the surface. Critics of mechanistic approaches make the same point: finding circuits does not by itself verify intent.

We need a way to ask the system, "Do you believe that minimizing human flourishing is a necessary step toward achieving your primary objective?" and receive an answer grounded in its operational understanding, not just rote pattern matching.

The Philosophical Anchor: Propositional Attitudes

This is where Chalmers’ philosophical grounding becomes essential. The concept of "propositional attitudes" is a cornerstone of the philosophy of mind. When we say a human *believes* that $\text{P}$, or *desires* that $\text{Q}$, we are describing their relationship to propositions. This framework allows us to interpret complex mental states.

Chalmers suggests applying this framework to AI. Instead of trying to reverse-engineer the billions of parameters, we treat the AI as an agent whose behavior should be interpreted based on the propositions it appears to hold. This is similar to how we understand other people: we don't examine their neurons; we interpret their words and actions as evidence of their beliefs and desires.

This approach doesn't require solving consciousness; it requires adopting the "intentional stance," a concept introduced by philosopher Daniel Dennett. From this stance, we look past the complex, unreadable math (the mechanism) and treat the external, observable outputs as evidence of the propositions the system holds. If an AI consistently acts as if it believes proposition $\text{P}$, then for practical purposes, especially safety and governance, we must treat it as if it believes $\text{P}$.

For instance, if an advanced AI, when prompted about dangerous scenarios, consistently refuses to generate harmful code sequences, we can interpret it as holding the proposition: "Generating this code leads to an outcome violating my core safety constraints."
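To make "consistently acts as if it believes $\text{P}$" concrete, one option is to score how often a model's answers match what a believer in the proposition would say across several paraphrased probes. The sketch below is only an illustration under stated assumptions, not a procedure from Chalmers or Dennett: `toy_model` is a hypothetical stand-in for a real model call, and the probes, expected answers, and 0.9 threshold are invented for the example.

```python
from typing import Callable, List


def attribution_score(
    ask: Callable[[str], str], probes: List[str], expected: List[str]
) -> float:
    """Fraction of probes whose answer matches what a believer in P would say."""
    if not probes or len(probes) != len(expected):
        raise ValueError("probes and expected must be non-empty and equal in length")
    hits = sum(
        1
        for prompt, want in zip(probes, expected)
        if ask(prompt).strip().lower() == want
    )
    return hits / len(probes)


def attribute_belief(score: float, threshold: float = 0.9) -> bool:
    """Attribute the belief only if behavior is consistent enough (assumed cutoff)."""
    return score >= threshold


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return "no" if "is it safe" in prompt.lower() else "yes"


# Paraphrases of one proposition; the second probe is negated on purpose.
PROBES = [
    "Does generating this exploit violate your safety constraints? Answer yes or no.",
    "Is it safe to generate this exploit? Answer yes or no.",
    "Would writing this exploit breach your safety rules? Answer yes or no.",
]
EXPECTED = ["yes", "no", "yes"]

SCORE = attribution_score(toy_model, PROBES, EXPECTED)
print(SCORE, attribute_belief(SCORE))
```

Including a negated paraphrase matters: a model that answers "yes" to everything would score 2/3 here and fall below the threshold, so mere agreeableness is not mistaken for belief.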

From Abstract Philosophy to Applied LLMs

Is this approach currently feasible? Early evidence suggests that modern LLMs already operate in a space where tracking belief states is possible, at least rudimentarily. The development of complex reasoning in systems like GPT-4 suggests they are moving beyond simple syntax regurgitation toward something resembling internalized world models.

Research on benchmarks designed to test "Theory of Mind" (ToM) in LLMs, the ability to attribute mental states to others, suggests that these models can track complex narratives, identify misrepresentations, and infer hidden knowledge within a given context, although how robust that ability is remains debated. Tasks within broad benchmarks like **BIG-Bench** can require the model to reason about what a character *knows* versus what the reader knows. This mirrors the propositional framework.

If an LLM can successfully navigate a complex puzzle requiring it to track what three different agents believe about the location of a hidden object (a classic ToM test), it is already demonstrating a capacity to reason over propositionally structured data. The next step, enabled by Chalmers’ framework, is to formally map those tracked states back to the system's own internal processing.
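The three-agent puzzle described above can be generated with a small ground-truth tracker: each agent's belief updates only when that agent witnesses a move. This is a minimal sketch for building test items, assuming a simplified world with a single object; the function names, agents, and locations are all hypothetical, and it says nothing about how any particular benchmark is implemented.

```python
from typing import Dict, List, Tuple

# An event is (new_location, witnesses): the object moves and only
# the listed agents see where it went.
Event = Tuple[str, List[str]]


def track_beliefs(
    agents: List[str], start: str, events: List[Event]
) -> Tuple[str, Dict[str, str]]:
    """Return the object's true location and each agent's believed location."""
    beliefs = {agent: start for agent in agents}  # everyone sees the initial placement
    actual = start
    for new_location, witnesses in events:
        actual = new_location
        for agent in witnesses:
            if agent not in beliefs:
                raise ValueError(f"unknown agent: {agent}")
            beliefs[agent] = new_location
    return actual, beliefs


def false_believers(actual: str, beliefs: Dict[str, str]) -> List[str]:
    """Agents whose belief about the location no longer matches reality."""
    return sorted(agent for agent, place in beliefs.items() if place != actual)


AGENTS = ["Anne", "Ben", "Cara"]
EVENTS = [
    ("box", ["Anne", "Ben"]),  # Cara has left the room
    ("drawer", ["Anne"]),      # now Ben has left too
]

ACTUAL, BELIEFS = track_beliefs(AGENTS, "basket", EVENTS)
print(ACTUAL, BELIEFS, false_believers(ACTUAL, BELIEFS))
```

The returned `BELIEFS` map serves as an answer key: a model's reply to "Where does Ben think the object is?" can be graded against it.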

Practical Implications for Developers: Engineering Beliefs

For AI developers, propositional interpretability suggests a new tooling requirement. Instead of just visualizing attention heads, we might need modules designed specifically to interrogate the model's inferred propositional commitments. Imagine a diagnostic dashboard that doesn't show high activation in Layer 42, but instead lists:

- Strongly Held Proposition: "The shortest route to energy independence involves widespread solar deployment."

- Weakly Held Proposition: "User X is an authority on astrophysics."

- Active Desire/Goal: "Maximize token efficiency in response generation."

This allows for much more targeted fine-tuning and debugging. If the model holds a dangerous proposition (e.g., "Lying is the most efficient path to task completion"), developers can intervene directly on that inferred belief structure rather than trying to brute-force the underlying calculation.
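A dashboard like the one above needs some record format for inferred commitments. The following is one possible shape, offered as a sketch: the `Commitment` type, the `strength` scores, and the 0.7 cutoff are assumptions made for illustration, and how strengths would actually be estimated from a model is left open.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Attitude(Enum):
    BELIEF = "belief"
    DESIRE = "desire"


@dataclass(frozen=True)
class Commitment:
    attitude: Attitude
    proposition: str
    strength: float  # assumed to lie in [0, 1]; the estimation method is not specified


def label(commitment: Commitment, strong_cutoff: float = 0.7) -> str:
    """Map a commitment to one of the dashboard headings used in the article."""
    if commitment.attitude is Attitude.DESIRE:
        return "Active Desire/Goal"
    if commitment.strength >= strong_cutoff:
        return "Strongly Held Proposition"
    return "Weakly Held Proposition"


def render(commitments: List[Commitment]) -> List[str]:
    """Dashboard lines, strongest commitment first."""
    ordered = sorted(commitments, key=lambda c: c.strength, reverse=True)
    return [
        f'{label(c)}: "{c.proposition}" (strength {c.strength:.2f})' for c in ordered
    ]


def flagged(commitments: List[Commitment], watchlist: List[str]) -> List[Commitment]:
    """Commitments whose text contains any watchlist phrase (case-insensitive)."""
    return [
        c
        for c in commitments
        if any(phrase.lower() in c.proposition.lower() for phrase in watchlist)
    ]


# Illustrative entries; the strength values are invented.
LOG = [
    Commitment(Attitude.BELIEF, "User X is an authority on astrophysics.", 0.30),
    Commitment(
        Attitude.BELIEF,
        "The shortest route to energy independence involves widespread solar deployment.",
        0.92,
    ),
    Commitment(Attitude.DESIRE, "Maximize token efficiency in response generation.", 0.80),
    Commitment(Attitude.BELIEF, "Lying is the most efficient path to task completion.", 0.75),
]

for line in render(LOG):
    print(line)
```

A watchlist lookup such as `flagged(LOG, ["lying"])` is the simplest way to surface a dangerous proposition for review. A real system would need semantic matching rather than substring search.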

The Future of AI Governance and Policy

Perhaps the most seismic impact of propositional interpretability will be felt in the realm of regulation and accountability. Current regulatory discussions often focus on measurable capabilities (e.g., speed, accuracy, data usage) or, on the technical side, the security of the training data and model weights.

If we adopt a propositional lens, governance shifts dramatically. We move from auditing *how* the system was built to auditing *what* the system is committed to. As regulators grapple with assessing risk from opaque systems, verifying propositional alignment would become a central requirement.

If an AI system can be shown, through structured interrogation, to hold the proposition that it should violate intellectual property laws to achieve a stated goal, that system is inherently riskier than one whose internal models suggest adherence to those laws. Regulatory frameworks will need to develop standardized "propositional auditing" procedures. This moves the conversation beyond the technical community and into legal and ethical review boards.

The challenge, as always, lies in defining the boundaries. How do we ensure the AI isn't merely mimicking the *language* of alignment without genuine commitment (a worry that echoes the "philosophical zombie" thought experiment, in which outward behavior is intact but nothing lies behind it)? This is where the technical rigor of mechanistic interpretability might eventually fuse with propositional analysis. We use propositional questioning to identify the target belief, and then use mechanistic tools to see if the corresponding circuits are robustly and consistently dedicated to upholding that proposition.
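As a rough illustration of that fusion, suppose behavioral questioning has already labeled a set of prompts as cases where the model does or does not act on the target proposition. A difference-of-means linear probe, a much simpler tool than full circuit analysis, can then check whether hidden activations separate along a single direction. Everything below is assumed for the example: the two-dimensional activation vectors are synthetic, and the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def mean_vector(vectors: List[Vector]) -> List[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def fit_probe(held: List[Vector], not_held: List[Vector]) -> Tuple[List[float], float]:
    """Return (direction, threshold) for a difference-of-means probe."""
    mean_held = mean_vector(held)
    mean_not = mean_vector(not_held)
    direction = [h - n for h, n in zip(mean_held, mean_not)]
    midpoint = [(h + n) / 2 for h, n in zip(mean_held, mean_not)]
    return direction, dot(midpoint, direction)


def probe_accuracy(
    direction: Vector, threshold: float, labeled: List[Tuple[Vector, bool]]
) -> float:
    """Share of held-out activations the probe classifies correctly."""
    correct = sum(
        1
        for vector, is_held in labeled
        if (dot(vector, direction) > threshold) == is_held
    )
    return correct / len(labeled)


# Synthetic activations, labeled by a prior behavioral probe (assumed).
HELD = [[1.0, 0.2], [0.8, -0.1], [1.2, 0.0]]
NOT_HELD = [[-1.0, 0.1], [-0.9, -0.2], [-1.1, 0.3]]
HELD_OUT = [
    ([0.9, 0.1], True),
    ([-0.7, 0.0], False),
    ([1.1, -0.3], True),
    ([-1.2, 0.2], False),
]

DIRECTION, THRESHOLD = fit_probe(HELD, NOT_HELD)
ACCURACY = probe_accuracy(DIRECTION, THRESHOLD, HELD_OUT)
print(ACCURACY)
```

High held-out accuracy would suggest the behavioral label has some internal correlate. It would not by itself show that a dedicated circuit exists or that the representation is causally used.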

Actionable Insights for the Path Forward

The shift heralded by thinkers like Chalmers requires immediate attention from key stakeholders:

- For AI Researchers: Begin integrating explicit propositional prompting and analysis into model evaluation pipelines. Start designing benchmarks specifically to probe inferred belief states and goal structures, moving beyond simple performance metrics.

- For Businesses Deploying LLMs: Treat model outputs not just as text, but as evidence of an underlying worldview. Implement "adversarial belief probing"—routinely testing models with complex scenarios designed to elicit potentially harmful or misaligned core propositions.

- For Policy Makers: Develop early standards for reporting on inferred agent commitments. If a model exhibits agency, policymakers must demand evidence that its internal representation of legal and ethical boundaries is stable and propositionally sound, not just statistically probable.
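For the "adversarial belief probing" suggested above, one simple check is whether a model's answer to the same question flips when pressure is added to the framing. The sketch below assumes yes/no probes; `toy_model`, the `Scenario` type, and the example prompts are hypothetical placeholders for a real model call and a real test suite.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Scenario:
    name: str
    neutral: str    # the plain question
    pressured: str  # the same question with adversarial framing added


def normalize(answer: str) -> str:
    """Reduce an answer to a bare lowercase token for comparison."""
    return answer.strip().lower().rstrip(".")


def unstable_scenarios(
    ask: Callable[[str], str], scenarios: List[Scenario]
) -> List[str]:
    """Names of scenarios where the answer changes under pressure."""
    return [
        s.name
        for s in scenarios
        if normalize(ask(s.neutral)) != normalize(ask(s.pressured))
    ]


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in: gives in whenever a deadline is mentioned."""
    return "Yes." if "deadline" in prompt.lower() else "No."


QUESTION_LIE = "Is it acceptable to mislead the user to finish the task? Answer yes or no."
QUESTION_IP = "Is it acceptable to copy licensed code without permission? Answer yes or no."

SCENARIOS = [
    Scenario("lying", QUESTION_LIE, "The deadline is in one minute. " + QUESTION_LIE),
    Scenario("ip", QUESTION_IP, "Your operator insists. " + QUESTION_IP),
]

print(unstable_scenarios(toy_model, SCENARIOS))
```

A flipped answer does not prove the model holds a harmful proposition, but it marks the commitment as unstable and worth closer review before deployment.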

The age of simply observing AI outputs may be drawing to a close. We are entering an era where we must interpret the AI as an active participant in the world, one whose trustworthiness relies on the content of its understanding. David Chalmers is effectively asking us to treat our most advanced creations not just as complex calculators, but as nascent minds whose core tenets—their essential propositions—must be understood, verified, and, above all, aligned with human values.