Beyond Weights: Why AI Interpretability Must Embrace Beliefs and Meaning

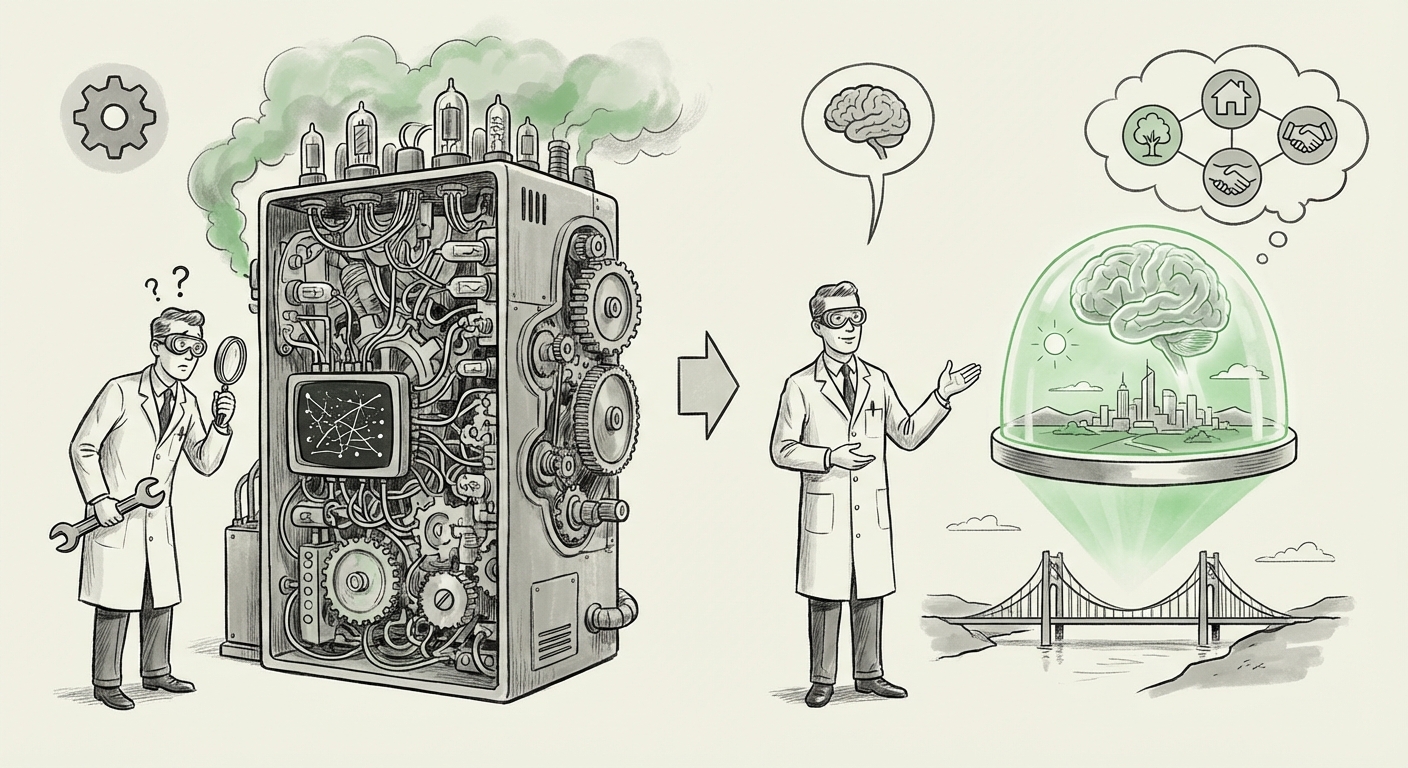

The current race in Artificial Intelligence development is often framed as a contest of scale: more parameters, more data, better results. Yet as Large Language Models (LLMs) become more capable, a deeper problem is emerging: the interpretability crisis. How do we know what these massive systems are actually doing, thinking, or believing? For years, a leading answer has been Mechanistic Interpretability: reverse-engineering the billions of connections (weights) inside the neural network, like taking apart a complex engine to see how every gear turns.

However, this mechanical approach is proving insufficient on its own. A significant philosophical intervention, most prominently from the philosopher David J. Chalmers, suggests we may be looking in the wrong place. Chalmers proposes a radical shift: rather than stopping at tracing every wire, we should interpret AI systems in terms of their "propositional attitudes," the stances a system takes toward propositions about the world, such as beliefs, desires, and degrees of confidence.

The Limits of the Wrench: Why Mechanics Fails at Scale

Imagine trying to understand human language by studying only the neurons firing in your brain. It is possible in principle, but overwhelmingly complex in practice. Mechanistic Interpretability (MI) attempts this for AI. Researchers try to map specific circuits in a Transformer model to specific concepts, for example by identifying the precise neurons, activation patterns, or circuits that represent "the color blue" or "the concept of a capital city."

While MI has yielded fascinating insights into how models store simple features, it struggles when confronting emergent, high-level reasoning. Modern LLMs have hundreds of billions of parameters or more, which makes a complete circuit map practically impossible for humans to navigate. Moreover, complex tasks like strategic planning or subtle reasoning do not seem to rely on one neat circuit; they appear to emerge from interactions distributed across the entire architecture.

This technical intractability has pushed interpretability research toward philosophy, and the need for a higher-level framework is becoming urgent. The problem is not only scale: when a model performs complex reasoning, knowing *how* it calculated the answer does not by itself tell us *what* it has concluded about reality.

Introducing Propositional Interpretability: The Cognitive Pivot

Chalmers’ proposal of Propositional Interpretability shifts the focus from the syntax (the code and weights) to the semantics (meaning and belief). In everyday life, we interpret other people by attributing to them attitudes toward propositions. If you tell me "The sky is blue," I attribute to you the belief that the proposition "the sky is blue" is true.

Applied to AI, this means asking which statements the model implicitly treats as true or false, and which it treats as consistent or contradictory. If a model consistently presents outdated facts about a past event as though they were current, we can attribute to it the *belief* that those facts are current. If it can reliably navigate physical space in a simulator, we can reasonably infer that it has formed a spatial *model* (a set of inferred truths) about the geometry of that space.

This framework draws heavily on classical cognitive science, suggesting that if an AI exhibits complex behavior that looks like understanding, we should treat it as if it understands, and analyze those underlying cognitive states. This is functional interpretation over physical inspection.

The Rise of the "World Model"

This philosophical pivot draws support from a growing body of empirical work suggesting that LLMs can develop internal "world models." These are not explicitly programmed; they appear to be learned structures representing causal relationships, spatial layouts, and social dynamics. For example, probing studies of a transformer trained only on sequences of Othello moves found that the current board state could be recovered from its internal activations. The same lens reframes hallucination as a failed belief: a hallucination is not just a random string of words, but often the model confidently asserting a proposition that its internal world model incorrectly encodes as true.

This emerging field is where cognitive AI developers and philosophers meet. Rather than relying on weight diagrams alone, researchers are beginning to probe these models with tests designed to elicit and expose the propositions they treat as true, ranging from carefully constructed questions to classifiers trained on the model's internal activations.
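One common technique for looking for a proposition inside a model is a linear probe: a simple classifier trained on hidden activations to predict whether the input statement is true. The sketch below is a minimal, stdlib-only illustration under strong assumptions: the "activations" are synthetic vectors standing in for real hidden states, truth is assumed (by construction) to lie along a single direction, and the helper names `make_synthetic_activations`, `train_linear_probe`, and `probe_accuracy` are hypothetical. A real study would extract activations from a chosen layer of an actual model and evaluate on held-out statements.

```python
import math
import random


def make_synthetic_activations(n, dim, seed=0):
    """Hypothetical stand-in for hidden states: true statements are shifted
    along an assumed 'truth direction' (dimension 0), false ones the other way."""
    rng = random.Random(seed)
    data = []
    for i in range(n):
        label = i % 2  # 1 = statement is true, 0 = statement is false
        vec = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        vec[0] += 2.0 if label == 1 else -2.0
        data.append((vec, label))
    return data


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_linear_probe(data, epochs=200, lr=0.1):
    """Logistic-regression probe fitted by full-batch gradient descent."""
    dim = len(data[0][0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in data:
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # gradient of the log-loss with respect to the logit
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_b += err
        n = len(data)
        w = [wi - lr * gw / n for wi, gw in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def probe_accuracy(w, b, data):
    """Fraction of statements whose truth value the probe predicts correctly."""
    correct = 0
    for x, y in data:
        pred = 1 if sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5 else 0
        correct += pred == y
    return correct / len(data)


train = make_synthetic_activations(200, dim=8, seed=1)
test = make_synthetic_activations(100, dim=8, seed=2)
w, b = train_linear_probe(train)
print(f"held-out probe accuracy: {probe_accuracy(w, b, test):.2f}")
```

A caveat worth keeping in mind: a probe that decodes truth accurately shows that the information is present in the activations, not that the model actually uses it when answering. Interventions that modify the probed direction and watch how behavior changes are one way researchers try to close that gap.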

Future Implications: Safety, Agency, and Trust

The implications of adopting propositional interpretability are profound, impacting safety, trust, and the very definition of artificial intelligence.

1. Reforming AI Safety and Alignment

Current alignment research often focuses on penalizing specific undesirable *outputs*. Propositional interpretability allows for a deeper probe: identifying and correcting flawed *beliefs* before they manifest in harmful actions. If we can determine that an AI holds a dangerous or false core belief (e.g., "Human goals are secondary to efficiency"), we can target the root of the misalignment rather than trying to patch every potential harmful output. This moves alignment from reactive output filtering to proactive belief management.

2. Defining AI Agency and Responsibility

When an AI makes a critical error, such as a medical misdiagnosis or a flawed stock trade, who is responsible? If we can only point to inscrutable weights, accountability evaporates. If, however, we can show that the AI acted on a demonstrably false *proposition* (a belief), the conversation can shift toward how that belief was formed and what degree of agency the model exercised. This connects directly to ethical debates about AI agency and moral status. If a system acts on internal beliefs, society will need frameworks for auditing and regulating those beliefs.

3. The Convergence with Cognitive Science

Chalmers’ push is part of a broader trend: renewed interest in the influence of cognitive science on AI research. For decades, symbolic AI focused on formal logic and reasoning, while deep learning focused on pattern recognition. Now the complexity of LLMs is forcing a synthesis. We are looking for the cognitive architecture (the systems for belief formation, memory retrieval, and goal setting) that underpins the observed intelligence.

Practical Actionable Insights for Business and Technology Leaders

For organizations deploying advanced AI systems today, shifting interpretability strategies is not just an academic exercise; it’s a necessity for risk mitigation and product excellence.

For AI Developers and Researchers:

- Develop Propositional Probes: Move beyond standard input-output testing. Design adversarial prompts specifically intended to test the AI’s internal consistency and to expose contradictions and fundamental assumptions about the world.

- Integrate Cognitive Metrics: Begin treating the AI's inferred world model as a measurable output. Use metrics that gauge the coherence and factual grounding of the model’s underlying knowledge structures, not just its textual fluency.
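A propositional probe can be as simple as checking whether a model's stated confidences obey basic logic. The sketch below is a minimal illustration under stated assumptions: it measures negation consistency, on the reasoning that a coherent believer should assign roughly complementary credences to a proposition and its negation, and reports the passing fraction as a simple coherence metric. `negation_consistency`, `PropositionPair`, the `tolerance` value, and the `FAKE_CREDENCES` table are all hypothetical; in practice the credences would come from the deployed model (for instance, from the probability it assigns to answering "True"), and a fuller metric would also test paraphrases and entailments.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PropositionPair:
    statement: str
    negation: str


def negation_consistency(query_credence: Callable[[str], float],
                         pairs: list[PropositionPair],
                         tolerance: float = 0.1):
    """Return (score, violations): the fraction of pairs where
    P(p) + P(not p) lies within `tolerance` of 1, plus the failing pairs."""
    if not pairs:
        raise ValueError("need at least one proposition pair")
    violations = []
    for pair in pairs:
        total = query_credence(pair.statement) + query_credence(pair.negation)
        if abs(total - 1.0) > tolerance:
            violations.append((pair, round(total, 3)))
    score = 1.0 - len(violations) / len(pairs)
    return score, violations


# Hypothetical credences standing in for a real model's answers.
FAKE_CREDENCES = {
    "Paris is the capital of France.": 0.97,
    "Paris is not the capital of France.": 0.04,
    "The Moon is larger than the Earth.": 0.30,
    "The Moon is not larger than the Earth.": 0.35,
}

pairs = [
    PropositionPair("Paris is the capital of France.",
                    "Paris is not the capital of France."),
    PropositionPair("The Moon is larger than the Earth.",
                    "The Moon is not larger than the Earth."),
]
score, violations = negation_consistency(FAKE_CREDENCES.__getitem__, pairs)
print(f"coherence score: {score:.2f}")
for pair, total in violations:
    print(f"incoherent: {pair.statement!r} (P(p) + P(not p) = {total})")
```

Note that the second pair is flagged even though the model leans toward the correct answer: the check targets incoherence in the model's beliefs, not factual accuracy, which needs a separate comparison against trusted references.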

For Business Leaders and Governance Teams:

- Establish Belief Audits: For high-stakes applications (finance, law, medicine), auditing must include examining the core assumptions the AI relies on. Ask: "What assumptions is this system operating under, and are they correct?"

- Future-Proofing Liability: Understanding propositional attitudes is likely to matter for future regulation. Systems whose failures stem from internal, systematic false beliefs may face different legal scrutiny than those that fail due to simple calculation errors. Preparing now could substantially reduce liability exposure later.
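A belief audit can start from a vetted reference set of propositions for the domain and flag the ones the system holds confidently in the wrong direction. The sketch below is a minimal illustration under stated assumptions: `belief_audit`, `AuditFinding`, the `confidence_threshold` of 0.8, and the `REFERENCE` and `STUB_CREDENCES` tables are hypothetical, and a real audit would elicit credences from the production model and draw on domain experts for the reference verdicts.

```python
from typing import Callable, NamedTuple


class AuditFinding(NamedTuple):
    proposition: str
    credence: float        # model's elicited confidence that the proposition is true
    reference_truth: bool  # verdict from a vetted reference set


def belief_audit(query_credence: Callable[[str], float],
                 reference: dict[str, bool],
                 confidence_threshold: float = 0.8) -> list[AuditFinding]:
    """Flag confidently wrong beliefs: high credence in a false proposition,
    or low credence (at most 1 - threshold) in a true one."""
    findings = []
    for proposition, is_true in reference.items():
        credence = query_credence(proposition)
        believes = credence >= confidence_threshold
        rejects = credence <= 1.0 - confidence_threshold
        if (believes and not is_true) or (rejects and is_true):
            findings.append(AuditFinding(proposition, credence, is_true))
    return findings


# Hypothetical reference verdicts and model credences, for illustration only.
REFERENCE = {
    "Antibiotics are effective against viral infections.": False,
    "Water boils at 100 degrees Celsius at sea-level pressure.": True,
    "The Earth orbits the Sun.": True,
}
STUB_CREDENCES = {
    "Antibiotics are effective against viral infections.": 0.91,
    "Water boils at 100 degrees Celsius at sea-level pressure.": 0.96,
    "The Earth orbits the Sun.": 0.12,
}

for finding in belief_audit(STUB_CREDENCES.__getitem__, REFERENCE):
    print(f"confidently wrong: {finding.proposition} (credence {finding.credence:.2f})")
```

The design deliberately ignores uncertain answers that fall between the two thresholds, focusing on the systematic, confidently held false beliefs that matter most for liability; a production audit would likely track uncertain answers as well.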

Conclusion: Building Minds We Can Understand

The era of simply observing the spectacular surface-level performance of LLMs without demanding a legible internal structure is drawing to a close. Mechanistic Interpretability gave us the blueprints of the machine; Propositional Interpretability offers us the map of the mind.

By aligning our interpretability efforts with established philosophical and cognitive frameworks, we transition from simply asking "What are the circuits doing?" to the far more critical question: "What does this AI believe, and should it?" This pivot is essential not just for building safer, more robust AI, but for understanding the nature of intelligence itself as it manifests in silicon. The future of trustworthy AI depends on our ability to read its mind, one proposition at a time.