The Visual Leap: How Interactive AI Tutors Are Redefining STEM Learning and Simulation

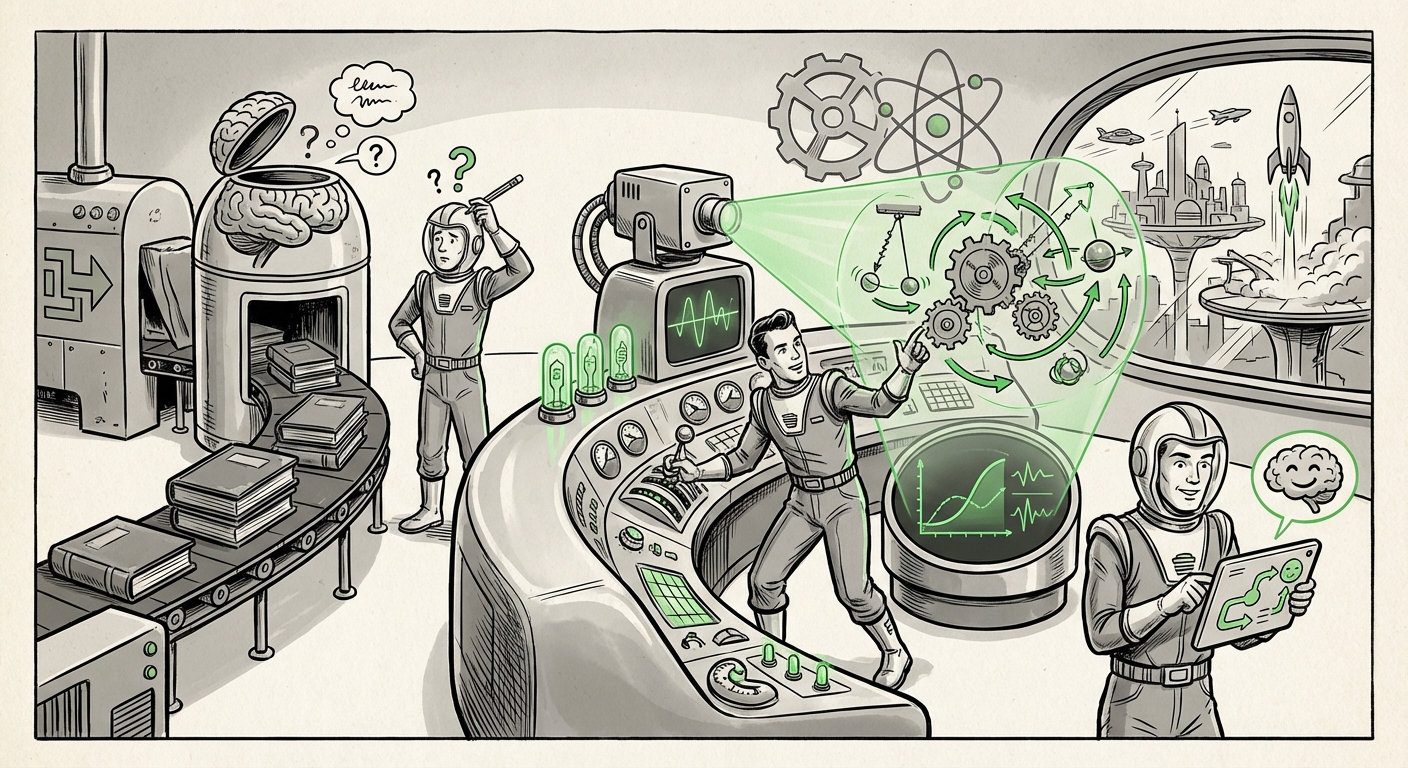

For years, Large Language Models (LLMs) excelled at one primary task: generating highly coherent, contextually rich text. They could explain quantum physics or calculus with stunning clarity. However, the learning experience remained inherently passive—a dialogue of words on a screen. That era is rapidly concluding. The recent introduction of interactive, real-time visualizations directly within platforms like ChatGPT for math and physics concepts signals a tectonic shift in AI capability, moving us toward truly experiential and embodied learning systems.

This is not merely a feature upgrade; it is a fundamental evolution in how AI interacts with complex, abstract concepts. When a user can tweak a variable for, say, the trajectory of a projectile and immediately watch the simulated graph update, they are no longer just reading a definition—they are conducting a micro-experiment. As an AI technology analyst, I view this development as the single most important step toward creating effective, scalable AI tutors for the next decade.

The Shift from Textual Explanation to Experiential Learning

Imagine trying to teach someone about the relationship between voltage and current in an electrical circuit using only paragraphs. It’s difficult. Now imagine giving them a digital rheostat they can turn, with a visual meter instantly reflecting the change. This is the power being unlocked by integrating generative capabilities with dynamic visualization engines.

The ability to instantly render visualizations for over 70 concepts at launch suggests deep integration, likely relying on sophisticated tool-use protocols where the LLM generates the necessary underlying code (using, for example, Python plotting libraries or JavaScript frameworks) and runs it within a secure, sandboxed environment. Under that design, the AI explains the concept, identifies the key parameters, writes the code to visualize it, executes the code, and then interprets the resulting graph back into natural language guidance.
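To make that pipeline concrete, here is a minimal sketch of the kind of code a model might generate for the projectile example mentioned earlier. It is an illustration only, not the code ChatGPT actually produces: the function names are hypothetical, air resistance is ignored, and the plotting step is left out so that only the parameter-to-data stage is shown.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def projectile_trajectory(speed, angle_deg, steps=50):
    """Return (x, y) points of a drag-free projectile launched from the ground."""
    angle = math.radians(angle_deg)
    vx = speed * math.cos(angle)
    vy = speed * math.sin(angle)
    flight_time = 2 * vy / G  # time until the projectile returns to y = 0
    points = []
    for i in range(steps + 1):
        t = flight_time * i / steps
        points.append((vx * t, vy * t - 0.5 * G * t * t))
    return points


def horizontal_range(speed, angle_deg):
    """Closed-form range, useful for explaining the plot back to the learner."""
    return speed ** 2 * math.sin(2 * math.radians(angle_deg)) / G


# The "micro-experiment": change one parameter and compare the outcomes.
for angle in (30, 45, 60):
    print(f"angle={angle} deg -> range={horizontal_range(20, angle):.1f} m")
```

A rendering layer would plot the points returned by `projectile_trajectory`, and recomputing them whenever the learner moves a slider is what turns a static chart into an interactive one.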

Corroborating the Trend: The Multimodal AI Arms Race

This move by OpenAI is a strong indicator of industry direction. Analysts must look beyond the immediate feature to see the competitive landscape. The underlying pressure to offer rich, multimodal interaction validates this trajectory. If one leader implements dynamic visualization, others must follow suit to remain competitive in the race for the most capable AI.

For example, tracking developments in models like Google’s Gemini, which emphasize native multimodality (reasoning across text, images, and video simultaneously), confirms that the industry believes the future lies in models that perceive and generate across sensory modalities. This trend is not limited to education; it will spill over into design, engineering, and data analysis.

For corroboration, see reports on competing architectures, such as "Google’s Gemini 1.5 Pro Showcases Massive Context Window and Deep Multimodal Understanding."

Implications for Education: Democratizing STEM Mastery

The most immediate impact of interactive visualization is on STEM education. Historically, mastering complex topics like thermodynamics, wave mechanics, or advanced calculus required access to specialized software, expensive lab equipment, or high-quality human tutors. The interactive LLM lowers this barrier.

A student learning about resource allocation, instead of reading a dry economic model, can manipulate supply and demand curves interactively. This fosters discovery-based learning, a pedagogy widely credited with improving conceptual retention. The friction between understanding a concept and seeing it demonstrated is now almost zero.
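As a rough sketch of what sits behind such a supply-and-demand widget, consider linear curves: demand Q = a - b*P and supply Q = c + d*P. The linear form, the function name, and the numbers below are all assumptions chosen for illustration; a real tutor could use any model.

```python
def equilibrium(demand_intercept, demand_slope, supply_intercept, supply_slope):
    """Solve a - b*P = c + d*P for linear demand and supply curves.

    Returns the market-clearing (price, quantity) pair.
    """
    price = (demand_intercept - supply_intercept) / (demand_slope + supply_slope)
    quantity = demand_intercept - demand_slope * price
    return price, quantity


# Baseline market, then the same market after demand shifts outward.
baseline = equilibrium(100, 2, 10, 1)
shifted = equilibrium(120, 2, 10, 1)

print(f"baseline: price={baseline[0]:.2f}, quantity={baseline[1]:.2f}")
print(f"shifted:  price={shifted[0]:.2f}, quantity={shifted[1]:.2f}")
```

Dragging the demand curve in an interactive view amounts to changing `demand_intercept` and redrawing the intersection, which is the discovery loop the paragraph above describes.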

This technology accelerates the transition from passive absorption to active manipulation:

- Personalized Pace: The visualization adapts instantly to the user’s query, moving at the pace of the learner.

- Conceptual Debugging: If a student makes a mistake in setting parameters, the visualization immediately shows the erroneous outcome, providing instant, visual feedback.

- Accessibility: High-quality, dynamic science instruction becomes accessible via any standard web browser or mobile device.

This transformation challenges existing EdTech models that often rely on rigid, pre-rendered simulations. We are witnessing the evolution that many experts predict, summed up in the title "The Shift from Passive Learning to Active Simulation: How AI is Redefining Digital Textbooks."

Technical Underpinnings: The Power of Tool Use

How is this powerful visual feedback generated so rapidly? The answer lies in the advanced use of external tools. Modern LLMs are no longer confined to their training data; they are becoming proficient executors of external code.

When a user asks ChatGPT about fluid dynamics, the model plausibly performs a planning step along the lines of: "To show this effectively, I need to plot pressure against flow rate under variable viscosity." It can then draw on its code-execution tooling (the capability OpenAI has shipped under the names "Code Interpreter" and "Advanced Data Analysis") to write and run the necessary Python, JavaScript, or specialized rendering code.

This capability to generate and execute dynamic code for visualization is crucial. It signifies that LLMs are evolving into true **Agentic Systems** capable of orchestrating complex computational workflows. This technical proficiency in scientific modeling is detailed in analyses such as "Beyond Text: Leveraging LLMs for Real-Time Data Visualization and Scientific Workflow Automation."
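The tool-use pattern described in this section can be sketched in a few lines. This is a simplified illustration, not OpenAI's implementation: the JSON request shape, the `TOOLS` registry, and the function names are hypothetical. The physics uses the Hagen-Poiseuille relation for laminar flow in a round pipe, one reasonable way to relate pressure, flow rate, and viscosity.

```python
import json
import math


def pressure_drop(flow_rate, viscosity, radius=0.01, length=1.0):
    """Hagen-Poiseuille pressure drop (Pa) for laminar flow in a round pipe."""
    return 8 * viscosity * length * flow_rate / (math.pi * radius ** 4)


def pressure_vs_flow(viscosity, flow_rates):
    """Tool: return the (flow rate, pressure drop) series a chart would plot."""
    return [(q, pressure_drop(q, viscosity)) for q in flow_rates]


# Hypothetical registry: the host application decides which tools exist.
TOOLS = {"pressure_vs_flow": pressure_vs_flow}


def handle_tool_call(raw_request):
    """Dispatch a JSON tool request of the form {"tool": ..., "args": {...}}."""
    request = json.loads(raw_request)
    name = request["tool"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**request["args"])


# A request the model might emit after deciding what to plot (illustrative).
request = json.dumps({
    "tool": "pressure_vs_flow",
    "args": {"viscosity": 0.001, "flow_rates": [1e-6, 2e-6, 4e-6]},
})
series = handle_tool_call(request)
for flow, drop in series:
    print(f"Q={flow:.1e} m^3/s -> dP={drop:.3f} Pa")
```

Keeping the model's output as a structured request, rather than letting it call functions directly, lets the host validate the tool name and arguments before anything runs. That is one reason secure execution environments matter for this kind of feature.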

Actionable Insights: Beyond the Classroom

While education is the immediate beneficiary, the implications for professional fields are profound. This move pushes AI into the realm of **conceptual prototyping** and **rapid hypothesis testing**.

For Businesses and R&D

Engineers, designers, and product managers routinely need to understand physical principles quickly. Instead of spending hours setting up a simulation environment (like Finite Element Analysis software) for a quick "what-if" scenario, they can now query an AI:

- "Show me the structural stress visualization if I reduce the beam width by 10% under a 500N load."

- "Visually demonstrate the effect of increased air resistance on the thermal output of this battery design."

This accelerates the early stages of research and development. Strategic foresight reports describe this role for AI agents under titles such as "Accelerating Discovery: AI Agents as Virtual Research Assistants for Conceptual Modeling."
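For a sense of what the beam "what-if" query above reduces to, here is a back-of-the-envelope sketch. It assumes a simply supported beam with a rectangular cross-section and a point load at midspan. The span and section dimensions are made-up values, and the function name is hypothetical. A real finite element tool would handle far more general geometry.

```python
def max_bending_stress(load_n, span_m, width_m, height_m):
    """Peak bending stress (Pa) in a simply supported rectangular beam
    carrying a point load at midspan: sigma = 3*F*L / (2*b*h^2)."""
    return 3 * load_n * span_m / (2 * width_m * height_m ** 2)


# 500 N load on an assumed 2 m span with a 50 mm x 100 mm section.
baseline = max_bending_stress(500, 2.0, 0.05, 0.10)
# Same beam with the width reduced by 10%.
narrower = max_bending_stress(500, 2.0, 0.05 * 0.9, 0.10)

increase_pct = (narrower / baseline - 1) * 100
print(f"baseline: {baseline / 1e6:.2f} MPa")
print(f"narrower: {narrower / 1e6:.2f} MPa (+{increase_pct:.1f}%)")
```

Because stress scales inversely with width in this formula, the 10% reduction raises peak stress by roughly 11%. An interactive view would recolor the stress map as the width slider moves.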

Competitive Pressure and Future Strategy

For technology leaders, the message is clear: multimodal functionality is now table stakes, and interactivity is the next frontier. If an AI cannot *show* its reasoning visually, its utility in technical domains will be perceived as limited. Companies must invest heavily in secure execution environments that allow LLMs to safely run code for visualization and simulation.

The success of this feature will drive demand for **interactive AI visualization across STEM education**, pressuring incumbents in textbook publishing and learning platform development to rapidly integrate similar capabilities or risk obsolescence.

The Road Ahead: Embodied AI Tutors and Digital Labs

Building on this foundation, what does the future of AI tutoring and real-time simulation look like in two years?

We are moving toward AI systems that function as complete, though virtual, laboratories. A session for a high school chemistry student might unfold like this:

- The student asks to see the molecular collision rate at a specific temperature.

- The AI visualizes the movement of molecules in 3D space.

- The student suggests doubling the pressure; the AI instantly compresses the visual volume and shows the increased collision frequency in real time.
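The chemistry scenario above can be backed by a small calculation from hard-sphere kinetic theory. The sketch below is illustrative: it assumes an ideal gas at constant temperature, and the molecular diameter and mass are approximate values for nitrogen picked for the example.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def collision_frequency(pressure_pa, temperature_k, diameter_m, mass_kg):
    """Mean collisions per second for one molecule in an ideal gas
    (hard-sphere kinetic theory): z = sqrt(2) * pi * d^2 * v_mean * n."""
    number_density = pressure_pa / (K_B * temperature_k)  # n = P / (k*T)
    mean_speed = math.sqrt(8 * K_B * temperature_k / (math.pi * mass_kg))
    return math.sqrt(2) * math.pi * diameter_m ** 2 * mean_speed * number_density


# Assumed, approximate values for nitrogen near room temperature.
N2_DIAMETER = 3.7e-10  # m
N2_MASS = 4.65e-26     # kg

z1 = collision_frequency(101_325, 298.0, N2_DIAMETER, N2_MASS)
z2 = collision_frequency(2 * 101_325, 298.0, N2_DIAMETER, N2_MASS)
print(f"collisions per second at 1 atm: {z1:.2e}")
print(f"ratio after doubling pressure: {z2 / z1:.2f}")
```

At fixed temperature the mean speed is unchanged, so doubling the pressure doubles the number density and therefore the collision frequency. The compressed 3D view would be showing exactly that relationship.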

For the average user, this means that complex, abstract subjects become far more intuitive. For businesses, it means quicker, cheaper initial R&D cycles. The barrier between having an idea and visually testing that idea is collapsing, driven by generative models mastering the language of computation and visual physics.