The Visualization Revolution: How Interactive AI is Transforming STEM Education and Beyond

For years, Large Language Models (LLMs) like ChatGPT excelled at synthesis, summarization, and creative writing. They were brilliant text engines. However, grasping complex, dynamic concepts often required stepping outside the chat window to find a separate video or simulation: seeing how the angle of incidence affects refraction in physics, for example, or rotating the surface of a multivariable function in calculus. That era is rapidly ending.

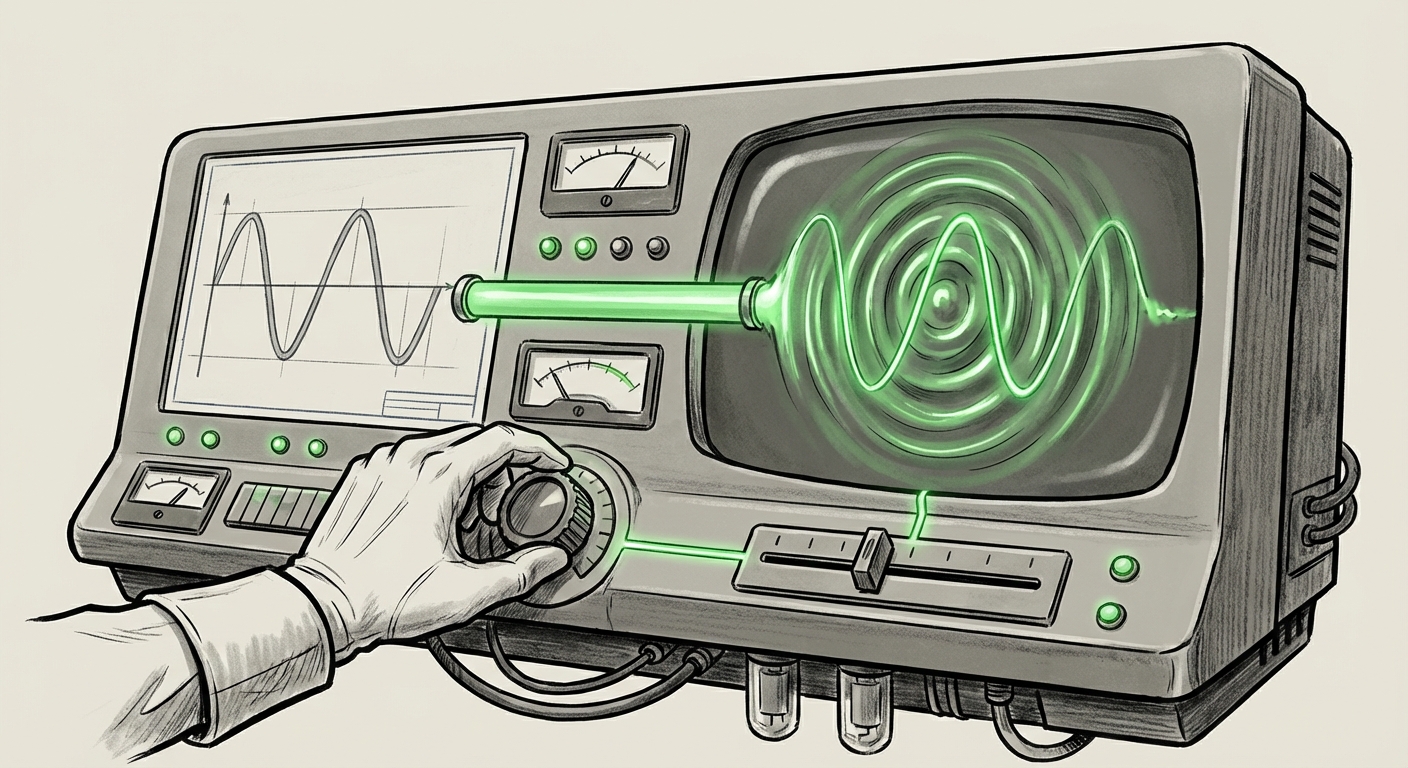

The integration of interactive visualizations for math and physics concepts marks a pivotal moment. It signifies the maturation of AI from a knowledge repository into a true, dynamic, educational partner. This leap is not just about prettier charts; it represents a fundamental upgrade in how Artificial Intelligence interacts with, explains, and models the physical world.

The Inflection Point: From Static Text to Dynamic Worlds

When a user asks an LLM to explain the concept of wave interference, the traditional response would be paragraphs of descriptive text. Now, the AI can present a graph where the user can drag a slider to change the frequency of one wave, and instantly watch the resulting interference pattern shift in real time. This capability, rolling out with over 70 initial concepts, bridges the critical gap between knowing a formula and understanding its behavior.
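As a rough illustration of what such a slider recomputes, the sketch below superposes two unit-amplitude sine waves and re-evaluates the sum each time one frequency changes. The function name `superpose`, the sample counts, and the frequencies are assumptions chosen for illustration; nothing here reflects how the actual feature is implemented.

```python
import math

def superpose(freq_a, freq_b, duration=1.0, samples=200):
    """Return (t, y) points for the sum of two unit-amplitude sine waves."""
    points = []
    for i in range(samples + 1):
        t = duration * i / samples
        y = math.sin(2 * math.pi * freq_a * t) + math.sin(2 * math.pi * freq_b * t)
        points.append((t, y))
    return points

# Each hypothetical slider position triggers a fresh evaluation of the curve.
for freq_b in (5.0, 5.5, 6.0):
    curve = superpose(5.0, freq_b)
    peak = max(abs(y) for _, y in curve)
    print(f"f_b = {freq_b} Hz -> peak amplitude {peak:.2f}")
```

A front end would redraw the returned points on every slider event, which is what makes the pattern appear to shift continuously.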

This transition is critical because STEM subjects are inherently visual and variable. Simply reading about a parabolic trajectory is abstract; manipulating an input variable (like initial velocity) and seeing the resulting path change instantly solidifies conceptual understanding. This is personalization at the deepest level of learning.
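The parabolic trajectory example can be made concrete with a small sketch, assuming a drag-free launch from ground level and standard gravity. The helper names `trajectory` and `horizontal_range` are illustrative only.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed, near Earth's surface)

def trajectory(v0, angle_deg, steps=50):
    """Sample (x, y) points of a drag-free projectile launched from the ground."""
    theta = math.radians(angle_deg)
    vx, vy = v0 * math.cos(theta), v0 * math.sin(theta)
    flight_time = 2 * vy / G  # time until the projectile returns to y = 0
    points = []
    for i in range(steps + 1):
        t = flight_time * i / steps
        points.append((vx * t, vy * t - 0.5 * G * t * t))
    return points

def horizontal_range(v0, angle_deg):
    """Closed-form range R = v0^2 * sin(2 * theta) / g."""
    return v0 ** 2 * math.sin(2 * math.radians(angle_deg)) / G

# Varying the initial velocity, as a student might with a slider.
for v0 in (10.0, 20.0):
    print(f"v0 = {v0} m/s -> range {horizontal_range(v0, 45):.2f} m")
```

Because the range grows with the square of the initial velocity, doubling the input quadruples the distance, a relationship that is easier to notice by manipulating the curve than by reading the formula.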

Context Check: The Broader Push Toward Multimodality

OpenAI’s move is not happening in a vacuum. It is a direct manifestation of the industry-wide race toward true multimodal AI—systems that fluently process and generate text, images, audio, and actionable outputs simultaneously. My analysis suggests that visualization is the next logical frontier after image generation took hold.

Across the broader landscape, this signals that competitors are likely focusing their next major releases on similar capabilities. Real-time interaction, where the AI doesn't just show a finished picture but lets the user interact with the generation process, is the new benchmark for perceived intelligence and utility. Dynamic simulation tools support the idea that AI is moving from being an excellent librarian to an active laboratory assistant.

The Engine Room: How Real-Time Visualization Works

For the average user, watching a graph update smoothly as they adjust a variable feels like magic. For the technologist, it reveals significant underlying architectural maturity. This feature is heavily reliant on the LLM’s ability to generate, debug, and safely execute code.

This capability is likely powered by a highly refined version of the "Code Interpreter" or "Advanced Data Analysis" tools already available in ChatGPT. When a user adjusts a variable, the process looks something like this:

- Interpretation: The LLM understands the user’s visual tweak (e.g., "Increase the damping coefficient by 20%").

- Code Generation: It writes a small snippet of code (likely Python, leveraging libraries like Matplotlib or Plotly) incorporating the new variable value.

- Execution & Sandboxing: Crucially, this code must run in a secure, isolated environment (a sandbox) to prevent any security risks to the host system. Latency here must be minimal to feel "real-time."

- Rendering: The output—the updated graph or simulation—is streamed back to the user interface.
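The four steps above can be sketched in miniature. This is a speculative illustration under stated assumptions, not the product's pipeline: the interpretation step is stubbed as an already-parsed tweak, the oscillator uses the simplified form x(t) = A·exp(-c·t)·cos(ω·t), and the names `apply_tweak` and `damped_oscillation` are hypothetical. Plotting is left out so the sketch runs with the standard library alone.

```python
import math

def apply_tweak(params, name, percent_change):
    """Interpretation step (stubbed): scale one parameter by a percentage."""
    updated = dict(params)
    updated[name] = params[name] * (1 + percent_change / 100)
    return updated

def damped_oscillation(params, duration=10.0, samples=100):
    """Generation and execution steps: sample x(t) = A * exp(-c*t) * cos(w*t)."""
    a, c, w = params["amplitude"], params["damping"], params["omega"]
    return [
        (t, a * math.exp(-c * t) * math.cos(w * t))
        for t in (duration * i / samples for i in range(samples + 1))
    ]

params = {"amplitude": 1.0, "damping": 0.25, "omega": 2.0}
params = apply_tweak(params, "damping", 20)  # "Increase the damping coefficient by 20%"
curve = damped_oscillation(params)
# Rendering step: a real system would hand `curve` to a plotting library here.
print(f"damping = {params['damping']:.2f}, x(10 s) = {curve[-1][1]:.4f}")
```

Keeping the parameters in a plain dictionary means each tweak produces a new, complete state that can be re-rendered from scratch, which is one simple way to keep the loop predictable.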

The technical challenge here lies in latency and safety. To maintain the educational flow, the feedback loop (tweak → process → view) must complete within milliseconds. Furthermore, allowing the model to run arbitrary code requires robust security controls around the code execution environment. The fact that this is being deployed publicly suggests a high degree of confidence in the stability and containment of OpenAI's sandboxed execution layer.
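To make the latency and containment concerns tangible, here is a minimal sketch that runs a snippet in a separate Python process with a hard timeout and measures the round trip. It is emphatically not a real sandbox: a child process still shares the host's filesystem and network, and production systems would rely on stronger isolation such as containers or virtual machines. The function name `run_untrusted` and the timeout values are assumptions.

```python
import subprocess
import sys
import time

def run_untrusted(code, timeout_s=2.0):
    """Run code in a separate interpreter; return (ok, output, elapsed_seconds)."""
    start = time.perf_counter()
    try:
        result = subprocess.run(
            # -I is Python's isolated mode: ignores environment variables and user site-packages.
            [sys.executable, "-I", "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,  # the child is killed if it runs longer than this
        )
        ok, output = result.returncode == 0, result.stdout
    except subprocess.TimeoutExpired:
        ok, output = False, ""
    return ok, output, time.perf_counter() - start

ok, output, elapsed = run_untrusted("print(sum(range(10)))")
print(ok, output.strip(), f"{elapsed * 1000:.0f} ms")
```

Even this trivial case pays the cost of starting an interpreter on every call, which hints at why a responsive system would likely keep a warm execution environment rather than launching a fresh one per tweak.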

Implications for the Future of STEM Education (The Educator’s New Toolkit)

The arrival of interactive visualization reshapes the battlefield for EdTech companies and presents a massive opportunity for educators.

Shifting Pedagogy from Rote Learning to Conceptual Mastery

For decades, teaching advanced math and science has relied on either expensive physical lab equipment or static software simulations. LLMs can now serve as an infinitely patient, highly customized tutor that illustrates concepts on demand. This democratization of high-fidelity simulation is revolutionary.

We anticipate a rapid shift in curriculum design. Instead of spending weeks lecturing on the theoretical mechanics of a concept, educators can use class time for problem-solving, encouraging students to use the AI visualization tool to test their hypotheses dynamically. The focus moves from memorization to discovery.

The Competitive Pressure on EdTech

Analyses of AI tutoring in STEM education suggest that adaptive learning platforms relying solely on pre-programmed decision trees will quickly become obsolete. If a student asks, "What if the gravitational constant were slightly different?", a traditional platform might fail, but a generative visualization tool can instantly render the result. This places immense pressure on existing EdTech providers to integrate generative AI capabilities or risk being relegated to providing standardized testing infrastructure rather than true learning tools.

Broader Business and Industry Applications

While education is the most obvious beneficiary, the underlying capability—dynamic parameter adjustment via natural language—has far wider commercial reach.

Engineering and Design Simulation

Engineers often work with complex Finite Element Analysis (FEA) or Computational Fluid Dynamics (CFD) models. While these professional tools are powerful, they can have steep learning curves. Imagine an engineer asking an AI: "Show me how this bracket deflects under load if I change the material's Young's modulus by 15%," and instantly seeing the corresponding displacement map update. This bridges the gap between early-stage conceptual modeling and full-scale simulation.

Financial Modeling and Risk Assessment

In finance, risk is quantified through variables (interest rates, volatility, market correlations). An LLM paired with interactive financial visualizations could allow portfolio managers to adjust key inputs via conversational commands and see the Monte Carlo simulations or Value-at-Risk (VaR) metrics dynamically recalculate and redraw their confidence intervals. This accelerates scenario planning dramatically.
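A toy version of that recalculation is sketched below, assuming single-period returns drawn from a normal distribution, which is a strong simplification of real portfolio models. The function name `monte_carlo_var` and all parameter values are illustrative assumptions.

```python
import random

def monte_carlo_var(mean, volatility, confidence=0.95, trials=10_000, seed=0):
    """Estimate one-period VaR as a positive loss fraction from simulated normal returns."""
    rng = random.Random(seed)  # fixed seed so repeated runs are comparable
    returns = sorted(rng.gauss(mean, volatility) for _ in range(trials))
    cutoff = int((1 - confidence) * trials)  # index of the worst-case tail boundary
    return -returns[cutoff]

# A conversational command such as "raise volatility to 2%" maps to a new argument.
for vol in (0.01, 0.02):
    print(f"volatility = {vol:.0%} -> 95% VaR = {monte_carlo_var(0.0, vol):.4f}")
```

Reusing the same seed across scenarios isolates the effect of the changed input from sampling noise, which matters when a user is comparing two redrawn charts side by side.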

Actionable Insights for Technology Leaders

For those building or adopting AI tools, the emergence of this feature demands proactive strategy adjustments:

- Prioritize Multimodal Testing: Do not evaluate new AI releases solely on text benchmarks. Demand real-time visualization and actionable output tests relevant to your domain (e.g., CAD rendering in design firms, data pipeline monitoring in IT operations).

- Investigate Code Execution Security: If you plan to utilize internal LLMs for proprietary simulation or analysis, your security team must understand the implications of granting code execution permissions. Robust sandboxing is non-negotiable.

- Redefine "Expertise": The barrier to entry for teaching complex topics is lowering. Focus your internal training efforts less on memorizing procedures and more on framing the right high-level questions that unlock the AI's visualization power.

The Competitive Crucible

The announcement sets a high bar. Analysts comparing AI tools for scientific simulation will now assess how closely competitors match the fidelity and responsiveness of these new visualizations. If a competitor’s visualization lags by a second, it breaks the immersion; if it renders inaccurate physics, it poisons the learning environment.

This is a classic technological arms race. The first model to offer a seamless, browser-based laboratory environment—capable of generating physics, chemistry, or even economic simulations on demand—will capture significant mindshare and market share in the B2B professional services and EdTech sectors.

The shift from LLMs as encyclopedias to LLMs as active simulation engines marks the beginning of a more interactive kind of AI: systems that don't just talk about the world, but actively model and manipulate its rules in collaboration with the user. This is not just a feature update; it is the scaffolding for the next generation of human-computer interaction.