The Rise of Parallel AI Agents: Analyzing Anthropic's Code Review Revolution and the Future of Autonomous Software

The landscape of software development is undergoing a tectonic shift, driven by advancements in Large Language Models (LLMs). While tools like GitHub Copilot have already revolutionized the *writing* of code, the next frontier is the validation and assurance of that code. Anthropic's recent announcement regarding Claude Code integrating parallel AI agents for code review is not just an incremental update; it signals a fundamental transition toward autonomous quality control in engineering workflows.

This development moves AI from being a helpful pair programmer to an active, specialized quality gatekeeper. To understand the magnitude of this shift, we must analyze the technology underpinning it—the multi-agent system—and how it positions Anthropic in the fiercely competitive AI coding market. This means looking beyond the feature announcement and examining the broader trends driving software engineering into an era defined by AI oversight.

The Shift from Single Agent to Multi-Agent Collaboration in Code Assurance

What makes Anthropic’s move significant is the phrase "parallel AI agents." This is a crucial technical distinction. A standard LLM review typically involves a single prompt asking the model to critique a block of code. This is powerful, but a single pass has to cover logic, security, and style all at once, so the attention given to any one concern is limited.

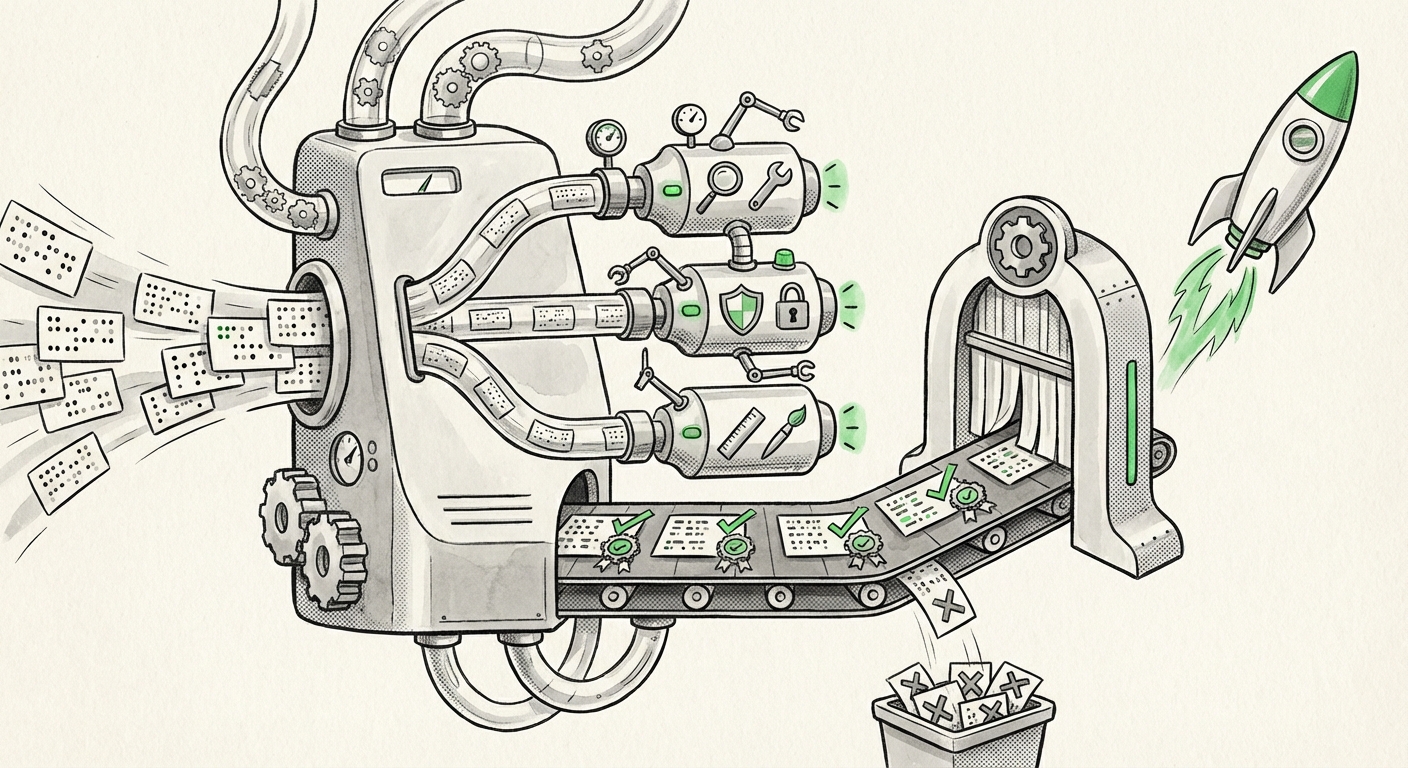

In contrast, a parallel or multi-agent system involves multiple specialized AI instances working simultaneously on a task. Think of it like an expert review board:

- Agent Alpha (The Debugger): Focuses purely on logical errors, off-by-one mistakes, and performance bottlenecks.

- Agent Beta (The Security Auditor): Dedicated to identifying common vulnerabilities (e.g., SQL injection, cross-site scripting, insecure dependency usage).

- Agent Gamma (The Style Enforcer): Ensures adherence to specific organizational coding standards and documentation requirements.

These agents can operate in parallel, feeding their findings back into a central coordination layer (or even debating among themselves), which can improve both the thoroughness and the speed of the review. This architecture lets the system tackle more complexity than a single, monolithic LLM query can handle.
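The review-board idea above can be sketched in a few lines of Python. This is a minimal illustration, not Anthropic's implementation: the three agent functions are hypothetical stand-ins that use simple string checks where a real agent would call a model, and the names `Finding`, `AGENTS`, and `review` are assumptions made for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    agent: str
    line: int
    message: str


def _scan(code, agent, message, predicate):
    """Return one Finding per source line for which predicate(text) is true."""
    return [
        Finding(agent, number, message)
        for number, text in enumerate(code.splitlines(), start=1)
        if predicate(text)
    ]


# Hypothetical agents: each heuristic stands in for a model-backed reviewer.
def debugger(code):
    return _scan(code, "debugger", "possible off-by-one in range()",
                 lambda t: "range(len(" in t and "+ 1" in t)


def security_auditor(code):
    return _scan(code, "security", "SQL built by string formatting",
                 lambda t: "execute(" in t and "%" in t)


def style_enforcer(code):
    return _scan(code, "style", "line longer than 79 characters",
                 lambda t: len(t) > 79)


AGENTS = (debugger, security_auditor, style_enforcer)


def review(code):
    """Run every agent concurrently, then merge findings in one coordinator."""
    with ThreadPoolExecutor(max_workers=len(AGENTS)) as pool:
        futures = [pool.submit(agent, code) for agent in AGENTS]
        merged = [f for future in futures for f in future.result()]
    return sorted(merged, key=lambda f: (f.line, f.agent))


SAMPLE = (
    "for i in range(len(items) + 1):\n"
    "    cur.execute('SELECT * FROM t WHERE id = %s' % items[i])\n"
)

findings = review(SAMPLE)
for f in findings:
    print(f"L{f.line} [{f.agent}] {f.message}")
```

Threads are used here because a real agent would spend most of its time waiting on a network call; the coordinator simply merges and sorts the results, which is the simplest possible form of the "central coordination layer" described above.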

This approach is in line with research on LLM multi-agent frameworks for code generation and testing, which suggests that collaboration between AI agents can unlock higher levels of problem-solving capability. For the technical audience, this is agentic AI in practice: systems capable of executing complex, multi-step objectives autonomously.

Corroboration: The Competitive Pressure in AI Coding

Anthropic is not operating in a vacuum. The market for AI-assisted development is highly dynamic and is currently led by Microsoft, which integrates GPT models into GitHub Copilot, including its enterprise code review features.

If Copilot focuses heavily on suggestion and in-line completion, Anthropic’s move emphasizes *enforcement* and *quality gating* before code enters the main branch. For Engineering Managers and Enterprise IT decision-makers, this presents a choice: Do they stick with the integrated ecosystem (Microsoft), or adopt a best-in-class specialized tool (Anthropic) that emphasizes safety and sophisticated review capabilities?

The fact that Anthropic, a company built upon stringent safety principles (Constitutional AI), is applying this advanced, parallel review capability specifically to security gaps underscores its strategic positioning. They are selling not just speed, but *trust* in the automated code merge process.

The Autonomous Future: Quality Gates, Not Just Suggestions

The most profound implication of parallel AI code review is the transition from optional tooling to mandatory quality gates within the Continuous Integration/Continuous Delivery (CI/CD) pipeline.

Historically, code review has been the last human checkpoint—a bottleneck where experienced developers spend significant time ensuring new contributions meet standards and don't introduce regressions or vulnerabilities. If an AI system, powered by parallel agents, can perform this function faster and more reliably than a junior or mid-level human reviewer, the entire development lifecycle accelerates.

Impact on Developer Workflows and Roles

For the vast majority of software developers, this means less time spent debugging mundane issues or arguing about semicolons and style guides. This automation directly addresses the "so what?" question regarding productivity:

- Reduced Cycle Time: Merging code can happen minutes after it’s written, rather than waiting hours or days for a human reviewer to become available.

- Elevated Human Focus: Senior developers will spend less time on low-level review and more time on high-value tasks: architectural design, system integration, performance tuning, and complex business logic—tasks that require deep contextual understanding beyond current AI capabilities.

- Democratization of Quality: The reliability of security and bug checks becomes consistent across the organization, regardless of the experience level of the primary contributor.

However, this also sparks conversations about the future of junior developer roles. If entry-level tasks such as routine code review are automated, companies must adapt how they train new engineers. The focus must shift rapidly from mastering syntax to mastering system architecture and prompt engineering for complex AI interaction.

Anthropic’s Enterprise Strategy: Safety as a Differentiator

Anthropic’s core brand identity revolves around safety and responsible AI development. Viewed against its enterprise integration strategy for Claude, "security review" is a natural extension of that safety mandate.

In an era where generative AI can inadvertently produce insecure code, a model specifically trained and structured to aggressively seek out and flag vulnerabilities holds immense appeal for regulated industries (finance, healthcare) and large corporations handling sensitive data. For these CTOs and business analysts, the value proposition of Claude Code might not just be speed, but *compliance assurance*.

This feature serves as a powerful differentiator against competitors who might be perceived as prioritizing raw output speed over rigorous safety auditing.

The Technical Viability: Beyond the Hype of Multi-Agent Systems

While multi-agent systems sound futuristic, their practical implementation in production codebases is still evolving. The success of Claude Code hinges on solving several engineering hurdles:

- Coordination Overhead: Parallel agents need efficient communication protocols. If the overhead of agents passing messages back and forth outweighs the time saved by parallelism, the feature fails.

- Context Window Management: Large codebases require complex context management. The parallel agents must intelligently decide which parts of the surrounding code and documentation are necessary for their specific review tasks.

- Hallucination Mitigation: An AI agent flagging a vulnerability that doesn't exist (a false positive) is just as disruptive as missing a real one. The parallel structure must include cross-validation mechanisms to boost precision.
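One simple form of the cross-validation mentioned in the last point is a vote: a finding is kept only when several independent review passes report it. The sketch below assumes each pass returns findings as `(file, line, category)` tuples; the function name `corroborated`, the `min_votes` parameter, and the sample reports are all hypothetical.

```python
from collections import Counter


def corroborated(reports, min_votes=2):
    """Keep findings reported by at least `min_votes` distinct review passes."""
    votes = Counter()
    for findings in reports.values():
        # set() ensures one pass cannot vote twice for the same finding.
        votes.update(set(findings))
    return sorted(key for key, count in votes.items() if count >= min_votes)


# Made-up reports from three independent passes over the same change.
reports = {
    "pass_a": [("app.py", 12, "sql-injection"), ("app.py", 40, "xss")],
    "pass_b": [("app.py", 12, "sql-injection")],
    "pass_c": [("app.py", 12, "sql-injection"), ("util.py", 3, "path-traversal")],
}

kept = corroborated(reports)
print(kept)
```

Raising `min_votes` trades recall for precision: fewer false positives reach the developer, but a real issue that only one pass notices is dropped, so the threshold itself needs to be tuned against known bugs.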

The industry is closely watching whether Anthropic has successfully baked in robustness sufficient for daily use in high-stakes environments. If they have, it sets a new benchmark for how complex, structured tasks should be delegated to AI systems.

Actionable Insights for Engineering Leaders

The integration of parallel AI agents into the code review process is no longer theoretical; it is commercially available. Engineering leaders must act now to capitalize on this trend and mitigate potential disruptions:

1. Pilot and Benchmark Immediately

Do not wait for your primary vendor (e.g., GitHub) to perfectly replicate this functionality. Begin pilot programs using Claude Code on non-critical projects. Benchmark the quality and speed of AI reviews against your current human review process. Metrics should focus not just on time saved, but on the **reduction of critical bugs discovered post-merge**.
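The post-merge metric can be made concrete with a small calculation. The sketch below normalizes critical bugs per 100 merges so that a pilot and a baseline of different sizes are comparable; the function names and all of the numbers are invented for illustration and are not results from any real pilot.

```python
def bugs_per_100_merges(critical_bugs, merges):
    """Critical bugs found after merge, normalized per 100 merged changes."""
    if merges <= 0:
        raise ValueError("merges must be positive")
    return 100.0 * critical_bugs / merges


def relative_reduction(baseline_rate, pilot_rate):
    """Fraction by which the pilot rate is lower than the baseline rate."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (baseline_rate - pilot_rate) / baseline_rate


# Illustrative numbers only: 12 critical bugs in 400 human-reviewed merges,
# 6 critical bugs in 300 AI-reviewed merges.
baseline = bugs_per_100_merges(12, 400)
pilot = bugs_per_100_merges(6, 300)
reduction = relative_reduction(baseline, pilot)

print(f"baseline={baseline:.1f} pilot={pilot:.1f} reduction={reduction:.0%}")
```

A negative reduction would mean the pilot performed worse than the human baseline, which is exactly the outcome a benchmark on non-critical projects is meant to surface early.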

2. Re-evaluate Senior Engineer Utilization

Identify which senior engineers spend the most time on basic code review tasks. Create a clear path for them to transition into roles focusing on system architecture, AI tool configuration, and validation of AI-generated security reports. This proactively addresses workforce transition.

3. Define the "Human Override" Threshold

Determine clear criteria for when a human must intervene. Will the AI handle 90% of merges automatically, requiring human review only for changes above a certain complexity score or those flagged by multiple agents as high-risk? Establishing these rules *before* deployment is crucial for maintaining quality.
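Such a rule is easiest to audit when it is written down as code rather than left as team folklore. The sketch below assumes the pipeline already produces a complexity score and a count of agents that flagged the change as high-risk; the `ChangeSummary` type, the field names, and the default thresholds are hypothetical and would need to be set by each team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeSummary:
    complexity_score: float  # assumed to come from an existing metric
    high_risk_agents: int    # number of agents that flagged the change


def needs_human_review(change, max_complexity=20.0, min_agents_for_escalation=2):
    """Escalate when the change is too complex or several agents agree on risk."""
    too_complex = change.complexity_score > max_complexity
    widely_flagged = change.high_risk_agents >= min_agents_for_escalation
    return too_complex or widely_flagged


small_fix = ChangeSummary(complexity_score=4.0, high_risk_agents=0)
big_refactor = ChangeSummary(complexity_score=35.0, high_risk_agents=0)
risky_patch = ChangeSummary(complexity_score=6.0, high_risk_agents=2)

for change in (small_fix, big_refactor, risky_patch):
    print(change, "-> human review" if needs_human_review(change) else "-> auto-merge")
```

Keeping the thresholds as explicit parameters means they can be versioned and reviewed like any other configuration, and tightened or relaxed as the pilot data comes in.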

Conclusion: The Inevitable Automation of Quality

Anthropic's move with Claude Code is a powerful statement: the era of integrating AI deeply into the quality assurance phase of software engineering has begun. The implementation of parallel agents suggests a move away from superficial checks toward genuine, multi-faceted inspection powered by collaborative LLMs.

This technology promises a future where software is built faster, is inherently more secure at its foundation, and where human engineers are finally liberated to focus on true innovation rather than exhaustive manual verification. The development signals that the next great leap in productivity won't come from making developers type faster, but from making AI auditors smarter and more specialized.