The 4D Leap: How World Models and D4RT Are Building AGI's Predictive Engine

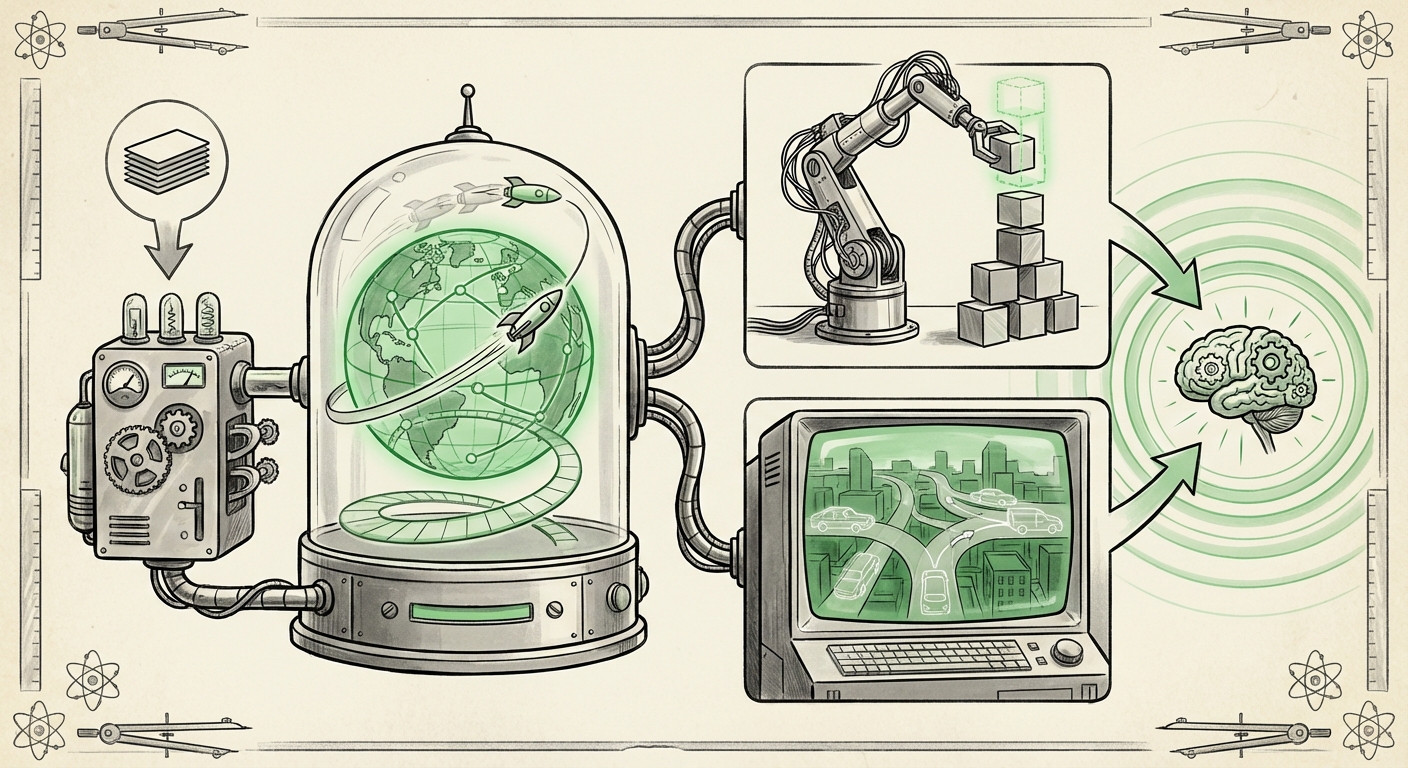

Artificial intelligence has moved rapidly past recognizing patterns in static data. The new frontier is less about analyzing what *is* than about accurately predicting what *will be*. This shift is crystallizing around the concept of World Models, driven by work such as DeepMind’s D4RT research, which incorporates 4D (three-dimensional space plus time) reasoning. This fusion marks a pivotal moment in AI development, moving us closer to machines with genuine, actionable common sense.

For the non-specialist, imagine a child learning physics. They don't just memorize that a ball is red; they learn that if they push it, it will roll, slow down due to friction, and eventually stop. They have built an internal, predictive "world model." Current AI systems are finally catching up to this basic level of understanding. Our analysis synthesizes recent developments in this field to forecast where AI is headed next.

The Core Concept: Why World Models Are Essential for AGI

In recent years, large language models (LLMs) have astonished us with their fluency, but they often lack deep physical understanding. They generate plausible text based on statistical likelihood rather than an explicit model of causality. World Models aim to close this gap.

Predictive Power Over Memorization

A World Model is an AI subsystem whose primary job is to learn a compressed, high-level representation of the world it observes and use that model to forecast future states. This is a paradigm shift championed by leading voices in the field.
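To make the "compress, then forecast" idea concrete, here is a minimal sketch built on the pushed-ball example. It is a toy, not how D4RT or any production system works: the class `BallWorldModel`, the helper `observe_ball`, and the single learned parameter `decay` (a per-step velocity loss standing in for friction) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BallWorldModel:
    """Toy world model: the whole 'world' is compressed into one number."""
    decay: float = 1.0  # per-step velocity multiplier; 1.0 means no friction

    def fit(self, positions: List[float], dt: float) -> None:
        """Learn the decay from observed positions via least squares."""
        if len(positions) < 3:
            raise ValueError("need at least three positions to estimate decay")
        # Finite differences turn positions into per-step velocities.
        velocities = [(b - a) / dt for a, b in zip(positions, positions[1:])]
        # Fit v[t+1] = decay * v[t].
        numerator = sum(v0 * v1 for v0, v1 in zip(velocities, velocities[1:]))
        denominator = sum(v0 * v0 for v0 in velocities[:-1])
        self.decay = numerator / denominator

    def forecast(self, position: float, velocity: float,
                 dt: float, steps: int) -> List[float]:
        """Roll the learned dynamics forward to predict future positions."""
        future = []
        for _ in range(steps):
            position += velocity * dt
            velocity *= self.decay
            future.append(position)
        return future


def observe_ball(steps: int, dt: float = 0.1, velocity: float = 2.0,
                 true_decay: float = 0.9) -> List[float]:
    """Hypothetical ground truth that stands in for real observations."""
    position, positions = 0.0, [0.0]
    for _ in range(steps):
        position += velocity * dt
        velocity *= true_decay
        positions.append(position)
    return positions


observed = observe_ball(steps=20)
model = BallWorldModel()
model.fit(observed[:11], dt=0.1)  # learn from the first 10 steps only

# Forecast the next 10 steps from the last observed state.
last_velocity = (observed[10] - observed[9]) / 0.1
predicted = model.forecast(observed[10], last_velocity * model.decay,
                           dt=0.1, steps=10)
print(model.decay, predicted[-1], observed[-1])
```

The model never stores the trajectory itself. It keeps only the rule that generated it, which is what lets it forecast steps it has not seen.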

Yann LeCun, a leading figure in deep learning, has strongly advocated architectures like JEPA (Joint Embedding Predictive Architecture) as the missing piece for AGI. LeCun argues that true intelligence requires systems to anticipate the consequences of actions, much like humans do, without constant external supervision. The D4RT work from DeepMind is consistent with this philosophy, showing how complex environments can be modeled for prediction.
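The core idea behind a joint embedding predictive setup is that the prediction error is measured between embeddings rather than between raw observations. The sketch below illustrates only that idea. The encoder and predictor weights are fixed by hand here purely as an assumption for the example, whereas in a real system they would be learned. The names `ENCODER`, `PREDICTOR`, and `jepa_style_loss` are hypothetical.

```python
def linear(weights, x):
    """Apply a small weight matrix (a list of rows) to a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) / len(a)


# Observations are [position, velocity, sensor_noise].
# Hypothetical encoder: keeps position and velocity, drops the noise channel.
ENCODER = [[1.0, 0.0, 0.0],
           [0.0, 1.0, 0.0]]

# Hypothetical predictor acting on embeddings: position advances by
# velocity * DT, velocity stays the same.
DT = 0.5
PREDICTOR = [[1.0, DT],
             [0.0, 1.0]]


def jepa_style_loss(context_obs, target_obs):
    """Compare the predicted embedding with the target's embedding."""
    predicted = linear(PREDICTOR, linear(ENCODER, context_obs))
    target = linear(ENCODER, target_obs)
    return mse(predicted, target)


def observation_space_loss(context_obs, target_obs):
    """Baseline: predict the raw next observation, copying the noise forward."""
    position, velocity, noise = context_obs
    guess = [position + velocity * DT, velocity, noise]
    return mse(guess, target_obs)


context = [0.0, 2.0, 0.3]
target = [1.0, 2.0, -0.8]  # the noise channel changed unpredictably

print(jepa_style_loss(context, target))
print(observation_space_loss(context, target))
```

In this toy case the embedding-space loss ignores the unpredictable noise channel, while the observation-space baseline is penalized for failing to guess it. That is the intuition for predicting in representation space.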

For the Business Strategist: This means we are moving away from needing enormous task-specific datasets for every new task and toward systems that learn the *rules* of interaction once. If an AI learns the rules of gravity and friction in a simulated kitchen, it doesn't need to be retrained from scratch to understand a slightly different kitchen.

D4RT and the Conquest of 4D: Moving Beyond Flat Space

The term "4D" in this context is crucial. It signals the integration of time into the spatial reasoning capabilities of the AI. An AI that only understands 3D space can label objects; an AI that understands 4D can predict where an object *will be* when a door opens, or how a dropped glass will shatter.

Mastering Spatiotemporal Prediction

The work surrounding DeepMind’s D4RT showcases advanced **spatiotemporal prediction**. This requires sophisticated neural architectures capable of handling sequences and complex dynamic interactions. Unlike simple video prediction, D4RT-level systems must learn the underlying physics—how forces and obstacles affect movement over time.

- For the Engineer: This demands specialized architectures, often integrating concepts from Graph Neural Networks (GNNs) or advanced recurrent mechanisms within the model's predictive loop, allowing the AI to project possibilities across various time horizons.

- For the General Reader: Think of a self-driving car. It needs to predict not just where the pedestrian *is*, but where they will be three seconds from now, factoring in their speed and potential turns. World Models equipped with 4D reasoning make these predictions reliable, a necessity for safety.
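The pedestrian example can be sketched with the simplest possible predictor: estimate velocity from a short track of timestamped positions, then extrapolate to several time horizons. This constant-velocity baseline is an assumption chosen for clarity. It ignores the "potential turns" that a learned world model would be expected to capture. The function names and the sample track are hypothetical.

```python
from typing import List, Tuple

Sample = Tuple[float, float, float]  # (time_s, x_m, y_m)


def estimate_velocity(track: List[Sample]) -> Tuple[float, float]:
    """Average velocity between the first and last samples of the track."""
    (t0, x0, y0), (t1, x1, y1) = track[0], track[-1]
    elapsed = t1 - t0
    if elapsed <= 0:
        raise ValueError("track must span a positive amount of time")
    return ((x1 - x0) / elapsed, (y1 - y0) / elapsed)


def predict_position(track: List[Sample], horizon_s: float) -> Tuple[float, float]:
    """Extrapolate the last known position forward by horizon_s seconds."""
    vx, vy = estimate_velocity(track)
    _, x, y = track[-1]
    return (x + vx * horizon_s, y + vy * horizon_s)


# A pedestrian walking along the x axis at roughly 1.4 m/s.
track = [(0.0, 0.0, 0.0), (0.5, 0.7, 0.0), (1.0, 1.4, 0.0)]

for horizon in (1.0, 2.0, 3.0):
    print(horizon, predict_position(track, horizon))
```

Each sample carries a timestamp alongside its spatial coordinates, which is the sense in which the reasoning is spatiotemporal rather than purely spatial.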

The Embodied Revolution: Robotics Finds Its Brain

The most immediate and transformative application of high-fidelity World Models is in Embodied AI—systems that interact with the physical world, primarily robotics.

Closing the Simulation-to-Reality Gap

Robotics has long struggled with the gap between simulation and reality. A robot trained purely in a perfect digital twin often fails miserably in the messy, unpredictable real world. World Models are the bridge.

By learning an accurate internal representation of the physical laws governing its actions (its own model of mass, friction, and stability), a robot can plan complex, multi-step tasks internally before executing them. This makes the robot more flexible and safer.
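Planning "internally before executing" can be sketched as a search over imagined action sequences. In the toy below, a one-dimensional cart uses an internal model (`model_step`) to imagine every short sequence of pushes, scores where each one ends up, and executes only the first action of the best plan. The dynamics, the cost weights, and all names are assumptions for illustration, and the "real world" is the same function as the model, which a real robot could not assume.

```python
from itertools import product


def model_step(state, action, dt=0.1, friction=0.5):
    """Internal model of a unit-mass cart: push force minus viscous friction."""
    position, velocity = state
    acceleration = action - friction * velocity
    velocity = velocity + acceleration * dt
    position = position + velocity * dt
    return (position, velocity)


def imagined_cost(state, actions, goal):
    """Roll the model forward in imagination and score the final state."""
    for action in actions:
        state = model_step(state, action)
    position, velocity = state
    # Prefer ending near the goal and, to a lesser degree, moving slowly.
    return abs(position - goal) + 0.05 * abs(velocity)


def plan(state, goal, horizon=5, choices=(-1.0, 0.0, 1.0)):
    """Exhaustively imagine every action sequence; return the cheapest one."""
    return min(product(choices, repeat=horizon),
               key=lambda actions: imagined_cost(state, actions, goal))


state, goal = (0.0, 0.0), 1.0
for _ in range(30):
    best_actions = plan(state, goal)
    # Execute only the first planned action, then replan from the new state.
    state = model_step(state, best_actions[0])
print(state)
```

Exhaustive search is only feasible because the action set and horizon are tiny. Larger problems would need sampling or gradient-based planners, but the structure (imagine, score, act, replan) stays the same.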

Companies that rely on advanced simulation platforms, such as those used for autonomous vehicle testing or complex warehouse automation, are rapidly adopting these predictive frameworks. NVIDIA’s Isaac Sim, which focuses heavily on realistic physical modeling, exemplifies the industry’s push toward this level of simulation fidelity, where World Models serve as the foundation for training agents.

Actionable Insight for Automation: Investing in simulation environments that can host and train agents using these sophisticated World Models can provide a significant competitive advantage, allowing companies to iterate on hardware and software designs far faster than physical prototyping allows.

Scaling Intelligence Through Synthetic Reality

Perhaps the greatest hidden benefit of robust World Models is their ability to generate limitless, perfectly labeled training data. This addresses one of AI's most persistent bottlenecks: the cost and time associated with collecting real-world data.

Synthetic Data: Training Without Limits

If an AI can simulate a thousand different scenarios of a complex robotic failure, it can learn how to avoid that failure without ever risking a physical machine. This reliance on synthetic data is a massive trend, validated by market analysts recognizing its essential role in scaling AI development.

The World Model acts as the ultimate simulator. It generates scenarios based on the physics it has learned, allowing downstream AI models (like those controlling motor outputs) to train until they are near-perfect before deployment. This significantly improves training efficiency and robustness.
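As a rough sketch of the data-generation loop described above, the snippet below uses a hand-written simulator as a stand-in for a learned world model and samples randomized scenarios from it. Every row comes with its label for free, because the simulator computes the outcome. The function names, parameter ranges, and the "stopping distance" task are assumptions chosen for illustration.

```python
import random


def simulate_stop_distance(speed, decay, dt=0.1, min_speed=1e-3):
    """Stand-in for a learned world model: roll a pushed object until it
    has (nearly) stopped and return how far it travelled."""
    distance = 0.0
    while speed > min_speed:
        distance += speed * dt
        speed *= decay  # decay < 1, so the loop always terminates
    return distance


def generate_dataset(n, seed=0):
    """Sample n randomized scenarios and label each with the simulated outcome."""
    rng = random.Random(seed)  # seeded so the dataset is reproducible
    rows = []
    for _ in range(n):
        speed = rng.uniform(0.5, 3.0)    # initial push speed, m/s
        decay = rng.uniform(0.80, 0.98)  # surface slipperiness
        rows.append({
            "speed": speed,
            "decay": decay,
            "stop_distance": simulate_stop_distance(speed, decay),
        })
    return rows


dataset = generate_dataset(1000)
print(len(dataset), dataset[0])
```

A downstream model, such as a controller that must choose how hard to push, could then be trained on `dataset` without a single physical trial. The quality of that training is bounded by how faithful the simulator is.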

As Gartner notes, synthetic data is becoming essential for AI success. World Models are the mechanism by which the highest quality, context-aware synthetic data can be produced for highly dynamic tasks.

Implications: What This Means for the Future of AI and Society

The convergence of 4D modeling and World Models signals that AI systems will soon graduate from being excellent calculators to competent planners.

1. Common Sense and Robustness

The primary implication is a move away from brittle AI. Today’s specialized models often fail spectacularly when faced with novel inputs outside their training distribution. A system with a mature World Model, however, captures the fundamental principles of its domain. If an input is novel, it can use its learned physics to *guess* the outcome intelligently rather than collapsing.

2. Personalized Digital Twins

In personalized medicine or customized manufacturing, World Models can be quickly tailored to an individual’s specific environment (e.g., the unique wear patterns of a single machine or the specific physiology of a patient). This creates hyper-accurate "digital twins" capable of running risk-free "what-if" scenarios.
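A digital twin in this sense has two parts: calibrating a general model to one individual's measurements, then asking "what-if" questions of the calibrated model. The sketch below does both for a single machine. It assumes a deliberately simple, hypothetical wear model (wear grows linearly with operating hours and with load). The measurements are made up for the example.

```python
def fit_wear_rate(hours, wear_mm):
    """Calibrate to one machine: least-squares slope through the origin,
    so that wear_mm is approximately rate * hours."""
    return sum(h * w for h, w in zip(hours, wear_mm)) / sum(h * h for h in hours)


def hours_until(limit_mm, rate, load_factor=1.0):
    """What-if query: operating hours until wear reaches limit_mm,
    assuming wear scales linearly with load_factor."""
    return limit_mm / (rate * load_factor)


# Illustrative measurements from one specific machine.
hours = [100, 200, 300]
wear_mm = [0.02, 0.04, 0.06]

rate = fit_wear_rate(hours, wear_mm)
print(hours_until(0.5, rate))                    # at the current load
print(hours_until(0.5, rate, load_factor=1.25))  # what if load rises by 25%?
```

The what-if query costs nothing to run and puts no hardware at risk, which is the appeal of the approach. A realistic twin would replace the linear wear assumption with a learned model of the machine's dynamics.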

3. New Frontiers in Creative AI

While we focus heavily on robotics, the creative applications are also vast. Imagine an AI that authors a novel while keeping character motivation and physical-world reactions consistent, or an AI game designer that can instantly generate realistic, explorable 3D worlds that obey consistent rules.

Actionable Takeaways for Technology Leaders

For organizations looking to capitalize on this next wave of intelligence, focus must shift from pure data ingestion to predictive internal capability:

- Prioritize Simulation Infrastructure: Assess your current simulation tools. Are they merely rendering graphics, or are they capable of hosting and evaluating agents based on predictive internal models? Upgrading simulation capabilities is now equivalent to upgrading your R&D lab.

- Embrace Predictive Architectures: Look beyond standard Transformer setups for sequential or physical tasks. Begin researching and experimenting with architectures explicitly designed for predictive state representation, aligning with the JEPA philosophy advocated by LeCun.

- Re-evaluate Data Sourcing: Shift resources away from purely gathering more real-world data toward generating highly diverse, boundary-testing synthetic data using world models. This lowers acquisition costs and rapidly expands the agent's experiential learning space.

The journey to Artificial General Intelligence is often debated, but the path forward seems increasingly clear: it must be paved with systems that can accurately model and predict the dynamics of the universe they inhabit. The DeepMind D4RT project, by aggressively tackling 4D spatiotemporal reasoning within the World Model framework, is not just an interesting piece of research; it is laying the foundation for the next generation of truly intelligent, adaptable, and autonomous AI.