The AI Leap: Why 4D World Models Are the Next Frontier Beyond LLMs

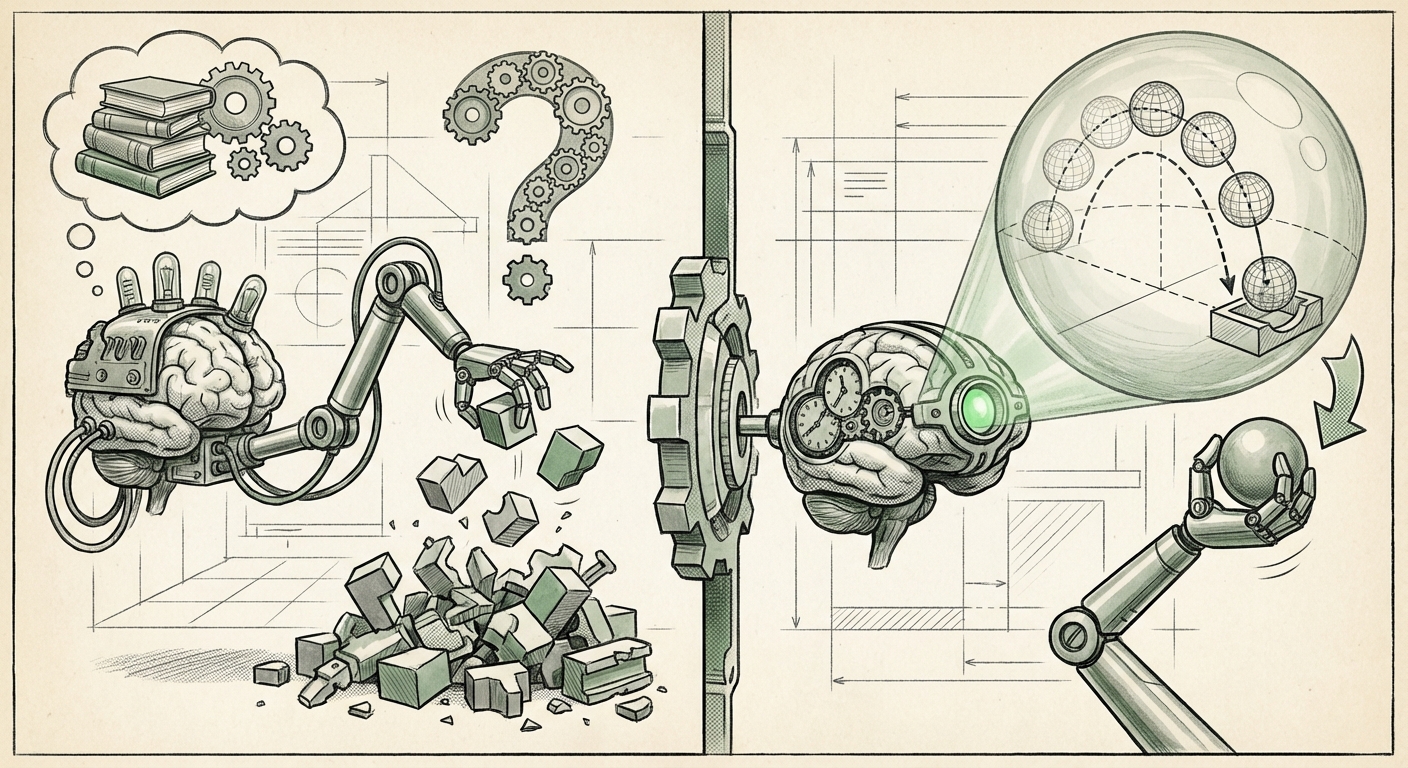

For the last few years, the world of Artificial Intelligence has been dominated by Large Language Models (LLMs), systems that are masters of text, code, and static knowledge. While revolutionary, LLMs fundamentally operate in a space devoid of physical consequence. They do not feel gravity, track the arc of a thrown ball, or experience inertia. They are trained to predict the next token of text, not the next physical state.

A major shift is underway toward World Models that capture 4D dynamics: the three dimensions of space plus time. Recent work such as DeepMind's D4RT points to AI systems that learn to perceive and predict a continuous, changing reality rather than a static snapshot. This isn't just about creating better video games; it is about building agents that can interact with, learn from, and ultimately master the physical world.

What Are World Models and Why Do They Matter?

Imagine trying to learn to cook by only reading recipes versus learning by actually watching the ingredients change state over time—boiling, browning, solidifying. World Models provide the AI with the "watching" capability.

In simple terms, a World Model is an AI's internal, simplified blueprint of reality. Instead of relying solely on trial and error in the real world (which is slow and expensive), the AI builds a predictive simulation inside its own architecture. By observing data, it tries to learn regularities such as basic physics, object permanence, and cause and effect. The idea is closely related to predictive coding, the theory that the brain (or an AI) constantly predicts what it will sense next and learns by minimizing the gap between prediction and observation, its "surprise."
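
To make "learning by minimizing prediction error" concrete, here is a deliberately tiny sketch. It assumes a hypothetical one-dimensional system whose hidden rule is x[t+1] = a*x[t] + b (think of a cup of coffee cooling toward room temperature). The "world model" is just the pair (a, b), fitted by least squares so that its one-step predictions are as unsurprising as possible. Real world models replace this with deep networks over images and actions; `observe`, `fit_dynamics`, and `mean_surprise` are illustrative names, not part of any library.

```python
import random

def observe(a_true=0.9, b_true=0.5, x0=10.0, steps=50, noise=0.05, seed=0):
    """Noisy observations of a hidden rule x[t+1] = a*x[t] + b (the 'world')."""
    rng = random.Random(seed)
    xs = [x0]
    for _ in range(steps):
        xs.append(a_true * xs[-1] + b_true + rng.gauss(0.0, noise))
    return xs

def fit_dynamics(xs):
    """Least-squares fit of x[t+1] ~ a*x[t] + b: the (a, b) with minimal squared prediction error."""
    prev, nxt = xs[:-1], xs[1:]
    n = len(prev)
    mean_x, mean_y = sum(prev) / n, sum(nxt) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(prev, nxt))
    var = sum((x - mean_x) ** 2 for x in prev)
    a = cov / var
    return a, mean_y - a * mean_x

def mean_surprise(predict, xs):
    """Average squared gap between what the model predicted and what was observed next."""
    pairs = list(zip(xs[:-1], xs[1:]))
    return sum((predict(x) - y) ** 2 for x, y in pairs) / len(pairs)

xs = observe()
a, b = fit_dynamics(xs)
learned = mean_surprise(lambda x: a * x + b, xs)
naive = mean_surprise(lambda x: x, xs)   # baseline model: "nothing ever changes"
print(f"a={a:.3f}, b={b:.3f}, surprise: learned={learned:.4f} vs naive={naive:.4f}")
```

The learned model recovers values close to the hidden a = 0.9 and b = 0.5, and its average surprise is lower than the "nothing changes" baseline's, because least squares minimizes exactly that quantity.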

The Efficiency Dividend: Learning from Imagination

The core excitement surrounding World Models is sample efficiency. Many advanced AI systems, particularly those trained with model-free reinforcement learning, need millions of interactions with their environment. For a physical robot hand learning to grasp an object, that means thousands of dropped items and worn or broken components along the way.

When an agent possesses a World Model, it can practice complex maneuvers entirely within its internal simulation, imagining thousands or millions of attempts without touching reality. This idea runs through model-based reinforcement learning and is central to Yann LeCun's argument that predictive world models are a missing ingredient for more general machine intelligence. Proponents see World Models as the bridge between data efficiency and general intelligence: if an AI can learn complex tasks faster by simulating outcomes internally, the cost and time barriers for advanced robotics drop sharply.
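
A classic way to turn a learned model into "imagined practice" is Sutton's Dyna architecture. The sketch below is Dyna-Q on a hypothetical ten-cell corridor; the environment, names, and parameters are illustrative, and this is not the method used by D4RT. Every real step is stored in a tiny table-based world model, and the agent then replays `planning_steps` imagined transitions from that model to update its value estimates without touching the "real" environment.

```python
import random

N_STATES, GOAL = 10, 9        # hypothetical corridor: cells 0..9, reward on reaching cell 9
ACTIONS = (-1, +1)            # step left or right

def real_step(s, a):
    """The 'real world'. Costly for a physical robot; trivial here."""
    s2 = min(max(s + a, 0), N_STATES - 1)
    return (1.0 if s2 == GOAL else 0.0), s2

def dyna_q(episodes=5, planning_steps=50, alpha=0.5, gamma=0.95, eps=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    model = {}                # learned world model: (state, action) -> (reward, next state)
    real_steps = 0

    def greedy(s):
        best = max(q[(s, a)] for a in ACTIONS)
        return rng.choice([a for a in ACTIONS if q[(s, a)] == best])  # break ties randomly

    def update(s, a, r, s2):
        future = 0.0 if s2 == GOAL else gamma * max(q[(s2, b)] for b in ACTIONS)
        q[(s, a)] += alpha * (r + future - q[(s, a)])

    for _ in range(episodes):
        s = 0
        while s != GOAL:
            a = rng.choice(ACTIONS) if rng.random() < eps else greedy(s)
            r, s2 = real_step(s, a)            # one real (expensive) interaction
            real_steps += 1
            update(s, a, r, s2)
            model[(s, a)] = (r, s2)            # remember what the world did
            for _ in range(planning_steps):    # imagined practice, replayed from the model
                ps, pa = rng.choice(list(model))
                pr, ps2 = model[(ps, pa)]
                update(ps, pa, pr, ps2)
            s = s2
    return q, real_steps

q, used = dyna_q()
print("real interactions used:", used)
```

Setting `planning_steps=0` turns this into plain Q-learning, which typically needs more real interactions before the greedy policy walks straight to the goal.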

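For the technical audience: this internal simulation allows planning in a compact latent space. Rather than relying only on a reactive policy learned from real experience, the agent can search over candidate action sequences inside the model and act on the most promising one, which typically requires far fewer real interactions.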
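
A minimal sketch of planning in a latent space, assuming a model has already been learned. `encode`, `latent_step`, and `latent_reward` are hypothetical hand-written stand-ins for trained networks, and the planner is plain random-shooting model predictive control: imagine many random action sequences, keep the best, execute only its first action, then replan. Published systems often use stronger optimizers such as the cross-entropy method, but the loop has the same shape.

```python
import random

# Hypothetical stand-ins for trained networks; a real world model would learn all three.
def encode(obs):
    """Map a raw observation to a compact latent state (here: keep position and velocity)."""
    return (obs[0], obs[1])

def latent_step(z, action, dt=0.1):
    """Predicted next latent state: a point mass pushed by `action`."""
    pos, vel = z
    vel = vel + action * dt
    return (pos + vel * dt, vel)

def latent_reward(z, target=1.0):
    """Higher when the object is at the target and nearly still."""
    pos, vel = z
    return -((pos - target) ** 2) - 0.1 * vel ** 2

def plan(obs, horizon=15, candidates=200, seed=0):
    """Random-shooting MPC: score imagined action sequences, return the best first action."""
    rng = random.Random(seed)
    z0 = encode(obs)
    best_score, best_first = float("-inf"), 0.0
    for _ in range(candidates):
        actions = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        z, score = z0, 0.0
        for a in actions:                 # the rollout happens entirely "in imagination"
            z = latent_step(z, a)
            score += latent_reward(z)
        if score > best_score:
            best_score, best_first = score, actions[0]
    return best_first

# Closed loop: replan every step. For simplicity the "real" system obeys the same physics.
obs = (0.0, 0.0, 0.42)   # position, velocity, and an extra sensor channel the encoder ignores
for t in range(80):
    pos, vel = latent_step((obs[0], obs[1]), plan(obs, seed=t))
    obs = (pos, vel, obs[2])
print(f"final position {obs[0]:.2f} (target 1.0)")
```

Because only the first action is executed before replanning, small errors in the imagined rollouts get corrected by fresh observations at every step.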

The Crucial Fourth Dimension: Moving Beyond Static 3D

When we talk about "3D," we refer to space: height, width, and depth. But the real world is dynamic. A static 3D snapshot records where a chair is; it says nothing about how it got there or what happens next. Push a chair in the real world and the outcome unfolds over time: it accelerates, slides against the floor, and comes to rest as friction and gravity act on it.

Adding the fourth dimension, time, is the focus of DeepMind's D4RT and related research. The goal is not just agents that navigate static mazes but systems that model dynamics: momentum, friction, elasticity, and fluid flow, all while making decisions in real time.
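
To see what "modeling dynamics" means at the smallest scale, here is a sketch that tracks one object's state through time: a block sliding across a level floor, slowed by Coulomb friction with a hypothetical coefficient `mu`. It is an illustration under simple assumptions, not how D4RT works. A 3D snapshot would give a single position; the 4D view is the whole list of (t, x, v) samples, which should agree with the closed-form stopping time v0/(mu*g) and distance v0^2/(2*mu*g).

```python
G = 9.81  # gravitational acceleration, m/s^2

def slide(v0, mu, dt=1e-4):
    """Integrate a block sliding on a level floor with Coulomb friction until it stops.

    Returns (t, x, v) samples: the object's state as a function of time, which a
    static 3D snapshot cannot capture."""
    t, x, v = 0.0, 0.0, v0
    traj = [(t, x, v)]
    decel = mu * G                        # kinetic friction force divided by mass
    while v > 0.0:
        v_new = max(v - decel * dt, 0.0)  # friction drains momentum but never reverses motion
        x += 0.5 * (v + v_new) * dt       # trapezoidal rule: exact except on the final partial step
        v = v_new
        t += dt
        traj.append((t, x, v))
    return traj

traj = slide(v0=2.0, mu=0.5)
t_stop, x_stop, _ = traj[-1]
print(f"stops after {t_stop:.3f} s at {x_stop:.3f} m")
```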

Grounding Theory in Physics: The Robotics Challenge

This pursuit of 4D understanding is directly relevant to the challenges facing embodied AI. Moving AI from simulated environments to the messy real world (the "sim-to-real" gap) is widely considered one of the biggest hurdles in robotics. A slightly inaccurate model of friction can cause a complex robot arm to fail catastrophically.
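
A back-of-the-envelope calculation shows why small friction errors matter. Assuming an object that slides to rest under Coulomb friction from an initial speed $v_0$, its stopping distance is $d = v_0^2 / (2\mu g)$. The numbers below are illustrative, not measured robot data:

$$
d = \frac{v_0^2}{2\mu g}, \qquad
d_{\text{sim}} = \frac{(2\ \text{m/s})^2}{2 \cdot 0.5 \cdot 9.81\ \text{m/s}^2} \approx 0.408\ \text{m}, \qquad
d_{\text{real}} = \frac{(2\ \text{m/s})^2}{2 \cdot 0.4 \cdot 9.81\ \text{m/s}^2} \approx 0.510\ \text{m}
$$

Because $d$ scales with $1/\mu$, a simulator that assumes $\mu = 0.5$ when the real surface has $\mu = 0.4$ underestimates the slide by a factor of $0.5/0.4 = 1.25$: the object travels about 10 cm, or 25%, farther than planned.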

D4RT points toward integrating a learned dynamics model directly into the control loop, which would make an agent far more adaptive: it can account for small discrepancies between its predictions and reality almost as soon as they appear. This temporal awareness means future robots won't just execute pre-programmed paths; they will adjust their grip to the subtle sway of an object and their footing to the slight unevenness of the floor they are crossing.
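
One simple way to picture "correcting for discrepancies almost as soon as they appear" is an online update that nudges a model parameter after every prediction error. The sketch below assumes a hypothetical sliding object whose only unknown is its friction coefficient; `adapt_friction` and its `gain` are illustrative choices (a basic least-mean-squares-style correction), not the mechanism D4RT uses.

```python
import random

G, DT = 9.81, 0.01   # gravity (m/s^2) and control period (s)

def adapt_friction(mu_true, mu_guess, steps=50, gain=0.3, noise=0.0, seed=0):
    """Refine a friction estimate online from one-step velocity prediction errors."""
    rng = random.Random(seed)
    mu_hat, v = mu_guess, 5.0          # hypothetical object launched at 5 m/s
    history = [mu_hat]
    for _ in range(steps):
        v_pred = v - mu_hat * G * DT                          # what the model expects
        v_obs = v - mu_true * G * DT + rng.gauss(0.0, noise)  # what actually happens
        error = v_pred - v_obs             # > 0 means the model underestimates friction
        mu_hat += gain * error / (G * DT)  # move the estimate part of the way toward reality
        history.append(mu_hat)
        v = v_obs
    return history

estimates = adapt_friction(mu_true=0.4, mu_guess=0.5)
print(f"initial guess {estimates[0]:.2f}, after 50 steps {estimates[-1]:.4f}")
```

Without sensor noise, the estimation error shrinks by a factor of (1 - gain) every step; a smaller gain trades slower adaptation for less sensitivity to noisy measurements.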

For the engineering audience: this implies a move toward neural network architectures that specialize in temporal modeling, such as temporal convolutions and trajectory forecasting, and away from perception systems that process each frame in isolation.
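
As a toy illustration of temporal convolution for trajectory forecasting, the sketch below slides a fixed causal kernel over the most recent positions to predict the next one, then feeds its own forecasts back in. The kernel [3, -3, 1] is hand-picked: it extrapolates any constant-acceleration motion exactly, since the third difference of a quadratic is zero. A temporal convolutional network would instead learn many such kernels, stack them with nonlinearities, and run them over multichannel sensor data. All names here are hypothetical.

```python
def forecast_next(history, kernel):
    """Causal 1-D convolution step: weight the newest len(kernel) samples, never future ones."""
    window = history[-len(kernel):][::-1]        # newest sample first, to match kernel[0]
    return sum(w * x for w, x in zip(kernel, window))

def rollout(history, kernel, steps):
    """Autoregressive forecast: each prediction becomes input for the next one."""
    traj = list(history)
    for _ in range(steps):
        traj.append(forecast_next(traj, kernel))
    return traj[len(history):]

# x[t+1] = 3x[t] - 3x[t-1] + x[t-2] holds exactly for samples of any quadratic in t.
QUADRATIC_KERNEL = [3.0, -3.0, 1.0]

# A ball thrown straight up at 10 m/s, sampled every 0.05 s: observe 3 frames, forecast 10.
g, v0, dt = 9.81, 10.0, 0.05

def height(t):
    return v0 * t - 0.5 * g * t * t

observed = [height(k * dt) for k in range(3)]
predicted = rollout(observed, QUADRATIC_KERNEL, steps=10)
```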

The Competitive Push: Validating the Trend Across Industry

Significant technological shifts are rarely isolated to one lab. The excitement around DeepMind's results is amplified by comparable investment and research focus across the AI landscape, which suggests this is widely recognized as the "next frontier."

The Race Beyond Text Generation

While LLMs remain powerful, industry watchers and venture capitalists are increasingly focused on where tangible, real-world autonomy will emerge, and funding has been shifting toward multimodal and embodied intelligence.

Competitors are developing analogous capabilities, albeit sometimes under different banners. Meta, for instance, pushes boundaries in generative models like **Make-A-Video** and **Emu**. While these focus on generating realistic image sequences, the underlying requirement is the same: a sophisticated internal representation of temporal coherence and physical plausibility. Such a model must capture that an elbow bends in only one direction and that water splashes in characteristic ways when something is dropped into it.

When major labs like Google DeepMind, Meta, and OpenAI focus resources on agents that can interact with complex simulations (like robotics tasks or advanced gaming environments), it signals that the era of purely text-based AI is maturing and that the next decade belongs to embodied AI.

Practical Implications: What This Means for Business and Society

The maturation of 4D World Models is not an abstract academic pursuit; it carries profound implications for how industries operate.

1. Autonomous Robotics and Manufacturing

This is the most direct beneficiary. Imagine a warehouse robot that doesn't need weeks of reprogramming every time a product box changes shape or the lighting shifts. A robot with a robust 4D World Model could observe a new item, mentally simulate how to pick it up under the current lighting and friction conditions, and execute the task with high confidence on the first try. That would drastically accelerate automation deployment.

2. Hyper-Realistic Simulation and Digital Twins

For industries like aerospace, automotive design, and urban planning, simulation fidelity is paramount. World Models allow for the creation of "digital twins" that closely track the behavior of their real-world counterparts, factoring in chaotic variables like weather, traffic flow, and material fatigue. Engineers can test extreme scenarios safely and quickly, leading to safer, more resilient physical systems.

3. Scientific Discovery and Drug Design

In chemistry and biology, simulating molecular interactions is crucial. A true 4D model could simulate how a complex protein folds over time or how a new catalyst interacts with reactants under varying pressures. This capability could speed up hypothesis testing by allowing scientists to run large numbers of physical experiments entirely in silico (on a computer).

The Path Ahead: Actionable Insights for Navigating the Shift

The move toward agents that understand time and dynamics is inevitable. How should organizations prepare?

- Invest in Simulation Infrastructure: The primary testing ground for 4D models will be advanced simulation platforms (often requiring GPU clusters). Companies that already utilize digital twins or high-fidelity physics engines are best positioned to adopt D4RT-like agents quickly.

- Prioritize Multimodal Data Pipelines: World Models thrive on rich, temporally tagged data (video, sensor readings, force feedback). Data collection strategies must shift from simple labeled datasets to complex, synchronized streams that capture the continuous evolution of the environment.

- Focus on Latent Space Understanding: For technical teams, the focus needs to broaden from scaling static next-token predictors (like LLMs) toward learning latent dynamics, the internal representation of how the world changes. This requires expertise in variational autoencoders and recurrent or other sequence architectures that specialize in prediction.

- Re-evaluate Real-World Deployment: Recognize that the "last mile" of deployment could become far easier. If an AI can learn complex motor skills in simulation (thanks to 4D modeling), the time needed to deploy that skill on a physical robot could drop from months to days.
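
Synchronizing streams is less glamorous than modeling, but it is where many multimodal pipelines break. A minimal sketch, assuming sorted timestamps in seconds: each video frame is paired with the force reading nearest in time, and frames without a reading inside a tolerance are marked missing instead of being matched to stale data. `align_nearest` is a hypothetical helper; in pandas, `pandas.merge_asof` with `direction="nearest"` and a `tolerance` performs a similar join on DataFrames.

```python
from bisect import bisect_left

def align_nearest(frame_times, sensor_times, sensor_values, tolerance):
    """Pair each frame timestamp with the nearest sensor reading (sensor_times sorted ascending).

    Returns (frame_time, value) tuples; value is None when no reading lies within `tolerance`."""
    aligned = []
    for t in frame_times:
        i = bisect_left(sensor_times, t)                  # first reading at or after t
        neighbours = [j for j in (i - 1, i) if 0 <= j < len(sensor_times)]
        best = min(neighbours, key=lambda j: abs(sensor_times[j] - t), default=None)
        if best is not None and abs(sensor_times[best] - t) <= tolerance:
            aligned.append((t, sensor_values[best]))
        else:
            aligned.append((t, None))                     # gap in the stream: don't guess
    return aligned

# Hypothetical example: video frame times vs. a force sensor sampled at irregular times.
frames = [0.0, 0.034, 0.5]
force_t = [0.0, 0.01, 0.03, 0.04]
force_n = [0.0, 0.8, 1.6, 1.7]   # newtons
pairs = align_nearest(frames, force_t, force_n, tolerance=0.01)
```

Returning `None` for unmatched frames keeps gaps visible, so a downstream world model can mask them rather than learn from misaligned data.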

Conclusion: From Talking Machines to Acting Machines

The development of robust, 4D World Models marks a fundamental inflection point in AI evolution. It signifies a necessary departure from systems that merely mimic human communication toward systems that truly understand physical interaction. DeepMind’s D4RT is a powerful marker showing that the tools for creating truly autonomous, context-aware, and physically intelligent agents are now in active development.

The AI of tomorrow won't just write poetry about a ball rolling down a hill; it will calculate how long the ball takes to roll, anticipate where it will stop, and move to catch it. This transition from passive knowledge representation to active, predictive interaction is the defining technological trend of the coming decade.