The AI Operating System: Analyzing the Leap from Language Model to Autonomous Agent Platform

Recent discussion of hypothetical next-generation models, such as the speculated "GPT-5.4," is moving beyond simple chatbots and toward something more profound: an AI that functions less like a search engine or text generator and more like a true **Operating System (OS)** for our digital lives. If that framing holds, it forces a reassessment of the architecture, security, and utility of AI.

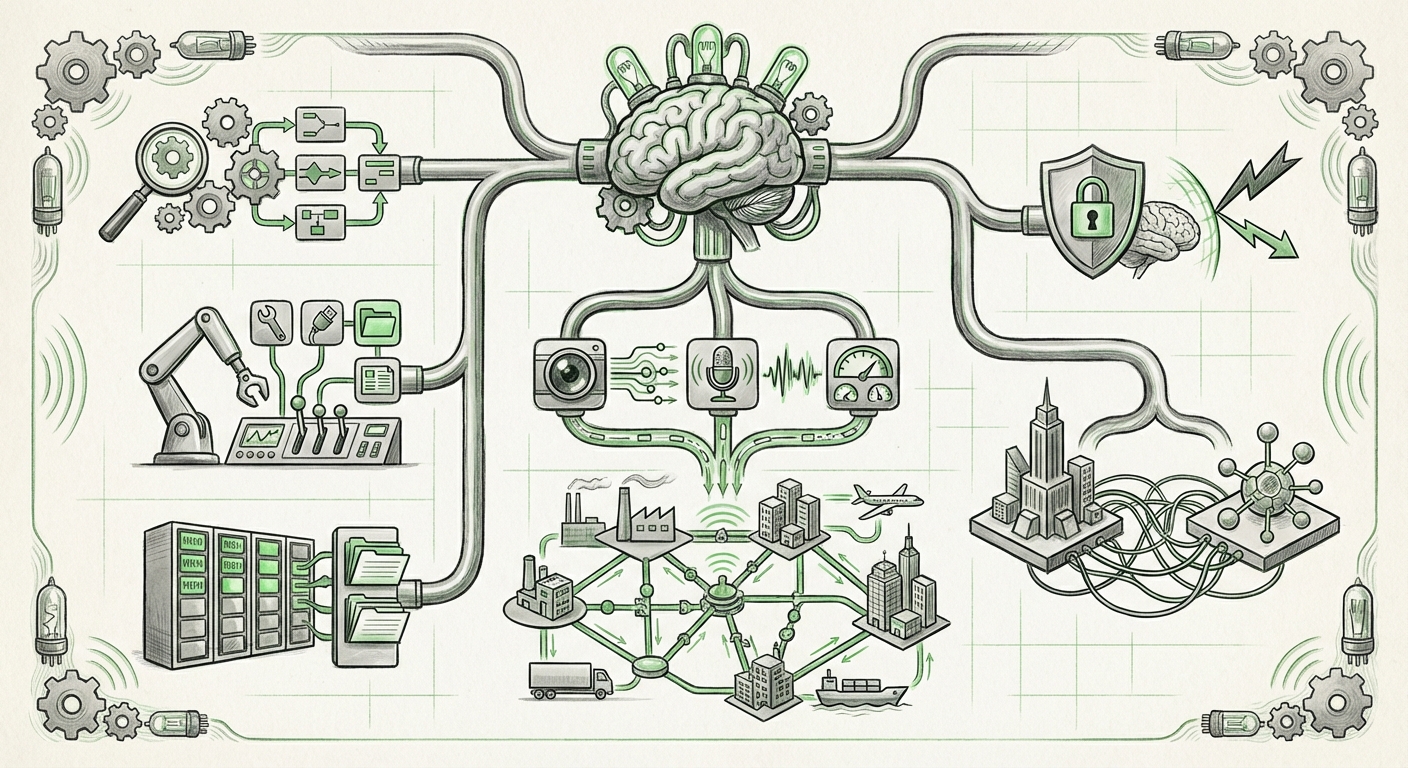

If an LLM starts acting as an OS, it means it has achieved a level of sophistication in planning, tool utilization, memory management, and secure execution that allows it to manage complex, multi-step tasks autonomously—much like Windows manages files and applications, or Linux manages hardware and processes.

The Evolution: From Generation to Agency

For years, the primary function of Large Language Models (LLMs) was pattern recognition and content generation. You asked a question, you got an answer. The context window held the immediate conversation, but once the session ended, the model’s awareness of that task evaporated.

The move toward an "AI OS" is predicated on **Agentic AI**. An agent is an AI designed not just to talk, but to act. To act effectively, it needs three core capabilities:

- Planning & Reasoning: Breaking down a vague goal (e.g., "Plan my entire vacation budget and book the flights") into a long sequence of discrete, executable steps.

- Tool Use: Knowing which external software (APIs, databases, coding environments) to use for each step.

- State Management (Memory): Remembering what it has done, what succeeded, what failed, and maintaining a consistent view of the task over long periods, potentially spanning days or weeks.
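The three capabilities above can be sketched as a single loop. This is a minimal illustration, not a real framework: `plan`, `TOOLS`, `AgentState`, and `run_agent` are hypothetical names, the planner returns a hard-coded list where a real agent would ask the model to decompose the goal, and each tool is a stand-in for an external API call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    """State management: a record of which steps succeeded and which failed."""
    completed: list[str] = field(default_factory=list)
    failed: list[str] = field(default_factory=list)


def plan(goal: str) -> list[tuple[str, str]]:
    """Planning (hypothetical): a real agent would have the model decompose the goal."""
    return [("search_flights", goal), ("estimate_budget", goal)]


# Tool use (hypothetical): each entry stands in for an external API call.
TOOLS: dict[str, Callable[[str], str]] = {
    "search_flights": lambda arg: f"flights found for: {arg}",
    "estimate_budget": lambda arg: f"budget drafted for: {arg}",
}


def run_agent(goal: str) -> AgentState:
    """Execute each planned step and record the outcome in the state."""
    state = AgentState()
    for tool_name, arg in plan(goal):
        tool = TOOLS.get(tool_name)
        if tool is None:
            state.failed.append(tool_name)  # the plan named a tool we do not have
            continue
        try:
            tool(arg)
            state.completed.append(tool_name)
        except Exception:
            state.failed.append(tool_name)  # keep going; the state remembers the failure
    return state
```

The point of the sketch is the separation of concerns: the planner proposes steps, the tool table constrains what can actually run, and the state object is the only thing that survives between steps.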

The Technical Hurdle: Memory as the New Frontier

The single greatest challenge in building an AI OS is memory. Traditional LLMs have a fixed context window, which acts as a short-term memory limit. An OS, however, requires memory that is persistent, reliable, and not bounded by a single session. Developers of agent frameworks are tackling this through external memory structures.

This means the LLM engine itself is the *central processor*, but the OS function relies on external, dynamically updated databases, often vector databases, that allow the model to query its past actions, learnings, and user preferences. This is analogous to how a modern computer uses RAM for short-term work and a hard drive for long-term storage: the context window plays the role of RAM, and the external store plays the role of the disk. To manage large projects, an AI OS needs persistent, searchable memory that lets it recall, for example, a configuration setting established six months ago.
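To make the external-memory idea concrete, here is a minimal sketch of similarity-based recall. Everything in it is an assumption for illustration: `MemoryStore`, `embed`, and `cosine` are hypothetical names, the "embedding" is a plain word count rather than a learned model, and the store lives in a Python list rather than in a real vector database.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    """In-memory stand-in for a persistent, searchable vector store."""

    def __init__(self) -> None:
        self._items: list[tuple[Counter, str]] = []

    def remember(self, text: str) -> None:
        """Store the text alongside its vector."""
        self._items.append((embed(text), text))

    def recall(self, query: str, k: int = 1) -> list[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]
```

In this picture, the agent would call `recall` before each step and paste the results into the context window, which is how a fact stored months earlier could re-enter the model's short-term view.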

Corroboration: The Industry is Pushing for System Control

The concept of an AI OS is not isolated to one lab; it represents the current frontier of competitive development. Competing labs are explicitly framing their models as comprehensive platforms capable of system-level integration.

When leading models emphasize "native tool use," "function calling," and robust API integration, they are signaling their intent to manage complex digital environments. An LLM integrated deeply into a business’s cloud infrastructure, managing ticketing systems, inventory, and customer interactions simultaneously, is functionally acting as an OS for those specific digital workflows.
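Function calling generally works by having the model emit a structured request that surrounding code validates and executes. The sketch below assumes a simple JSON shape with `name` and `arguments` keys; that shape, the `dispatch` function, and the two tools are hypothetical and do not reproduce any particular vendor's API.

```python
import json
from typing import Any, Callable


def create_ticket(title: str) -> dict[str, Any]:
    """Hypothetical ticketing tool; a real one would call the ticketing system's API."""
    return {"ticket": title, "status": "open"}


def check_inventory(sku: str) -> dict[str, Any]:
    """Hypothetical inventory tool; returns a fixed placeholder quantity."""
    return {"sku": sku, "quantity": 0}


# Only tools listed here can ever be invoked, whatever the model outputs.
REGISTRY: dict[str, Callable[..., dict[str, Any]]] = {
    "create_ticket": create_ticket,
    "check_inventory": check_inventory,
}


def dispatch(model_output: str) -> dict[str, Any]:
    """Parse a model-emitted tool call and run it, returning errors as data."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return {"error": "output was not valid JSON"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}
    name = call.get("name")
    if not isinstance(name, str) or name not in REGISTRY:
        return {"error": f"unknown tool: {name!r}"}
    arguments = call.get("arguments", {})
    if not isinstance(arguments, dict):
        return {"error": "arguments must be a JSON object"}
    try:
        return REGISTRY[name](**arguments)
    except TypeError as exc:  # missing or unexpected argument names
        return {"error": f"bad arguments: {exc}"}
```

Returning errors as ordinary values, rather than raising, lets the agent feed the failure back to the model and try a corrected call.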

Multimodality as an Enabler for True Control

A key differentiator for an OS is its ability to process diverse inputs. We are moving rapidly beyond text. The future AI OS must seamlessly integrate vision, audio, sensor data, and code execution into its planning. Imagine telling the AI OS:

- "Review this photo of the server rack, identify the unit showing a red warning light, and execute the documented reboot sequence for that specific unit."

This requires the model to understand visual data (the photo), reason about physical states (the warning light), and execute code (the reboot sequence). This deep, integrated multimodal reasoning is the foundation upon which system-level control can be built.

Implications for Business: The Automated Enterprise

For businesses, the arrival of the AI OS means the transition from using AI tools to deploying AI *systems*. This has profound practical implications:

1. Hyper-Automation of Knowledge Work

Current automation targets discrete tasks. An AI OS targets entire job roles or complex workflows. Instead of an AI drafting an email or summarizing a report, the AI OS will manage the entire project lifecycle: gathering initial data, coordinating inputs from different departments (using email and Slack tools), running preliminary analyses, scheduling follow-up meetings, and presenting the final deliverable for executive review.

2. Decentralized Decision-Making

If the model can reliably manage state and execute external code, it can be delegated significant operational authority. This frees human operators from monitoring low-level processes. The human shifts from being a participant in the process to being an auditor and setter of high-level goals. This requires immense trust in the AI’s internal logic.

3. The Platform Wars Intensify

Companies that succeed in building the dominant AI OS will create powerful ecosystems. If your primary work environment is managed by an AI that seamlessly integrates your communication, code repository, and financial software, switching providers becomes significantly more difficult. This creates powerful **vendor lock-in** based on the quality of the underlying agent scaffolding and memory architecture.

The Looming Shadow: Security and Governance

The power of an AI OS brings commensurate risk. An entity that can access and manipulate all connected digital resources is an unprecedented target for misuse or error.

The New Attack Surface: System-Level Prompt Injection

Security researchers have stressed that the security model must evolve drastically once an LLM acts as a system controller. In the context of an AI OS, vulnerabilities shift from simple output manipulation to system compromise:

- Goal Hijacking: An attacker doesn't just trick the AI into saying something untrue; they trick it into changing its core objective (e.g., making the financial management agent prioritize a malicious transaction).

- Privilege Escalation: If the AI OS has high access (e.g., root access to a development server), a well-crafted input could instruct it to bypass internal safeguards and expose sensitive code or data.

- Data Poisoning Through Memory: Corrupting the persistent memory stores that the AI relies on for state tracking can lead to catastrophic, long-term operational failures that are difficult to trace back to a single prompt.

The development of the AI OS mandates the development of **AI Firewalls**, which screen the inputs an agent receives and the actions it proposes, and **AI Sandboxes**, secure environments where the agent can execute tasks without jeopardizing the host system. The AI must be designed not only to achieve the goal but also to defend its own decision-making processes against unauthorized modification.
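One small piece of such a sandbox is a policy check that runs outside the model, so that no prompt can talk its way past it. The sketch below is an assumption-laden illustration: `PolicyGate`, `Action`, and `execute` are hypothetical names, and a path allowlist alone is not a real sandbox (it ignores symlinks, and production systems would add OS-level isolation).

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A tool invocation the agent proposes, checked before anything runs."""
    tool: str
    target: str


class PolicyGate:
    """Allow only listed tools, and only on paths under a single root directory."""

    def __init__(self, allowed_tools: frozenset[str], root: str) -> None:
        self.allowed_tools = allowed_tools
        self.root = posixpath.normpath(root)

    def permits(self, action: Action) -> bool:
        if action.tool not in self.allowed_tools:
            return False
        # Normalize first so "/sandbox/../etc" cannot slip past a prefix check.
        path = posixpath.normpath(action.target)
        return path == self.root or path.startswith(self.root + "/")


def execute(action: Action, gate: PolicyGate) -> str:
    """Run the action only if the gate permits it; otherwise refuse loudly."""
    if not gate.permits(action):
        raise PermissionError(f"blocked: {action.tool} on {action.target}")
    return f"ran {action.tool} on {action.target}"  # placeholder for the real tool call
```

Because the gate is ordinary code with a fixed allowlist, a hijacked goal or an escalation attempt still has to produce an action the gate accepts, which narrows what a successful injection can do.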

Actionable Insights for Leaders and Builders

Whether the speculated GPT-5.4 or a competitor adopts the OS moniker first, the underlying capabilities appear inevitable. How should leaders and developers prepare?

For Business Leaders: Redefine Delegation

Start small but think big. Identify workflows that are currently bottlenecked by sequential human handoffs and limited context. Pilot agent frameworks (like those explored in open-source research) on non-critical systems. The key metric to track is not task completion rate, but time-to-resolution for complex objectives.

For AI Architects and Engineers: Focus on Robustness

The immediate technical focus must shift from performance benchmarks (like MMLU scores) to reliability benchmarks. Develop rigorous testing suites for state retention, error recovery, and adversarial resistance. Your agent needs to handle failure gracefully—it must know how to self-diagnose and ask for human intervention when its confidence drops below a critical threshold, rather than simply crashing or continuing with flawed assumptions.
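The escalation rule described above can be expressed as a small check that runs after every step. This is a sketch under stated assumptions: `StepResult`, `Outcome`, and `review` are hypothetical names, the 0.7 threshold is arbitrary, and the confidence value is assumed to come from some calibrated source, which a model's self-reported confidence may not be.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Outcome(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"


@dataclass
class StepResult:
    """What the agent reports after attempting one step."""
    description: str
    confidence: float            # assumed to lie in [0, 1]
    error: Optional[str] = None  # set when the step raised or returned a failure


def review(result: StepResult, threshold: float = 0.7) -> tuple[Outcome, str]:
    """Decide whether the agent may continue or must hand off to a human."""
    if result.error is not None:
        return Outcome.ESCALATE, f"step failed: {result.error}"
    if result.confidence < threshold:
        return Outcome.ESCALATE, f"confidence {result.confidence:.2f} below {threshold:.2f}"
    return Outcome.PROCEED, "ok"
```

A reliability test suite could then feed `review` a mix of clean, failed, and low-confidence results and assert that nothing below the threshold is ever allowed to proceed.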

For Policy Makers: The Need for AI Accountability Layers

Regulatory frameworks must catch up. If an autonomous AI OS makes a costly, irreversible error, who is accountable? The developer, the deployer, or the AI itself? We need standardized auditing tools that can transparently review the agent’s reasoning chain (its "kernel logs") to establish clear accountability pathways.
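One way such "kernel logs" could be made auditable is a hash-chained log, where each entry commits to the one before it so that later edits are detectable. The sketch below is illustrative only: `AuditLog` and its field names are hypothetical, and a real deployment would also need to store the log somewhere the agent itself cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which every entry includes the hash of its predecessor."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, step: str, reasoning: str) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"step": step, "reasoning": reasoning, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are unbroken."""
        prev = GENESIS
        for entry in self.entries:
            body = {"step": entry["step"], "reasoning": entry["reasoning"], "prev": entry["prev"]}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

An auditor who holds only the final hash can confirm that the reasoning chain they are reading is the one the agent actually recorded, which is a precondition for assigning accountability.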

Conclusion: The Interface of the Next Decade

The concept of an LLM morphing into an Operating System is one of the most compelling technological narratives of the coming years. It suggests that the complexity of human-computer interaction (managing files, scheduling resources, coordinating disparate tools) is finally being abstracted away by a centralized, intelligent layer.

This transition promises unprecedented productivity gains, but it also requires a foundational rethinking of digital trust, security protocols, and human oversight. The next great platform war won't be fought over a browser or a mobile phone OS; it will be fought over who can engineer the most capable, trustworthy, and expansive AI Operating System.