The Visualization Revolution: How Interactive AI is Redefining Learning and Symbolic Reasoning

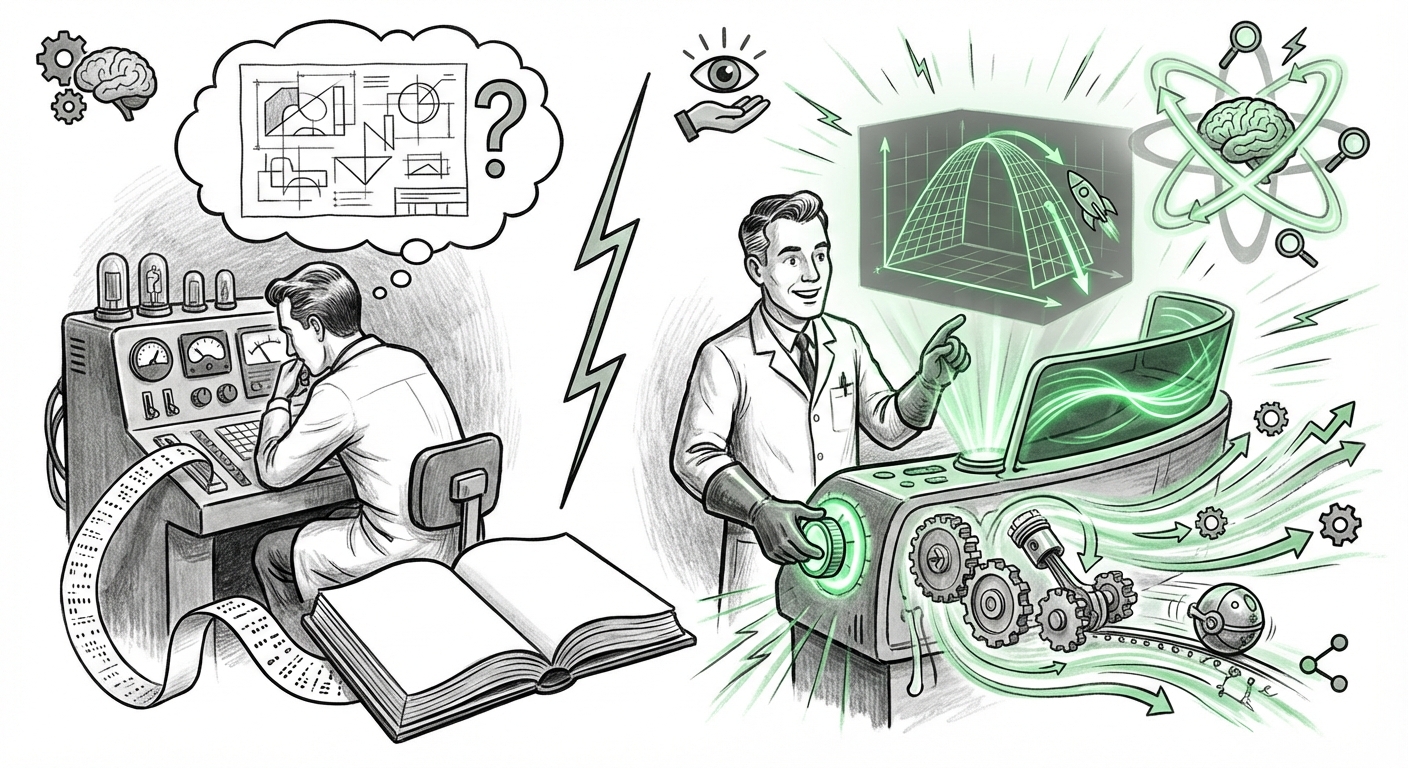

For years, the cutting edge of Generative AI has been defined by its mastery of language—producing coherent essays, complex code, and surprisingly human dialogue. This capability transformed Large Language Models (LLMs) into powerful *knowledge containers*. However, knowledge alone is often abstract. To truly understand the universe, from the parabola of a thrown object to the dynamics of a collapsing star, one needs more than text; one needs to see and interact.

The recent integration of interactive, real-time visualizations within platforms like ChatGPT for mathematics and physics marks a pivotal moment. This transition is not merely an aesthetic upgrade; it represents a fundamental shift in how AI reasons and communicates. We are moving from the era of the textual explanation to the era of embodied, experiential AI learning.

The Leap Beyond Text: From Explanation to Demonstration

Historically, if you asked an LLM about Kepler's laws of planetary motion, it would reply with a description drawn from its training data—a brilliant, yet static, block of text. While users could ask follow-up questions, the process remained sequential and narrative.

The new capability fundamentally changes this dynamic. Now, when a user queries a concept like projectile motion, the AI doesn't just describe the formula for trajectory; it *generates* an interactive graph. The user can immediately adjust the initial launch angle or velocity, and the resulting parabolic path updates instantly on the screen. This immediate, cause-and-effect feedback loop mirrors the scientific method itself.
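
To make the projectile example concrete, here is a minimal sketch of the calculation such a graph would redraw each time a slider moves. It assumes ideal, drag-free motion launched from ground level; the function name `trajectory` and its parameters are illustrative and not taken from any platform's actual implementation.

```python
import math


def trajectory(v0, angle_deg, g=9.81, samples=50):
    """Return (x, y) points along an ideal, drag-free projectile path.

    v0 is the launch speed in m/s and angle_deg the launch angle in degrees.
    """
    theta = math.radians(angle_deg)
    vx, vy = v0 * math.cos(theta), v0 * math.sin(theta)
    flight_time = 2 * vy / g  # time until the projectile returns to y = 0
    points = []
    for i in range(samples + 1):
        t = flight_time * i / samples
        points.append((vx * t, vy * t - 0.5 * g * t * t))
    return points


# Changing the angle "slider" changes the whole curve.
for angle in (30, 45, 60):
    path = trajectory(v0=20.0, angle_deg=angle)
    peak = max(y for _, y in path)
    print(f"{angle} deg: range = {path[-1][0]:.1f} m, peak = {peak:.1f} m")
```

Under these assumptions, 30 and 60 degrees land at the same distance while 45 degrees travels farthest, which is the kind of relationship a learner can discover by dragging the slider.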

The Technical Foundation: Bridging Symbolism and Visual Rendering

To understand the magnitude of this change, we must look at the underlying technology. This feature points to the maturation of multimodal AI architecture. Modern foundation models still work by predicting tokens, but those tokens are no longer limited to prose; they can form functional code (for example, JavaScript that uses a charting library such as Plotly, or a specialized visualization framework) that executes within a secure sandbox environment.

This points toward robust internal capabilities for code generation and execution, a critical component of advanced reasoning. This isn't just a retrieval mechanism; it's synthesis. The AI must:

- Comprehend the Symbolic Input: Parse the mathematical or physical principle.

- Generate Executable Code: Write the necessary programming language commands to render the visualization tool.

- Establish Data Bindings: Link user-adjustable variables (like mass or friction) to the graph output in real time.
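
The third step, data binding, can be sketched without any graphics library. The example below is an assumption-laden illustration, not how any particular platform does it: `BoundModel` and `friction_force` are hypothetical names, and the "redraw" is simulated by appending each recomputed value to a list.

```python
from typing import Callable, Dict, List


class BoundModel:
    """Recomputes a model whenever one of its named parameters changes."""

    def __init__(self, model: Callable[..., float], **params: float) -> None:
        self._model = model
        self._params: Dict[str, float] = dict(params)
        self._listeners: List[Callable[[float], None]] = []

    def on_change(self, listener: Callable[[float], None]) -> None:
        """Register a callback, e.g. a function that redraws the graph."""
        self._listeners.append(listener)

    def value(self) -> float:
        return self._model(**self._params)

    def set(self, name: str, value: float) -> float:
        """Update one parameter (a slider move) and notify every listener."""
        if name not in self._params:
            raise KeyError(f"unknown parameter: {name}")
        self._params[name] = value
        result = self.value()
        for listener in self._listeners:
            listener(result)
        return result


def friction_force(mass: float, mu: float, g: float = 9.81) -> float:
    """Kinetic friction on a block sliding on a level surface, in newtons."""
    return mu * mass * g


redraws: List[float] = []  # stands in for the on-screen graph
plot = BoundModel(friction_force, mass=2.0, mu=0.5)
plot.on_change(redraws.append)
plot.set("mass", 4.0)  # user drags the mass slider
plot.set("mu", 0.25)   # user drags the friction slider
```

The design choice here is the observer pattern: the model knows nothing about rendering, so the same binding could drive a chart, a table, or a test.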

For the technical audience, this confirms the platform is evolving into a true reasoning engine capable of generating dynamic outputs, moving closer to the functionality of dedicated computational software.

Implications for Education: The Personalized, Infinite Tutor

The most immediate and profound impact will be felt in education, particularly in STEM fields, where difficult introductory courses often contribute to high drop-out rates. Discussions of AI-personalized learning often cite the need to cater to different learning styles. This feature is especially well suited to the visual and kinesthetic learner.

For a student struggling with trigonometry, being able to manipulate the angle slider on a unit circle and watch the sine and cosine waves change in tandem is far more effective than reading a static textbook diagram. It democratizes complex understanding:

- Concept Mastery: Visualization reduces abstract concepts to tangible relationships, accelerating intuitive understanding.

- Experimentation Without Cost: Students can "break" simulations (e.g., setting gravity to zero) without physical or material consequences, fostering fearless exploration.

- Accessibility: Concepts previously requiring expensive lab equipment or advanced software are now available instantly via a chat interface.
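
The unit-circle exercise mentioned above rests on one relationship: a point at angle θ on the unit circle has coordinates (cos θ, sin θ). The short sketch below shows the values a slider would feed to the display; the function name `unit_circle_point` is illustrative.

```python
import math


def unit_circle_point(angle_deg):
    """Return (cos, sin) for an angle in degrees, i.e. the point on the unit circle."""
    theta = math.radians(angle_deg)
    return math.cos(theta), math.sin(theta)


# Each slider position maps to one point; the sine and cosine curves are
# simply these two coordinates plotted against the angle.
for angle in (0, 30, 90, 180):
    x, y = unit_circle_point(angle)
    print(f"{angle:>3} deg -> cos = {x:+.3f}, sin = {y:+.3f}")
```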

This sets a new bar for EdTech competition. As the technology matures, we expect competitors like Google Gemini and Anthropic Claude to rapidly integrate similar, if not more advanced, interactive modules to maintain feature parity in the race to build the definitive AI tutor.

The Enterprise Shift: From Modeling to Active Simulation

While the educational use case is compelling, the business and R&D implications are perhaps even more disruptive. Engineers, designers, and research scientists rely on complex simulation software—Digital Twins, Finite Element Analysis (FEA), and Computational Fluid Dynamics (CFD)—to validate prototypes before physical construction.

The integration of live visualization within an LLM points toward a future where AI acts as the front-end interface for these heavy computational backends. Instead of learning complex simulation code or navigating dense proprietary software menus, an engineer could prompt the system:

"Simulate the thermal stress on this specific alloy bracket under a sustained load of 500 Newtons, allowing me to adjust the ambient temperature between 20°C and 80°C."

The AI then generates the environment, runs the simulation, and provides the resulting stress map visually. This approach, in which the LLM serves as the interface to a simulation engine, promises to drastically lower the barrier to entry for complex engineering tasks. It means faster iteration cycles, reduced reliance on highly specialized legacy software operators, and democratized access to sophisticated modeling tools.
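
As a rough illustration of the parameter sweep in that prompt, the sketch below replaces the real simulation backend with two textbook hand calculations: load stress as force over area, and thermal stress in a fully constrained bar as E·α·ΔT. Everything here is an assumption for illustration only: the material constants are roughly aluminium-like, the cross-section and reference temperature are invented, and a real FEA run would account for geometry that these formulas ignore.

```python
# Illustrative constants only; none of these values come from the article.
YOUNGS_MODULUS_PA = 69e9   # assumed stiffness E
EXPANSION_PER_K = 23e-6    # assumed thermal expansion coefficient alpha
CROSS_SECTION_M2 = 1e-4    # assumed load-bearing area (1 cm^2)
REFERENCE_TEMP_C = 20.0    # assumed stress-free temperature


def load_stress_pa(force_n, area_m2=CROSS_SECTION_M2):
    """Average stress from a sustained axial load: sigma = F / A."""
    return force_n / area_m2


def thermal_stress_pa(ambient_c, reference_c=REFERENCE_TEMP_C):
    """Stress magnitude in a fully constrained bar: sigma = E * alpha * |dT|."""
    return YOUNGS_MODULUS_PA * EXPANSION_PER_K * abs(ambient_c - reference_c)


def sweep(force_n, temps_c):
    """Evaluate both stresses across the adjustable temperature range."""
    return [(t, load_stress_pa(force_n), thermal_stress_pa(t)) for t in temps_c]


# The two stresses are reported separately, because how they combine
# depends on how the bracket is actually constrained.
for temp, s_load, s_thermal in sweep(500.0, range(20, 81, 20)):
    print(f"{temp} C: load {s_load / 1e6:.1f} MPa, thermal {s_thermal / 1e6:.1f} MPa")
```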

The Challenge of Trust and Accuracy

However, with great power comes the crucial challenge of verification. If an LLM generates a graph that visually appears correct but is based on a subtle flaw in its internal code generation or assumption set, the error is magnified. A text description might be fact-checked against source material; a dynamic simulation must be verified against physical laws.

This emphasizes the need for **auditable execution environments**. For these tools to gain trust in high-stakes industrial or scientific settings, developers must ensure the visualization execution sandbox is transparent and that the underlying mathematical assumptions are clearly stated. The user must know *why* the graph looks the way it does.
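
One simple form of such verification is to compare a generated simulation against a known closed-form result. The sketch below is a hypothetical check, not a description of any platform's safeguards: it steps a drag-free projectile numerically, compares the landing distance with the analytic range v₀² sin(2θ) / g, and shows that a plausible-looking bug (forgetting to convert degrees to radians) is caught.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def analytic_range(v0, angle_deg, g=G):
    """Closed-form range of a drag-free projectile launched from ground level."""
    return v0 ** 2 * math.sin(2 * math.radians(angle_deg)) / g


def simulated_range(v0, angle_deg, dt=1e-4, g=G):
    """Step the motion forward in time and return where the projectile lands."""
    theta = math.radians(angle_deg)
    x, y = 0.0, 0.0
    vx, vy = v0 * math.cos(theta), v0 * math.sin(theta)
    while True:
        vy_new = vy - g * dt
        x_new = x + vx * dt
        y_new = y + 0.5 * (vy + vy_new) * dt  # trapezoidal position update
        if y_new < 0.0:
            # Interpolate linearly between the last two points to find y = 0.
            frac = y / (y - y_new)
            return x + frac * (x_new - x)
        x, y, vy = x_new, y_new, vy_new


def buggy_range(v0, angle_deg, g=G):
    """Deliberately flawed: treats degrees as radians."""
    return v0 ** 2 * math.sin(2 * angle_deg) / g


def agrees(candidate, reference, rel_tol=1e-3):
    """True when the candidate matches the reference within a relative tolerance."""
    return math.isclose(candidate, reference, rel_tol=rel_tol)


reference = analytic_range(20.0, 45.0)
print("simulation passes:", agrees(simulated_range(20.0, 45.0), reference))
print("buggy version passes:", agrees(buggy_range(20.0, 45.0), reference))
```

The buggy version still produces a positive, reasonable-looking distance, which is exactly why a check against physical law is more reliable than visual inspection.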

Actionable Insights for Industry Leaders

The move toward interactive, visual reasoning is not a fleeting trend; it is the next evolutionary stage of AI integration. Leaders must act now to prepare their organizations:

- Invest in Multimodal Fluency: Train teams not just on prompt engineering for text, but on structuring queries that explicitly demand visual, interactive outputs for problem-solving. Treat the AI as a co-pilot capable of generating executable interfaces, not just reports.

- Audit Current Workflows for Visualization Gaps: Identify areas in R&D, design, or compliance where complex, abstract data sets are currently poorly communicated. These are immediate targets for AI visualization pilots.

- Prioritize Simulation Integration Security: If your organization uses proprietary simulation models, begin exploring secure methods to connect these engines to LLMs. The AI becomes the "translator" between human intent and deep simulation code.

- Rethink Training Paradigms: For HR and L&D departments, this technology offers a chance to overhaul foundational technical training. Shift focus from rote memorization to experimental hypothesis testing guided by AI visualization.

The future of AI isn't about better chatbots; it’s about AI systems that can effectively model and manipulate the real world within a digital space. By allowing users to tug at the variables of physics and mathematics in real time, these platforms are transforming abstract theory into hands-on experience. This is the key to unlocking genuine understanding and accelerating discovery across every technical field.