The Inference Revolution: Why Language Processing Units (LPUs) Signal the End of GPU Monoculture in AI

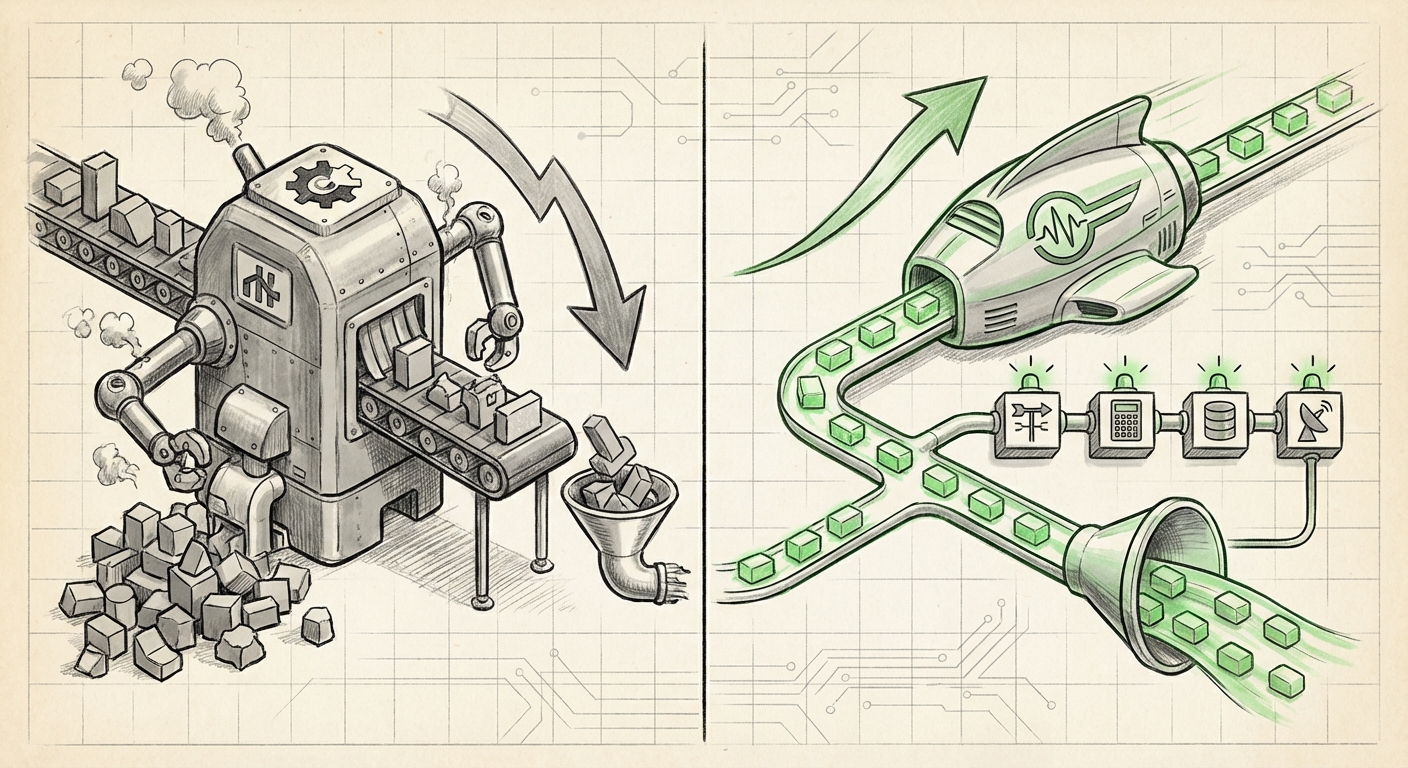

The world of Artificial Intelligence often feels dominated by one acronym: GPU. For years, Graphics Processing Units—designed originally for rendering video games—have served as the workhorses for training and running massive Large Language Models (LLMs). However, the demands of modern, deployed AI are shifting. We are moving rapidly from the age of training behemoths in research labs to the age of serving millions of instant, affordable answers to the public.

This inflection point is driving a massive wave of hardware innovation aimed squarely at inference—the process of using a trained model to generate output. The recent introduction and promotion of the Language Processing Unit (LPU) as a dedicated accelerator highlights a clear trend: the era of general-purpose silicon is yielding to specialized architecture built for the unique complexities of language processing and real-time API deployment.

The Inference Bottleneck: Why GPUs Are Starting to Struggle

To understand why LPUs matter, we must first understand the cost and efficiency crisis facing current LLM deployment. Training a model requires immense computational power, often justifying the high cost of flagship GPUs. Inference, conversely, requires speed, low latency, and, critically, extreme cost-efficiency at massive scale.

Serving large models like GPT-4 requires substantial memory and compute for every single query, which drives up operational expenditure (OPEX) for companies offering public AI services. General-purpose GPUs are built for massively parallel floating-point throughput, which suits training and large batches. Inference serving stresses a different profile: generating text one token at a time is often limited by memory bandwidth and latency rather than raw arithmetic, so much of a GPU's peak compute can sit idle under the constant, repetitive demands of serving.
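
One way to see why memory access matters for inference serving is a back-of-envelope bound. During single-stream token generation, each new token requires reading roughly all of the model's weights from memory, so memory bandwidth divided by weight size gives an approximate ceiling on tokens per second. The sketch below is only that estimate; the parameter count, precision, and bandwidth figures are illustrative assumptions, not measurements of any specific model or chip.

```python
def weight_bytes(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory footprint of the model weights, in bytes."""
    return num_params * bytes_per_param


def max_decode_tokens_per_second(
    num_params: float,
    bytes_per_param: float,
    mem_bandwidth_bytes_per_s: float,
) -> float:
    """Upper bound on single-stream decode speed when weight reads dominate.

    Ignores KV-cache traffic, batching, and compute time, so real systems
    land below this number.
    """
    return mem_bandwidth_bytes_per_s / weight_bytes(num_params, bytes_per_param)


if __name__ == "__main__":
    # Illustrative assumptions only: 70B parameters, 16-bit weights, 2 TB/s memory.
    params = 70e9
    bytes_per_param = 2
    bandwidth = 2e12

    size_gb = weight_bytes(params, bytes_per_param) / 1e9
    ceiling = max_decode_tokens_per_second(params, bytes_per_param, bandwidth)
    print(f"weights: {size_gb:.0f} GB, decode ceiling: {ceiling:.1f} tokens/s per stream")
```

Under these assumed numbers the ceiling is about 14 tokens per second for a single stream, regardless of how many arithmetic units the chip has. Batching many requests together raises total throughput, but it does not lift this per-stream limit.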

This recognition fuels the move toward **specialized hardware**. We see this across the industry, where major cloud providers are developing their own custom silicon, like AWS’s Inferentia, specifically to reduce latency and operational costs compared to renting standard GPU time. This market pressure validates the need for accelerators like the LPU, which promise to deliver better performance per watt and per dollar specifically for language tasks.

Actionable Insight for Architects: If your goal is scaling public-facing AI services with predictable workloads, relying solely on the flexibility of GPUs is becoming an economic liability. Specialized accelerators are moving from niche performance boosters to necessary cost controllers.

The LPU Advantage: Tailored for Action and API Deployment

The LPU concept, often presented in the context of deploying public Model Context Protocol (MCP) servers as an API endpoint, suggests a chip optimized not just for raw token generation, but for the *workflow* surrounding modern LLM applications. Two key aspects distinguish this approach:

1. API Focus and Deployment Density

By positioning the LPU for immediate API endpoint deployment, the focus is on maximizing throughput density. This means fitting more high-performance inference engines into a smaller server footprint while ensuring stable, low-latency connections essential for customer-facing applications. This is a shift from the lab cluster model to the production server model.

2. The Critical Role of Function Calling

Perhaps the most forward-looking element highlighted is the integration of tools using function calling. LLMs are evolving from static text generators into dynamic reasoning engines. With function calling, the LLM interprets a user request (e.g., "What's the weather in London and book me a flight?"), emits a structured request for an external, specialized software tool (like a weather API or a booking system), waits while the application runs that tool, ingests the result, and then synthesizes a final answer.

This process adds latency at every step. It involves the initial LLM reasoning pass, a handoff to external code, and then a further pass that resumes the LLM context with the tool's result, so delays compound with each hop. A dedicated LPU suggests an architecture designed to handle these multi-step reasoning hops with minimal latency overhead. For developers, this means AI agents that can feel close to instantaneous, with most of the remaining delay coming from the external data retrieval itself.
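
The function-calling loop described above can be sketched in a few lines. This is a minimal illustration, not any vendor's API: `fake_model`, `get_weather`, `TOOLS`, and the message format are all hypothetical stand-ins, and the "model" is a stub that requests one tool and then answers. The point it shows is structural: one tool call means two model passes.

```python
import json


def get_weather(city):
    """Hypothetical tool; a real one would call an external weather API."""
    return {"city": city, "forecast": "rain", "temp_c": 12}


# Hypothetical registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}


def fake_model(messages):
    """Stand-in for an LLM call: asks for a tool first, then answers."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {
            "type": "tool_call",
            "name": "get_weather",
            "arguments": json.dumps({"city": "London"}),
        }
    data = json.loads(tool_results[-1]["content"])
    text = f"It is {data['temp_c']} C with {data['forecast']} in {data['city']}."
    return {"type": "final", "content": text}


def run_agent(user_prompt, model=fake_model, max_steps=5):
    """Run the loop; return the final answer and the number of model passes."""
    messages = [{"role": "user", "content": user_prompt}]
    for passes in range(1, max_steps + 1):
        reply = model(messages)
        if reply["type"] == "final":
            return reply["content"], passes
        # The application, not the model, executes the requested tool.
        tool = TOOLS[reply["name"]]
        result = tool(**json.loads(reply["arguments"]))
        messages.append(
            {"role": "tool", "name": reply["name"], "content": json.dumps(result)}
        )
    raise RuntimeError("model did not produce a final answer")


if __name__ == "__main__":
    answer, passes = run_agent("What's the weather in London?")
    print(answer, f"({passes} model passes)")
```

Because every tool call forces another model pass, per-pass inference latency is multiplied by the number of hops, which is why low-latency inference matters more for agents than for single-shot text generation.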

To put it simply for all readers: If the GPU is a Swiss Army knife that can do everything reasonably well, the LPU is a high-speed wrench built specifically for the unique bolts and gears of language reasoning and tool use.

Contextualizing the Competition: Beyond the GPU/TPU Binary

The emergence of LPUs forces us to look past the familiar two-way comparison between GPUs (NVIDIA) and TPUs (Google), both of which handle training as well as inference. We are entering an era of hyper-specialization where the hardware is designed around the *application* of the model, not just the model itself.

The **emerging AI inference chip landscape** is fragmenting into segments, each optimized for a different priority:

- Latency Optimization: Companies prioritizing near-instantaneous response times, sometimes sacrificing absolute throughput volume.

- Throughput Optimization: Maximizing the total number of tokens served per second, often for batch processing or less latency-sensitive tasks.

- Workflow Optimization: Chips like the LPU, aiming to accelerate specific sequences of operations, such as the reasoning required for function calling or complex multi-agent orchestration.

For example, the progress made by major cloud providers in creating custom silicon ([AWS Custom Silicon for AI Inference](https://aws.amazon.com/machine-learning/custom-silicon/)) suggests that the economic argument for bespoke hardware is gaining ground. While NVIDIA still commands the training market, the inference market is proving fertile ground for challengers who can significantly undercut its cost structure.

The Economic Imperative: Lowering the Cost of Intelligence

The underlying force driving all these hardware innovations is economics. The staggering cost of running LLMs at scale is the single biggest barrier to widespread, democratized AI access. We need to move away from solutions where the cost of a simple query is prohibitively high.

Research into the **cost efficiency of LLM inference serving** frequently points to specialized ASICs (Application-Specific Integrated Circuits) as a key to unlocking mass adoption. If an LPU can deliver the same quality of inference as a high-end GPU cluster at a fraction of the hardware cost and energy consumption, that is, with better performance per dollar and per watt, the entire business model for AI services changes.
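
The performance-per-dollar and performance-per-watt argument can be made concrete with a small cost model. The sketch below assumes a deliberately simple formula (hourly hardware cost plus energy cost, divided by tokens served per hour), and every number in it is a hypothetical placeholder rather than a quote for any real GPU or LPU.

```python
def cost_per_million_tokens(
    hourly_hw_cost: float,
    power_kw: float,
    electricity_price_per_kwh: float,
    tokens_per_second: float,
) -> float:
    """Serving cost per one million tokens under a simplified model.

    Counts hardware rental (or amortization) and electricity only; cooling,
    networking, staffing, and idle time are left out.
    """
    hourly_total = hourly_hw_cost + power_kw * electricity_price_per_kwh
    tokens_per_hour = tokens_per_second * 3600
    return hourly_total / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical placeholders, chosen only to illustrate the comparison.
    general_purpose = cost_per_million_tokens(4.00, 0.7, 0.10, 2500)
    specialized = cost_per_million_tokens(3.00, 0.4, 0.10, 5000)
    print(f"general-purpose: ${general_purpose:.3f} per 1M tokens")
    print(f"specialized:     ${specialized:.3f} per 1M tokens")
```

The useful part is the shape of the formula, not these particular values: sustained tokens per second sits in the denominator, so a chip that serves more tokens for the same hourly spend lowers the cost of every query.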

Imagine an AI customer service agent. If the LPU drastically reduces the operational cost of that agent, companies can afford to deploy that agent across every customer touchpoint, rather than limiting its use to premium tiers. This democratization of affordable, high-quality inference is the true societal implication of the LPU trend.

Practical Implications for Businesses and Developers

For businesses currently integrating LLMs, the rise of specialized inference accelerators like LPUs presents both a challenge and a massive opportunity.

For Infrastructure Leaders (CTOs/CIOs):

You must begin auditing your current dependency on centralized GPU providers. The time to pilot specialized inference platforms is now. Understanding the specific computational profile of your deployed models—training vs. inference, simple generation vs. complex tool use—will determine which accelerator architecture you adopt. Flexibility in deployment (API endpoints) means vendors might increasingly offer their compute capabilities as managed services, reducing the need for massive on-premise hardware investment.

For Developers and AI Engineers:

Your development focus must expand beyond model weights. Proficiency in designing workflows that leverage function calling and external tooling will become paramount. If the LPU architecture is inherently superior at coordinating these tool calls, mastering how to structure prompts and schemas to leverage this capability will become a core competency. You are building agents, not just chatbots.

Actionable Insights for Navigating the Future

The LPU announcement is not just about a new chip; it's a signal flare regarding the future path of AI deployment. Here are actionable insights derived from corroborating industry trends:

- Benchmark Workflow, Not Just FLOPS: Stop using peak theoretical performance numbers (like TeraFLOPS) as your primary metric. Instead, benchmark latency for complex, multi-step tasks that involve external API calls (function calling simulation).

- Embrace API Standardization: If dedicated inference providers win the cost war, their preferred method of integration (like standardized API endpoints for MCP servers) will become the industry standard for deployment, forcing a move away from bespoke local hosting.

- Watch the Ecosystem Build-Out: The success of an LPU relies on a robust software ecosystem. Look for supporting frameworks, easy integration libraries, and cloud deployment templates that make shifting from older hardware seamless.

- Prioritize Total Cost of Ownership (TCO): Factor in energy consumption and cooling alongside purchase or rental price. Specialized ASICs often win on long-term TCO for high-volume, stable workloads, provided utilization stays high enough to justify the narrower hardware.
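
The first insight in this list, benchmarking workflow latency rather than FLOPS, can be sketched with a small timing harness. Here `simulated_workflow` is a hypothetical stand-in that uses `time.sleep` to mimic model passes and external API calls; in practice you would replace it with a function that runs your real multi-step task against the platform under test.

```python
import statistics
import time


def simulated_workflow(model_pass_s=0.002, tool_call_s=0.003, tool_calls=2):
    """Hypothetical multi-step task: model pass, tool call, ..., final pass."""
    for _ in range(tool_calls):
        time.sleep(model_pass_s)  # model decides to call a tool
        time.sleep(tool_call_s)   # external API round trip
    time.sleep(model_pass_s)      # final pass that writes the answer


def benchmark(workflow, trials=20):
    """Time end-to-end runs of the workflow and summarize latency in seconds."""
    samples = []
    for _ in range(trials):
        start = time.perf_counter()
        workflow()
        samples.append(time.perf_counter() - start)
    samples.sort()
    # Nearest-rank style index for the 95th percentile, clamped to the list.
    p95_index = min(len(samples) - 1, int(0.95 * len(samples)))
    return {
        "median_s": statistics.median(samples),
        "p95_s": samples[p95_index],
        "max_s": samples[-1],
    }


if __name__ == "__main__":
    stats = benchmark(simulated_workflow)
    for name, value in stats.items():
        print(f"{name}: {value * 1000:.1f} ms")
```

Reporting the median together with a tail percentile matters for customer-facing agents, since a platform with a good average can still feel slow if its worst-case multi-hop runs are far longer.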

The silicon race is accelerating, moving from a general contest of raw processing power to a sophisticated battle for efficiency in specific, high-value computational tasks. The Language Processing Unit represents a significant milestone in this specialization, promising a future where powerful, reasoning AI agents are not only possible but economically viable for everyone.