The Inference Revolution: How LPUs, Function Calling, and Custom Silicon Are Redefining AI Deployment

For years, the world of Artificial Intelligence has been defined by two primary phases: training and inference. Training is where we teach massive neural networks, a process that requires supercomputers filled with thousands of powerful, expensive GPUs. Inference is where the models actually do the work: answering questions, generating images, or powering customer service bots. As foundation models have scaled toward the trillion-parameter range, the cost and speed of inference have become one of the biggest bottlenecks standing in the way of widespread, real-time AI applications.

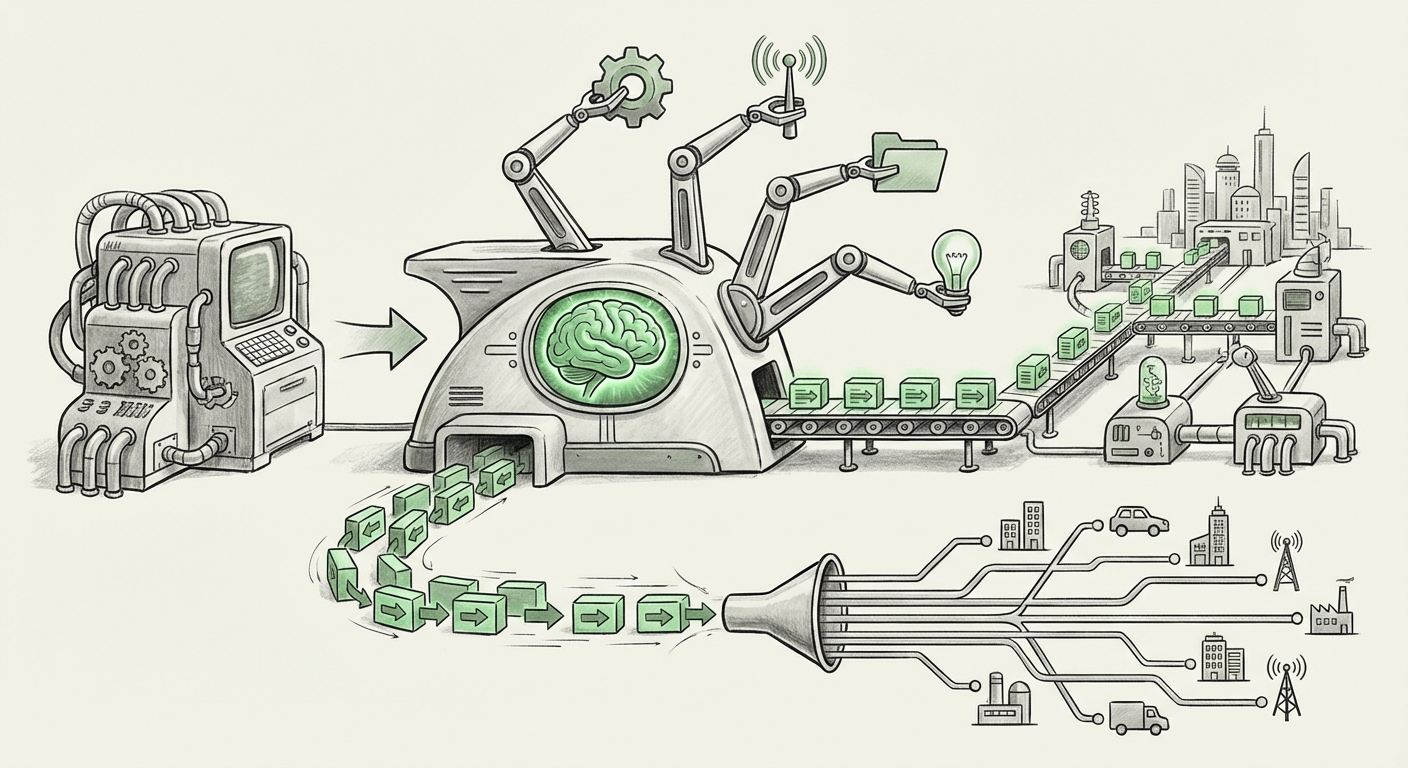

Recent industry movements, highlighted by the emergence of specialized hardware like Language Processing Units (LPUs) and sophisticated software integration techniques like function calling, signal a massive pivot. We are moving from an era defined by raw training power to one obsessed with efficient, scalable deployment. This shift is not just incremental; it is foundational to how AI will integrate into every business workflow.

The Hardware Arms Race: Beyond the Reign of the GPU

Nvidia’s GPUs have been the undisputed champions of the AI era, largely because they were flexible enough to handle both the massive parallel computations required for training and the subsequent inference load. However, this flexibility comes at a cost: generalized hardware is often inefficient for highly specific tasks.

The rise of specialized chips, often termed Domain-Specific Architectures (DSAs), is a direct response to this inefficiency. The introduction of concepts like the Language Processing Unit (LPU) suggests the industry recognizes that LLMs have distinctive computational signatures that custom silicon can accelerate dramatically.

Why Specialization Matters for Inference

Consider the difference between a Swiss Army knife (a general-purpose GPU) and a dedicated precision screwdriver (an LPU). While the knife can do many jobs, the screwdriver is far better and faster at one specific job: turning screws.

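Recent industry analysis of specialized AI accelerators supports this logic. If inference dominates operational costs, often far outweighing the initial training investment, then finding a chip that can process tokens quickly with minimal energy consumption is crucial. This pursuit has led companies to develop architectures optimized for the sequential, token-by-token generation inherent in language models. Matrix multiplication still does the heavy lifting during inference, but generating one token at a time for a single request is typically limited by how fast model weights can be moved out of memory rather than by raw compute throughput, which is a different bottleneck from the large-batch workloads of training.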
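A common back-of-envelope estimate shows why sequential token generation stresses hardware differently from training. The sketch below assumes batch size 1 and that every generated token requires reading all model weights from memory once, so memory bandwidth sets an upper bound on decode speed. The function name and the example figures (a 70B-parameter model, 16-bit weights, 3e12 bytes/s of bandwidth) are illustrative assumptions, not measurements of any specific chip.

```python
def max_decode_tokens_per_second(num_params: float, bytes_per_param: float,
                                 memory_bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed when each generated token
    requires streaming every model weight from memory once (batch size 1).

    Ignores KV-cache reads, compute time, and batching: a single stream will
    be slower than this bound, while batching many requests raises aggregate
    throughput because each weight read is shared across requests."""
    bytes_per_token = num_params * bytes_per_param
    return memory_bandwidth_bytes_per_s / bytes_per_token


# Hypothetical example: 70B parameters stored as 16-bit (2-byte) weights on an
# accelerator with 3e12 bytes/s of memory bandwidth.
tokens_per_s = max_decode_tokens_per_second(70e9, 2, 3e12)
print(f"~{tokens_per_s:.1f} tokens/s upper bound")  # ~21.4 tokens/s
print(f"~{1000 / tokens_per_s:.1f} ms per token")   # ~46.7 ms per token
```

Under these assumptions, raising effective memory bandwidth (or shrinking bytes per parameter) lifts the ceiling directly, which is the kind of lever inference-focused architectures target.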

The competitive landscape now includes not just cloud providers' custom chips (like Google’s TPUs) but also startups focused purely on inference speed. This pursuit of alternatives validates the push represented by the LPU concept: to achieve order-of-magnitude improvements in latency and cost for running the models we already have.

Actionable Insight for Hardware Leaders: The competitive moat is shifting from who can build the biggest chip for training to who can build the most cost-effective and lowest-latency chip for serving the existing model catalog. The race is now firmly focused on the "last mile" of AI deployment.

The Software Glue: From Static Models to Dynamic Agents

A fast chip is useless if the software infrastructure around it is slow and brittle. The second major trend underpinning this LPU discussion is the operational shift toward treating LLMs not as standalone chatbots, but as programmable reasoning engines.

This is where the integration of LLMs via public API endpoints and, critically, function calling, becomes central. Function calling allows an LLM to recognize when it needs external data or needs to perform an action—such as checking inventory, sending an email, or querying a specific database—and output structured instructions for the application to execute.
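To make the mechanism concrete, here is a minimal sketch of the application side of function calling. It assumes the model returns a JSON object of the form `{"name": ..., "arguments": {...}}`; that shape is an illustrative assumption, since each provider defines its own schema. The tools `check_inventory` and `send_email` are hypothetical stand-ins for real integrations.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical tools the application exposes to the model.
def check_inventory(sku: str) -> Dict[str, Any]:
    stock = {"SKU-123": 42}  # stand-in for a real database query
    return {"sku": sku, "in_stock": stock.get(sku, 0)}


def send_email(to: str, subject: str) -> Dict[str, Any]:
    # Stand-in for a real email service call.
    return {"status": "queued", "to": to, "subject": subject}


# Registry mapping the tool names the model may emit to Python callables.
TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "check_inventory": check_inventory,
    "send_email": send_email,
}


def execute_tool_call(model_output: str) -> str:
    """Parse a structured tool call emitted by the model, run the matching
    function, and return its result as JSON to feed back to the model."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        # Refuse anything outside the registry instead of guessing.
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](**call.get("arguments", {}))
    return json.dumps(result)


# Example: the model has decided it needs inventory data.
model_output = '{"name": "check_inventory", "arguments": {"sku": "SKU-123"}}'
print(execute_tool_call(model_output))
# {"sku": "SKU-123", "in_stock": 42}
```

The model never executes anything itself; it only proposes a call, and the application decides whether and how to run it.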

The Latency Budget in the Age of Orchestration

When an application relies on LLM API calls—especially those involving function chaining—the total user experience hinges on the cumulative latency. If a simple query requires the LLM to call a database API, wait for the result, and then formulate a final answer, every millisecond counts. Slow inference hardware directly translates into poor user experiences and costly API bills.
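Because each step in such a chain waits on the previous one, the delays simply add up. The sketch below models each LLM call as time-to-first-token plus output tokens multiplied by per-token latency; the flow, token counts, and millisecond figures are all hypothetical, and holding time-to-first-token fixed across hardware is a simplifying assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ModelCall:
    time_to_first_token_ms: float
    output_tokens: int
    per_token_ms: float

    def latency_ms(self) -> float:
        # Wait for the first token, then generate the rest one at a time.
        return self.time_to_first_token_ms + self.output_tokens * self.per_token_ms


def chain_latency_ms(model_calls: List[ModelCall], tool_latencies_ms: List[float]) -> float:
    """Total latency of a sequential chain: every model call and every tool
    call waits on the one before it, so their latencies sum."""
    return sum(c.latency_ms() for c in model_calls) + sum(tool_latencies_ms)


def flow(per_token_ms: float) -> float:
    # Hypothetical flow: the model emits a tool call (30 tokens), the app
    # queries a database (50 ms), then the model writes the answer (200 tokens).
    calls = [
        ModelCall(time_to_first_token_ms=200, output_tokens=30, per_token_ms=per_token_ms),
        ModelCall(time_to_first_token_ms=200, output_tokens=200, per_token_ms=per_token_ms),
    ]
    return chain_latency_ms(calls, tool_latencies_ms=[50])


print(flow(40.0))  # 9650.0 ms with slower per-token generation
print(flow(5.0))   # 1600.0 ms with faster per-token generation
```

In this toy flow, per-token speed dominates the total, and every extra step in an agent multiplies its effect.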

As established industry guides on scalable LLM serving architecture frequently point out, latency has become a primary measure of success for deployed AI services. The entire MLOps ecosystem is adapting to support rapid model serving and the orchestration layers that manage these dynamic calls.

Key Takeaway for Developers: The modern AI application stack is inherently asynchronous and orchestrated. Hardware improvements must keep pace with the sophistication of the software architecture. An LPU that delivers per-token latencies in the single-digit milliseconds on the core reasoning task lets developers build complex, multi-step agents without frustrating the end user.

Corroborating the Trend: Economic and Technical Pressures

To truly understand the magnitude of this shift, we must look at the pressures driving it from both economic and technical viewpoints. These external data points validate why specialized hardware and API efficiency are paramount:

1. The Inference Cost Cliff

While training a model like GPT-4 reportedly costs tens of millions of dollars or more, the long-term cost of serving it to millions of users can dwarf that initial expenditure. Reports consistently show that operational inference costs are quickly becoming the dominant financial burden for AI companies. This economic reality means that a 10x inference speedup can deliver a large return on investment even if the hardware costs modestly more.

This financial pressure is the engine driving demand for custom chips. If a standard GPU deployment costs $X to sustain 1,000 inferences per second and a specialized LPU deployment sustains the same load for $0.1X, the choice is obvious for any business scaling globally.
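The arithmetic behind this argument is easy to sketch. The example below computes serving cost per million inferences from an hourly hardware cost and a sustained throughput. All prices and throughputs are hypothetical, not vendor quotes, and the model assumes the hardware runs fully utilized.

```python
def cost_per_million_inferences(hourly_cost_usd: float, inferences_per_second: float) -> float:
    """Serving cost per one million inferences, assuming the hardware runs
    at its sustained throughput for the full hour."""
    inferences_per_hour = inferences_per_second * 3600
    return hourly_cost_usd / inferences_per_hour * 1_000_000


# Hypothetical numbers:
# - general-purpose GPU deployment: $10/hour, 1,000 inferences/s
# - specialized inference deployment: $12/hour (20% pricier), 10,000 inferences/s
gpu = cost_per_million_inferences(10.0, 1_000)
specialized = cost_per_million_inferences(12.0, 10_000)
print(f"GPU:         ${gpu:.2f} per million inferences")          # $2.78
print(f"Specialized: ${specialized:.2f} per million inferences")  # $0.33
print(f"Cost ratio:  {specialized / gpu:.2f}")                    # 0.12
```

Under these assumptions, a 10x throughput gain outweighs a 20% higher hourly price, cutting the cost per inference to roughly an eighth.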

2. Architectural Alignment

The hardware architecture must align with the required software patterns. As software engineers build complex agents using function calling and tool use, they need predictable latency from an API endpoint, not performance that varies with queuing on a shared, general-purpose accelerator.

This technical alignment is why services are being deployed as clean API endpoints. It abstracts the underlying silicon complexity—whether it’s an LPU, a custom ASIC, or a dedicated cloud inference chip—allowing developers to focus solely on the application logic that leverages the model’s reasoning capabilities.
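One way to express that abstraction in application code is a small interface that every backend satisfies. The sketch below uses Python's `typing.Protocol`; `InferenceBackend`, `GpuClusterBackend`, and `LpuServiceBackend` are hypothetical names, and the backends return canned strings where a real implementation would call a provider's endpoint.

```python
from typing import Protocol


class InferenceBackend(Protocol):
    """The contract application code depends on; the silicon behind it can change."""

    def generate(self, prompt: str, max_tokens: int) -> str: ...


class GpuClusterBackend:
    # Hypothetical backend; a real one would call a GPU-hosted endpoint.
    # max_tokens is accepted to satisfy the contract but unused in this stub.
    def generate(self, prompt: str, max_tokens: int) -> str:
        return f"[gpu] answer to: {prompt}"


class LpuServiceBackend:
    # Hypothetical backend; a real one would call a specialized inference API.
    def generate(self, prompt: str, max_tokens: int) -> str:
        return f"[lpu] answer to: {prompt}"


def answer_question(backend: InferenceBackend, question: str) -> str:
    # Application logic never references the underlying hardware.
    return backend.generate(question, max_tokens=256)


for backend in (GpuClusterBackend(), LpuServiceBackend()):
    print(answer_question(backend, "Is SKU-123 in stock?"))
```

Swapping hardware then means adding a new backend class, while `answer_question` and everything built on it stay unchanged.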

Practical Implications: What This Means for Businesses and Society

The convergence of ultra-efficient inference hardware (LPUs) and robust API integration (Function Calling) is paving the way for the next generation of AI applications.

For Business: Real-Time, Everywhere AI

This synergy enables AI to move from the lab or the slow web portal directly into high-frequency, real-time business operations.

- Hyper-Personalized Interactions: Low-latency inference means sophisticated AI can be embedded directly into live customer support chat, truly mimicking human responsiveness, or used for instantaneous, context-aware sales recommendations.

- Edge AI Expansion: While LPUs are currently cloud-focused, the principles of efficiency scale down. If inference becomes cheap enough and fast enough in the cloud, many edge applications (like advanced robotics or local device processing) become economically viable sooner.

- Democratization of Agentic Systems: Function calling makes complex, multi-step tasks manageable. When this is underpinned by affordable, fast hardware, building sophisticated, automated business agents (e.g., an AI that handles an entire travel booking process end-to-end) becomes practical for mid-sized enterprises, not just tech giants.

For Society: Lowering the Barrier to Entry

On a societal level, the shift toward inference efficiency democratizes access to cutting-edge AI. If the operational cost drops significantly, smaller developers, non-profits, and independent researchers can afford to run powerful models continuously.

This efficiency mitigates the "AI divide" where only the wealthiest entities can afford to deploy sophisticated AI at scale. When deployment becomes cheaper, innovation accelerates across diverse sectors, from localized medical diagnostics to specialized educational tools.

Actionable Insights: Preparing for the Inference-First World

For organizations looking to capitalize on this technological acceleration, focus must shift from model novelty to deployment mastery:

- Audit Inference Pathways: Identify the five most frequently called LLM endpoints in your current infrastructure. Benchmark their current latency and cost per 1,000 tokens. These are your primary candidates for migration to specialized inference platforms.

- Standardize on API Contracts: Ensure that all LLM interactions are abstracted behind standardized API contracts that explicitly define expected function call structures. This modularity future-proofs your application against rapid hardware changes (like switching from a GPU cluster to an LPU farm).

- Experiment with Hybrid Architectures: Don't wait for the perfect LPU standard. Explore current optimized inference solutions, including specialized hardware from major providers or dedicated inference startups. Evaluate performance based on your specific model structure (e.g., context window size, required throughput).
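The audit step can start with a small harness like the one below, which times each request and reports median and 95th-percentile latency plus total token cost. It is a sketch under stated assumptions: `benchmark_endpoint` and `fake_endpoint` are hypothetical, the endpoint is assumed to return `(response_text, tokens_used)`, and `usd_per_1k_tokens` is whatever price you actually pay. Replace `fake_endpoint` with a real client call to benchmark production traffic.

```python
import random
import statistics
import time
from typing import Callable, Dict, List, Tuple


def benchmark_endpoint(call: Callable[[str], Tuple[str, int]], prompts: List[str],
                       usd_per_1k_tokens: float) -> Dict[str, float]:
    """Time each request and report latency percentiles plus total cost.
    Needs at least two prompts so percentiles are well defined."""
    latencies_ms: List[float] = []
    total_tokens = 0
    for prompt in prompts:
        start = time.perf_counter()
        _, tokens = call(prompt)
        latencies_ms.append((time.perf_counter() - start) * 1000)
        total_tokens += tokens
    cuts = statistics.quantiles(latencies_ms, n=100)  # 99 percentile cut points
    return {
        "p50_ms": cuts[49],  # median
        "p95_ms": cuts[94],  # tail latency users notice
        "total_tokens": float(total_tokens),
        "cost_usd": total_tokens / 1000 * usd_per_1k_tokens,
    }


# Hypothetical stand-in endpoint so the sketch runs offline.
def fake_endpoint(prompt: str) -> Tuple[str, int]:
    time.sleep(random.uniform(0.001, 0.005))
    return "ok", 150


report = benchmark_endpoint(fake_endpoint, ["hello"] * 50, usd_per_1k_tokens=0.5)
print(report)
```

Tracking p95 alongside the median matters here because the queuing effects described earlier show up in the tail, not the average.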

The age of brute-force scaling is waning. The future belongs to those who can optimize the execution of intelligence. The synergy between customized hardware like LPUs, focused on raw speed, and intelligent software orchestration like function calling, focused on utility, defines the next great frontier in applied AI. The revolution is not in creating the next giant model; it is in making the current giants fast, cheap, and useful everywhere.