The $1 Billion Pivot: Why Yann LeCun's Push Beyond LLMs Signals AI's Next Era

For the last several years, the AI narrative has been dominated by one architecture: the Transformer, the engine powering Large Language Models (LLMs) like GPT-4 and Llama. These models have achieved remarkable feats in generating text, code, and conversation. However, a shift is under way, marked by a financial commitment large enough to lend weight to what many researchers have long suspected: the LLM paradigm, while powerful, is running into fundamental ceilings.

The confirmation arrived in the form of a staggering $1 billion seed funding round for Advanced Machine Intelligence Labs (AMI Labs), founded by Turing Award winner and Meta’s former chief AI scientist, Yann LeCun. This is not just a large investment; it is Europe’s largest seed round ever, and its explicit mission is to build AI beyond LLMs. From an analyst’s perspective, this single event is a powerful market signal that lends credibility to years of under-the-radar research into alternative, more robust architectures.

The LLM Plateau: Why the Market is Ready to Move On

To understand the significance of AMI Labs, we must first understand the limitations of the current standard. LLMs are, fundamentally, sophisticated prediction engines. They excel at predicting the most probable next word (more precisely, the next token) in a sequence, based on the massive datasets they’ve been trained on. This mechanism produces fluent output, but fluency is not comprehension: the models often fall short on causal reasoning and long-term planning.
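To make "predicting the next word" concrete, here is a deliberately tiny sketch: a bigram counter that picks the most frequent follower of a word. It is an illustration only. Real LLMs use neural networks over subword tokens and far longer contexts, and the function names and the toy corpus below are assumptions made for this example.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count how often each word follows each other word in the text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus (hypothetical); the model only knows what this text says.
corpus = "the cup falls when you drop it . the cup breaks when it falls ."
model = train_bigram(corpus)

print(predict_next(model, "the"))      # most frequent word after "the"
print(predict_next(model, "gravity"))  # never seen in the corpus
```

The predictor can continue "the" plausibly, yet it has nothing to say about "gravity" because the word never appeared in its text. Scaling up changes the quality of the statistics, not the nature of the objective.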

The investment rationale driving this pivot (as reflected in discussions around this funding) centers on three critical shortcomings:

- Lack of World Models: LLMs do not inherently possess an internal model of how the physical world works. They know that "the cup falls when you drop it" because they read it, but they don't *simulate* gravity or object persistence. True intelligence requires understanding cause and effect.

- Data Inefficiency: Current models require astronomical amounts of data (and energy) to achieve competence. Humans, conversely, can learn complex tasks from just a few examples, or through interaction.

- The Reasoning Gap: While LLMs can mimic reasoning steps, they often fail on complex, multi-step logic problems or tasks requiring planning that falls outside their training distribution.

When top-tier investors, the gatekeepers of capital, pour a billion dollars into a venture focused on solving these exact problems, it signals a growing conviction: simply scaling up existing transformer models is yielding diminishing returns on the path to Artificial General Intelligence (AGI).

The Technical Pivot: World Models and Predictive Architectures

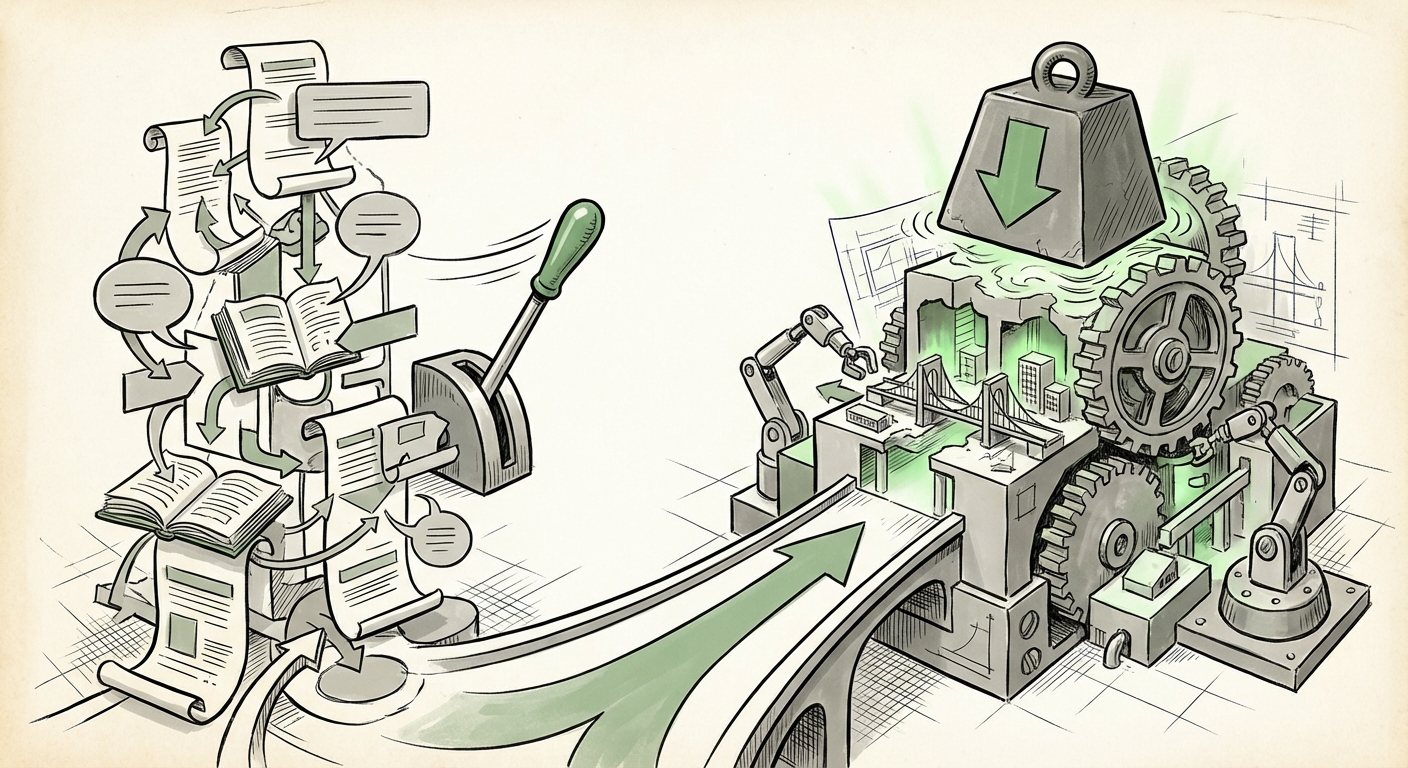

If the future isn't just bigger LLMs, what is it? Yann LeCun has been a consistent advocate for an alternative pathway centered on **self-supervised learning** that constructs internal representations of reality, often termed **World Models**.

This is where the technical architecture matters. The departure in question is from the auto-regressive (next-token prediction) method characteristic of LLMs. AMI Labs is expected to build on architectures that prioritize predictive learning about the environment, rather than just language.
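For reference, the auto-regressive approach is usually written as a factorization of a sequence's probability into next-token terms. The notation is the standard textbook form rather than anything taken from the funding announcement: $x_t$ is the $t$-th token, $T$ the sequence length, and $\theta$ the model parameters.

$$
p_\theta(x_1, \dots, x_T) = \prod_{t=1}^{T} p_\theta(x_t \mid x_1, \dots, x_{t-1})
$$

Training typically minimizes the negative logarithm of this product, so every token in the training text becomes a prediction target. The alternative discussed here keeps the idea of prediction but moves the target out of token space and into a learned representation of the environment.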

The JEPA Hypothesis

One of the most discussed alternatives championed by LeCun is the Joint Embedding Predictive Architecture (JEPA). Imagine you are looking at a fuzzy, partial image. A traditional model might try to guess every missing pixel. JEPA, however, learns to predict the *essence* or the *meaning* of the missing part, represented in a compressed, abstract form (an embedding). This forces the system to learn concepts and high-level representations necessary for real-world understanding, rather than superficial statistical correlations.
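The difference between guessing every pixel and predicting an embedding can be sketched in a few lines. The code below is a minimal illustration under loud assumptions, not LeCun's or AMI Labs' implementation: the encoders are single random linear layers, the sizes `D_IN` and `D_EMB` are arbitrary, and there is no training loop and no safeguard against collapsed representations, which a real system would need.

```python
import math
import random

random.seed(0)

# Hypothetical sizes: raw patch values vs. compressed embedding values.
D_IN, D_EMB = 8, 3


def random_matrix(rows, cols):
    """Small random weights standing in for a trained network."""
    return [[random.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(x, W):
    """Multiply row vector x (length rows) by matrix W (rows x cols)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]


def encode(x, W):
    """Toy encoder: one linear layer followed by tanh."""
    return [math.tanh(v) for v in matvec(x, W)]


W_context = random_matrix(D_IN, D_EMB)  # encodes the visible part
W_target = random_matrix(D_IN, D_EMB)   # encodes the hidden part
W_pred = random_matrix(D_EMB, D_EMB)    # predicts hidden embedding from context


def embedding_loss(visible, hidden):
    """Mean squared error between predicted and actual embedding of the hidden part."""
    s_pred = matvec(encode(visible, W_context), W_pred)
    s_target = encode(hidden, W_target)
    return sum((p - t) ** 2 for p, t in zip(s_pred, s_target)) / D_EMB


visible = [random.gauss(0.0, 1.0) for _ in range(D_IN)]
hidden = [random.gauss(0.0, 1.0) for _ in range(D_IN)]

loss = embedding_loss(visible, hidden)
print(f"loss compares {D_EMB} embedding values, not {D_IN} raw values: {loss:.4f}")
```

The point of the sketch is where the error is measured. The loss never touches the raw values of the hidden patch, only its compressed representation, so the system is not penalized for failing to reproduce unpredictable low-level detail.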

For a non-technical audience, think of it this way: An LLM memorizes what the world *says*. A World Model system, utilizing concepts like JEPA, learns how the world *behaves*. This is the difference between reading a cookbook and actually being able to cook well enough to substitute ingredients when one is missing.

This focus on predictive coding and world models is crucial for the next wave of AI, specifically for systems that need to interact with the physical world—robotics, autonomous agents, and embodied AI.

Contextual Note: LeCun’s foundational work in this area, often discussed in comparison to current generative models, provides the technical scaffolding for this investment thesis.

The Geopolitical and Ecosystem Context: Europe's AI Moment

The geographic location of this massive funding round cannot be overlooked. The fact that this is the largest seed round in European history highlights a strategic maturation of the continent’s deep-tech ecosystem. For years, leading AI research and funding were concentrated in the US (Silicon Valley) and China.

This funding surge suggests a potent combination:

- Talent Magnetism: The ability to lure one of the field's most recognized figures (LeCun) away from a tech titan like Meta with the promise of radical freedom.

- Investor Confidence in Deep Tech: A belief among European investors that they can back foundational, high-risk, high-reward research that doesn't necessarily need immediate commercial application pathways, contrasting sometimes with the rapid monetization focus seen elsewhere.

- Regulatory Clarity (or Difference): While the EU AI Act is stringent, it also establishes a clear framework. Some investors might see this structure as creating a stable environment for building long-term, trustworthy AI foundations, potentially contrasting with the often ambiguous regulatory landscape in the US.

This success acts as a catalyst. If AMI Labs proves the thesis that non-LLM architectures can unlock the next tier of intelligence, expect a significant capital inflow into European research labs focusing on embodied AI, robotics, and novel computational paradigms.

Relevant Trend Corroboration: News tracking the growth of European AI funding suggests increasing sophistication beyond application layers and into foundational research.

The Talent Migration: Experts Seeking Unconstrained Innovation

LeCun’s move is emblematic of a growing trend: top-tier foundational researchers leaving the security and scale of Big Tech labs to pursue novel, potentially riskier research paths via well-funded startups. Big tech companies, while brilliant, are often constrained by existing product lines and immediate shareholder demands. If your primary product is an LLM chatbot, betting a massive portion of your R&D budget on something explicitly *not* an LLM is difficult.

When figures like LeCun step out, they signal that the fundamental problems they wish to solve—creating agents that truly understand the world—require a clean slate, free from the legacy constraints of optimizing the existing Transformer stack.

This dynamic suggests that the most disruptive breakthroughs in the next five years might originate from these nimble, heavily funded independent labs rather than the integrated product pipelines of the FAANG giants. These founders are not seeking incremental improvements; they are seeking architectural revolutions.

Relevant Trend Corroboration: Analysts tracking the movement of senior AI talent in 2024 note an increase in foundational researchers exiting large firms to found ventures focused on next-generation paradigms.

Implications for Business and Society: What Comes After Text?

The pivot beyond LLMs is not merely academic; it has profound practical implications for nearly every industry currently integrating generative AI.

1. The Rise of Embodied and Agentic AI

If AMI Labs succeeds in creating AI with robust world models, the focus shifts dramatically from chatbots to doers. Current LLMs can write great instructions for a robot, but they struggle to adapt in real time when the physical world doesn't perfectly match expectations. Future AI built on LeCun's vision would possess the intrinsic understanding needed for complex, unpredictable physical tasks:

- Advanced Robotics: Robots capable of complex tasks in unstructured environments (warehouses, homes, disaster zones) without constant human retraining.

- Truly Autonomous Agents: Software agents that can define long-term goals, plan sub-tasks, execute them, learn from failure within the simulation of a world model, and adapt strategy dynamically.
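The agent loop described above, planning by trying actions inside a world model before acting, can be sketched with a toy example. Everything here is hypothetical: the "world" is a position on a line, `world_model` is hard-coded rather than learned, and the planner uses exhaustive search, which only works for very short horizons.

```python
from itertools import product

# Hypothetical action set: step left, stay, step right.
ACTIONS = (-1, 0, 1)


def world_model(position, action):
    """Predict the next state. A real agent would learn this; here it is hard-coded."""
    return position + action


def rollout(position, plan):
    """Simulate a whole plan inside the model without touching the real world."""
    for action in plan:
        position = world_model(position, action)
    return position


def plan_actions(start, goal, horizon):
    """Imagine every action sequence of length `horizon`; keep the one ending nearest the goal."""
    return min(
        product(ACTIONS, repeat=horizon),
        key=lambda plan: abs(goal - rollout(start, plan)),
    )


best = plan_actions(start=0, goal=3, horizon=4)
print("chosen plan:", best)
print("predicted final position:", rollout(0, best))
```

All failures happen in simulation: plans that miss the goal are discarded before any action is taken. When the goal is out of reach within the horizon, the same search returns the plan that gets closest, which is a crude form of adapting strategy to constraints.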

2. Efficiency and Sustainability

Current LLM training is resource-intensive, demanding massive GPU clusters and colossal energy consumption. Architectures like JEPA, which aim to learn abstract concepts efficiently through self-supervision, promise a future where high-level intelligence requires significantly less computational overhead. If that promise holds, it would make advanced AI accessible to smaller organizations and lower the environmental footprint of future AGI development.

3. New Investment Thresholds

For the business audience, the $1 billion seed round sets a new benchmark. Investors are now signaling readiness to fund *foundational research* in AI that is inherently riskier and longer-term than iterating on existing transformer APIs. Businesses looking to invest in "the next big thing" in AI should look past simple prompt engineering services and start assessing companies building genuine world models or causal reasoning engines.

Actionable Insights for Navigating the Next Wave

The transition from the LLM era to the World Model era will be messy, involving both incremental improvements to transformers and radical architectural overhauls. Here is how organizations and researchers can prepare:

- De-risk LLM Dependence: Do not build critical business infrastructure solely on proprietary LLM APIs. Begin exploring or piloting systems that incorporate external reasoning modules or simulation environments. Assume that the next breakthrough might render current reasoning shortcuts obsolete.

- Prioritize "Understanding" Over "Fluency": When evaluating emerging AI tools, ask whether the system is merely fluent (like an LLM) or if it demonstrates underlying comprehension (like a World Model). Tools that can simulate outcomes or adapt to novel sensory input are closer to the next generation.

- Engage with Alternative Research Communities: Researchers must actively study papers related to JEPA, predictive coding, and world models. The talent pool knowledgeable in these areas is smaller now but will be critical tomorrow.

- Monitor the Embodiment Sector: Keep a close watch on startups bridging AI with advanced robotics. These fields are the natural proving grounds for World Models, as failure in the physical world offers immediate, undeniable feedback about flawed reasoning.

Yann LeCun’s $1 billion bet is more than just a financial transaction; it is a powerful declaration that the AI race is not over, and the current champion (the Transformer) is being challenged by a more sophisticated contender. The coming years will be defined by the race to build machines that don't just talk about the world, but truly understand and interact with it.

References for Corroboration:

- The initial funding announcement provides the core context: Investors bet $1 billion on Yann LeCun's vision for AI beyond LLMs (https://the-decoder.com/investors-bet-1-billion-on-yann-lecuns-vision-for-ai-beyond-llms/)
