The $1 Billion Pivot: Why Yann LeCun's Quest Beyond LLMs Signals AI's Next Frontier

The world of Artificial Intelligence has been captivated by the transformative power of Large Language Models (LLMs) for the last two years. From ChatGPT to sophisticated enterprise tools, these text-generating engines have dominated headlines, research grants, and venture capital flow. However, a seismic shift is occurring beneath the surface, underscored by a staggering financial declaration: the mobilization of over $1 billion in seed funding for Yann LeCun’s new venture, Advanced Machine Intelligence Labs (AMI Labs).

This isn't just a story about a large check; it’s a powerful market signal. When a Turing Award winner and foundational architect of modern deep learning dedicates his focus to building AI *beyond* the LLM paradigm, and secures such massive capital to do it, the entire industry must pay attention. The move suggests that its backers see current generative AI approaching its limits, and that the next great leap requires a fundamental architectural redesign.

The $1 Billion Bet: Contextualizing the AMI Labs Announcement

The sheer magnitude of the seed round for AMI Labs, reportedly Europe’s largest ever, is difficult to overstate. It demonstrates an immediate, high-conviction belief from investors that Artificial General Intelligence (AGI) will not be achieved by merely adding more data or parameters to the existing transformer architecture.

This funding serves as a robust **corroboration** of a budding sentiment among leading AI thinkers. It tells us that major financial players are willing to back foundational, high-risk research if the proponent is credible. This is not a bet on an application layer built *on top* of existing models; it is a bet on a brand-new operating system for intelligence.

For the European technology scene, this investment is a massive vote of confidence, suggesting that true AI leadership might not remain exclusively concentrated in Silicon Valley. It validates a strategy focused on deep, scientific breakthroughs rather than incremental feature rollouts.

The Cracks in the LLM Foundation: Why We Must Look Beyond Text

To appreciate LeCun’s pivot, we must understand why LLMs, despite their impressive fluency, are hitting a wall. Current LLMs excel at pattern matching, language generation, and summarization because they are trained to predict the next most likely token (word or part of a word) based on vast amounts of existing human text. But this method has inherent weaknesses that frustrate technologists and business leaders alike:

- Lack of Grounding and Common Sense: LLMs do not truly *understand* the world they talk about. They lack a predictive model of physics, object permanence, or causality. This leads directly to "hallucinations"—confidently stated falsehoods.

- Inefficient Learning: Humans learn concepts rapidly, often from very few examples (few-shot or even one-shot learning). LLMs require trillions of training tokens and staggering computational power to achieve proficiency, making them environmentally costly and slow to adapt to new tasks.

- Poor Planning and Reasoning: While they can mimic reasoning steps, LLMs struggle with complex, multi-step planning where outcomes depend on sequences of actions in a dynamic environment.

These limitations become glaring when AI moves out of the chat window and into the physical world or critical decision-making loops. If an AI is controlling a robot, diagnosing a complex system failure, or managing a supply chain, statistical correlation (what LLMs do best) is insufficient; the AI needs **causal understanding**—knowing *why* things happen.
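To make the next-token objective described above concrete, here is a deliberately tiny sketch. It is not how a real LLM is built (those use transformer networks over subword tokens); it is a word-level bigram counter, and the names `train_bigram` and `predict_next` as well as the toy corpus are made up for illustration. It shows the core idea: the model's only knowledge is which word tends to follow which.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Toy corpus (an assumption for this sketch, not real training data)
corpus = "the block falls off the table . the block falls down . the cup falls off the shelf ."
model = train_bigram(corpus)

print(predict_next(model, "falls"))    # most common word after "falls" in the corpus
print(predict_next(model, "gravity"))  # never seen in the corpus, so no prediction
```

The predictor can continue "falls" plausibly because of co-occurrence counts alone. Nothing in it represents a block, a table, or gravity, which is the gap between correlation and causal understanding that the section describes.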

The Destination: World Models and Embodied Intelligence

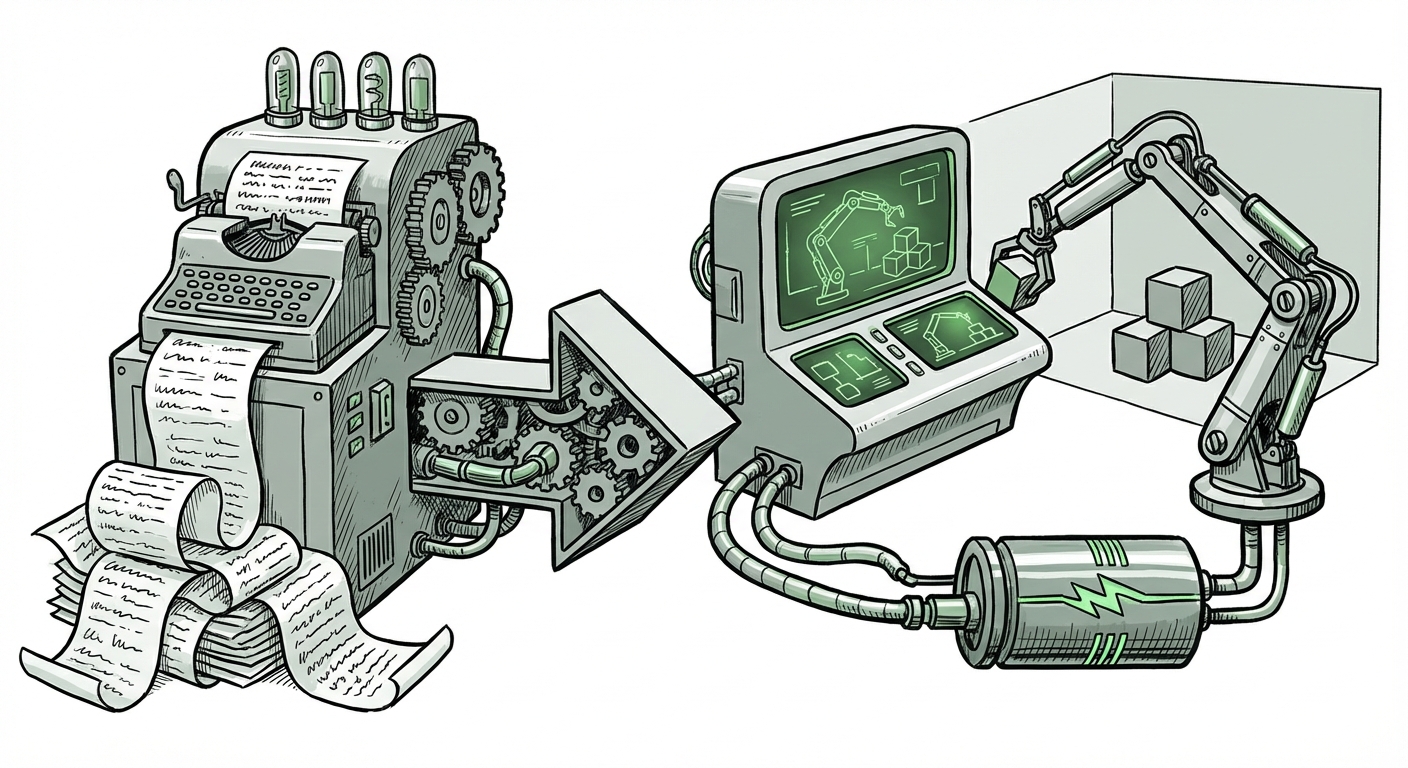

LeCun’s stated alternative centers on **World Models** and advanced **Self-Supervised Learning**. This is the technical core of the $1 billion bet and represents the true "Beyond LLM" vision.

What is a World Model?

Imagine a child playing with blocks. They don't need to read millions of books on gravity to learn that if they push a block off a table, it will fall. They build an internal simulation, a "world model," that predicts the consequences of their actions. Yann LeCun believes AI needs this same capability.

A World Model is an internal representation of the environment that allows an AI agent to:

- Predict: Look ahead and simulate potential future states given an action.

- Plan: Determine the best sequence of actions to achieve a distant goal based on those simulations.

- Understand Hierarchy: Grasp that complex tasks are made up of smaller, predictable steps.

This framework moves AI away from being purely reactive (like a chatbot responding to a prompt) toward being **proactive** and goal-oriented. This architecture is inherently better suited for Embodied AI—systems that interact with the physical world, such as advanced robotics, autonomous vehicles, and sophisticated industrial automation.
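The Predict and Plan capabilities listed above can be sketched in a few lines. This is a minimal illustration under strong assumptions, not AMI Labs' design: the "world" is a hypothetical one-dimensional track of cells 0 to 9, the model `predict` is hand-written rather than learned, and the planner simply tries every action sequence in imagination. Hierarchical planning is not covered.

```python
from itertools import product

ACTIONS = (-1, 0, 1)  # step left, stay, step right

def predict(state, action):
    """Hypothetical world model: next position on a track of cells 0..9."""
    return max(0, min(9, state + action))

def rollout(state, actions):
    """Simulate a sequence of actions inside the model, without acting for real."""
    trajectory = [state]
    for action in actions:
        state = predict(state, action)
        trajectory.append(state)
    return trajectory

def plan(state, goal, horizon=4):
    """Imagine every action sequence of length `horizon` and keep the best one."""
    def cost(actions):
        final = rollout(state, actions)[-1]
        effort = sum(abs(a) for a in actions)
        # Primary objective: end close to the goal. Tie-breaker: move less.
        return (abs(goal - final), effort)
    return min(product(ACTIONS, repeat=horizon), key=cost)

best = plan(state=2, goal=5)
print(best, rollout(2, best))
```

The agent evaluates consequences before committing to any action, which is the difference between a reactive system and a goal-oriented one. Exhaustive search only works for toy problems like this; a practical system would need a learned model and a far more efficient planner.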

The Power of Self-Supervised Learning (SSL)

How do these models learn without being fed trillions of labeled examples? Through SSL. Instead of requiring humans to label everything ("This is a cat," "This is a stop sign"), SSL lets the model learn structure and relationships directly from raw, unlabeled data by predicting parts of the data from other parts, much like we learn by observing the world. LLMs already use a form of SSL when they predict the next token; the shift here is applying the same idea to richer sensory data such as video. This significantly reduces the dependence on expensive, slow, human-annotated datasets, unlocking massive scalability in new domains.
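A small sketch may help show where the training signal comes from when nobody labels anything. The example below is an illustration under stated assumptions: the observations are synthetic (a made-up temperature cooling toward 20 degrees), the helper names `make_pairs` and `fit_linear` are hypothetical, and the "model" is a simple line fitted by least squares rather than a neural network. The point is that each observation's target is just the next observation in the raw sequence.

```python
def make_pairs(sequence):
    """Turn a raw sequence into (input, target) pairs; the target is the next observation."""
    return list(zip(sequence, sequence[1:]))

def fit_linear(pairs):
    """Ordinary least squares fit of target = a * input + b."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b

# Synthetic, unlabeled observations (assumed for this sketch)
observations = [100.0]
for _ in range(20):
    observations.append(20.0 + 0.9 * (observations[-1] - 20.0))

a, b = fit_linear(make_pairs(observations))
print(round(a, 3), round(b, 3))  # recovered dynamics: next = a * current + b
```

No human ever annotated the data, yet the fitted coefficients recover the rule that generated it. Scaled up to images or video and to much richer models, this is the same principle: the structure of the data supplies its own supervision.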

Shifting Investor Sentiment: From Scaling to Robustness

The massive investment into AMI Labs is not an anomaly; it reflects a maturing investment landscape. For a time, the narrative was "scale equals intelligence." Investors poured billions into companies focused solely on building the next 10-trillion-parameter model.

However, there is growing recognition of diminishing returns from pure scale, along with rising concern over the enormous operational costs of training and inference. Investors are beginning to ask:

- Can this model reliably perform in the real world?

- How much compute does it cost to deploy this reliably?

- Does it possess true reasoning capabilities, or just sophisticated parroting?

This subtle but powerful **shift in investment focus** is pushing capital toward architectural innovation. Investors are seeking "general intelligence" solutions that are more efficient, more reliable, and capable of true agency. The success of LeCun’s funding round suggests that the market views novel architectures like World Models not as esoteric academic pursuits, but as a direct path toward robust, beneficial AGI.

Practical Implications for Business and Society

This divergence—the ongoing refinement of LLMs on one hand, and the push toward World Models on the other—will create two distinct, powerful waves of AI impact over the next decade.

For Software and Information Industries (LLM Refinement)

LLMs will continue to dominate tasks centered on human communication, content creation, legal analysis, and customer service. Businesses should continue integrating these tools for efficiency gains in knowledge work. However, they must implement rigorous validation layers because the LLM's confidence does not equal truth.

For Physical and High-Stakes Industries (World Models)

The true disruption, fueled by systems like those AMI Labs aims to build, will occur where intelligence meets the physical world:

- Manufacturing and Logistics: Robots that can fluidly adapt to unexpected changes on the factory floor, not just follow pre-programmed paths.

- Scientific Discovery: AI agents that can design novel molecular structures or simulate complex quantum systems without constant human guidance, by 'imagining' the results first.

- Advanced Robotics: Truly autonomous systems capable of operating safely and effectively in dynamic, unstructured environments (homes, construction sites, disaster zones).

For businesses, the actionable insight is diversification. Relying solely on language-based APIs may soon leave you trailing competitors whose systems can *act* intelligently in the real world.

Actionable Insights: Navigating the Next AI Era

As an analyst, I see three immediate actions for stakeholders:

- Re-evaluate AI Talent Strategy: While data scientists fluent in transformer models are vital, companies must now actively seek researchers and engineers skilled in reinforcement learning, self-supervised learning, and probabilistic programming—the core skills required for building World Models.

- Pilot Embodied AI Projects: Start small proofs-of-concept that require sequential action and planning rather than just summarization. Even if the current tech is nascent, understanding the gap between LLM planning and model-based planning is crucial for future readiness.

- Monitor Compute Allocation: World Models, while potentially more data-efficient than LLMs, will still require specialized, high-performance compute for their predictive simulations. Strategize on future hardware needs that support complex internal simulation environments.

Conclusion: The Dawn of Predictive Intelligence

Yann LeCun’s $1 billion venture is more than a funding event; it is a declaration of technological intent. It signals the end of the "language-only" honeymoon and the beginning of a more rigorous, scientifically challenging, but ultimately more powerful era of AI. The goal is to move from systems that *describe* the world brilliantly to systems that truly *understand and predict* it robustly.

This pivot promises AI that is not just clever, but wise—systems capable of reasoning, planning, and safely interacting with the complexities of reality. The massive capital supporting this vision ensures that the race is now on to build the intelligence architecture that will power the next century of technological advancement.